
The latest AI large language model (LLM) releases, such as Claude 3.7 from Anthropic and Grok 3 from xAI, often perform at PhD levels, at least according to certain benchmarks. This accomplishment marks the next step toward what former Google CEO Eric Schmidt envisions: a world where everyone has access to “a great polymath,” an AI capable of drawing on vast bodies of knowledge to solve complex problems across disciplines.

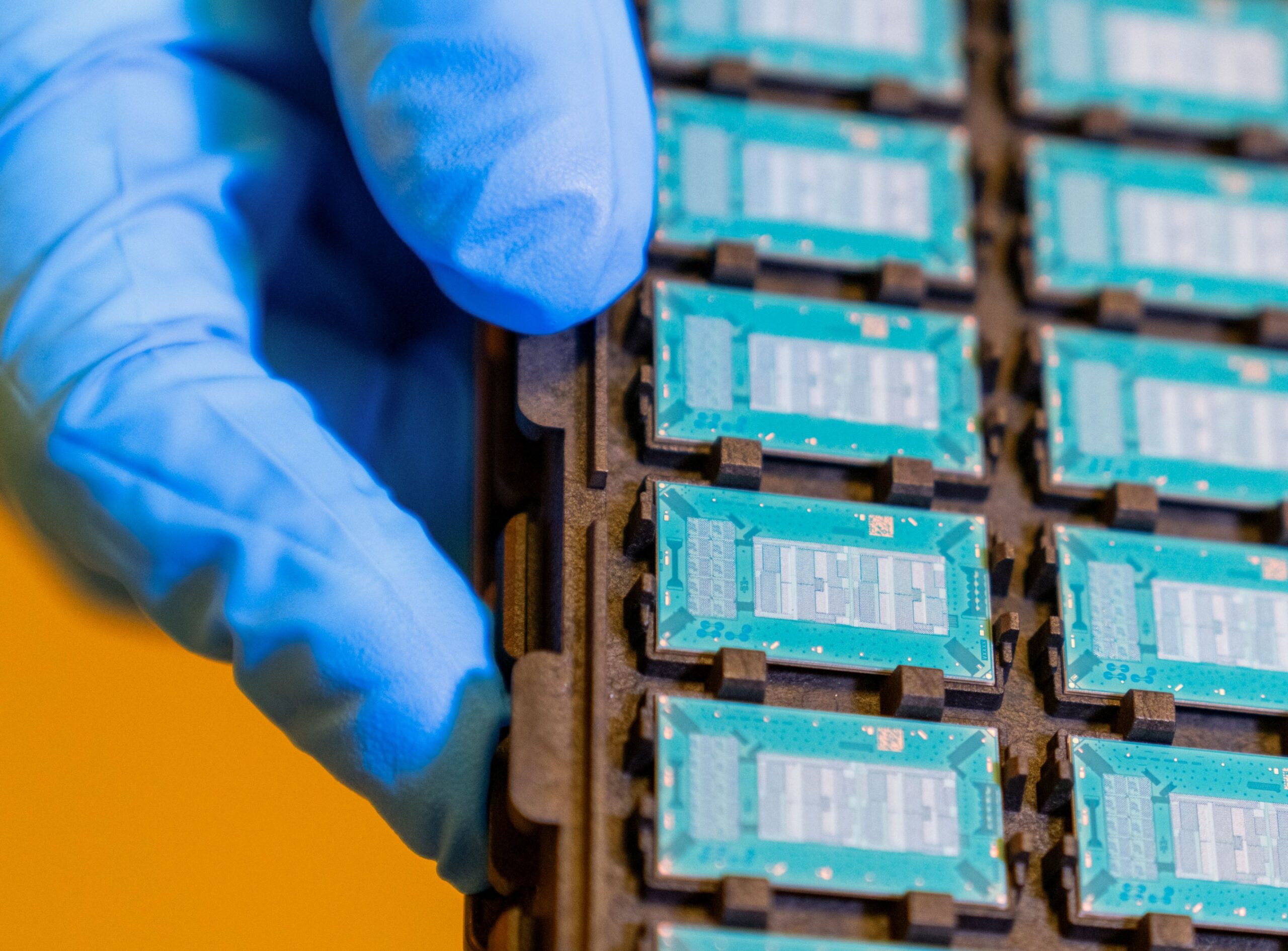

Wharton Business School Professor Ethan Mollick noted on his One Useful Thing blog that these latest models were trained using significantly more computing power than GPT-4 at its launch two years ago, with Grok 3 trained on up to 10 times as much compute. He added that this would make Grok 3 the first “gen 3” AI model, emphasizing that “this new generation of AIs is smarter, and the jump in capabilities is striking.”

For example, Claude 3.7 shows emergent capabilities, such as anticipating user needs and considering novel angles in problem-solving. According to Anthropic, it is the first hybrid reasoning model, combining a traditional LLM for fast responses with advanced reasoning capabilities for solving complex problems.

Mollick attributed these advances to two converging trends: the rapid expansion of compute power for training LLMs, and AI’s increasing ability to tackle complex problem-solving (often described as reasoning or thinking). He concluded that these two trends are “supercharging AI abilities.”

What can we do with this supercharged AI?

In a significant step, OpenAI launched its “deep research” AI agent at the beginning of February. In his review on Platformer, Casey Newton commented that deep research appeared “impressively competent.” Newton noted that deep research and similar tools could significantly accelerate research, analysis and other forms of knowledge work, though their reliability in complex domains is still an open question.

Based on a variant of the still-unreleased o3 reasoning model, deep research can reason over extended periods. It uses chain-of-thought (CoT) reasoning, breaking complex tasks into multiple logical steps and refining its approach much as a human researcher might. It can also search the web, giving it access to more up-to-date information than what is in the model’s training data.

Timothy Lee wrote in Understanding AI about several tests in which experts evaluated deep research, noting that “its performance demonstrates the impressive capabilities of the underlying o3 model.” One test asked for instructions on building a hydrogen electrolysis plant. Commenting on the quality of the output, a mechanical engineer “estimated that it would take an experienced professional a week to create something as good as the 4,000-word report OpenAI generated in four minutes.”

But wait, there’s more…

Google DeepMind also recently released “AI co-scientist,” a multi-agent AI system built on its Gemini 2.0 LLM and designed to help scientists generate novel hypotheses and research plans. Researchers at Imperial College London have already demonstrated its value. According to Professor José R. Penadés, his team spent years unraveling why certain superbugs resist antibiotics; the AI replicated their findings in just 48 hours. While the AI dramatically accelerated hypothesis generation, human scientists were still needed to confirm the findings. Nevertheless, Penadés said the new AI application “has the potential to supercharge science.”

What would it mean to supercharge science?

Last October, Anthropic CEO Dario Amodei wrote in his “Machines of Loving Grace” blog post that he expected “powerful AI” (his term for what most call artificial general intelligence, or AGI) to deliver “the next 50 to 100 years of biological [research] progress in 5 to 10 years.” Four months ago, the idea of compressing up to a century of scientific progress into a single decade seemed extremely optimistic. With recent advances such as Anthropic’s Claude 3.7, OpenAI’s deep research and Google’s AI co-scientist, what Amodei called a near-term “radical transformation” is starting to look much more plausible.

However, while AI may fast-track scientific discovery, biology, at least, is still bound by real-world constraints — experimental validation, regulatory approval and clinical trials. The question is no longer whether AI will transform science (as it certainly will), but rather how quickly its full impact will be realized.

In a February 9 blog post, OpenAI CEO Sam Altman claimed that “systems that start to point to AGI are coming into view.” He described AGI as “a system that can tackle increasingly complex problems, at human level, in many fields.”

Altman believes achieving this milestone could unlock a near-utopian future in which the “economic growth in front of us looks astonishing, and we can now imagine a world where we cure all diseases, have much more time to enjoy with our families and can fully realize our creative potential.”

A dose of humility

These AI advances are hugely significant and portend a very different future within a short span of time. Yet AI’s meteoric rise has not been without stumbles. Consider the recent downfall of the Humane AI Pin, a device hyped as a smartphone replacement after a buzzworthy TED Talk. Barely a year later, the company collapsed, and its remnants were sold off for a fraction of its once-lofty valuation.

Real-world AI applications often face significant obstacles, from a lack of relevant expertise to infrastructure limitations. That has certainly been the experience of Sensei Ag, a startup backed by one of the world’s wealthiest investors. The company set out to apply AI to agriculture by breeding improved crop varieties and using robots for harvesting. According to the Wall Street Journal, it has faced many setbacks, from technical challenges to unexpected logistical difficulties, highlighting the gap between AI’s potential and its practical implementation.

What comes next?

As we look to the near future, science is on the cusp of a new golden age of discovery, with AI becoming an increasingly capable partner in research. Deep-learning algorithms working in tandem with human curiosity could unravel complex problems at record speed as AI systems sift vast troves of data, spot patterns invisible to humans and suggest cross-disciplinary hypotheses.

Already, scientists are using AI to compress research timelines — predicting protein structures, scanning literature and reducing years of work to months or even days — unlocking opportunities across fields from climate science to medicine.

Yet, as the potential for radical transformation becomes clearer, so too do the looming risks of disruption and instability. Altman himself acknowledged in his blog that “the balance of power between capital and labor could easily get messed up,” a subtle but significant warning that AI’s economic impact could be destabilizing.

This concern is already materializing in Hong Kong, which recently cut 10,000 civil service jobs while simultaneously ramping up AI investment. If such trends continue and spread, we could see widespread workforce upheaval, heightened social unrest and intense pressure on institutions and governments worldwide.

Adapting to an AI-powered world

AI’s growing capabilities in scientific discovery, reasoning and decision-making mark a profound shift that presents both extraordinary promise and formidable challenges. While the path forward may be marked by economic disruptions and institutional strains, history has shown that societies can adapt to technological revolutions, albeit not always easily or without consequence.

To navigate this transformation successfully, societies must invest in governance, education and workforce adaptation to ensure that AI’s benefits are equitably distributed. Even as AI regulation faces political resistance, scientists, policymakers and business leaders must collaborate to build ethical frameworks, enforce transparency standards and craft policies that mitigate risks while amplifying AI’s transformative impact. If we rise to this challenge with foresight and responsibility, people and AI can tackle the world’s greatest challenges, ushering in a new age with breakthroughs that once seemed impossible.