“With volatility now the norm, security and risk leaders need practical guidance on managing existing spending and new budgetary necessities,” states Forrester’s 2026 Budget Planning Guide, revealing a fundamental shift in how organizations allocate cybersecurity resources.

Software now commands 40% of cybersecurity spending, exceeding hardware at 15.8%, outsourcing at 15% and surpassing personnel costs at 29% by 11 percentage points while organizations defend against gen AI attacks executing in milliseconds versus a Mean Time to Identify (MTTI) of 181 days according to IBM’s latest Cost of a Data Breach Report.

Three converging threats are flipping cybersecurity on its head: what once protected organizations is now working against them. Generative AI (gen AI) is enabling attackers to craft 10,000 personalized phishing emails per minute using scraped LinkedIn profiles and corporate communications. NIST’s 2030 quantum deadline threatens retroactive decryption of $425 billion in currently protected data. Deepfake fraud that surged 3,000% in 2024 now bypasses biometric authentication in 97% of attempts, forcing security leaders to reimagine defensive architectures fundamentally.

Caption: Software now commands 40% of cybersecurity budgets in 2025, representing an 11 percentage point premium over personnel costs at 29%, as organizations layer security solutions to combat gen AI threats executing in milliseconds. Source: Forrester’s 2026 Budget Planning Guide

AI Scaling Hits Its Limits

Power caps, rising token costs, and inference delays are reshaping enterprise AI. Join our exclusive salon to discover how top teams are:

- Turning energy into a strategic advantage

- Architecting efficient inference for real throughput gains

- Unlocking competitive ROI with sustainable AI systems

Secure your spot to stay ahead: https://bit.ly/4mwGngO

Enterprise security teams managing 75 or more tools lose $18 million annually to integration and overhead alone. The average detection time remains 277 days, while attacks execute within milliseconds.

Gartner forecasts that interactive application security testing (IAST) tools will lose 80% of market share by 2026. Security Service Edge (SSE) platforms that promised streamlined convergence now add to the complexity they intended to solve. Meanwhile, standalone risk-rating products flood security operations centers with alerts that lack actionable context, leading analysts to spend 67% of their time on false positives, according to IDC’s Security Operations Study.

The operational math doesn’t work. Analysts require 90 seconds to evaluate each alert, but they receive 11,000 alerts daily. Each additional security tool deployed reduces visibility by 12% and increases attacker dwell time by 23 days, as reported in Mandiant’s 2024 M-Trends Report. Complexity itself has become the enterprise’s greatest cybersecurity vulnerability.

Platform vendors have been selling consolidation for years, capitalizing on the chaos and complexity that app and tool sprawl create. As George Kurtz, CEO of CrowdStrike, explained in a recent VentureBeat interview about competing with a platform in today’s mercurially changing market conditions: “The difference between a platform and platformization is execution. You need to deliver immediate value while building toward a unified vision that eliminates complexity.”

CrowdStrike’s Charlotte AI automates alert triage and saves SOC teams over 40 hours every week by classifying millions of detections at 98% accuracy; that equals the output of five seasoned analysts and is fueled by Falcon Complete’s expert-labeled incident corpus.

“We couldn’t have done this without our Falcon Complete team,” Elia Zaitsev, CTO at CrowdStrike, told VentureBeat in a recent interview. “They do triage as part of their workflow, manually handling millions of detections. That high-quality, human-annotated dataset is what made over 98% accuracy possible. We recognized that adversaries are increasingly leveraging AI to accelerate attacks. With Charlotte AI, we’re giving defenders an equal footing, amplifying their efficiency and ensuring they can keep pace with attackers in real time.”

CrowdStrike, Microsoft’s Defender XDR with MDVM/Intune, Palo Alto Networks, Netskope, Tanium and Mondoo now bundle XDR, SIEM and auto-remediation, transforming SOCs from delayed forensics sessions to the ability to perform real-time threat neutralization.

Security budgets surge 10% as gen AI attacks outpace human defense

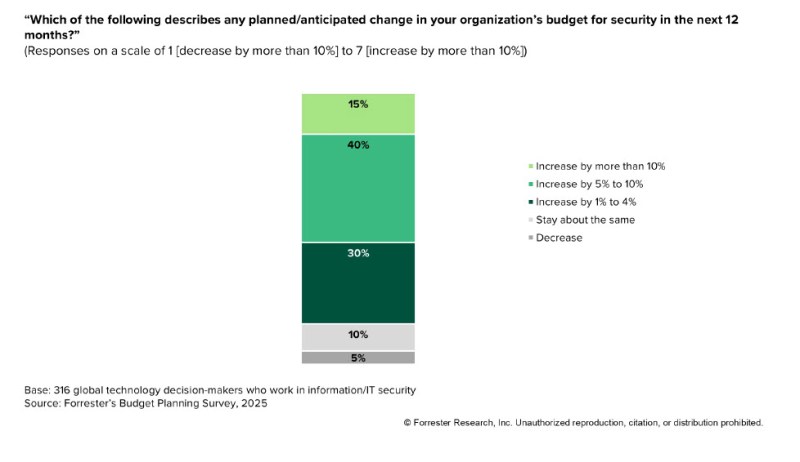

Forrester’s guide finds 55% of global security technology decision-makers expect significant budget increases in the next 12 months. 15% anticipate jumps exceeding 10% while 40% expect increases between 5% and 10%. This spending surge reflects an asymmetric battlefield where attackers deploy gen AI to simultaneously target thousands of employees with personalized campaigns crafted from real-time scraped data.

Attackers are making the most of the advantages they’re getting from adversarial AI, with speed, stealth and highly personalized, target attacks becoming the most lethal. “For years, attackers have been utilizing AI to their advantage,” Mike Riemer, Field CISO at Ivanti, told VentureBeat. “However, 2025 will mark a turning point as defenders begin to harness the full potential of AI for cybersecurity purposes.”

Caption: 55% of security leaders expect budget increases above 5% in 2026, with Asia Pacific organizations leading at 22% expecting increases above 10% versus just 9% in North America. Source: Forrester’s 2026 Budget Planning Guide

Regional spending disparities reveal threat landscape variations and how CISOs are responding to them. Asia Pacific organizations lead with 22% expecting budget increases above 10% versus just 9% in North America. Cloud security, on-premises technology and security awareness training top investment priorities globally.

Software dominates budgets as runtime defenses become critical in 2026

VentureBeat continues to hear from security leaders about how crucial protecting the inference layer of AI model development is. Many consider it the new frontline of the future of cybersecurity. Inference layers are vulnerable to prompt injection, data exfiltration, or even direct model manipulation. These are all threats that demand millisecond-scale responses, not delayed forensic investigations.

Forrester’s latest CISO spending guide underscores a profound shift in cybersecurity spending priorities, with cloud security leading all spending increases at 12%, closely followed by investments in on-premises security technology at 11%, and security awareness initiatives at 10%. These priorities reflect the urgency CISOs feel to strengthen defenses precisely at the critical moment of AI model inference.

“At Reputation, security is baked into our core architecture and enforced rigorously at runtime,” Carter Rees, Vice President of Artificial Intelligence at Reputation, recently told VentureBeat. “The inference layer, the exact moment an AI model interacts with people, data, or tools, is where we apply our most stringent controls. Every interaction includes authenticated tenant and role contexts, verified in real-time by an AI security gateway.”

Reputation’s multi-tiered approach has become a de facto gold standard, blending proactive and reactive defenses. “Real-time controls immediately take over,” Rees explained. “Our prompt firewall blocks unauthorized or off-topic inputs instantly, restricting tool and data access strictly to user permissions. Behavioral detectors proactively flag anomalies the moment they occur.”

This rigorous runtime security approach extends equally into customer-facing systems. “For natural language interactions, our AI only pulls from explicitly customer-approved sources,” Rees noted. “Each generated response must transparently cite its sources. We verify citations match both tenant and context, routing for human review if they do not.”

Quantum computing’s accelerating risk

Quantum computing is quickly evolving from a theoretical concern into an immediate enterprise threat. Security leaders now face “harvest now, decrypt later” (HNDL) attacks, where adversaries store encrypted data for future quantum-enabled decryption. Widely used encryption methods like 2048-bit RSA risk compromise once quantum processors reach operational scale with tens of thousands of reliable qubits.

The National Institute of Standards and Technology (NIST) finalized three critical Post-Quantum Cryptography (PQC) standards in August 2024, mandating encryption algorithm retirement by 2030 and full prohibition by 2035. Global agencies, including Australia’s Signals Directorate, require PQC implementation by 2030.

Forrester urges organizations to prioritize PQC adoption for protecting sensitive data at rest, in transit, and in use. Security leaders should leverage cryptographic inventory and discovery tools, partnering with cryptoagility providers such as Entrust, IBM, Keyfactor, Palo Alto Networks, QuSecure, SandboxAQ, and Thales. Given quantum’s rapid progression, CISOs need to factor in how they’ll update encryption strategies to avoid obsolescence and vulnerability.

Explosion of identities is fueling an AI-driven credential crisis

Machine identities now outnumber human users by a staggering 45:1 ratio, fueling a credential crisis beyond human management. Forrester’s guide underscores scaling machine identity management as mission-critical to mitigating emerging threats. Gartner forecasts identity security spending to nearly double, reaching $47.1 billion by 2028.

Traditional endpoint approaches aren’t capable of slowing down a growing onslaught of adversarial AI attacks. Ivanti’s Daren Goeson recently told VentureBeat: “As these endpoints multiply, so does their vulnerability. Combining AI with Unified Endpoint Management (UEM) is increasingly essential.” Ivanti’s AI-driven Vulnerability Risk Rating (VRR) illustrates this benefit, enabling organizations to patch vulnerabilities 85% faster by identifying threats traditional scoring methods overlook, making AI-driven credential intelligence enterprise security at scale.

“Endpoint devices such as laptops, desktops, smartphones, and IoT devices are essential to modern business operations. However, as their numbers grow, so do the opportunities for attackers to exploit endpoints and their applications, ”Goeson explained. “Factors like an expanded attack surface, insufficient security resources, unpatched vulnerabilities, and outdated software contribute to this rising risk. By adopting a comprehensive approach that combines UEM solutions with AI-powered tools, businesses significantly reduce their cyber risk and the impact of attacks,” Goeson advised VentureBeat during a recent interview.

Forrester saves their immediate call to action in the guide for advising security leaders to begin divesting legacy security tools immediately, with a specific focus on interactive application security testing (IAST), standalone cybersecurity risk-rating (CRR) products, and fragmented Security Service Edge (SSE), SD-WAN, and Zero Trust Network Access (ZTNA) solutions.

Instead, Forrester advises, security leaders need to prioritize more integrated platforms that enhance visibility and streamline management. Unified Secure Access Service Edge (SASE) solutions from Palo Alto Networks and Netskope now provide essential consolidation. At the same time, integrated Third-Party Risk Management (TPRM) and continuous monitoring platforms from UpGuard, Panorays and RiskRecon replace standalone CRR tools the consulting firm advises.

Additionally, automated remediation powered by Microsoft’s MDVM with Intune, Tanium’s endpoint management, and DevOps-focused solutions like Mondoo has emerged as a critical capability for real-time threat neutralization.

CISOs must consolidate security at AI’s inference edge or risk losing control

Consolidating tools at inference’s edge is the future of cybersecurity, especially as AI threats intensify. “For CISOs, the playbook is crystal clear,” Rees concluded. “Consolidate controls decisively at the inference edge. Introduce robust behavioral anomaly detection. Strengthen Retrieval-Augmented Generation (RAG) systems with provenance checks and defined abstain paths. Above all, invest heavily in runtime defenses and support the specialized teams who operate them. Execute this playbook, and you achieve secure AI deployments at true scale.”

Daily insights on business use cases with VB Daily

If you want to impress your boss, VB Daily has you covered. We give you the inside scoop on what companies are doing with generative AI, from regulatory shifts to practical deployments, so you can share insights for maximum ROI.

Read our Privacy Policy

Thanks for subscribing. Check out more VB newsletters here.

An error occured.