Data center infrastructure management covers a lot of ground. Here are five of the most important things you need to know about DCIM.

“Purpose-built for enterprise networking, Extreme’s AI Service Agent streamlines network management, automates routine workflows, and empowers IT teams to deliver faster, smarter support,” Extreme stated. “By taking on time-consuming tasks like evidence collection, ticket creation and case management, the Service Agent slashes manual effort by up to 95%, accelerating resolution and

The company specified that the 13,000x performance advantage refers to the OTOC algorithm running on Willow compared to “the best classical algorithm on one of the world’s fastest supercomputers,” though it did not identify which specific supercomputer served as the benchmark. The announcement positions Google ahead in the intensifying quantum

These reports can be effective for enterprises in improving their resilience, Dai said. However, he did point out that AWS could better help customers minimize downtime and business risk by promoting multi-region architectures, active-active failover, and redundant DNS strategies. Further, he said that while reports will help in accelerating post-mortem

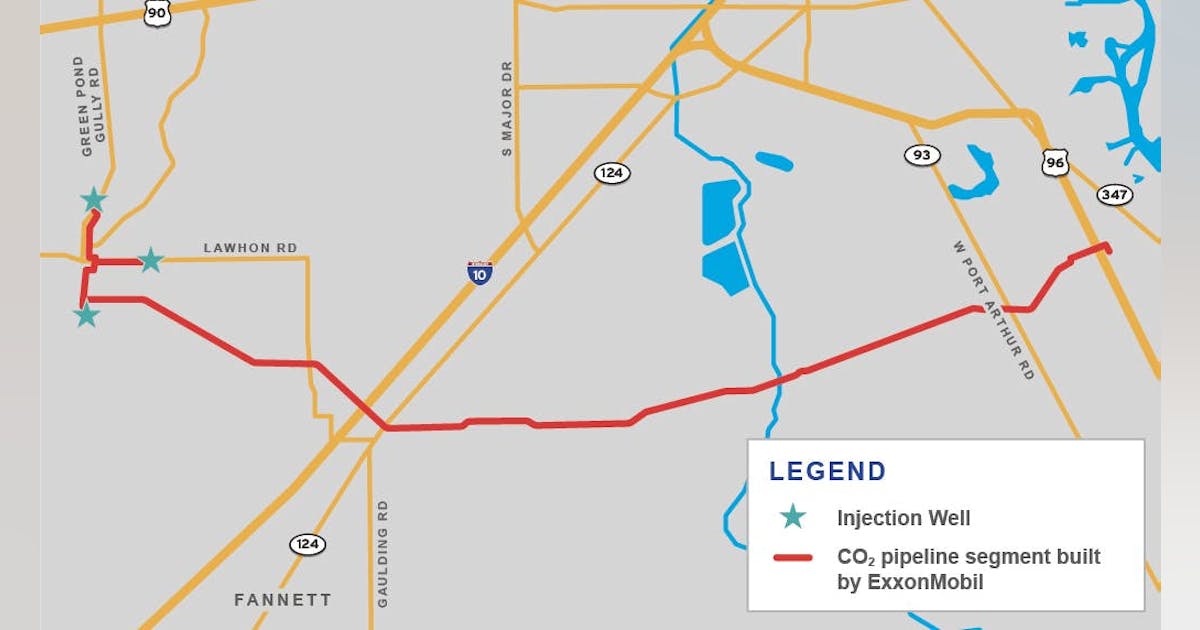

ExxonMobil Corp. received three final Underground Injection Control (UIC) Class VI permits from the US Environmental Protection Agency (EPA), advancing the operator’s Rose carbon storage project in Jefferson County, Tex. The Rose project scope includes storage of carbon dioxide (CO2) sited on over 13,000 acres of privately owned land containing

There are signs that the long-anticipated supply glut is now hitting the market, with the six-month and 12-month term spreads flipping into contango. That’s what analysts at BMI said in a BMI report sent to Rigzone by the Fitch Group on Friday, adding that, “sentiment is souring, with the ratio of long to short positions held by managed money in Brent crude falling to 1.8 as of mid-October, its lowest level since April, in the wake of the reciprocal tariff announcements”. “Absent major export disruptions in Russia, prices will remain under pressure over Q4 2025 and into early 2026, amid looser supply-demand fundamentals,” the analysts added. “However, a pause in the OPEC+ supply hikes – and scope for limited market intervention in response to extreme price weakness – should help to put a floor under Brent,” they continued. The BMI analysts noted in the report that, from the second half of 2026, they “expect stronger demand growth, slower supply growth, and healthier market sentiment will foster a recovery in prices”. “That said, this hinges on several key assumptions, including near-term restraint by OPEC+, a meaningful slowdown in the U.S. shale patch, robust import demand in Mainland China, and an improved global macroeconomic backdrop heading into 2027,” the analysts said. The BMI analysts stated in the report that oil prices have come under pressure this month, pointing out that Brent fell to a five-month low of $61 per barrel on October 20, “before partially rebounding to above $64 per barrel at the time of writing on October 23”. “The recent jump was triggered by the announcement that U.S. President Donald Trump was imposing Ukraine-related sanctions on Russia, including sanctions on Lukoil and Rosneft, two major exporters of Russian oil,” the analysts added. In the report, the BMI analysts highlighted that their

Petrofac Ltd said Thursday it was ending its “advanced stage” financial restructuring after TenneT dropped the British energy engineering company from multiple projects. “Having carefully assessed the impact of TenneT’s decision, the board has determined that the restructuring, which had last week reached an advanced stage, is no longer deliverable in its current form”, Petrofac said in a statement on its website. “The Group is in close and constant dialogue with its key creditors and other stakeholders as it actively pursues alternative options for the Group. “In the meantime, Petrofac remains focused on serving its clients and maintaining operational capability and delivery of services across its businesses. “Further information will be shared in due course”. European grid operator TenneT said Thursday it had terminated Petrofac’s scope under a March 2023 agreement also signed with Hitachi Energy Ltd for six direct current connection projects on the Dutch and German sides of the North Sea. The projects each have a capacity of two gigawatts. “Since 2024 Petrofac has been working on a financial restructuring of its business”, TenneT said in an online statement. ”In the past period TenneT has worked extensively with the Petrofac/Hitachi Energy consortium on mitigation measures. “Since Petrofac has not been able to meet its contractual obligations, TenneT has exercised its right to partial termination of the contract related to the Petrofac scope. “At the same time, a solution has been put in place involving a consortium of Hitachi Energy and a replacement contractor. Hitachi Energy and the replacement contractor will be responsible for the project portfolio of the Dutch offshore grid connections IJmuiden Ver Alpha, Nederwiek 1, Nederwiek 3, Doordewind 1, Doordewind 2 and the German offshore grid connection LanWin5”. On September 11 Petrofac said it had reached an agreement in principle with Saipem SpA and Samsung E&A Co Ltd on their claims from

Tamboran Resources Corp on Thursday confirmed an underwritten public offering of about 2.32 million common shares, under which Baker Hughes expressed interest to subscribe for up to $10 million. RBC Capital Markets LLC and Wells Fargo Securities LLC are underwriters in the placement. “The company expects to grant the underwriters a 30-day option to purchase up to an additional 348,666 shares of common stock from the company”, Sydney-based Tamboran said in a stock filing with the Australian Securities Exchange (ASX). Tamboran’s common stock trades on the New York Stock Exchange (NYSE). At ASX, it holds CHESS Depositary Interests (CDIs), each representing 1/200th of one common share, according to Tamboran. “The company intends to use the net proceeds of the offering to fund Tamboran’s development plan, working capital and other general corporate purposes”, the early-stage, Beetaloo basin-focused natural gas exploration and production company said. “Tamboran is in the process of conducting a bookbuild and price discovery process in relation to the public offering”, Tamboran added. In a prospectus filing with the United States Securities and Exchange Commission on Wednesday, Tamboran said, “Baker Hughes Energy Services LLC (the Interested Purchaser) has indicated an interest in purchasing up to an aggregate of approximately $10,000,000 of shares of common stock in this offering at the public offering price per share”. “Because this indication of interest is not a binding agreement or commitment to purchase, we can provide no assurances with respect to whether the Interested Purchaser will purchase shares in this offering or, if they elect to purchase shares, the number of shares they ultimately will acquire”, Tamboran said. “In addition, the underwriters may elect to sell fewer shares or not to sell any shares in this offering to the Interested Purchaser”. The prospectus added, “Concurrently with this offering, we are also offering to

German Chancellor Friedrich Merz said he’s optimistic that the US will exempt Rosneft PJSC’s German unit from Washington’s latest sanctions against Russia. “We will discuss this with the Americans,” Merz told reporters at a European Union summit in Brussels on Thursday. “I assume that a corresponding exemption for Rosneft will be granted.” The chancellor added that it was actually unclear whether the German business, Rosneft Deutschland, “even needs” an exemption, as the penalties say Rosneft must own at least 50 percent of the business. “It is 50 percent,” he said. There are concerns that Rosneft’s German unit may be cut off from key customers without a US sanctions exemption, Bloomberg reported earlier. Oil traders, banks and oil companies have already threatened to end relationships with the company. Merz welcomed the latest US sanctions against Russia on Thursday as an indication of President Donald Trump’s determination to pressure Russia into ending its war against Ukraine. The new US sanctions give customers until Nov. 21 to withdraw from “any entity” that’s more than 50 percent-owned by the penalized Russian firms. While Germany put Rosneft’s local assets under a temporary trusteeship after Russia invaded Ukraine in 2022, it stopped short of nationalizing the business. That means Berlin will likely have to negotiate a carve out from the latest restrictions.

Saipem SpA has reported EUR 221 million ($256.45 million) in net income and adjusted net income for the first three quarters, up 7.3 percent from the same nine-month period last year. Third-quarter (Q3) net result was EUR 81 million, up from EUR 63 million for Q2 but down from EUR 88 million for Q3 2024, the Italian energy engineering company said in a statement on its website. Saipem said it did not record any non-recurring item for January-September 2025. “The trend of improvement in operational, economic and financial performance that started in 2022 continues in the third quarter of 2025”, it said. January-September 2025 operating profit and adjusted operating profit totaled EUR 464 million, up 11.3 percent. “The positive change in adjusted operating profit of EUR 47 million, to which is added the effect of the improvement in the balance of tax operations of EUR 14 million, is partly offset by the worsening of the balance of financial operations of EUR 46 million”, Saipem, backed by state-controlled energy producer Eni SpA, said. Q3 2025 operating profit was EUR 159 million, up from Q2’s EUR 148 million but down from EUR 162 million for Q3 2024. January-September 2025 revenue totaled EUR 10.98 billion, up 8.4 percent against the first nine months of 2024. Q3 2025 revenue increased both quarter-on-quarter and year-on-year to EUR 3.77 billion. Backlog as of Q3 was EUR 30.56 billion: EUR 20.01 billion in Asset-Based Services, EUR 9.42 billion in Energy Carriers and EUR 1.13 billion in Offshore Drilling. “The Offshore Drilling backlog of EUR 1,129 million reflects the impact of the cancellation of the Perro Negro 12 jack-up rental contract, valued at EUR 35 million, following the notification of the termination for convenience by the client Saudi Aramco, in the second quarter of 2025”, Saipem said. Saipem expects to

WASHINGTON - U.S. Secretary of Energy Chris Wright directed the Federal Energy Regulatory Commission (FERC) today to initiate rulemaking procedures with a proposed rule to rapidly accelerate the interconnection of large loads, including data centers, positioning the United States to lead in AI innovation and in the revitalization of domestic manufacturing. Secretary Wright’s proposed rule allows customers to file joint, co-located load and generation interconnection requests. It will also significantly reduce study times and grid upgrade costs, while reducing the time needed for additional generation and power to come online. The proposed rule advances President Trump’s agenda to ensure all Americans and domestic industries have access to affordable, reliable, and secure electricity. Secretary Wright also directed FERC today to initiate rulemaking procedures with a proposed rule to remove unnecessary burdens for preliminary hydroelectric power permits. Secretary Wright’s proposed rule clarifies that third parties do not have veto rights over the issuance of preliminary hydroelectric power permits. President Trump and Secretary Wright have been clear: The United States is experiencing an unprecedented surge in electricity demand and the United States’ ability to remain at the forefront of technological innovation depends on an affordable, reliable, and secure supply of energy.

“Personally, I think that a brownfield is very creative way to deal with what I think is the biggest problem that we’ve got right now, which is time and speed to market,” he said. “On a brownfield, I can go into a building that’s already got power coming into the building. Sometimes they’ve already got chiller plants, like what we’ve got with the building I’m in right now.” Patmos certainly made the most of the liquid facilities in the old printing press building. The facility is built to handle anywhere from 50 to over 140 kilowatts per cabinet, a leap far beyond the 1–2 kW densities typical of legacy data centers. The chips used in the servers are Nvidia’s Grace Blackwell processors, which run extraordinarily hot. To manage this heat load, Patmos employs a multi-loop liquid cooling system. The design separates water sources into distinct, closed loops, each serving a specific function and ensuring that municipal water never directly contacts sensitive IT equipment. “We have five different, completely separated water loops in this building,” said Morgan. “The cooling tower uses city water for evaporation, but that water never mixes with the closed loops serving the data hall. Everything is designed to maximize efficiency and protect the hardware.” The building taps into Kansas City’s district chilled water supply, which is sourced from a nearby utility plant. This provides the primary cooling resource for the facility. Inside the data center, a dedicated loop circulates a specialized glycol-based fluid, filtered to extremely low micron levels and formulated to be electronically safe. Heat exchangers transfer heat from the data hall fluid to the district chilled water, keeping the two fluids separate and preventing corrosion or contamination. Liquid-to-chip and rear-door heat exchangers are used for immediate heat removal.
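The closed-loop design described above comes down to a heat balance: the data hall fluid has to carry away each cabinet's heat at some temperature rise. The sketch below works through that arithmetic for the 50 kW and 140 kW per-cabinet figures quoted in the text. The function name, the 10 K temperature rise, and the fluid properties (specific heat of 3.8 kJ/kg·K and density of 1.03 kg/L, roughly a dilute glycol-water mix) are illustrative assumptions, not Patmos design values.

```python
def coolant_flow_lpm(heat_kw, delta_t_k, cp_kj_per_kg_k, density_kg_per_l):
    """Volumetric flow in litres per minute needed to absorb heat_kw
    with a coolant temperature rise of delta_t_k (Q = m_dot * cp * dT)."""
    # kW divided by (kJ/kg/K * K) gives kg/s
    mass_flow_kg_s = heat_kw / (cp_kj_per_kg_k * delta_t_k)
    # kg/s divided by kg/L gives L/s; multiply by 60 for L/min
    return mass_flow_kg_s / density_kg_per_l * 60.0


# Assumed values for illustration only (not from the source article).
ASSUMED_DELTA_T_K = 10.0   # coolant temperature rise across the cabinet
ASSUMED_CP = 3.8           # kJ/(kg*K), dilute glycol-water mix
ASSUMED_DENSITY = 1.03     # kg/L

if __name__ == "__main__":
    for cabinet_kw in (50, 140):  # per-cabinet range quoted in the text
        flow = coolant_flow_lpm(
            cabinet_kw, ASSUMED_DELTA_T_K, ASSUMED_CP, ASSUMED_DENSITY
        )
        print(f"{cabinet_kw} kW cabinet: about {flow:.0f} L/min of coolant")
```

Under these assumptions the required flow scales linearly with cabinet power, so the 140 kW case needs 2.8 times the flow of the 50 kW case. A smaller allowed temperature rise would raise the flow requirement proportionally.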

Why This Project Marks a Landmark Shift

The deployment of 2.3 GW of modular generation represents utility-scale capacity, but what makes it distinct is the delivery model. Instead of a centralized plant, the project uses modular gas-reciprocating “power packs” that can be phased in step with data-hall readiness. This approach allows staged energization and limits the bottlenecks that often stall AI campuses as they outgrow grid timelines or wait in interconnection queues. AI training loads fluctuate sharply, placing exceptional stress on grid stability and voltage quality. The INNIO/VoltaGrid platform was engineered specifically for these GPU-driven dynamics, emphasizing high transient performance (rapid load acceptance) and grid-grade power quality, all without dependence on batteries. Each power pack is also designed for maximum permitting efficiency and sustainability. Compared with diesel generation, modern gas-reciprocating systems materially reduce both criteria pollutants and CO₂ emissions. VoltaGrid markets the configuration as near-zero criteria air emissions and hydrogen-ready, extending allowable runtimes under air permits and making “prime-as-a-service” viable even in constrained or non-attainment markets.

2025: Momentum for Modular Prime Power

INNIO has spent 2025 positioning its Jenbacher platform as a next-generation power solution for data centers, combining fast start, high transient performance, and lower emissions compared with diesel. While the 3 MW J620 fast-start lineage dates back to 2019, this year the company sharpened its data center narrative and booked grid stability and peaking projects in markets where rapid data center growth is stressing local grids. This momentum was exemplified by an 80 MW deployment in Indonesia announced earlier in October. The same year saw surging AI-driven demand and INNIO’s growing push into North American data-center markets. Specifications for the 2.3 GW VoltaGrid package highlight the platform’s heat tolerance, efficiency, and transient response, all key attributes for powering modern AI campuses.

VoltaGrid’s 2025 Milestones

VoltaGrid’s announcements across 2025 reflect

Matt Kimball, VP and principal analyst with Moor Insights and Strategy, pointed out that AWS and Microsoft have already moved many workloads from x86 to internally designed Arm-based servers. He noted that, when Arm first hit the hyperscale datacenter market, the architecture was used to support more lightweight, cloud-native workloads with an interpretive layer where architectural affinity was “non-existent.” But now there’s much more focus on architecture, and compatibility issues “largely go away” as Arm servers support more and more workloads. “In parallel, we’ve seen CSPs expand their designs to support both scale out (cloud-native) and traditional scale up workloads effectively,” said Kimball. Simply put, CSPs are looking to monetize chip investments, and this migration signals that Google has found its performance-per-dollar (and likely performance-per-watt) better on Axion than x86. Google will likely continue to expand its Arm footprint as it evolves its Axion chip; as a reference point, Kimball pointed to AWS Graviton, which didn’t really support “scale up” performance until its v3 or v4 chip.

Arm is coming to enterprise data centers too

When looking at architectures, enterprise CIOs should ask themselves questions such as what instance do they use for cloud workloads, and what servers do they deploy in their data center, Kimball noted. “I think there is a lot less concern about putting my workloads on an Arm-based instance on Google Cloud, a little more hesitance to deploy those Arm servers in my datacenter,” he said. But ultimately, he said, “Arm is coming to the enterprise datacenter as a compute platform, and Nvidia will help usher this in.” Info-Tech’s Jain agreed that Nvidia is the “biggest cheerleader” for Arm-based architecture, and Arm is increasingly moving from niche and mobile use to general-purpose and AI workload execution.

What 6 GW of GPUs Really Means

The 6 GW of accelerator load envisioned under the OpenAI–AMD partnership will be distributed across multiple hyperscale AI factory campuses. If OpenAI begins with 1 GW of deployment in 2026, subsequent phases will likely be spread regionally to balance supply chains, latency zones, and power procurement risk. Importantly, this represents entirely new investment in both power infrastructure and GPU capacity. OpenAI and its partners have already outlined multi-GW ambitions under the broader Stargate program; this new initiative adds another major tranche to that roadmap.

Designing for the AI Factory Era

These upcoming facilities are being purpose-built for next-generation AI factories, where MI450-class clusters could drive rack densities exceeding 100 kW. That level of compute concentration makes advanced power and cooling architectures mandatory, not optional. Expected solutions include:

- Warm-water liquid cooling (manifold, rear-door, and CDU variants) as standard practice.
- Facility-scale water loops and heat-reuse systems, including potential district-heating partnerships where feasible.
- Medium-voltage distribution within buildings, emphasizing busway-first designs and expanded fault-current engineering.

While AMD has not yet disclosed thermal design power (TDP) specifications for the MI450, a 1 GW campus target implies tens of thousands of accelerators. That scale assumes liquid cooling, ultra-dense racks, and minimal network latency footprints, pushing architectures decisively toward an “AI-first” orientation. Design considerations for these AI factories will likely include:

- Liquid-to-liquid cooling plants engineered for step-function capacity adders (200–400 MW blocks).
- Optics-friendly white space layouts with short-reach topologies, fiber raceways, and aisles optimized for module swaps.
- Substation adjacency and on-site generation envelopes negotiated during early land-banking phases.

Networking, Memory, and Power Integration

As compute density scales, networking and memory bottlenecks will define infrastructure design. Expect fat-tree and dragonfly network topologies, 800 G–1.6 T interconnects, and aggressive optical-module roadmaps to minimize collective-operation latency, aligning with recent disclosures from major networking vendors.
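To give a feel for the scale implied by the figures in this section, here is a rough back-of-the-envelope sketch. It treats the 1 GW first phase as pure IT load at a flat 100 kW per rack and sizes cooling plant in the 200 to 400 MW blocks mentioned earlier. Those simplifications, along with the function names, are assumptions made for illustration; the source does not state how the 1 GW splits between IT load and facility overhead.

```python
import math


def racks_for_load(it_load_kw, rack_kw):
    """Number of racks needed to host it_load_kw at rack_kw per rack."""
    return math.ceil(it_load_kw / rack_kw)


def cooling_blocks(it_load_mw, block_mw):
    """Number of cooling-plant capacity blocks needed to cover it_load_mw."""
    return math.ceil(it_load_mw / block_mw)


if __name__ == "__main__":
    campus_mw = 1000   # 1 GW phase, assumed here to be all IT load
    rack_kw = 100      # rack density quoted in the text as a lower bound

    racks = racks_for_load(campus_mw * 1000, rack_kw)
    print(f"Racks at {rack_kw} kW each: {racks}")

    for block_mw in (200, 400):  # block sizes quoted in the text
        blocks = cooling_blocks(campus_mw, block_mw)
        print(f"Cooling blocks of {block_mw} MW: {blocks}")
```

Because the text says rack densities could exceed 100 kW, the rack count from this sketch is best read as an upper bound under the stated assumptions; denser racks would reduce it.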

In a new report spanning 2022 through 2024, the Union of Concerned Scientists (UCS) identifies a significant regulatory gap in the PJM Interconnection’s planning and rate-making process, one that allows most high-voltage (“transmission-level”) interconnection costs for large, especially AI-scale, data centers to be socialized across all utility customers. The result, UCS argues, is a multi-billion-dollar pass-through that is poised to grow as more data center projects move forward, because these assets are routinely classified as ordinary transmission infrastructure rather than customer-specific hookups. According to the report, between 2022 and 2024, utilities initiated more than 150 local transmission projects across seven PJM states specifically to serve data center connections. In 2024 alone, 130 projects were approved with total costs of approximately $4.36 billion. Virginia accounted for nearly half that total, just under $2 billion, followed by Ohio ($1.3 billion) and Pennsylvania ($492 million) in data-center-related interconnection spending. Yet only six of those 130 projects, about 5 percent, were reported as directly paid for by the requesting customer. The remaining 95 percent, representing more than $4 billion in 2024 connection costs, were rolled into general transmission charges and ultimately recovered from all retail ratepayers.

How Does This Happen?

When data center project costs are discussed, the focus is usually on the price of the power consumed, or megawatts multiplied by rate. What the UCS report isolates, however, is something different: the cost of physically delivering that power, meaning the substations, transmission lines, and related infrastructure needed to connect hyperscale facilities to the grid. So why aren’t these substantial consumer-borne costs more visible? The report identifies several structural reasons for what effectively functions as a regulatory loophole in how development expenses are reported and allocated:

Jurisdictional split. High-voltage facilities fall under the Federal Energy Regulatory Commission (FERC), while retail electricity rates are governed by state public utility

With the conclusion of the 2025 OCP Global Summit, William G. Wong, Senior Content Director at DCF’s sister publications Electronic Design and Microwaves & RF, published a comprehensive roundup of standout technologies unveiled at the event. For Data Center Frontier readers, we’ve revisited those innovations through the lens of data center impact, focusing on how they reshape infrastructure design and operational strategy. This year’s OCP Summit marked a decisive shift toward denser GPU racks, standardized direct-to-chip liquid cooling, 800-V DC power distribution, high-speed in-rack fabrics, and “crypto-agile” platform security. Collectively, these advances aim to accelerate time-to-capacity, reduce power-distribution losses at megawatt rack scales, simplify retrofits in legacy halls, and fortify data center platforms against post-quantum threats.

Rack Design and Cooling: From Ad-Hoc to Production-Grade Liquid Cooling

NVIDIA’s Vera Rubin compute tray, newly offered to OCP for standardization, packages Rubin-generation GPUs with an integrated liquid-cooling manifold and PCB midplane. Compared with the GB300 tray, Vera Rubin represents a production-ready module delivering four times the memory and three times the memory bandwidth: a 7.5× performance factor at rack scale, with 150 TB of memory at 1.7 PB/s per rack. The system implements 45 °C liquid cooling, a 5,000-amp liquid-cooled busbar, and on-tray energy storage with power-resilience features such as flexible 100-amp whips and automatic-transfer power-supply units. NVIDIA also previewed a Kyber rack generation targeted for 2027, pivoting from 415/480 VAC to 800 V DC to support up to 576 Rubin Ultra GPUs, potentially eliminating the 200-kg copper busbars typical today. These refinements are aimed at both copper reduction and aisle-level manageability. Wiwynn’s announcements filled in the practicalities of deploying such densities. The company showcased rack- and system-level designs across NVIDIA GB300 NVL72 (72 Blackwell Ultra GPUs with 800 Gb/s ConnectX-8 SuperNICs) for large-scale inference and reasoning, and HGX B300 (eight GPUs /

And Microsoft isn’t the only one that is ramping up its investments in AI-enabled data centers. Rival cloud service providers are all investing in either upgrading or opening new data centers to capture a larger chunk of business from developers and users of large language models (LLMs). In a report published in October 2024, Bloomberg Intelligence estimated that demand for generative AI would push Microsoft, AWS, Google, Oracle, Meta, and Apple to devote $200 billion between them to capex in 2025, up from $110 billion in 2023. Microsoft is one of the biggest spenders, followed closely by Google and AWS, Bloomberg Intelligence said. Its estimate of Microsoft’s capital spending on AI, at $62.4 billion for calendar 2025, is lower than Smith’s claim that the company will invest $80 billion in the fiscal year to June 30, 2025. Both figures, though, are way higher than Microsoft’s 2020 capital expenditure of “just” $17.6 billion. The majority of the increased spending is tied to cloud services and the expansion of AI infrastructure needed to provide compute capacity for OpenAI workloads. Separately, last October Amazon CEO Andy Jassy said his company planned total capex spend of $75 billion in 2024 and even more in 2025, with much of it going to AWS, its cloud computing division.

Self-driving tractors might be the path to self-driving cars. John Deere has revealed a new line of autonomous machines and tech across agriculture, construction and commercial landscaping. The Moline, Illinois-based John Deere has been in business for 187 years, yet it has become a regular at the big tech trade show in Las Vegas as a non-tech company showing off technology, and it is back at CES 2025 with more autonomous tractors and other vehicles. This is not something we usually cover, but John Deere has a lot of data that is interesting in the big picture of tech. The message from the company is that there aren’t enough skilled farm laborers to do the work that its customers need. It’s been a challenge for most of the last two decades, said Jahmy Hindman, CTO at John Deere, in a briefing. Much of the tech will come this fall and after that. He noted that the average farmer in the U.S. is over 58 and works 12 to 18 hours a day to grow food for us. And he said the American Farm Bureau Federation estimates there are roughly 2.4 million farm jobs that need to be filled annually, and the agricultural work force continues to shrink. (This is my hint to the anti-immigration crowd).

John Deere’s autonomous 9RX Tractor. Farmers can oversee it using an app.

While each of these industries experiences its own set of challenges, a commonality across all is skilled labor availability. In construction, about 80 percent of contractors struggle to find skilled labor. And in commercial landscaping, 86% of landscaping business owners can’t find labor to fill open positions, he said. “They have to figure out how to do

2025 is poised to be a pivotal year for enterprise AI. The past year has seen rapid innovation, and this year will see the same. This has made it more critical than ever to revisit your AI strategy to stay competitive and create value for your customers. From scaling AI agents to optimizing costs, here are the five critical areas enterprises should prioritize for their AI strategy this year.

1. Agents: the next generation of automation

AI agents are no longer theoretical. In 2025, they’re indispensable tools for enterprises looking to streamline operations and enhance customer interactions. Unlike traditional software, agents powered by large language models (LLMs) can make nuanced decisions, navigate complex multi-step tasks, and integrate seamlessly with tools and APIs. At the start of 2024, agents were not ready for prime time, making frustrating mistakes like hallucinating URLs. They started getting better as frontier large language models themselves improved. “Let me put it this way,” said Sam Witteveen, cofounder of Red Dragon, a company that develops agents for companies, and that recently reviewed the 48 agents it built last year. “Interestingly, the ones that we built at the start of the year, a lot of those worked way better at the end of the year just because the models got better.” Witteveen shared this in the video podcast we filmed to discuss these five big trends in detail. Models are getting better and hallucinating less, and they’re also being trained to do agentic tasks. Another feature that the model providers are researching is a way to use the LLM as a judge, and as models get cheaper (something we’ll cover below), companies can use three or more models to

OpenAI has taken a more aggressive approach to red teaming than its AI competitors, demonstrating its security teams’ advanced capabilities in two areas: multi-step reinforcement and external red teaming. OpenAI recently released two papers that set a new competitive standard for improving the quality, reliability and safety of AI models in these two techniques and more. The first paper, “OpenAI’s Approach to External Red Teaming for AI Models and Systems,” reports that specialized teams outside the company have proven effective in uncovering vulnerabilities that might otherwise have made it into a released model because in-house testing techniques may have missed them. In the second paper, “Diverse and Effective Red Teaming with Auto-Generated Rewards and Multi-Step Reinforcement Learning,” OpenAI introduces an automated framework that relies on iterative reinforcement learning to generate a broad spectrum of novel, wide-ranging attacks.

Going all-in on red teaming pays practical, competitive dividends

It’s encouraging to see competitive intensity in red teaming growing among AI companies. When Anthropic released its AI red team guidelines in June of last year, it joined AI providers including Google, Microsoft, Nvidia, OpenAI, and even the U.S.’s National Institute of Standards and Technology (NIST), which all had released red teaming frameworks. Investing heavily in red teaming yields tangible benefits for security leaders in any organization. OpenAI’s paper on external red teaming provides a detailed analysis of how the company strives to create specialized external teams that include cybersecurity and subject matter experts. The goal is to see if knowledgeable external teams can defeat models’ security perimeters and find gaps in their security, biases and controls that prompt-based testing couldn’t find. What makes OpenAI’s recent papers noteworthy is how well they define using human-in-the-middle

The app, and other tools like it, could help doctors and caregivers. They could be especially useful in the care of people who aren’t able

MIT Technology Review’s What’s Next series looks across industries, trends, and technologies to give you a first look at the future. You can read the

In partnership with Snowflake As organizations weave AI into more of their operations, senior executives are realizing data engineers hold a central role in bringing these

This is today’s edition of The Download, our weekday newsletter that provides a daily dose of what’s going on in the world of technology. This