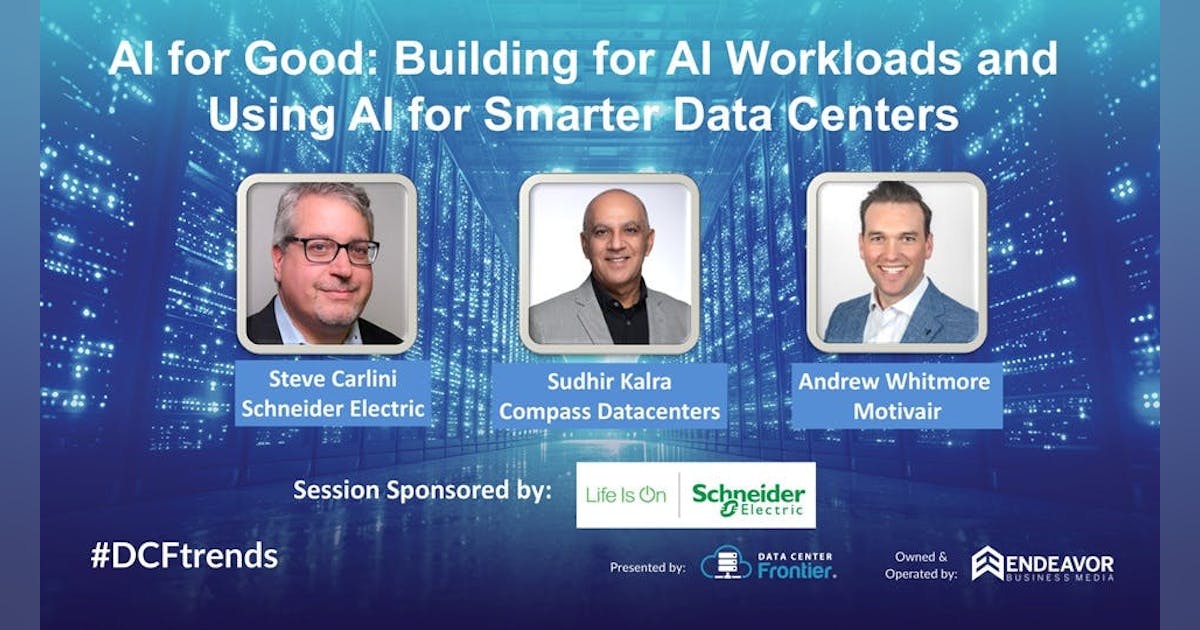

At the 2025 Data Center Frontier Trends Summit (Aug. 26-28) in Reston, Va., the conversation around AI and infrastructure moved well past the hype. In a panel sponsored by Schneider Electric—“AI for Good: Building for AI Workloads and Using AI for Smarter Data Centers”—three industry leaders explored what it really means to design, cool and operate the new class of AI “factories,” while also turning AI inward to run those facilities more intelligently.

Moderated by Data Center Frontier Editor in Chief Matt Vincent, the session brought together:

- Steve Carlini, VP, Innovation and Data Center Energy Management Business, Schneider Electric
- Sudhir Kalra, Chief Data Center Operations Officer, Compass Datacenters
- Andrew Whitmore, VP of Sales, Motivair

Together, they traced both sides of the “AI for Good” equation: building for AI workloads at densities that would have sounded impossible just a few years ago, and using AI itself to reduce risk, improve efficiency and minimize environmental impact.

From Bubble Talk to “AI Factories”

Carlini opened by acknowledging the volatility surrounding AI investments, citing recent headlines and even Sam Altman’s public use of the word “bubble” to describe the current phase of exuberance.

“It’s moving at an incredible pace,” Carlini noted, pointing out that roughly half of all VC money this year has flowed into AI, with more already spent than in all of the previous year. Not every investor will win, he said, and some companies pouring in hundreds of billions may not recoup their capital.

But for infrastructure, the signal is clear: the trajectory is up and to the right.

- GPU generations are cycling faster than ever.
- Densities are climbing from high double-digit kilowatts per rack toward hundreds of kilowatts.
- The hyperscale “AI factories,” as NVIDIA calls them, are scaling to campus capacities measured in gigawatts.

Carlini reminded the audience that in 2024, a one-megawatt rack still sounded exotic. In 2025, it feels much closer to reality—and the roadmap is already targeting the next step.

AI’s Opportunity—and Operational Reality

For Compass Datacenters’ operations chief Sudhir Kalra, AI sits in a long line of transformative technologies.

“AI has the potential to fundamentally shift our daily lives like electricity did, like automobiles did,” he said. Whether that happens in five, ten or fifteen years is less important than the direction of travel.

Kalra underscored two realities:

- The data center industry is the enabler. AI’s boom is a primary driver of today’s construction pipeline. Where a 1 MW facility once felt large in enterprise, hyperscale customers now casually discuss gigawatt campuses. A 1 MW data hall is small. One-megawatt racks are nearly here.

- The workforce gap is widening. Despite fears that AI will eliminate jobs, Kalra’s daily challenge is the opposite: he can’t find enough people who both want to do critical facilities work and can perform it competently. Even in creative fields, he noted, a recent Billy Joel video produced with AI ultimately required more people than a conventional workflow.

“All of this AI technology doesn’t just appear out of nowhere,” he said. “Someone has to design it, build it, code it, and fix it when it breaks.”

That shortage of skilled hands is exactly why operational teams are looking to AI—not to replace people, but to make the people they do have more effective and less error-prone.

Cooling the First Inning of the AI Era

From Motivair’s vantage point, the AI build-out is still at the very beginning.

“This is the first pitch of the first inning of probably a three-game series,” said Andrew Whitmore. Demand is “insatiable,” use cases are multiplying, and every part of the ecosystem—utilities, developers, operators, supply chain—is being asked to raise the bar.

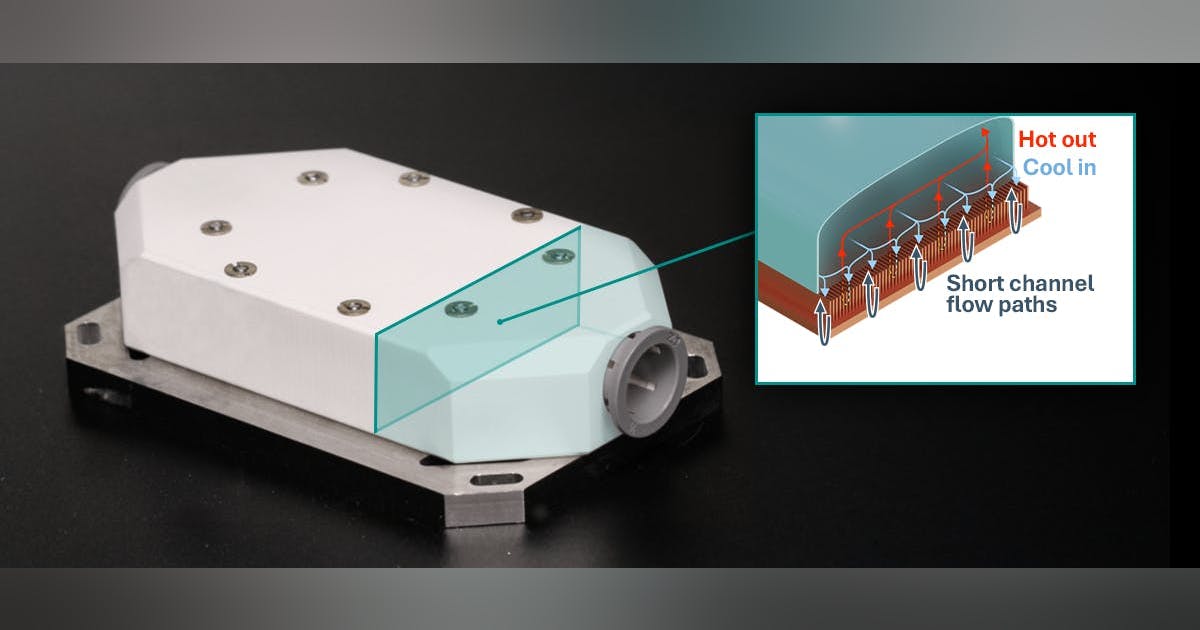

Nowhere is that more tangible than in cooling.

Whitmore traced the arc of data center cooling over the last two decades.

“The closer you are to the heat source,” he said, “the more effective and efficient you can be.”

Yet most of the world’s data centers weren’t built for AI racks drawing 100–600 kW. They’re brownfield sites, often with rack limits of 15–20 kW and air-only cooling. In the U.S. alone, nearly 5,300 existing facilities will need to be revitalized, not bulldozed.

That’s where Whitmore sees a huge role for liquid cooling infrastructure:

- Introducing direct-to-chip cooling into existing air-cooled facilities.
- Using liquid-to-air CDUs to bridge old and new architectures.
- Designing solutions that can be installed and maintained without crippling day-to-day operations.

“There’s no monotony in the day-to-day,” he said. “No two designs or challenges are the same.”

Designing for 132 kW Racks—and 600 kW on the Horizon

Carlini then pulled back the curtain on Schneider Electric’s ongoing collaboration with NVIDIA to keep up with this densification.

NVIDIA supplies a chip roadmap; Schneider builds digital twins of the future clusters and runs full-scale testing before releasing any reference design. That process has already surfaced a critical reality: the real world is hotter than the spec sheet.

- Early plans for Blackwell GB200 racks assumed ~120 kW per rack.
- When Schneider tested, the actual number rose to ~132 kW due to higher real-world power draw and thermal behavior.
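To put the gap between those two figures in perspective, here is a minimal back-of-the-envelope sketch. The 1 MW power budget is a hypothetical round number chosen for illustration, not a figure from the panel, and the function names are made up for this example.

```python
def overshoot_pct(planned_kw: float, measured_kw: float) -> float:
    """Percentage by which the measured rack load exceeds the planned load."""
    return (measured_kw - planned_kw) / planned_kw * 100.0


def racks_within_budget(budget_kw: float, rack_kw: float) -> int:
    """Whole racks that fit inside a fixed power budget (hypothetical budget)."""
    return int(budget_kw // rack_kw)


planned_kw = 120.0   # early planning assumption cited by Carlini
measured_kw = 132.0  # approximate figure after Schneider's testing
budget_kw = 1000.0   # assumed 1 MW budget, for illustration only

print(round(overshoot_pct(planned_kw, measured_kw), 1))  # 10.0
print(racks_within_budget(budget_kw, planned_kw))        # 8
print(racks_within_budget(budget_kw, measured_kw))       # 7
```

Under that assumed budget, a 10% overshoot per rack is enough to cost one full rack out of eight, which is the kind of discrepancy that full-scale testing is meant to catch before a design is released.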

Looking ahead:

- Blackwell Ultra (GB300) is expected to push densities even higher.
- Future architectures like Vera Rubin and Kyber point toward rack densities in the hundreds of kilowatts; Carlini suggested 600 kW per rack is in sight.

“There just aren’t enough data center designers in the world to reinvent power and cooling for every new chip generation,” he said. That’s why validated, documented reference designs—from substation to rack manifold—are becoming essential for anyone trying to deploy AI clusters at scale and at speed.

Prefab, Modularity and the Manufacturing Mindset

As racks grow more intense and systems more complex, the panelists agreed: the industry has to stop thinking of data centers as one-off construction projects, and start thinking like manufacturers.

Whitmore emphasized the role of prefabricated modular blocks:

- Electrical blocks and data hall blocks built in controlled factory environments.
- Integrated piping, busway, fire protection and leak detection designed as a system.
- Consistent builds that shorten construction schedules and eliminate trades “stepping on each other’s toes.”

This modularity not only increases speed to market, it makes unknowns more manageable. Hyperscalers today often provision for a 50/50 split between air and liquid cooling, knowing the roadmap could tip either way; a modular approach lets them adapt as strategies evolve.

Kalra picked up that theme from Compass’ perspective.

“We don’t think of ourselves as constructing a data center,” he said. “We think of it as assembling one.”

Compass designs buildings as 100-year shells wrapped around modular technology:

- Technology stacks refreshed roughly every 4–7 years.
- Facility stacks (power and cooling) refreshed every 20–25 years.
- Precast panels instead of poured walls.
- Modular electrical and cooling systems that can be swapped one block or one hall at a time.

The goal is simple: refresh without disrupting operations. That’s only achievable if modularity is baked in from the start.

Carlini added that the same principle applies at the rack and cluster level. An AI “pod” of NVL72 racks—$3 million each and weighing ~5,000 pounds—must arrive as a well-engineered system: pre-integrated liquid cooling, manifolds, sensors, leak detection, and control logic. “Time to cooling” is one thing, he said; “time to optimization” is another.

AI Inside the Operations: From Calendar-Based to Condition-Based

If “AI for good” begins with building AI factories more intelligently, it continues with using AI to run them more safely and efficiently.

Kalra highlighted one of the most stubborn realities in critical facilities: human error.

Uptime Institute has long observed that roughly 60–70% of facility incidents are tied to human factors. Combine that with a shortage of experienced staff, and the question becomes: how do you keep hands out of the machines unless they truly need to be there?

For Compass, the answer is a shift from calendar-based to condition-based maintenance.

Drawing on lessons from aviation’s Reliability Centered Maintenance (RCM), Kalra described Compass’ work with Schneider Electric to embed sensors into electrical and mechanical equipment and feed those signals into ML models that can:

- Detect emerging anomalies before failures.
- Recommend when to intervene and when to safely defer.
- Reduce unnecessary truck rolls and invasive maintenance on healthy equipment.
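As a rough illustration of what "detecting emerging anomalies" from sensor data can look like, the sketch below flags readings that stray far from a trailing baseline. It is a generic rolling z-score check, not the models Compass or Schneider Electric actually use; the function name, window size, threshold and temperature values are all assumptions made up for this example.

```python
from statistics import mean, stdev


def flag_anomalies(readings, window=5, threshold=3.0):
    """Return indices of readings that deviate from the trailing window's
    mean by more than `threshold` standard deviations."""
    flagged = []
    for i in range(window, len(readings)):
        history = readings[i - window:i]
        baseline = mean(history)
        spread = stdev(history)
        if spread == 0:
            # A perfectly flat history gives no scale to compare against; skip.
            continue
        if abs(readings[i] - baseline) / spread > threshold:
            flagged.append(i)
    return flagged


# Hypothetical transformer temperature samples (degrees C), one per interval.
temps = [61.0, 61.2, 60.9, 61.1, 61.0, 61.1, 60.8, 68.5, 61.0]
print(flag_anomalies(temps))  # [7]
```

A condition-based program would route a flag like this to a technician for review, while equipment with no flags could have an intrusive inspection deferred. Production systems would typically combine many signals and far more robust models than this single-sensor check.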

The result, as Compass has shared publicly, has been a roughly 40% reduction in manual, on-site maintenance interventions and about a 20% decrease in OPEX—without compromising reliability.

Compass still uses a hybrid approach, he stressed, keeping some periodic checks where the models aren’t yet mature. But the direction is clear: AI-driven, condition-based maintenance will become ubiquitous in data center operations, particularly as densities and consequences of failure escalate.

Carlini added that as racks push past 100 kW, the margin for error shrinks dramatically. At 132 kW per rack and beyond, “your buffer for overheating and shutting down dramatically shortens,” which makes advance warning—not post-mortems—absolutely critical.

AI, Energy and Sustainability: From Grid to Chip

When the conversation turned to sustainability, Carlini zoomed out to the grid.

Modern utility distribution networks are increasingly complex and distributed. Schneider’s software platforms already embed AI to help grid operators:

- Forecast loads across weather patterns, local events and diverse customer types.
- Manage growing fleets of battery energy storage systems (BESS) and behind-the-meter assets.
- Deal with curtailment of renewables by strategically charging and discharging storage.

For large AI campuses, he noted, a one-gigawatt facility could require “ten football fields of batteries” for multi-hour backup—assets that can be leveraged not just for resiliency, but for grid support. Meanwhile, regulations governing standby generators and fuel types are evolving to allow more flexible, lower-carbon participation.

Schneider participates in initiatives like EPRI’s DC Flex program, where utilities and data center operators collaborate on frameworks for flexible, grid-aware operations.

Kalra, staying in his lane, focused on build-time sustainability:

- Using AI to optimize construction sequencing.
- Maximizing offsite manufacturing to reduce heavy truck rolls and on-site disruption.
- Treating the build as a “Lego project” rather than traditional construction, shortening time-to-ready and the period of community impact around a site.

Whitmore emphasized how liquid cooling itself advances sustainability. By moving heat transfer closer to the chip, facilities can:

- Reduce fan horsepower and large-scale air movements.
- Use tighter control through extensive temperature and pressure sensing.
- Enable heat reuse schemes, for example piping low-grade heat to nearby greenhouses or district systems.

“With the predictive analytics and the instrumentation in these systems,” he said, “you’re not just getting your cooling—you’re optimizing an ecosystem.”

Five Years Out: Power, Ubiquity and Standards

In the session’s final question, the panelists were asked to imagine one AI-driven innovation that would reshape data centers for a net-positive impact five years from now.

Carlini’s answer centered on power architecture. The industry, he said, is up against the limits of what can practically be delivered at 400 V. AI factories are already driving a shift to 800 V DC architectures—an approach Google and NVIDIA have publicly championed. Power supplies will move into side-mounted “power pump” units feeding dense, liquid-cooled GPU trays, fundamentally changing how power is distributed and protected in the white space. The entire industry—from switchgear to busway to rack—will have to adapt.

Kalra declined to pick a single innovation.

“I think AI will be everywhere,” he said, comparing it to the Industrial Revolution. Just as no one can point to a solitary invention that defined that era, he expects AI to permeate every part of design, construction, operations and community impact, for good or ill depending on how it’s applied.

Whitmore focused on standardization as the quiet but crucial innovation. Groups like ASHRAE TC 9.9 and international collaborators are already developing standards for coolant quality, temperature approaches and interoperability in liquid-cooled systems. That kind of global alignment, he argued, will be essential to scaling AI infrastructure “effectively, efficiently and sustainably” rather than as a collection of incompatible one-offs.

AI for Good: Beyond the Buzzword

If there was a through-line to the panel, it was that “AI for Good” is less about slogans and more about hard engineering and disciplined operations:

- Designing power and cooling systems that can realistically support 100–600 kW racks.
- Revitalizing thousands of existing data centers with liquid cooling and modular upgrades.
- Using AI to reduce human error, target maintenance, and cut waste, both in operations and in construction.
- Working with utilities and standards bodies to ensure that AI campuses are grid assets, not liabilities.

The AI wave is still in its early innings, but as Carlini, Kalra and Whitmore made clear in Reston, the industry’s choices now will determine whether today’s AI factories become tomorrow’s regret—or enduring examples of AI applied for good across technology, economics and the communities that host them.

This feature is the first in Data Center Frontier’s series recapping key sessions from the DCF Trends Summit 2025.