Analysts expect AI workloads to grow more varied and more demanding in the coming years, driving the need for architectures tuned for inference performance and putting added pressure on data center networks.

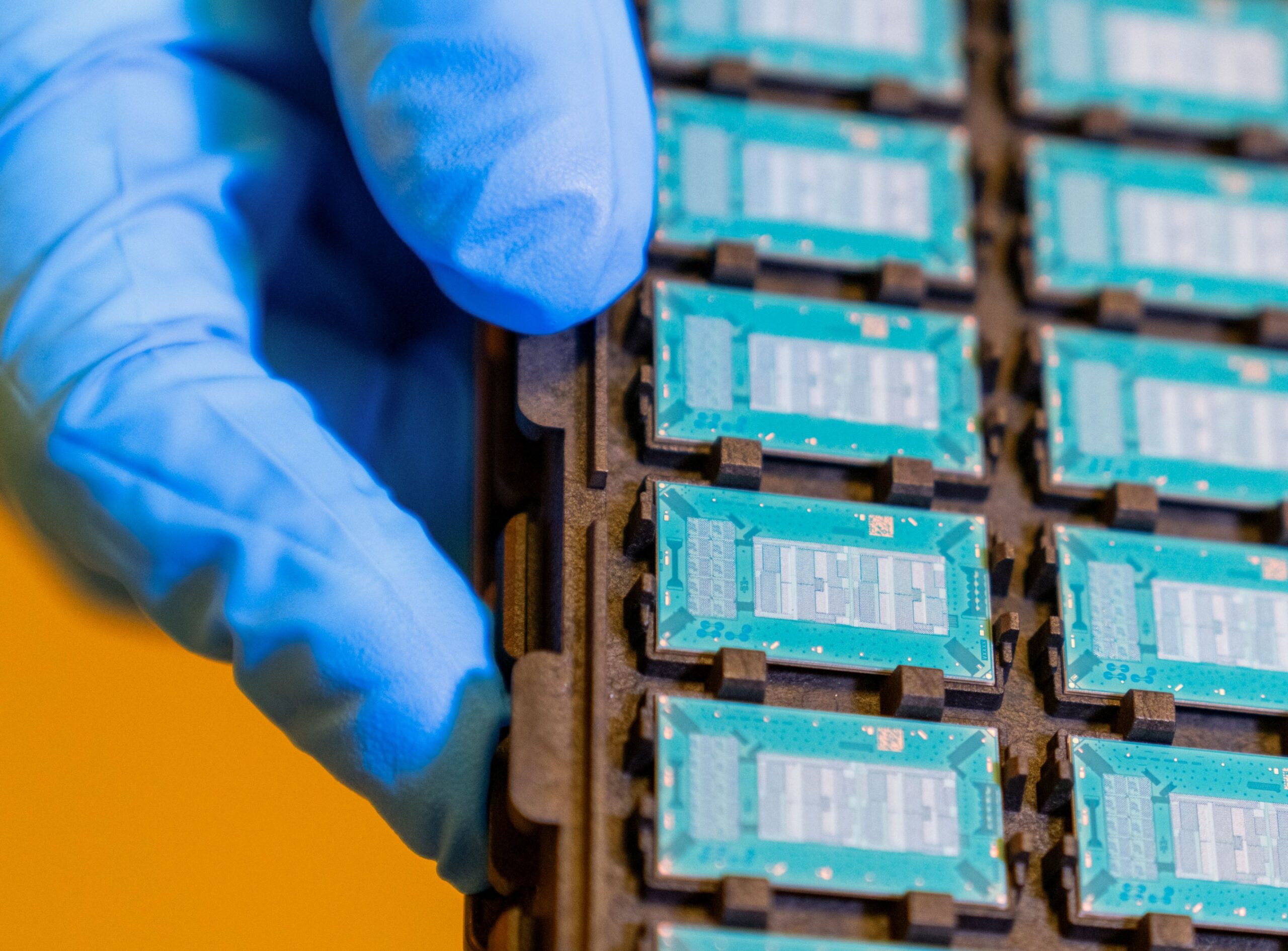

“This is prompting hyperscalers to diversify their computing systems, using Nvidia GPUs for general-purpose AI workloads, in-house AI accelerators for highly optimized tasks, and systems such as Cerebras for specialized low-latency workloads,” said Neil Shah, vice president for research at Counterpoint Research.

As a result, AI platforms operating at hyperscale are pushing infrastructure providers away from monolithic, general-purpose clusters toward more tiered and heterogeneous infrastructure strategies.

“OpenAI’s move toward Cerebras inference capacity reflects a broader shift in how AI data centers are being designed,” said Prabhu Ram, vice president of the Industry Research Group at CyberMedia Research. “This move is less about replacing Nvidia and more about diversification as inference scales.”

At hyperscale, infrastructure begins to resemble an AI factory, where city-scale power delivery, dense east–west networking, and low-latency interconnects matter more than peak FLOPS, Ram added.

“At this magnitude, conventional rack density, cooling models, and hierarchical networks become impractical,” said Manish Rawat, semiconductor analyst at TechInsights. “Inference workloads generate continuous, latency-sensitive traffic rather than episodic training bursts, pushing architectures toward flatter network topologies, higher-radix switching, and tighter integration of compute, memory, and interconnect.”