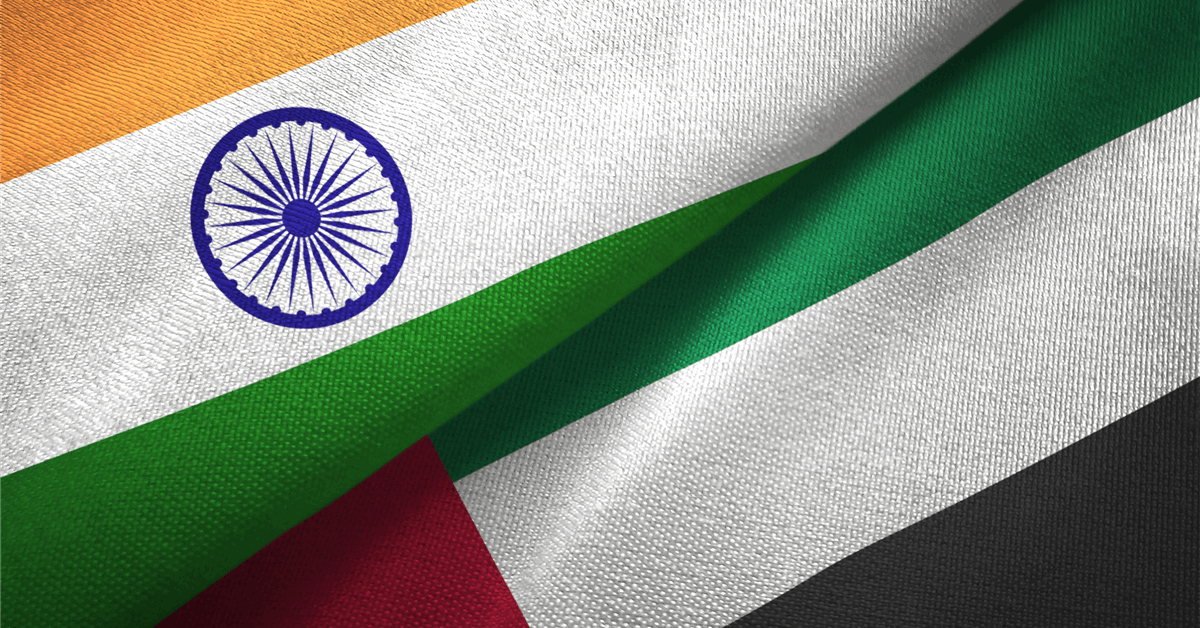

India is now the United Arab Emirates’ (UAE) largest customer of LNG, ADNOC Gas said in a release sent to Rigzone by the ADNOC Gas team on Monday.

The company noted in the release that 20 percent of the LNG operated by ADNOC Gas will be supplied to India by 2029, revealing that $20 billion worth of LNG contracts were signed in the last 24 months between ADNOC Gas and Indian companies.

In Monday’s release, ADNOC Gas announced the signing of a sales and purchase agreement valued at between $2.5 billion and $3 billion for a period of ten years with Hindustan Petroleum Corporation Limited (HPCL).

The latest agreement was announced during a visit to India by President Sheikh Mohamed bin Zayed Al Nahyan, where he met with the Indian Prime Minister, Narendra Modi, the release noted. It added that, during the visit, Ahmed Al Jaber, UAE Minister of Industry and Advanced Technology and ADNOC Managing Director and Group CEO, and Vikas Kaushal, Chairman and Managing Director of Hindustan Petroleum Corporation Limited, exchanged the signed contract, “reiterating the importance of the growing relationship between ADNOC, its partners, and customers in India”.

The deal converted a previously signed heads of agreement between the two companies into a long-term SPA, the release highlighted, pointing out that the agreement is for the export of 0.5 million tons per annum of LNG.

“The milestone agreement represents a further step in strengthening the strategic partnership between the UAE and India, while reinforcing ADNOC Gas’ role as a reliable and trusted supplier of LNG to Asia’s fast-growing markets,” ADNOC Gas said in the release.

“India is now the UAE’s largest customer and a very important part of ADNOC Gas’ LNG strategy. The company’s growth is tied to the continued success of India,” it added.

ADNOC Gas noted in its release that, by 2029, it will be the operator for 15.6 million tons per annum of LNG, of which 3.2 million tons per annum is contracted to Indian energy companies, including HPCL.

“This agreement will be supplied from ADNOC Gas’ Das Island liquefaction facility, which has a production capacity of up to six million tons per annum and ranks among the world’s longest-operating LNG plants,” ADNOC Gas said in the release.

“Since commencing operations, Das Island has delivered more than 3,500 LNG cargoes globally, demonstrating its strong operational performance and long-standing reliability,” it added.

“The Hindustan Petroleum agreement aligns with ADNOC Gas’ strategy to broaden its customer base and expand its presence in India and in key growth markets across Asia,” it continued.

“Over the past three years, the company has secured a series of long-term LNG agreements ranging from 0.4 to 1.2 million tons per annum, with contract durations of up to 14 years,” it said.

“These contracts further reinforce ADNOC Gas’ position as a leading supplier of reliable, lower-carbon LNG to Asia’s rapidly growing energy markets,” ADNOC Gas went on to state.

In the release, Fatema Al Nuaimi, Chief Executive Officer of ADNOC Gas, said, “we are pleased to sign this long-term LNG supply agreement with Hindustan Petroleum Corporation which reflects the strong and growing energy partnership between the UAE and India”.

“This agreement underscores ADNOC Gas’ commitment to delivering reliable LNG to meet global demand, while supporting India’s ambition to increase natural gas to 15 percent of its energy mix by 2030,” Al Nuaimi added.

In a release posted on its website on Monday, HPCL confirmed that it had signed a long-term LNG sale and purchase agreement with ADNOC Gas.

“Under the terms of this SPA, HPCL will receive LNG at its five million ton per annum LNG Regasification Terminal at Chhara, Gujarat, which was dedicated to the nation by Hon’ble Prime Minister in September 2025,” HPCL said in its release.

“The supplies under this agreement will support HPCL in meeting the requirements of its refineries, City Gas Distribution (CGD) network and gas demand across key sectors, such as, fertilizers, power and petrochemicals etc.,” it added.

“This partnership will further strengthen HPCL’s position as a reliable supplier of natural gas, complementing its portfolio of other petroleum products, to meet the nation’s growing and evolving energy needs,” it continued.

“This strategic partnership aligns HPCL with India’s aspiration of increasing the share of gas in its energy basket. Such long-term contracts play a vital role in ensuring reliability, affordability and supply security amidst a highly volatile geo-political and evolving global energy landscape,” HPCL noted.

HPCL went on to state in its release that “this agreement also signifies a deepening relationship between India and UAE, with India being the largest LNG customer of UAE”.
