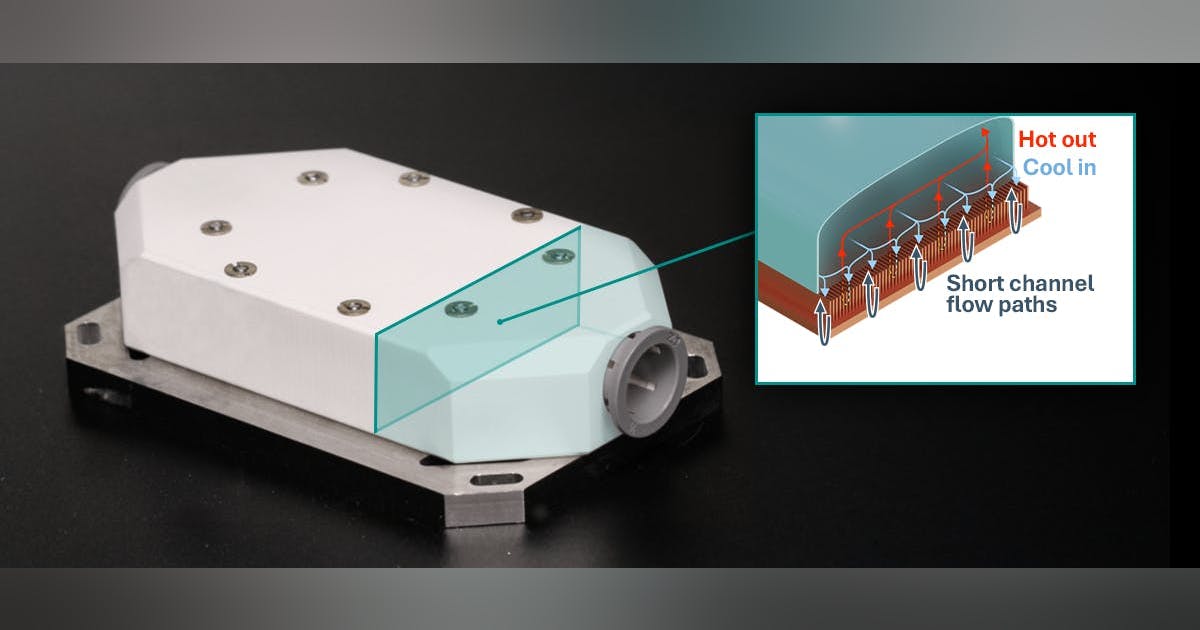

Jackson added, “It also looks like the bet will be on photon transfer optics. Photonics-based computers have been in development as prototypes for more than a decade, and seek to address the physical limitations of copper as an electrical conduit.”

By relying on the transfer of light through glass, he said, “this architectural approach is more energy efficient and promises to be much faster than current chips. If Nvidia can mass-manufacture a next-generation GPU that integrates photonics right into its silicon, then they can solve a couple of big problems for AI developers: power consumption and speed.”

Sanchit Vir Gogia, chief analyst at Greyhound Research, said that the dual $2 billion investment “sends a signal about AI infrastructure bottlenecks: this is the moment where the industry quietly admits that AI scaling is no longer primarily a chip story. It is a communication story.”

For the last few years, he said, “the visible constraint was straightforward. Enterprises could not get enough GPUs. Hyperscalers reserved allocation. Vendors rationed supply. That was the first choke point. But once accelerators are deployed at scale, the bottleneck moves. It does not disappear.”

Gogia added that in today’s AI clusters, “each accelerator depends on dozens of high-speed links to talk to its neighbours. Multiply that across the rack and you end up with thousands of interconnects operating continuously. Every one of those links draws power. Every one introduces latency and signal integrity considerations. Every one carries a probability of failure.”

What Nvidia is signalling is that the next bottleneck is the fabric itself, he pointed out. “You can add more GPUs, but if the network layer cannot scale proportionally, utilisation falls and economics deteriorate,” he said. “The company is moving upstream to ensure the arteries of AI infrastructure do not become the new point of scarcity. This is not a marketing flourish. It is a structural admission that the networking wall is real.”