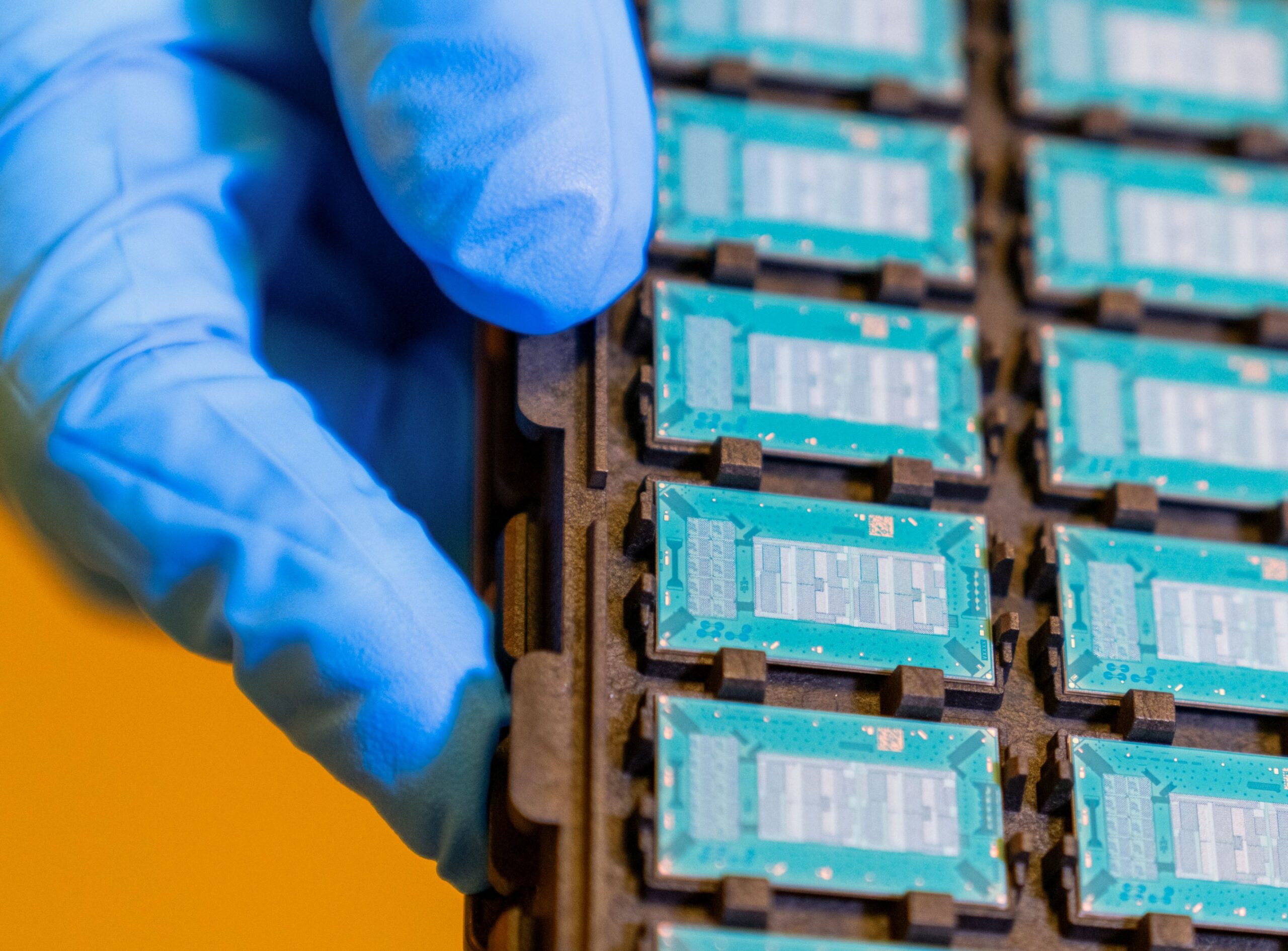

With demand for AI chips rising and supplies tightening, Meta is taking its AI computing needs into its own hands and developing more of its own chips: It will produce four new generations of chips over the next two years.

Cloud computing giants including Meta, AWS, and Google have been keen to develop custom chips to improve the performance of their data centers. Meta started its chip program in 2023, when it introduced the Meta Training and Inference Accelerator (MTIA), a family of custom-built silicon chips designed to run its AI workloads efficiently.

The MTIA 300, which Meta will use to train ranking and recommendation models, is already in production, Meta said. The other planned chips, the MTIA 400, 450, and 500, will be used mainly for generative AI inference in production, it said.