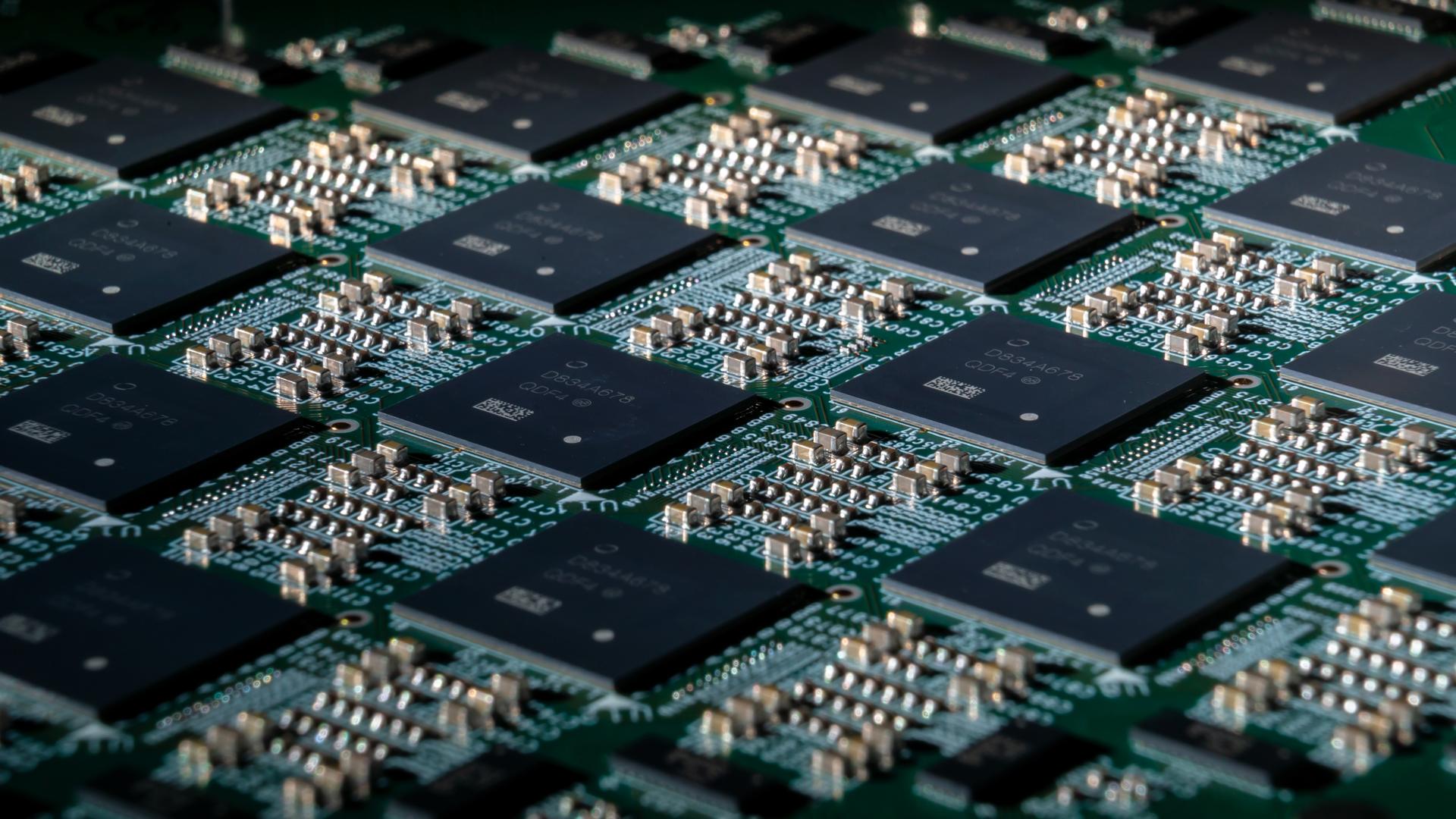

The transition reflects a deeper move from optimizing individual components to engineering entire systems for scalability and efficiency, said Sanchit Vir Gogia, chief analyst at Greyhound Research.

“Compute, memory behavior, interconnect bandwidth, and workload orchestration are being engineered together,” Gogia said. “Even physical design choices such as rack modularity, serviceability, and assembly efficiency are now part of performance engineering. Infrastructure is beginning to resemble an appliance at scale, but one that operates at extreme density and complexity.”

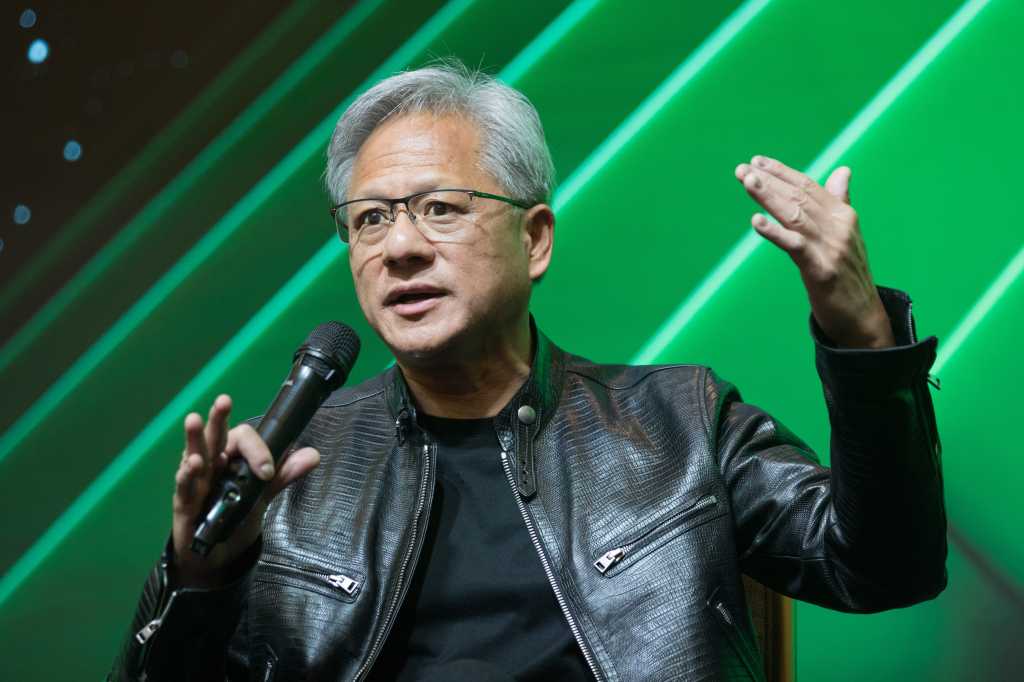

Industry observers said rack-scale systems such as Nvidia’s NVL72, together with open standards such as OCP Open Rack, are enabling more flexible pooling and orchestration of infrastructure resources for AI and machine learning workloads.

“I am also seeing other operators are increasingly adopting chip-to-grid strategies, integrating onsite power generation (microgrids, batteries), advanced cooling technologies, and co-packaged optics to effectively manage power spikes, reduce conversion losses, and support rack densities exceeding 100kW,” said Franco Chiam, VP of Cloud, Datacenter, Telecommunication, and Infrastructure Research Group at IDC Asia Pacific.

“This collective industry response to adapt to the needs for higher power and thermal demands is further reinforced by leading vendors and hyperscalers aligning around open standards, facilitating scalable, gigawatt-class datacenter deployments,” Chiam added.

Networking takes center stage

Networking is emerging as a central component of AI infrastructure, as platforms such as Vera Rubin place greater emphasis on how data moves across systems rather than treating connectivity as a supporting layer.