The Data Center as Token Factory

If there was one line of thinking that defined the session, it was Huang’s insistence that the industry must stop thinking about computers as systems for data entry and retrieval.

That, he said, is the old paradigm. The new one is a “token manufacturing system.”

That phrase landed because it compresses a lot of Nvidia’s strategy into a single mental model. In this view, the modern data center is no longer just a warehouse of servers or a cloud abstraction layer. It is a factory, and the unit of output is increasingly the token.

For Data Center Frontier readers, this is a familiar direction of travel, but Huang pushed it further than most CEOs do. He repeatedly tied Nvidia’s roadmap to token throughput, token economics, and performance per watt. He is clearly trying to establish a new baseline metric for AI infrastructure value: not raw capacity, but how much useful intelligence a facility can produce from a fixed power envelope.
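To make the "fixed power envelope" framing concrete, here is a minimal back-of-the-envelope sketch in Python. Every figure in it (facility size, PUE, watts per accelerator, tokens per second per accelerator) is a hypothetical placeholder chosen for illustration, not a number from the briefing or from any Nvidia specification, and the function names are our own.

```python
def tokens_per_second(facility_mw, pue, watts_per_gpu, tokens_per_sec_per_gpu):
    """Estimate aggregate token throughput for a power-limited facility.

    facility_mw: total utility power available to the site (megawatts)
    pue: power usage effectiveness (total facility power / IT power)
    watts_per_gpu: all-in IT power per accelerator (hypothetical)
    tokens_per_sec_per_gpu: sustained throughput per accelerator (hypothetical)
    """
    it_watts = facility_mw * 1_000_000 / pue       # power left for IT load
    gpu_count = int(it_watts // watts_per_gpu)     # accelerators that fit the envelope
    return gpu_count * tokens_per_sec_per_gpu


def tokens_per_joule(facility_mw, pue, watts_per_gpu, tokens_per_sec_per_gpu):
    """Tokens produced per joule of total facility energy."""
    total_watts = facility_mw * 1_000_000
    return tokens_per_second(
        facility_mw, pue, watts_per_gpu, tokens_per_sec_per_gpu
    ) / total_watts


# Two hypothetical hardware generations inside the same 100 MW envelope.
gen_a = tokens_per_second(100, 1.25, watts_per_gpu=1000, tokens_per_sec_per_gpu=500)
gen_b = tokens_per_second(100, 1.25, watts_per_gpu=1200, tokens_per_sec_per_gpu=1000)
print(f"Generation A: {gen_a:,} tokens/s")
print(f"Generation B: {gen_b:,} tokens/s")
```

In this toy comparison the second generation draws more power per accelerator, so fewer units fit in the envelope, yet the facility produces more tokens overall. That is the sense in which performance per watt, rather than unit count, becomes the governing metric.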

That point also surfaced in his discussion of Grace and Vera CPUs. Huang’s argument was not that Nvidia intends to win every classical CPU market. It was that traditional measures such as cores per dollar are insufficient in AI data centers where the real economic risk is leaving extremely valuable GPUs idle.

In other words, the CPU matters because it must move work fast enough to keep the GPU estate productive. In a power-limited, AI-heavy environment, the purpose of the CPU changes. It is no longer optimized for the old hyperscale rental model. It is optimized for keeping the token factory fed.

That is a subtle but major shift. It suggests that the next-generation AI data center will be increasingly engineered around the productivity of the overall system rather than around legacy component economics.
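The idle-GPU argument above can be sketched as simple arithmetic. The Python snippet below compares two hypothetical host CPU options by cost per million tokens; the hourly costs, peak throughput, and utilization figures are all invented for illustration and are not drawn from Huang's remarks or from any published pricing.

```python
def cost_per_million_tokens(gpu_cost_per_hour, cpu_cost_per_hour,
                            peak_tokens_per_sec, gpu_utilization):
    """Blended hourly cost divided by the tokens actually produced in that hour.

    gpu_utilization is the fraction of time (0 to 1) the GPU is kept busy,
    which in this sketch depends on how quickly the CPU can feed it work.
    """
    tokens_per_hour = peak_tokens_per_sec * gpu_utilization * 3600
    hourly_cost = gpu_cost_per_hour + cpu_cost_per_hour
    return hourly_cost / tokens_per_hour * 1_000_000


# Hypothetical option 1: cheaper CPU that leaves the GPU waiting 40% of the time.
cheap_cpu = cost_per_million_tokens(
    gpu_cost_per_hour=4.00, cpu_cost_per_hour=0.20,
    peak_tokens_per_sec=1000, gpu_utilization=0.60,
)

# Hypothetical option 2: costlier CPU that keeps the GPU busy 90% of the time.
fast_cpu = cost_per_million_tokens(
    gpu_cost_per_hour=4.00, cpu_cost_per_hour=0.50,
    peak_tokens_per_sec=1000, gpu_utilization=0.90,
)

print(f"Cheaper CPU: ${cheap_cpu:.2f} per million tokens")
print(f"Faster CPU:  ${fast_cpu:.2f} per million tokens")
```

Under these assumed numbers, the more expensive CPU yields the lower cost per token because the GPU dominates the bill and utilization dominates the output. A cores-per-dollar comparison would have pointed the other way.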

Nvidia as Ecosystem Investor

Another important section of the briefing came when Huang was asked about Nvidia helping finance customer data center buildouts.

His answer was strikingly direct.

Yes, Nvidia is financing parts of the ecosystem, he said, and yes, that includes companies like CoreWeave, Nscale and Nebius. He described those bets as low-risk because Nvidia sees demand pipelines before others do and can identify where capacity will be needed.

That deserves attention well beyond financial coverage.

For infrastructure observers, it is further evidence that Nvidia is operating as a market shaper. The company is not content simply to sell hardware into whatever capacity emerges. It is actively helping bring capacity into being.

That has consequences across the AI infrastructure stack. It means Nvidia is using its balance sheet, forecasting visibility, and technical influence to accelerate both upstream supply chain readiness and downstream AI factory deployment. Later in the session, Huang explicitly described the company as constantly managing both directions: looking upstream at photonics, memory, packaging and manufacturing readiness, and downstream at land, powered shell, developers and future consumption.

That is not vendor behavior in the narrow sense. It is ecosystem orchestration.

And it tracks with what DCF readers are already seeing in the field. The AI buildout is no longer a simple buyer-seller transaction between cloud operator and equipment provider. It is a coordinated industrial campaign spanning developers, landlords, utilities, manufacturers, financiers and software providers.

Huang’s comments effectively confirmed that Nvidia now sees itself at the center of that campaign.

Networking, Optics and the Infrastructure Depth of the AI Buildout

For anyone still inclined to think of Nvidia primarily as a GPU story, the briefing offered a strong corrective.

Huang repeatedly emphasized that Nvidia’s opportunity extends beyond accelerators to networking, silicon photonics, storage, CPUs and software-defined factory design. At one point he noted that the trillion-dollar visibility figure he cited applies only to Blackwell and Vera Rubin through 2027 and does not include other categories such as standalone CPUs, Groq, storage systems, BlueField, or later architectures.

That was not just a financial clarification. It was a statement about how much broader Nvidia believes the AI infrastructure opportunity has become.

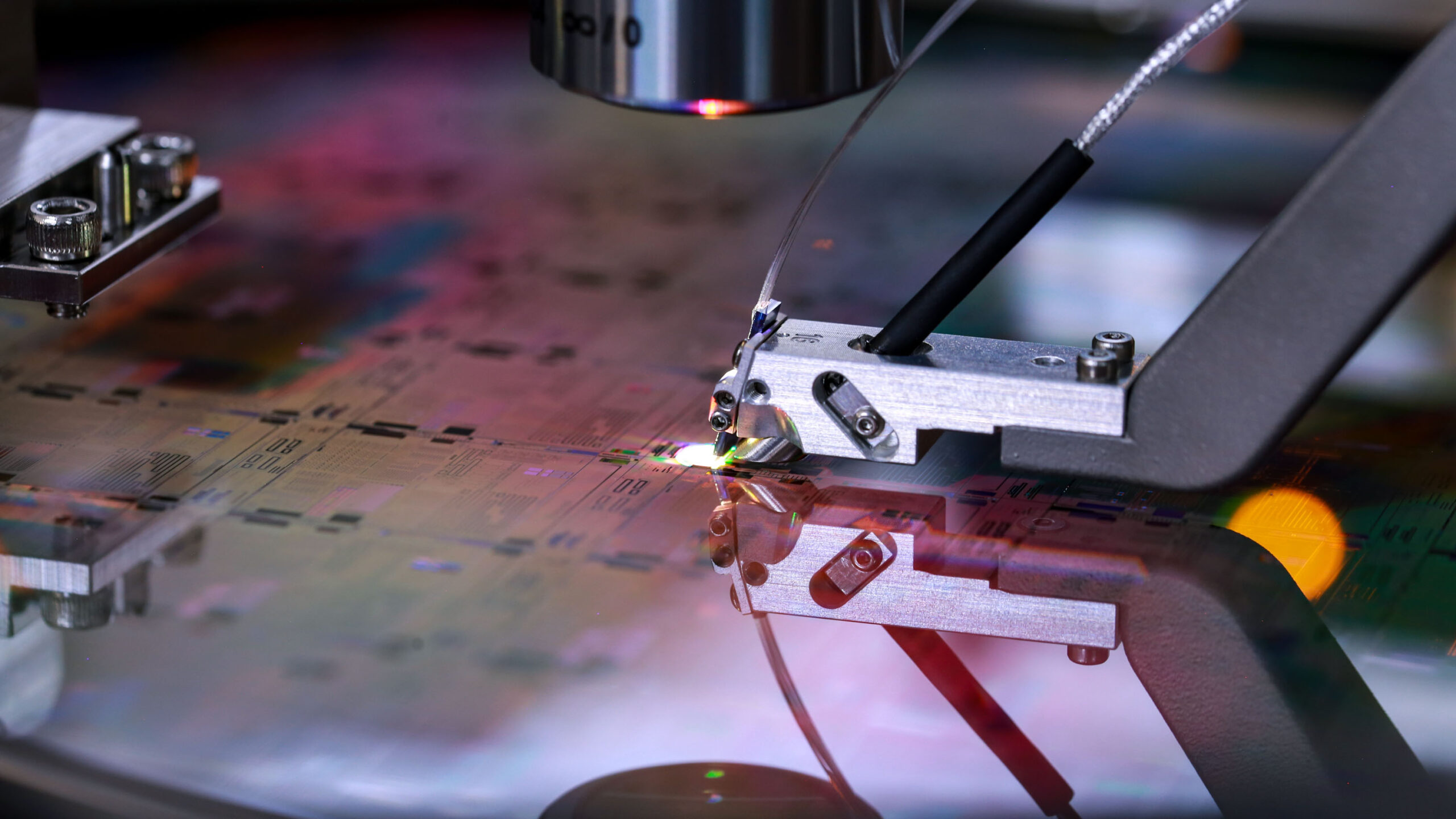

His comments on co-packaged optics were especially notable. Huang said Nvidia and TSMC had co-invented key technology for integrating electronics and silicon photonics and had filed roughly 100 patents across the supply chain. He described Nvidia as representing the vast majority of TSMC’s relevant co-packaged optics process today and said production ramping is underway.

For data center planners, that matters because the optics story is no longer adjacent to the AI buildout. It is becoming central to it. If AI systems continue scaling outward and upward, then photonics, packaging, and interconnect density become strategic bottlenecks rather than technical footnotes.

The same goes for storage. Huang went out of his way to say that Nvidia’s storage-related work is not even included in the trillion-dollar framing, even though he sees AI driving a major redesign of storage performance requirements. As AI systems become much faster at consuming and using data, the storage layer must change with them.

Again, the picture that emerges is not of a faster chip cycle, but of a wholesale redesign of data center architecture around AI workloads.

China, Manufacturing, and the Supply Chain Question

The geopolitical moments of the session were less expansive than some may have hoped, but they were still significant.

Huang said Nvidia had received licenses for many customers in China for H200 systems and had also received purchase orders, adding that manufacturing was being restarted. That was one of the clearest pieces of hard news in the session.

He also characterized President Trump’s posture as one of preserving U.S. leadership in access to Nvidia’s best technology while still allowing the company to compete globally rather than concede markets unnecessarily.

On Taiwan and global manufacturing, Huang’s tone was measured. He said the goal of moving 40% of Taiwan chip capacity to the United States would be difficult to achieve in the near term, largely because demand is growing so fast even as new fabs come online.

That answer, too, is useful for the data center industry. The pressure to regionalize manufacturing and de-risk the supply chain is real, but it exists alongside another force that may be even stronger: explosive growth in AI infrastructure demand. The industry is trying to add resilience without slowing expansion, and that is a difficult balancing act.

The Little Things: Silence, Cellphones, Work and the Mythology of Jensen

One reason Huang’s media sessions are so revealing is that they capture the managerial culture behind the public thesis.

At one point, a phone went off. Huang stopped and called it out. At Nvidia, he said, everyone knows the rule: no chimes, no vibration, complete silence in meetings.

It was a small moment, but an instructive one. For all the futurism and scale, Huang runs Nvidia with a founder’s intolerance for sloppy signals.

That same mentality showed up in his answer about work and AI. Asked how tools like OpenClaw were changing daily life, Huang did not say AI was making work easier. He said it was making everything faster and, in his own case, making him busier. Results come back sooner, projects multiply, and the executive remains in the critical path more often.

This is worth noting because it runs against a simplistic automation narrative. Huang’s view is not that AI will empty the office. It is that AI will compress cycle times so aggressively that people capable of making decisions may find themselves under more pressure, not less.

He returned to that theme near the end, in a surprisingly philosophical answer to a question about suffering. Growth, preparation, discomfort, anxiety, the work of getting better: in his telling, that is the cost of striving to do something meaningful. There was a little founder mythology in the answer, naturally, but also a coherent ethic. Huang appears to believe that strain is not an unfortunate byproduct of ambition. It is part of the process.