The defining feature of our current data center cycle isn’t a shortage of customers or capital; it’s a shortage of power that can actually be delivered on time. In the space of three years, large‑load interconnection queues have gone from a planning tool to the main reason otherwise viable AI campuses are missing their deployment windows.

Multi‑year delays for large loads are quickly becoming the norm, not the exception, in major markets, turning what should be a sprint to deploy AI into a long and uncertain wait.

At the grid level, the same pattern is visible in the queues. Across U.S. markets, the interconnection queue itself is now a primary source of delay. Regional operators from PJM to ERCOT and NYISO report steep increases in both the number and size of large‑load requests, with data centers and other energy‑intensive digital infrastructure accounting for a growing share of new demand (https://insidelines.pjm.com/pjm-board-outlines-plans-to-integrate-large-loads-reliably/, https://www.nyiso.com/-/energy-intensive-projects-in-nyiso-s-interconnection-queue/, https://www.latitudemedia.com/news/ercots-large-load-queue-has-nearly-quadrupled-in-a-single-year/). In practice, that means more projects are being told that meaningful capacity will not be available on the timeline their customers expect, forcing them into redesigns, phased power ramps, or alternative power strategies.

Time, in other words, has become the scarcest resource in the data center economy. The same 60 MW AI facility that looks attractive at a 17.1% IRR when delivered on schedule can see its returns fall to 12.6% with a three‑month delay and to 8.8% with a six‑month delay, nearly halving its projected return (https://www.thefastmode.com/expert-opinion/47210-what-we-learned-in-2025-about-data-center-builds-why-delays-will-persist-in-2026-without-greater-visibility). That is why the metric that matters most in this industrial revolution is speed‑to‑power: how quickly real, reliable megawatts can be made available at the fence line, not how many gigawatts exist on slides or in press releases. That metric will do more to determine who wins than any short‑term race to buy chips or secure logos. The operators who escape the bottleneck will be the ones who stop treating the grid as their only path to power and start treating energy as a first‑order design choice, not a background assumption.
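To make the mechanics behind that IRR erosion concrete, here is a minimal sketch of a delay sensitivity in Python. Every input (monthly capex, build duration, carrying cost, net revenue, operating life) is a hypothetical placeholder chosen for illustration; they are not the assumptions behind the 17.1%, 12.6%, and 8.8% figures cited above, and the size of the drop will differ with real project inputs. The point is only the direction: pushing revenue out while carrying costs continue lowers the return.

```python
"""Illustrative delay-vs-IRR sketch. All cash-flow inputs are hypothetical."""


def monthly_cash_flows(delay_months, capex_per_month=25.0, build_months=24,
                       carry_cost_per_month=3.0, net_revenue_per_month=10.0,
                       operating_months=180):
    """Monthly cash flows in $M (negative = outflow) for a given power delay."""
    build = [-capex_per_month] * build_months
    # Facility is built but not energized: costs continue, revenue does not.
    waiting = [-carry_cost_per_month] * delay_months
    operating = [net_revenue_per_month] * operating_months
    return build + waiting + operating


def npv(monthly_rate, flows):
    """Net present value of monthly flows at a monthly discount rate."""
    return sum(cf / (1.0 + monthly_rate) ** t for t, cf in enumerate(flows))


def annual_irr(flows, lo=0.0, hi=1.0, tol=1e-10):
    """Find the monthly IRR by bisection, then convert to an annual rate."""
    if not (npv(lo, flows) > 0 > npv(hi, flows)):
        raise ValueError("IRR is not bracketed by the search interval")
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if npv(mid, flows) > 0:
            lo = mid   # rate too low: NPV still positive
        else:
            hi = mid   # rate too high: NPV negative
    return (1.0 + lo) ** 12 - 1.0


for delay in (0, 3, 6):
    irr = annual_irr(monthly_cash_flows(delay))
    print(f"{delay}-month delay: annualized IRR = {irr:.1%}")
```

Bisection is used here only to keep the sketch dependency-free; a real model would carry far more detail (phased ramps, debt service, contract penalties) and would likely rely on an established financial library.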

The Fifth Industrial Revolution Meets a 20th‑Century Grid

Every industrial revolution has been powered as much by infrastructure as by ideas. Steam needed coal and railroads; mass production needed cheap electricity and high‑voltage transmission; the digital revolution needed a dense web of fiber and reliable baseload power. Each wave demanded entirely new energy systems and massive build‑outs of physical infrastructure. AI, often described as a fifth industrial revolution, is no different—except this time the breakthrough is landing on a grid that was never designed for this kind of load, at this kind of speed.

For most of the last two decades, data center development proceeded as if power were an infinite, fungible input: if you could find land, fiber, and tax incentives, the electrons would somehow follow. That assumption is now colliding with the reality of multi‑year interconnection queues, constrained transmission corridors, and long‑lead equipment that cannot be willed into existence by demand alone. Yet even as AI roadmaps accelerate, interconnection reform, permitting modernization, and domestic equipment build‑out have moved at a far slower pace, lagging well behind demand curves.

The result is a profound mismatch. The industry is behaving like it is in the middle of an infrastructure boom (AI investment, state‑level incentives, and hyperscale build‑out plans) while the processes that govern what can actually be built (queue studies, zoning and permitting, transformer manufacturing) are still calibrated for the previous era. In the gaps between those timelines, data center projects are stalling. We are paying for this mismatch in multi-year delays and stranded projects. DCD recently reported that a one-month delay on a 60 MW facility could cost $14.2 million, underscoring how quickly these structural frictions translate into stranded capital and eroded returns (https://www.datacenterdynamics.com/en/whitepapers/preventing-multimillion-dollar-data-center-losses-through-reporting/).

Where Projects Are Stuck: Queues, Permits, and Steel

For a large AI campus today, the hard part is no longer how many racks you can fit into a shell; it is everything that has to happen upstream of the switchgear. Once a campus crosses into loads of hundreds of megawatts, new data centers stop being “just another ratepayer” and start triggering transmission‑level impacts: new substations, reconductored lines, and sometimes entirely new high‑voltage corridors. Each of those requirements pulls the project into the same machinery that governs any grid‑scale asset: multi‑stage studies, cost‑allocation fights, and a queue that is already congested with generation.

In PJM, interconnection wait times for large loads such as data centers have stretched beyond eight years in some cases, leaving tens of gigawatts of planned capacity unable to access grid power. In New York, the grid operator has reported 48 large‑load interconnection requests totaling around 12 GW—most from data center and similar digital infrastructure projects—underscoring how dozens of builds are stuck in line rather than under construction (NYISO reports and queue data). Texas is facing the same pattern at a different scale: ERCOT’s large‑load interconnection queue has swelled to about 226 GW, nearly quadruple the prior year’s level, with roughly 77% of that tied to large data centers targeting grid connections by 2030 (https://www.latitudemedia.com/news/ercots-large-load-queue-has-nearly-quadrupled-in-a-single-year/). Queue position, and the uncertainty around when it will translate into a notice to proceed, has become a central scheduling risk rather than a back‑office detail.

Queue status, however, is only one part of where projects bog down. Zoning, land‑use approvals, and environmental permits have become another major source of friction, as communities and regulators confront a new class of industrial‑scale digital infrastructure. Local debates over water use, visual impact, noise, emergency‑response obligations, and who should pay for upstream grid upgrades are now common features of data center hearings from Northern Virginia to the Pacific Northwest (https://www.thefastmode.com/expert-opinion/47210-what-we-learned-in-2025-about-data-center-builds-why-delays-will-persist-in-2026-without-greater-visibility).

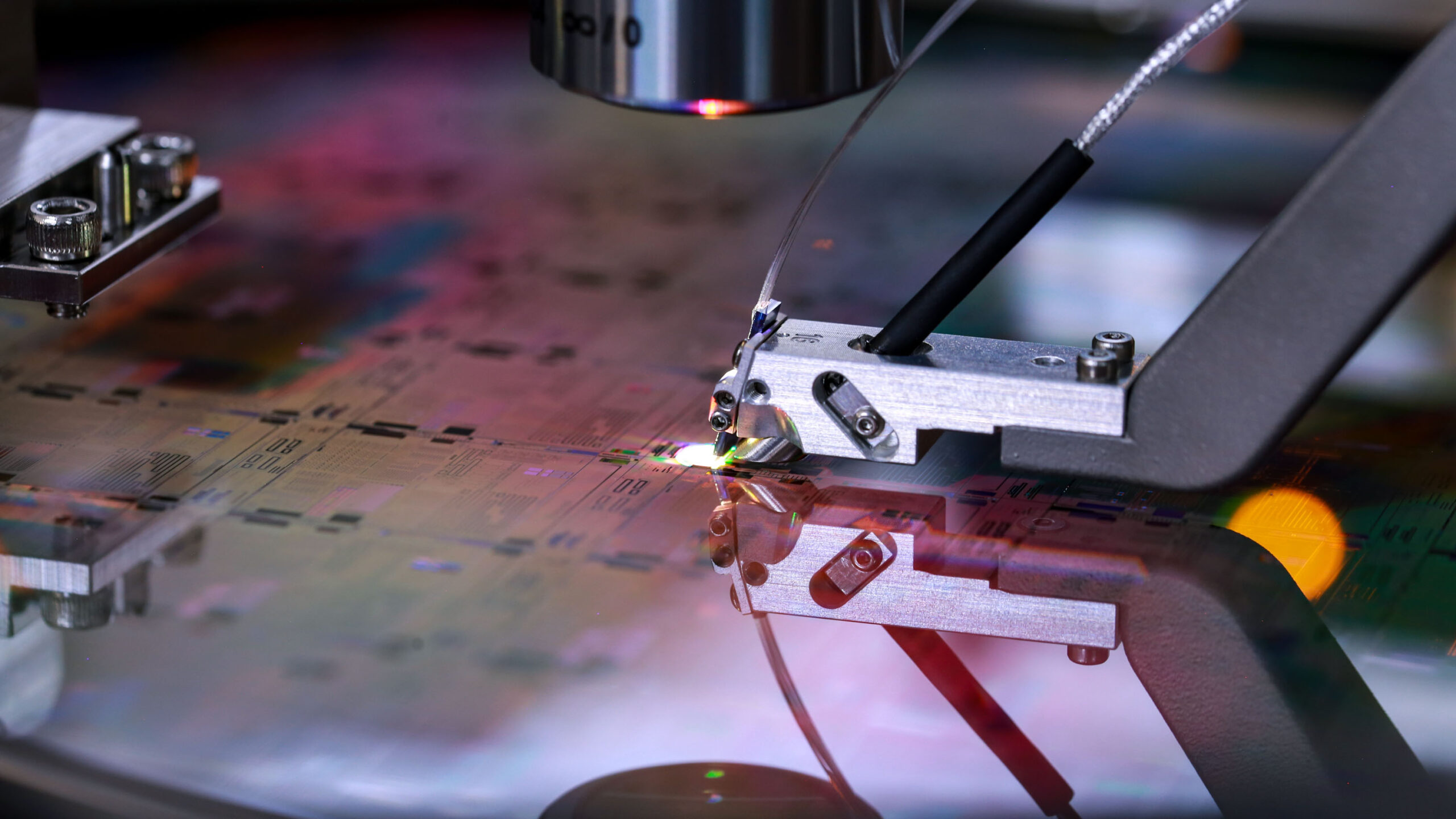

Even when a project clears those hurdles, it still has to contend with a constrained global supply chain for the components that physically connect it to the grid. Large power transformers, generator step‑up units, and high‑voltage breakers now sit at the center of a well‑documented bottleneck, with typical lead times for big transformers in the 80‑ to 120‑week range and some transmission‑class units stretching toward three or four years in tight markets (https://www.utilitydive.com/news/electric-transformer-shortage-nrel-niac/738947/, https://www.woodmac.com/news/opinion/supply-shortages-and-an-inflexible-market-give-rise-to-high-power-transformer-lead-times/). That means a campus can have land, customers, capital, and permits lined up and still sit idle because the “iron heart” of its interconnection is somewhere in a manufacturing backlog, halfway around the world.

Demand Shock: AI Data Centers as a New Kind of Load

What makes this wave different is the nature of the load. Traditional enterprise and cloud data centers grew in relatively predictable increments that grid planners could smooth over time. AI data centers arrive in chunks that look more like aluminum smelters or steel mills: 100 MW, 300 MW, 1 GW per campus, often on a compressed timeline. For system planners, that turns data centers from ordinary industrial ratepayers into grid‑scale assets that can reshape local load duration curves and reserve margins. Planning has had to shift accordingly.

BloombergNEF projects that U.S. data center power demand could reach 106 GW by 2035—more than double today’s operating capacity—with over a quarter of recently announced U.S. data center projects larger than 500 MW (https://www.utilitydive.com/news/us-data-center-power-demand-could-reach-106-gw-by-2035-bloombergnef/806972/, https://www.publicpower.org/periodical/article/data-center-power-demand-us-hits-106-gw-2035-bloombergnef). That growth is not confined to Northern Virginia or a handful of legacy hubs; maps of announced and under‑construction capacity show gigawatts of load spreading into exurban and rural regions, often served by transmission infrastructure that was never designed for clusters of multi‑hundred‑megawatt digital plants.

Grid operators are scrambling to keep up. PJM’s 2026 outlook projects that summer peak demand could rise to approximately 222 GW by 2036, about 66 GW above current levels, with data centers a major driver of that growth (https://insidelines.pjm.com/pjms-updated-20-year-forecast-continues-to-see-significant-long-term-load-growth/). At the federal level, the Federal Energy Regulatory Commission (FERC) has opened a dedicated rulemaking—RM26‑4—on interconnection of large loads, seeking to establish a consistent process and standards for customers with 20 MW or more of demand (https://www.ferc.gov/rm26-4). The fact that data centers now require bespoke regulatory categories is a signal in itself: the demand shock from AI has outgrown the assumptions baked into the old rulebook.

Supply Shock: Generation That Can’t Get to the Fence Line

If AI data centers are a demand shock, offshore wind and other large‑scale generation projects are the supply shock—but they are running into many of the same structural bottlenecks, just in reverse. Offshore wind along the U.S. East Coast depends on a fragile chain of federal permits, specialized vessels and ports, and interconnections into congested coastal grids. When any one of those pieces falters, gigawatts can sit idle on paper.

In late 2025, the Department of the Interior issued a stop‑work order on several major offshore wind projects—including Vineyard Wind, Revolution Wind, Coastal Virginia Offshore Wind, Sunrise Wind, and Empire Wind—citing national security and permitting concerns (https://www.doi.gov/pressreleases/trump-administration-protects-us-national-security-pausing-offshore-wind-leases). Those decisions effectively paused multiple gigawatts of contracted clean energy and put billions of dollars of investment in limbo while developers, states, and the federal government argued in court and in the press (https://www.nytimes.com/2026/01/10/climate/billions-at-stake-in-the-ocean-as-trump-throttles-offshore-wind-farms.html). Early rulings in 2026 allowed some projects—such as Revolution Wind and Empire Wind—to resume installation, but the episode underscored a new reality: infrastructure schedules are now intertwined with litigation timelines as much as engineering ones (https://www.spencerfane.com/insight/revolution-wind-may-proceed-with-its-offshore-wind-energy-project-the-trump-administration-loses-another-court-battle/, https://www.equinor.com/news/20260115-empire-wind-granted-preliminary-injunction).

Offshore wind is not alone. New gas plants, battery storage hubs, and even brownfield repowers are facing their own interconnection and siting bottlenecks. One recent analysis found nearly 2,600 GW of generation and storage capacity (almost double the size of the existing U.S. grid) waiting in interconnection queues nationwide, with solar and battery projects making up the bulk of that backlog. In PJM, a one‑time Reliability Resource Initiative was needed to fast‑track 50 shovel‑ready generators to cover load growth and retirements, and most of the projects selected for that fast lane were gas plants, not renewables, simply because they could clear permitting and interconnection hurdles more quickly in the current system (https://www.cfr.org/articles/us-interconnection-challenge-why-renewables-are-stuck-line).

Transmission is its own constraint. Multiple studies show U.S. transmission build‑out is lagging far behind what is needed to balance regional supply and demand, even before AI’s additional load is fully accounted for (https://cleanenergygrid.org/new-report-reveals-u-s-transmission-buildout-lagging-far-behind-national-needs/). FERC’s recent Orders 1920 and 1977 push transmission providers toward 20‑year planning horizons and attempt to streamline federal backstop siting, but they cannot erase the reality that every new long‑distance line still has to navigate state‑by‑state approvals, local opposition, and the same transformer bottlenecks that data centers and power plants are competing over (https://www.whitecase.com/insight-alert/transmission-planning-reforms-finalized-ferc-order-no-1920). In practice, that means many of the clean energy and gas projects being promised to serve AI loads are located in places where they cannot reach the data center clusters without years of intermediate transmission upgrades.

For data center operators, this has a double impact, because the supply they are counting on is experiencing its own stalling effect. Offshore wind, new gas plants, storage projects, and transmission expansions are all vying for the same scarce transformer manufacturing slots, construction crews, and court dockets. Both the demand side (AI load) and the supply side (new generation) are hitting the same constraints in build throughput—permitting, interconnection, and long‑lead equipment—just from opposite directions. When both the loads and the resources that could serve them are constrained by build throughput, planning assumptions that looked balanced on paper can break down quickly in practice.

Policy In Catch‑Up Mode

Policymakers are not ignoring these signals; they are just moving on a different timeline. FERC’s Order 2023, finalized in 2023 and being implemented across regions, aims to unclog generator interconnection queues by imposing cluster studies, stricter project readiness requirements, and firm deadlines on transmission providers (https://www.ferc.gov/explainer-interconnection-final-rule). In theory, that should help move renewable, storage, and gas projects through the system more predictably, eventually easing the supply‑side bottleneck.

On the demand side, RM26‑4 is FERC’s attempt to do something similar for large loads—data centers, hydrogen hubs, and other industrial customers with tens or hundreds of megawatts of demand (https://www.ferc.gov/rm26-4). The docket has attracted dozens of comments from utilities, grid operators, industrial customers, and consumer advocates, debating standards for when a load becomes “large,” how to treat behind‑the‑meter resources, and who should pay for the network upgrades triggered by these connections (https://www.monitoringanalytics.com/filings/2025/IMM_Reply_Comments_re_ANOPR_Docket_No_RM26-4_20251205.pdf). The answers will shape not just how fast projects move through studies, but also whether the economics pencil out for campuses that must shoulder large interconnection bills.

Regional grid operators are making their own adjustments. PJM’s board has outlined new processes to integrate large loads more reliably, including scenario‑based planning for data center clusters and a focus on co‑located generation and load as a mainstream option rather than an exception (https://insidelines.pjm.com/pjm-board-outlines-plans-to-integrate-large-loads-reliably/, https://www.duanemorris.com/alerts/ferc_mandates_new_transmission_services_accomodate_data_centers_1225.html). FERC has also ordered PJM to revise its tariff to better accommodate arrangements where significant generation and load share an interconnection point—precisely the kind of configuration emerging at AI campuses with on‑site power (https://www.perkinscoie.com/en/insights/ferc-orders-pjm-to-revise-tariff-to-accommodate-co-located-arrangements.html).

The problem is that regulatory reform timelines are measured in years, while AI‑driven site selection often happens in months. By the time rulemaking is finalized, a new wave of projects may already be stuck in the old rules, prolonging the startup phase for the very customers those reforms are supposed to help.

Beyond the Grid‑Only Mindset: Bring Your Own Power

In this industrial cycle, an uncomfortable truth is emerging: operators who arrive expecting the grid alone to solve their power problem will wait the longest. The ones that move fastest will be those that show up with a credible power plan—generation, fuel, and interconnection—baked into the project from day one. That logic went from industry subtext to national talking point when President Donald Trump used his 2026 State of the Union address to lay out what he called a “ratepayer protection pledge,” telling major tech companies that they would be expected to build or finance their own power plants for AI data centers so that households are not stuck paying for grid upgrades. In his words, “they’re going to produce their own electricity”—a political framing that formalizes a trend many developers were already moving toward.

For some operators, that shift means pairing data centers with existing generation rather than waiting for greenfield plants to be approved. One of the clearest examples is Amazon and Talen Energy: through a long‑term agreement, Talen will supply up to 1,920 MW of carbon‑free power from the Susquehanna nuclear station in Pennsylvania to support AWS data centers, while the two companies explore uprates and potential small modular reactors at the site (https://www.utilitydive.com/news/talen-amazon-aws-susquehanna-nuclear-data-centert/750440/, https://energydigital.com/articles/amazons-nuclear-energy-deal). That structure effectively treats the nuclear plant and the cloud campus as a single integrated system, giving Amazon a dedicated supply with a clear development path and giving Talen a long‑term revenue stream and a platform for future expansion. It is not quite “off‑grid,” since transmission is still involved, but it is much closer to a bring‑your‑own‑power posture than a traditional retail service agreement.

Others are pursuing firmed hybrid models designed around the data center as an anchor load, combining natural gas, storage, and, in some cases, renewables. PJM’s recent rules explicitly create a faster pathway for combined data center and power‑generation projects, with Reuters reporting that the new framework tends to favor on‑site or adjacent gas plants because they can clear permitting and interconnection more quickly than many greenfield renewables in today’s environment (https://www.reuters.com/business/energy/us-grid-rules-faster-data-centers-favor-on-site-gas-plants–reeii-2026-01-27/). Under that approach, large loads can either lean on their own generation through an expedited connection or enter into “connect‑and‑manage” arrangements that require them to curtail during grid stress, effectively trading some flexibility for earlier access to power.

The most ambitious version of this trend is true bring‑your‑own‑power (BYOP): campuses where the data center and a dedicated power plant are planned, permitted, and financed as one project, with the grid seen as a secondary outlet rather than the primary lifeline. Several AI‑focused developers are now actively marketing off‑grid or near‑grid concepts that pair gas‑fired generation directly with high‑density compute clusters, explicitly arguing that building an independent, “shadow” power system is faster and more controllable than navigating standard interconnection queues (https://finance-commerce.com/2026/02/off-grid-ai-data-centers-natural-gas-power/). In these models, the utility interconnection is still valuable—for backup, for selling surplus, and for long‑term optionality—but it sits alongside on‑site power rather than dictating whether the project can proceed.

This is not an argument against the grid or against clean energy. It is a recognition that, in an AI‑first world, time to power is time to market, and the queue alone cannot deliver the capacity the industry needs on the timelines customers expect. For the next wave of AI data centers, the power plant and the campus will increasingly be designed as one story, not two.

Learning from COVID: What We Should Have Built Already

COVID was, in many ways, a dress rehearsal for today’s constraints. The pandemic revealed just how brittle global supply chains and construction logistics were, especially for heavy equipment like transformers, turbines, and switchgear. It also showed how quickly demand patterns could shift, forcing utilities and grid operators to contend with new load shapes and uncertainties. That should have triggered a concerted push to regionalize manufacturing, streamline permitting, and modernize grid planning assumptions.

COVID should have shattered any illusion of infrastructure preparedness. Factory shutdowns, shipping delays, and a wave of early warnings about transformer scarcity and workforce limits exposed how fragile the physical backbone of the grid really was. By any measure, it was a useful readiness test, and it also revealed how much more quickly digitization needed to occur. Instead, much of the response focused on restoring the old normal. By 2024, lead times for large generation step‑up transformers had at least doubled from pre‑pandemic norms of 30–60 weeks to around 120–130 weeks on average, and analysts warned that domestic manufacturing capacity was still nowhere near keeping pace with rising demand from data centers, electrification, and clean energy projects (https://www.nrucfc.coop/content/solutions/en/stories/energy-tech/transformers-are-facing-major-cost–supply-chain-pressures.html, https://www.woodmac.com/press-releases/power-transformers-and-distribution-transformers-will-face-supply-deficits-of-30-and-10-in-2025/). Imports now provide an estimated 80 percent of U.S. power transformers, leaving critical grid projects exposed to geopolitical shocks and trade disputes on top of basic manufacturing bottlenecks.

Let’s face it: COVID was six years ago. That is about 60% of the roughly ten years it takes to plan, permit, and build a major new high‑voltage transmission line in the United States, where full project timelines routinely run eight to twelve years from conception to completion (https://energy.sustainability-directory.com/learn/what-is-the-typical-timeline-for-planning-permitting-and-constructing-a-major-, https://www.publicadvocates.cpuc.ca.gov/-/media/cal-advocates-website/files/press-room/reports-and-analyses/230612-caladvocates-transmission-timelines.pdf). In that window, the country added only a trickle of new high‑voltage lines: Americans for a Clean Energy Grid estimates that from 2020 to 2023, the U.S. built an average of about 350 miles of new high‑voltage transmission per year, roughly one‑fifth of the 1,700 miles per year added in the early 2010s and well below the ~5,000 miles per year DOE now says will be needed going forward (https://cleanenergygrid.org/fewer-new-miles-2024/, https://cleanenergygrid.org/new-report-reveals-u-s-transmission-buildout-lagging-far-behind-national-needs/).
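The ratios in that paragraph are easy to check. The short Python sketch below reproduces them from the figures quoted above; the only assumption added here is using the ten‑year midpoint of the eight‑to‑twelve‑year range as the "typical" timeline, and the variable names are illustrative.

```python
# Figures as quoted in the text; the midpoint is an assumption of this sketch.
years_since_covid = 6
timeline_low_years, timeline_high_years = 8, 12
timeline_mid_years = (timeline_low_years + timeline_high_years) / 2  # 10.0

# Share of a transmission project timeline that has elapsed since COVID.
share_of_midpoint = years_since_covid / timeline_mid_years
share_of_longest = years_since_covid / timeline_high_years
share_of_shortest = years_since_covid / timeline_low_years

# Average miles of new high-voltage transmission per year.
recent_miles_per_year = 350        # 2020 to 2023 average
early_2010s_miles_per_year = 1700  # early 2010s pace
needed_miles_per_year = 5000       # approximate DOE estimate of future need

recent_vs_2010s = recent_miles_per_year / early_2010s_miles_per_year
needed_vs_recent = needed_miles_per_year / recent_miles_per_year

print(f"Elapsed share of a 10-year timeline: {share_of_midpoint:.0%}")
print(f"Elapsed share across the 8-12 year range: "
      f"{share_of_longest:.0%} to {share_of_shortest:.0%}")
print(f"Recent build rate vs early 2010s: {recent_vs_2010s:.0%}")
print(f"Needed build rate vs recent: {needed_vs_recent:.1f}x")
```

Read this way, the recent build rate would have to rise roughly fourteenfold to match the pace DOE describes.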

We sensed the power shakeup. Have we done enough as a nation to address it? No. In my work supporting equipment providers in this space, I can also tell you that many did not do enough to dial up production. I saw many power distribution equipment companies shutter some of their production plants, even as lead times for their equipment grew into multi-year waiting lists. Others, like Kohler (now Rehlko), took the opportunity to expand their production or, like JST, launched their equipment into the market as a viable new competitor in power equipment. But these moves remain the exception, not the rule, relative to the scale of AI‑driven demand now arriving.

For the data center and AI ecosystem, the lesson should have been straightforward: if you plan to double or triple load in key regions over a decade, you must start building the supporting infrastructure early, diversify supply chains, and streamline the processes that stand between project conception and energization. But the policy and procurement apparatus did not move at the same speed as AI adoption. Instead, the industry raced ahead with hyperscale build‑out plans, while many of the underlying reforms—domestic transformer manufacturing, permitting modernization, interconnection overhaul—remained incremental.

That is why today’s delayed projects feel particularly frustrating. Unlike earlier industrial revolutions, this one arrived with a recent, vivid, and economically catastrophic reminder of what happens when infrastructure lags technology. The warnings were there; the choice not to build ahead of the next hurdle was society’s.

Moving From “Stalled” to “Under Construction”

The next phase of this story will be written by those willing to treat power as the first question, not merely one of the first four. For data center developers, that starts with flipping the usual site‑selection script: instead of finding land and then asking how much power might be available someday, the first filter becomes where firm capacity, or a credible path to it, can be made real within the deployment window. That can mean prioritizing sites near existing generation, pursuing long‑term offtake from plants that already hold interconnection rights, or designing campuses with explicit multi‑path strategies that combine grid supply, on‑site generation, and potential neighbor‑to‑neighbor energy arrangements. In this frame, the site isn’t just “good dirt”; it is a power strategy with a physical address.
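One way to picture that power‑first filter is as a simple screen over candidate sites. The Python sketch below is a hypothetical illustration, not an industry tool: the `PowerPath` and `Site` types, the site names, and every megawatt and month figure are invented for the example. It asks, for each site, how soon its combined power paths could deliver a target amount of firm capacity, and keeps only the sites that fit the deployment window.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PowerPath:
    """One route to firm power at a site (all values are hypothetical)."""
    name: str
    firm_mw: float
    months_to_energize: int  # gated by the slowest of queue, permits, equipment


@dataclass(frozen=True)
class Site:
    name: str
    paths: tuple


def months_to_target(site, target_mw):
    """Earliest month when the site's paths together reach target_mw, else None."""
    delivered = 0.0
    for path in sorted(site.paths, key=lambda p: p.months_to_energize):
        delivered += path.firm_mw
        if delivered >= target_mw:
            return path.months_to_energize
    return None


def screen_sites(sites, target_mw, window_months):
    """Names of sites that reach target_mw within the window, fastest first."""
    viable = []
    for site in sites:
        months = months_to_target(site, target_mw)
        if months is not None and months <= window_months:
            viable.append((months, site.name))
    return [name for _, name in sorted(viable)]


EXAMPLE_SITES = [
    Site("Site A (grid only)", (PowerPath("grid interconnection", 300, 60),)),
    Site("Site B (grid plus on-site gas)", (
        PowerPath("on-site gas", 200, 24),
        PowerPath("grid interconnection", 300, 60),
    )),
    Site("Site C (offtake from existing plant)", (
        PowerPath("existing-plant offtake", 250, 18),
    )),
]

# Which sites can deliver 200 MW of firm capacity within 30 months?
print(screen_sites(EXAMPLE_SITES, target_mw=200, window_months=30))
```

In this toy example the grid‑only site drops out because its single path arrives too late, which is the multi‑path argument in miniature. A real screen would weigh cost, fuel supply, permitting risk, and curtailment terms rather than a single months‑to‑energize estimate.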

Utilities and grid operators, in turn, have an opportunity to move from passive gatekeepers to proactive partners. Publishing hosting‑capacity‑style tools for large loads, clarifying how bring‑your‑own‑power projects will be evaluated, and setting transparent, tiered processes for 20‑MW, 100‑MW, and 500‑MW‑plus customers all help align expectations and reduce surprises (https://insidelines.pjm.com/pjm-board-outlines-plans-to-integrate-large-loads-reliably/). Some regions are beginning to show what this can look like: large‑load frameworks that distinguish between customers arriving with their own firm generation and those relying entirely on network upgrades, and public reporting that makes the composition and status of big‑load requests visible enough for developers to plan around. Those steps won’t eliminate interconnection queues, but they can make the time to a credible answer much shorter, even if the answer is that a different site or power configuration will be needed.

For policymakers, the task is to connect the dots between transmission and interconnection reforms and the reality of AI‑driven load. Order 2023, at its core, is about unclogging generator interconnection queues—cluster studies, readiness screens, and firm timelines so that new supply can move from applications to energization more predictably. RM26‑4 is the matching piece on the demand side, aiming to create clear, consistent standards for large loads seeking to connect at 20 MW and above: what studies they trigger, how quickly they are processed, and how costs are allocated. Layered on top of state‑level siting reforms, those federal rules can either remain abstract or be used explicitly to treat AI data centers and co‑located generation as strategic infrastructure, with aligned timelines for environmental review, interconnection studies, and cost‑allocation decisions.

The Fifth Revolution’s Coming Power Test

In a world where one grid region is evaluating more than 200 GW of large‑load requests and U.S. data center demand could reach 106 GW by 2035, the differentiator will not be who has the largest AI budget, but who can convert megawatts from a theoretical promise into energized, reliable capacity fastest. That is what speed‑to‑power really means in practice: a shared playbook where developers design for power first, utilities reward projects that solve as well as consume, and policymakers make sure the rules recognize AI data centers and co‑located generation as part of the backbone of the next industrial era, rather than as an afterthought at the edge of the grid.

Every previous industrial revolution has eventually been judged by whether its physical infrastructure managed to keep pace with its transformative potential. The fifth, which we are both operating within and actively building, will be no different. AI can only be as revolutionary as the substations, transformers, and generation that feed its clusters allow it to be, and that problem will not be fixed overnight.

Right now, too many projects are stalled in queues, courtrooms, and equipment backlogs. Whether “stalled” becomes the defining word of this era or just a painful, brief chapter will depend on how quickly our industry, utilities, and policymakers are willing to treat energy not as an afterthought, but as the central design constraint of the AI age.