Sasol Ltd. Chief Executive Officer Simon Baloyi is seeking a new path for South Africa’s second-largest polluter to reach its emissions target after doubling down on coal to run its fuel and chemicals production.

The Johannesburg-based company plans to boost the use of renewable energy to counter the growing dependence on coal, Baloyi said. The firm has faced increasing pressure to lower the emission of greenhouse gases – mainly from the production process at its Secunda plant, the world’s biggest single site for emissions.

Sasol is falling back on coal after encountering obstacles in its plan to pivot to natural gas and green hydrogen in its path to net zero by 2050. The return of Donald Trump, who has promised to help the coal industry, as US president may also dampen some criticism of companies using the dirtiest fossil fuel.

Sasol identifies itself as a coal-based company, which is “at the core of the South African economy” and needs to transition at a pace that works for the country, Baloyi said in an interview at Bloomberg’s Johannesburg office last week. Shutting operations to meet climate goals “for me does not make any logical sense at all,” he said.

The company has attracted criticism in the past from some of its biggest shareholders over its decarbonization plans. The company’s shares slipped as much as 3.6 percent in Johannesburg on Wednesday before paring losses. They have declined 42 percent over the past 12 months, the most on an index of the 40 biggest traded stocks in the city.

The outlook shares similarities with recent decisions by state-owned Eskom Holdings SOC Ltd., the only emitter bigger than Sasol, which uses coal for more than 80 percent of South Africa’s electricity production. The utility is running units beyond their slated retirement date in order to stabilize power supply following years of record blackouts that crimped the economy.

Sasol in 2021 pledged to reduce its emissions 30 percent by the end of the decade by substituting coal with more gas – delivered by pipeline from operations in neighboring Mozambique. The company has been criticized over the years, in a report and separate policy brief, for lacking adequate access to the replacement fuel, a gap that raises its risks.

“That GHG strategy was predominantly based on lots of gas coming in, and where are we today? We know there’s no gas in Mozambique,” and Sasol’s existing wells are running down, Baloyi said, referring to greenhouse gas. Sasol remains committed to the 30 percent emissions reduction target, “but we can’t do it the way we thought we were going to do it,” he said.

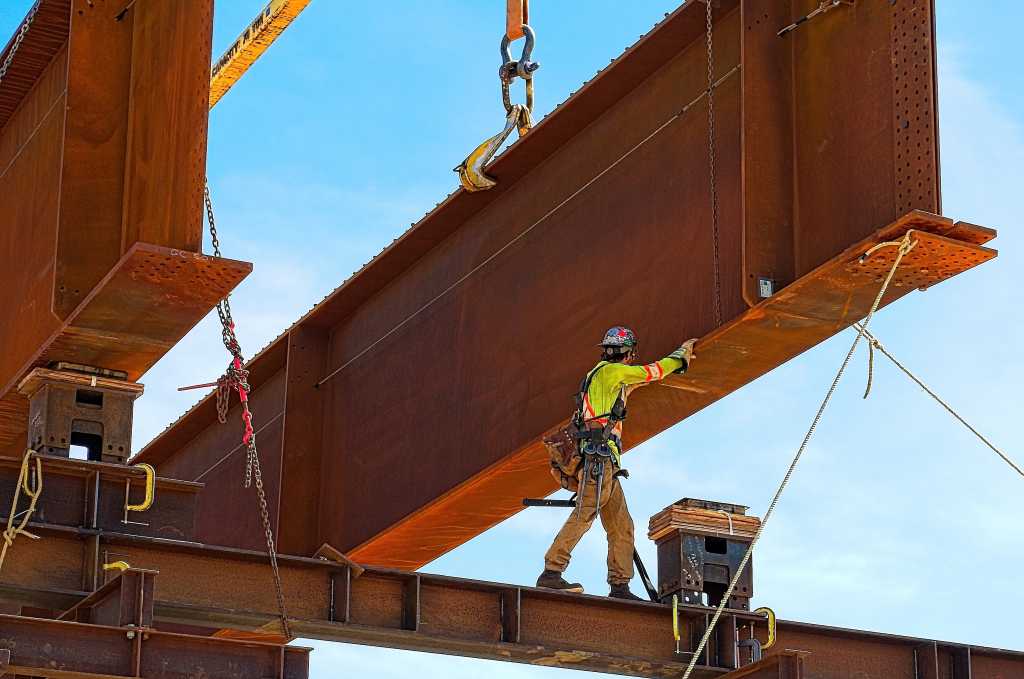

The company’s revised strategy will look at energy efficiency to reduce the amount of steam needed throughout its facilities. It may increase the 750 megawatts of green energy it’s already sourced and that will help reduce coal consumption, according to the CEO. “If we need to double up on renewable energy from one gigawatt to two, we’ll do that.”

There will be more to offset if Sasol improves the supply and quality of coal it mines to feed its operations and revive fuel and chemical production from Secunda.

A plan to remove stones from coal could see production increase from the current 7 million tons. Output was at 7.6 million tons in 2021.

Sasol’s ambition to reach net zero in 2050 was launched by Fleetwood Grobler, Baloyi’s predecessor, who three years ago emphasized moving quickly on technology to produce green hydrogen in the wake of Russia’s invasion of Ukraine.

The company has looked into a project at the port of Boegoebaai on South Africa’s northwest coast that would export the fuel and provide domestic supply for its own operations.

But the price of the clean energy has deterred buyers. Sasol sees little benefit in being a first mover in using the technology, according to Baloyi.

“Why do you want to be like a guinea pig of technology,” he said.

Company studies have found the area near the project could support as much as 200 gigawatts of renewables if supporting infrastructure such as ports is built and then “you almost get green hydrogen for free,” he said.

Bloomberg