
Google has removed its long-standing prohibition against using artificial intelligence for weapons and surveillance systems, marking a significant shift in the company’s ethical stance on AI development that former employees and industry experts say could reshape how Silicon Valley approaches AI safety.

The change, quietly implemented this week, eliminates key portions of Google’s AI Principles that explicitly banned the company from developing AI for weapons or surveillance. These principles, established in 2018, had served as an industry benchmark for responsible AI development.

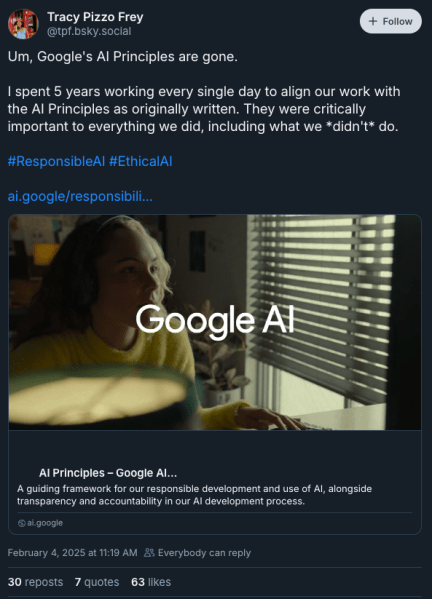

“The last bastion is gone. It’s no holds barred,” said Tracy Pizzo Frey, who spent five years implementing Google’s original AI principles as Senior Director of Outbound Product Management, Engagements and Responsible AI at Google Cloud, in a Bluesky post. “Google really stood alone in this level of clarity about its commitments for what it would build.”

The revised principles remove four specific prohibitions: technologies likely to cause overall harm, weapons applications, surveillance systems, and technologies that violate international law and human rights. Instead, Google now says it will “mitigate unintended or harmful outcomes” and align with “widely accepted principles of international law and human rights.”

Google loosens AI ethics: What this means for military and surveillance tech

This shift comes at a particularly sensitive moment, as artificial intelligence capabilities advance rapidly and debates intensify about appropriate guardrails for the technology. The timing has raised questions about Google’s motivations, though the company maintains these changes have been long in development.

“We’re in a state where there’s not much trust in big tech, and every move that even appears to remove guardrails creates more distrust,” Pizzo Frey said in an interview with VentureBeat. She emphasized that clear ethical boundaries had been crucial for building trustworthy AI systems during her tenure at Google.

The original principles emerged in 2018 amid employee protests over Project Maven, a Pentagon contract involving AI for drone footage analysis. While Google eventually declined to renew that contract, the new changes could signal openness to similar military partnerships.

The revision maintains some elements of Google’s previous ethical framework but shifts from prohibiting specific applications to emphasizing risk management. This approach aligns more closely with industry standards like the NIST AI Risk Management Framework, though critics argue it provides fewer concrete restrictions on potentially harmful applications.

“Even if the rigor is not the same, ethical considerations are no less important to creating good AI,” Pizzo Frey noted, highlighting how ethical considerations improve AI products’ effectiveness and accessibility.

From Project Maven to policy shift: The road to Google’s AI ethics overhaul

Industry observers say this policy change could influence how other technology companies approach AI ethics. Google’s original principles had set a precedent for corporate self-regulation in AI development, with many enterprises looking to Google for guidance on responsible AI implementation.

The modification of Google’s AI principles reflects broader tensions in the tech industry between rapid innovation and ethical constraints. As competition in AI development intensifies, companies face pressure to balance responsible development with market demands.

“I worry about how fast things are getting out there into the world, and if more and more guardrails are removed,” Pizzo Frey said, expressing concern about the competitive pressure to release AI products quickly without sufficient evaluation of potential consequences.

Big tech’s ethical dilemma: Will Google’s AI policy shift set a new industry standard?

The revision also raises questions about internal decision-making processes at Google and how employees might navigate ethical considerations without explicit prohibitions. During her time at Google, Pizzo Frey had established review processes that brought together diverse perspectives to evaluate AI applications’ potential impacts.

While Google maintains its commitment to responsible AI development, the removal of specific prohibitions marks a significant departure from its previous leadership role in establishing clear ethical boundaries for AI applications. As artificial intelligence continues to advance, the industry watches to see how this shift might influence the broader landscape of AI development and regulation.
