Reasoning models like OpenAI o1 and DeepSeek-R1 have a problem: They overthink. Ask them a simple question such as “What is 1+1?” and they will think for several seconds before answering.

Ideally, like humans, AI models should be able to tell when to give a direct answer and when to spend extra time and resources to reason before responding. A new technique presented by researchers at Meta AI and the University of Illinois Chicago trains models to allocate inference budgets based on the difficulty of the query. This results in faster responses, reduced costs, and better allocation of compute resources.

Costly reasoning

Large language models (LLMs) can improve their performance on reasoning problems when they produce longer reasoning chains, often referred to as “chain-of-thought” (CoT). The success of CoT has led to an entire range of inference-time scaling techniques that prompt the model to “think” longer about the problem, produce and review multiple answers, and choose the best one.

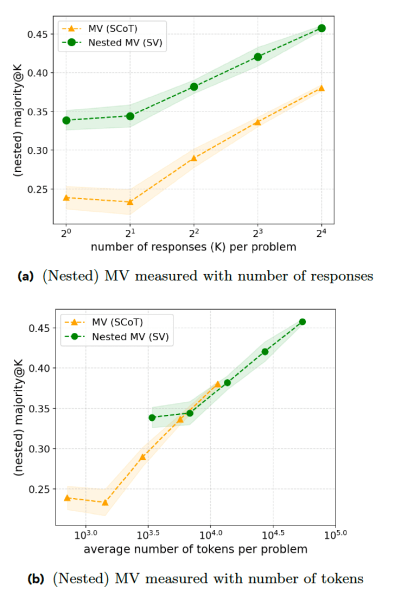

One of the main techniques used with reasoning models is to generate multiple answers and choose the one that recurs most often, known as “majority voting” (MV). The problem with this approach is that the model behaves uniformly, treating every prompt as a hard reasoning problem and spending unnecessary resources to generate multiple answers.
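A minimal sketch of majority voting makes the cost issue concrete. The names `majority_vote` and `sample_answer` are hypothetical and chosen for illustration; `sample_answer` stands in for whatever call returns one final answer from the model.

```python
from collections import Counter
from typing import Callable, Tuple


def majority_vote(
    sample_answer: Callable[[str], str],  # hypothetical: returns one model answer
    prompt: str,
    n: int = 8,
) -> Tuple[str, int]:
    """Sample n answers and return (most frequent answer, answers generated)."""
    answers = [sample_answer(prompt) for _ in range(n)]
    # most_common(1) returns the answer with the highest count;
    # ties go to the answer that was seen first.
    winner, _ = Counter(answers).most_common(1)[0]
    return winner, n
```

Note that the second return value is always `n`: the cost is the same whether the prompt is “What is 1+1?” or a competition problem.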

Smart reasoning

The new paper proposes a series of training techniques that make reasoning models more efficient at responding. The first step is “sequential voting” (SV), where the model aborts the reasoning process as soon as an answer appears a certain number of times. For example, the model is prompted to generate a maximum of eight answers and choose the answer that comes up at least three times. If the model is given the simple query mentioned above, the first three answers will probably be the same, which will trigger early stopping and save time and compute resources.
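The early-stopping rule can be sketched as a loop. This is an illustration under assumptions, not the paper's implementation: the article describes the model being prompted to do this itself, while the sketch moves the logic into an outer loop around a hypothetical `sample_answer` callable. The fallback to the most frequent answer when no answer reaches the threshold is also an assumption.

```python
from collections import Counter
from typing import Callable, Tuple


def sequential_vote(
    sample_answer: Callable[[str], str],  # hypothetical: returns one model answer
    prompt: str,
    max_answers: int = 8,
    threshold: int = 3,
) -> Tuple[str, int]:
    """Stop as soon as any answer has appeared `threshold` times.

    Returns (chosen answer, number of answers generated).
    """
    counts: Counter = Counter()
    for used in range(1, max_answers + 1):
        answer = sample_answer(prompt)
        counts[answer] += 1
        if counts[answer] >= threshold:
            return answer, used  # early stop
    # Assumed fallback: no answer reached the threshold, take the most frequent.
    return counts.most_common(1)[0][0], max_answers
```

With a sampler that always answers “2”, the loop returns after three answers instead of eight.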

Their experiments show that SV outperforms classic MV on math competition problems when it generates the same number of answers. However, SV requires extra instructions and token generation, which puts it on par with MV in terms of token-to-accuracy ratio.

The second technique, “adaptive sequential voting” (ASV), improves SV by prompting the model to examine the problem and only generate multiple answers when the problem is difficult. For simple problems (such as the 1+1 prompt), the model simply generates a single answer without going through the voting process. This makes the model much more efficient at handling both simple and complex problems.
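As a rough sketch, the adaptive variant adds one branch in front of the voting loop. In the technique described above the model itself judges difficulty; here that judgment is represented by a hypothetical `is_hard` callable, and `sample_answer` is likewise a stand-in for a model call.

```python
from collections import Counter
from typing import Callable, Tuple


def adaptive_sequential_vote(
    sample_answer: Callable[[str], str],  # hypothetical: returns one model answer
    is_hard: Callable[[str], bool],       # hypothetical: difficulty judgment
    prompt: str,
    max_answers: int = 8,
    threshold: int = 3,
) -> Tuple[str, int]:
    """Answer easy prompts once; run early-stopping voting on hard ones.

    Returns (chosen answer, number of answers generated).
    """
    if not is_hard(prompt):
        return sample_answer(prompt), 1  # single direct answer, no voting

    counts: Counter = Counter()
    for used in range(1, max_answers + 1):
        answer = sample_answer(prompt)
        counts[answer] += 1
        if counts[answer] >= threshold:
            return answer, used
    # Assumed fallback when no answer reaches the threshold.
    return counts.most_common(1)[0][0], max_answers
```

The design point is that the cost of an easy query drops to a single answer, while hard queries still get the benefit of voting.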

Reinforcement learning

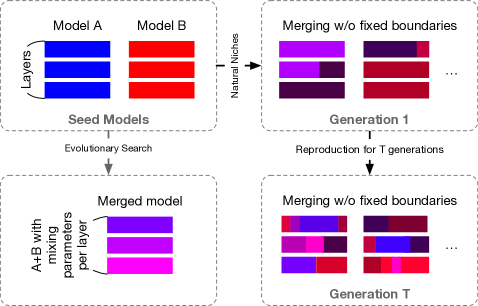

While both SV and ASV improve the model’s efficiency, they require a lot of hand-labeled data. To alleviate this problem, the researchers propose “Inference Budget-Constrained Policy Optimization” (IBPO), a reinforcement learning algorithm that teaches the model to adjust the length of reasoning traces based on the difficulty of the query.

IBPO is designed to allow LLMs to optimize their responses while remaining within an inference budget constraint. The RL algorithm enables the model to surpass the gains obtained through training on manually labeled data by constantly generating ASV traces, evaluating the responses, and choosing outcomes that provide the correct answer and the optimal inference budget.
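To make the “generate, evaluate, choose” idea concrete, here is a deliberately simplified selection step. It is not the IBPO algorithm, whose details the article does not give; it only illustrates preferring traces that are both correct and within a budget. The `Trace` type, `select_trace` function, and token-count budget are all assumptions made for this sketch.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Trace:
    answer: str  # final answer extracted from a sampled reasoning trace
    tokens: int  # assumed cost measure: tokens generated


def select_trace(traces: List[Trace], reference: str, budget: int) -> Optional[Trace]:
    """Pick the cheapest trace that is correct and within the token budget.

    Returns None when no sampled trace satisfies both conditions.
    """
    eligible = [t for t in traces if t.answer == reference and t.tokens <= budget]
    if not eligible:
        return None
    return min(eligible, key=lambda t: t.tokens)
```

For example, given one long correct trace, one short correct trace and one short wrong trace, the function returns the short correct one.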

Their experiments show that IBPO improves the Pareto front, which means that, for a fixed inference budget, a model trained with IBPO outperforms the baselines.
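For readers unfamiliar with the term, a Pareto front over (budget, accuracy) pairs is the set of points that no other point beats on both cost and accuracy. The sketch below computes it for arbitrary points; the function name `pareto_front` is hypothetical, and any numbers passed to it here are toy values for illustration, not results from the paper.

```python
from typing import List, Tuple


def pareto_front(points: List[Tuple[float, float]]) -> List[Tuple[float, float]]:
    """Return the non-dominated (cost, accuracy) points, sorted by cost.

    A point is dominated if another point has cost <= and accuracy >=,
    with at least one of the two strictly better.
    """
    front: List[Tuple[float, float]] = []
    best_accuracy = float("-inf")
    # Visit cheapest first; among equal costs, highest accuracy first.
    for cost, accuracy in sorted(points, key=lambda p: (p[0], -p[1])):
        if accuracy > best_accuracy:
            front.append((cost, accuracy))
            best_accuracy = accuracy
    return front
```

“Improving the Pareto front” means a method's points sit above the baselines' front: at any given cost, it reaches higher accuracy.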

The findings come against the backdrop of researchers warning that current AI models are hitting a wall. Companies are struggling to find quality training data and are exploring alternative methods to improve their models.

One promising solution is reinforcement learning, where the model is given an objective and allowed to find its own solutions, as opposed to supervised fine-tuning (SFT), where the model is trained on manually labeled examples.

Surprisingly, the model often finds solutions that humans haven’t thought of. This is a formula that seems to have worked well for DeepSeek-R1, which has challenged the dominance of U.S.-based AI labs.

The researchers note that “prompting-based and SFT-based methods struggle with both absolute improvement and efficiency, supporting the conjecture that SFT alone does not enable self-correction capabilities. This observation is also partially supported by concurrent work, which suggests that such self-correction behavior emerges automatically during RL rather than manually created by prompting or SFT.”
