While consumer attention has focused on the generative AI battles between OpenAI and Google, Anthropic has executed a disciplined enterprise strategy centered on coding — potentially the most valuable enterprise AI use case. The results are becoming increasingly clear: Claude is positioning itself as the LLM that matters most for businesses.

The evidence? Anthropic’s Claude 3.7 Sonnet, released just two weeks ago, set new benchmark records for coding performance. Simultaneously, the company launched Claude Code, a command-line AI agent that helps developers build applications faster. Meanwhile, Cursor — an AI-powered code editor that defaults to Anthropic’s Claude model — has surged to a reported $100 million in annual recurring revenue in just 12 months.

Anthropic’s deliberate focus on coding comes as enterprises increasingly recognize the power of AI coding agents, which enable both seasoned developers and non-coders to build applications with unprecedented speed and efficiency. “Anthropic continues to come out on top,” said Guillermo Rauch, CEO of Vercel, another fast-growing company that lets developers, including non-coders, deploy front-end applications. Last year, Vercel switched its lead coding model from OpenAI’s GPT to Anthropic’s Claude after evaluating the models’ performance on key coding tasks.

Claude 3.7: Setting new benchmarks for AI coding

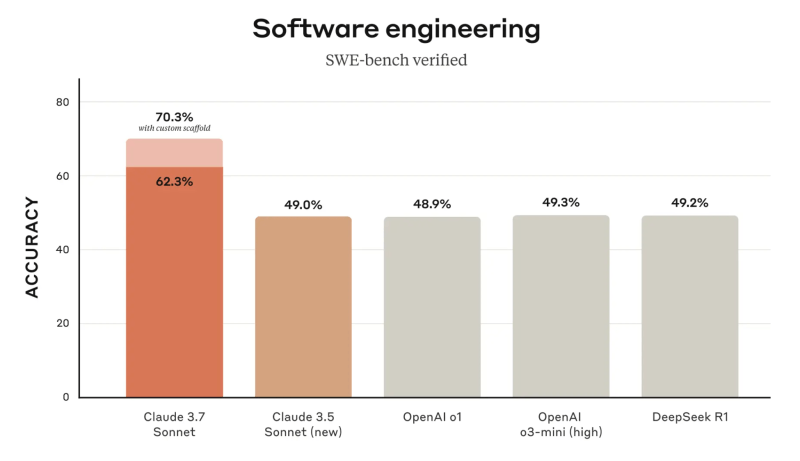

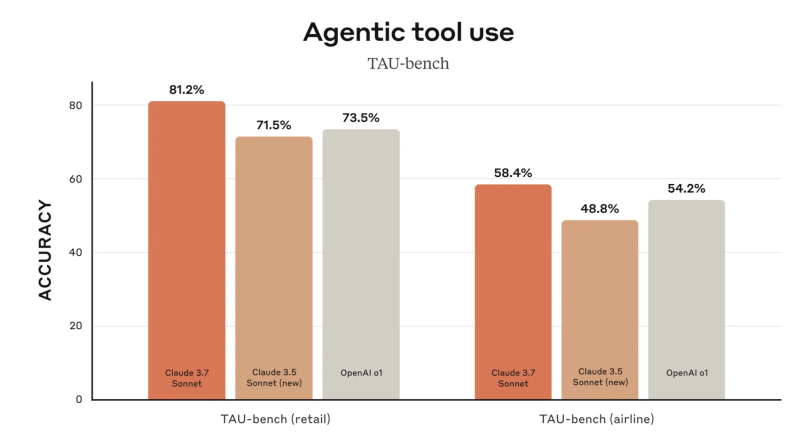

Released February 24, Claude 3.7 Sonnet leads on nearly all coding benchmarks. It scored an impressive 70.3% on the respected SWE-bench benchmark, which measures an agent’s software development skills, handily outperforming nearest competitors OpenAI’s o1 (48.9%) and DeepSeek-R1 (49.2%). It also outperforms competitors on agentic tasks.

Developer communities have quickly verified these results in real-world testing. Reddit threads comparing Claude 3.7 with Grok 3, the newly released model from Elon Musk’s xAI, consistently favor Anthropic’s model for coding tasks. “Based on what I’ve tested, Claude 3.7 seems to be the best for writing code (at least for me),” said a top commenter.

Alongside the 3.7 Sonnet release, Anthropic launched Claude Code, an AI coding agent that works directly through the command line. This complements the company’s October release of Computer Use, which enables Claude to interact with a user’s computer, including using a browser to search the web, opening applications, and inputting text.

Most notable is what Anthropic hasn’t done. Unlike competitors that rush to match each other feature-for-feature, the company hasn’t even bothered to integrate web search functionality into its app — a basic feature most users expect. This calculated omission signals that Anthropic isn’t competing for general consumers but is laser-focused on the enterprise market, where coding capabilities deliver much higher ROI than search.

Hands-on with Claude’s coding capabilities

To test the real-world capabilities of these coding agents, I experimented with building a database to store VentureBeat articles using three different approaches: Claude 3.7 Sonnet through Anthropic’s app; Cursor’s coding agent; and Claude Code.

Using Claude 3.7 directly through Anthropic’s app, I found that it provided remarkable guidance for a non-coder like myself. It recommended several options, from robust solutions such as a PostgreSQL database to lighter-weight ones such as Airtable. I chose the lightweight solution, and Claude methodically walked me through how to pull articles from the VentureBeat API into Airtable using Make.com for connections. The process took about two hours, including some authentication challenges, but resulted in a functional system. In other words, instead of writing all of the code for me, it gave me a master plan for doing it myself.

Cursor, which defaults to Claude’s models, is a full-fledged code editor and was more eager to automate the process. However, it required permission at every step, creating a somewhat tedious workflow.

Claude Code offered yet another approach, running directly in the terminal and using SQLite to create a local database that pulled articles from our RSS feed. This solution was simpler and more reliable in getting me to my end goal, but definitely less robust and feature-rich than the Airtable implementation. I now understand the nature of these tradeoffs, and know that the coding agent I pick depends on the specific project.
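To make the SQLite approach concrete, here is a minimal sketch of what such a script might look like. It is not the code Claude Code generated; the table layout, the function names and the sample feed are assumptions for illustration, and the sample XML stands in for a real RSS download.

```python
import sqlite3
import xml.etree.ElementTree as ET

# Hypothetical sample feed. A real run would download the RSS XML instead.
SAMPLE_RSS = """<rss version="2.0">
  <channel>
    <title>Example feed</title>
    <item>
      <title>First article</title>
      <link>https://example.com/first</link>
      <pubDate>Mon, 10 Mar 2025 09:00:00 +0000</pubDate>
    </item>
    <item>
      <title>Second article</title>
      <link>https://example.com/second</link>
      <pubDate>Tue, 11 Mar 2025 09:00:00 +0000</pubDate>
    </item>
  </channel>
</rss>"""


def parse_rss(xml_text):
    """Return one dict per <item> element in an RSS 2.0 document."""
    root = ET.fromstring(xml_text)
    articles = []
    for item in root.iter("item"):
        articles.append({
            "title": item.findtext("title", default=""),
            "link": item.findtext("link", default=""),
            "published": item.findtext("pubDate", default=""),
        })
    return articles


def store_articles(conn, articles):
    """Insert articles, skipping links already stored. Returns rows added."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS articles ("
        "link TEXT PRIMARY KEY, title TEXT, published TEXT)"
    )
    before = conn.total_changes
    conn.executemany(
        "INSERT OR IGNORE INTO articles (link, title, published) "
        "VALUES (:link, :title, :published)",
        articles,
    )
    conn.commit()
    return conn.total_changes - before


if __name__ == "__main__":
    connection = sqlite3.connect(":memory:")  # use a file path to persist
    added = store_articles(connection, parse_rss(SAMPLE_RSS))
    print(f"Stored {added} new articles")
```

Using the article link as the primary key means the script can be rerun against the same feed without creating duplicates, which is one reason a simple local database can feel reliable for this kind of task.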

The key insight: Even as a non-developer, I was able to build functional database applications using all three approaches — something that would have been unthinkable just a year ago. And they all relied on Claude under the hood.

For a more detailed review of how to do this so-called “vibe coding,” where you rely on agents to code things while not doing any coding yourself, read this great piece by developer Simon Willison published yesterday. The process can be buggy and frustrating at times, but if you accept those limitations, you can go a long way.

The strategy: Why coding is Anthropic’s enterprise play

Anthropic’s singular focus on coding capabilities isn’t accidental. According to projections reportedly leaked to The Information, Anthropic aims to reach $34.5 billion in revenue by 2027 — an 86-fold increase from current levels. Approximately 67% of this projected revenue would come from API business, with enterprise coding applications as the primary driver. While Anthropic hasn’t released exact numbers for its revenue so far, it said its coding revenue surged 1,000% over the last quarter of 2024. Last week, Anthropic announced it had raised $3.5 billion more in funding at a $61.5 billion valuation.
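As a back-of-the-envelope check on those projections, the sketch below works out what the reported figures imply. It takes the $34.5 billion target, the 86-fold multiple and the 67% API share at face value; the function names are illustrative only, and the results are implied estimates, not figures Anthropic has released.

```python
def implied_current_revenue_billion(target_billion, fold_increase):
    """Revenue today implied by a target and a fold-increase multiple."""
    return target_billion / fold_increase


def api_revenue_billion(target_billion, api_share):
    """Portion of the target attributed to the API business."""
    return target_billion * api_share


if __name__ == "__main__":
    current = implied_current_revenue_billion(34.5, 86)
    api = api_revenue_billion(34.5, 0.67)
    print(f"Implied current revenue: about ${current:.2f} billion")
    print(f"Implied 2027 API revenue: about ${api:.1f} billion")
```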

This coding bet is supported by Anthropic’s own Economic Index, which found that 37.2% of queries sent to Claude were in the “computer and mathematical” category, primarily covering software engineering tasks like code modification, debugging and network troubleshooting.

Anthropic appears to be marching to its own beat at a time when competitors are distracted, rushing to cover both enterprise and consumer markets with feature parity. OpenAI’s lead is reinforced by its early consumer recognition and usage, but it is stuck trying to serve both regular users and businesses with multiple models and features. Google is chasing this trend too, trying to have one of everything.

Anthropic’s comparatively disciplined strategy extends to its product decisions. Instead of chasing consumer market share, the company has prioritized enterprise features like GitHub integration, audit logs, customizable permissions and domain-specific security controls. Six months ago, it introduced a massive 500,000-token context window for developers, while Google limited its 1-million-token window to private testers. The result is a comprehensive coding-focused offering that enterprises are increasingly adopting.

The company recently introduced features allowing non-coders to publish AI-created applications within their organizations, and just last week upgraded its console with enhanced collaboration capabilities, including shareable prompts and templates. This democratization reflects a sort of Trojan Horse strategy: First enable developers to build powerful foundations, then expand access to the broader enterprise workforce, including up into the corporate suite.

The coding agent ecosystem: Cursor and beyond

Perhaps the most telling sign of Anthropic’s success is the explosive growth of Cursor, an AI code editor that reportedly has 360,000 users, with more than 40,000 of them paying customers, after just 12 months. With a reported $100 million in annual recurring revenue, it is possibly the fastest SaaS company to reach that milestone.

Cursor’s success is inextricably linked to Claude. “You’ve got to think their number one customer is Cursor,” noted Sam Witteveen, cofounder of Red Dragon, an independent developer of AI agents. “Most people on [Cursor] were using the Claude Sonnet model — the 3.5 models — already. And now it seems everyone’s just migrating over to 3.7.”

The relationship between Anthropic and its ecosystem extends beyond individual companies like Cursor. In November, Anthropic released its Model Context Protocol (MCP) as an open standard, allowing developers to build tools that interact with Claude models. The standard is being widely adopted by developers.

“By launching this as an open protocol, they’re sort of saying, ‘Hey, everyone, have at it,’” explained Witteveen. “You can develop whatever you want that fits this protocol. We’re going to support this protocol.”

This approach creates a virtuous cycle: Developers build tools for Claude, which makes Claude more valuable to enterprises, which drives more adoption, which attracts more developers.

The competition: Microsoft, OpenAI, Google and open source

While Anthropic has found its focus, competitors are pursuing different strategies with varying results.

Microsoft maintains significant momentum through its GitHub Copilot, which has 1.3 million paid users and has been adopted by more than 77,000 organizations in roughly two years. Companies like Honeywell, State Street, TD Bank Group and Levi’s are among its users. This widespread adoption stems largely from Microsoft’s existing enterprise relationships and its first-mover advantage: It invested early in OpenAI and used that company’s models to power Copilot.

However, even Microsoft has acknowledged Anthropic’s strength. In October, it allowed GitHub Copilot users to choose Anthropic’s models as an alternative to OpenAI. And OpenAI’s recent models — o1 and the newer o3, which emphasize reasoning through extended thinking — haven’t demonstrated particular strengths in coding or agentic tasks.

Google has made its own play by recently making its Code Assist free, but this move seems more defensive than strategic.

The open source movement is another significant force in this landscape. Meta’s Llama models have gained substantial enterprise traction, with major companies like AT&T, DoorDash and Goldman Sachs deploying Llama-based models for various applications. The open-source approach offers enterprises greater control, customization options and cost benefits that closed models can’t match, as VentureBeat reported last year.

Rather than seeing this as a direct threat, Anthropic appears to be positioning itself as complementary to open source. Enterprise customers can use Claude alongside open-source models depending on specific needs, a hybrid approach that maximizes the strengths of each.

In fact, most enterprise companies of scale I’ve talked with over the past several months are explicitly multi-model, in that their AI workflows allow them to use whatever model is best for a given case. Intuit was an early example of a company that had bet on OpenAI as the default for its tax return applications, but then last year switched to Claude because it was superior in some cases. The pain of switching led Intuit to create an AI orchestration framework that made switching between models much more seamless, as Nhung Ho, Intuit’s VP of AI, told VentureBeat at the time.

Most other enterprise companies have since followed a similar practice. They use whatever model is best for the specific case, pulling in models with simple API calls. In some cases, an open-source model like Llama might work well, but in others — for example, in calculations where accuracy is important — Claude is the choice, Intuit’s Ho explained at VentureBeat’s VB Transform event last year.
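The multi-model pattern described above can be sketched as a small routing layer. This is an illustration only, not Intuit’s framework: the `ModelRouter` class, the task names and the model labels are hypothetical, and the stub clients stand in for real API calls.

```python
from typing import Callable, Dict

# A model client is any callable that takes a prompt and returns text.
ModelClient = Callable[[str], str]


def make_stub(name: str) -> ModelClient:
    """Stand-in for a real API client. It only labels the prompt."""
    def call(prompt: str) -> str:
        return f"[{name}] {prompt}"
    return call


class ModelRouter:
    """Maps task types to registered models, with a fallback default."""

    def __init__(self, default: str):
        self._clients: Dict[str, ModelClient] = {}
        self._routes: Dict[str, str] = {}
        self._default = default

    def register(self, name: str, client: ModelClient) -> None:
        self._clients[name] = client

    def route(self, task_type: str, model_name: str) -> None:
        if model_name not in self._clients:
            raise KeyError(f"unknown model: {model_name}")
        self._routes[task_type] = model_name

    def model_for(self, task_type: str) -> str:
        return self._routes.get(task_type, self._default)

    def run(self, task_type: str, prompt: str) -> str:
        return self._clients[self.model_for(task_type)](prompt)


def build_example_router() -> ModelRouter:
    """Hypothetical setup: one open-source default, one override."""
    router = ModelRouter(default="llama")
    router.register("llama", make_stub("llama"))
    router.register("claude-sonnet", make_stub("claude-sonnet"))
    router.route("calculation", "claude-sonnet")
    return router


if __name__ == "__main__":
    router = build_example_router()
    print(router.run("calculation", "Estimate the tax owed"))
    print(router.run("summarization", "Summarize this memo"))
```

Because callers ask for a task type, not a specific model, swapping the model behind "calculation" is a one-line change to the routing table. That is the kind of seamless switching an orchestration layer is meant to provide.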

Over the past couple of days, I’ve been attending the HumanX conference in Las Vegas, where hundreds of developers gathered to talk about AI. Claude comes up almost always whenever the topic of agents or coding is raised. Over lunch yesterday, Julianne Averill, managing director at Danforth Advisors, which advises life science companies, said her company had found Claude superior for many such tasks, including building structured analysis tables.

Vercel CEO Guillermo Rauch, another attendee, said his company, which has surpassed $100 million in annual revenue, chose Claude last year as its default model to help developers code after doing rigorous evaluations of all models. “3.7 is king,” Rauch told VentureBeat. He agreed it’s important to offer developers a choice of models, since the breakneck pace of advances means there can’t be loyalty to a single model. But while Vercel’s V0 product, which lets users generate web user interfaces (UIs) using natural-language prompts, offers that choice, it has to pick a default model to help users during their initial ideation and reasoning phase. That model is Claude Sonnet. “You need the architect model that is capable of reasoning and does the lion’s share of code generation,” he said. “A significant chunk of our pipeline is powered by Anthropic Sonnet.” Adobe, Chick-Fil-A and Bed Bath and Beyond are Vercel’s customers.

Still, Rauch cautioned that the LLM race remains fluid, and the lead model could change at any time. Vercel experimented with China’s DeepSeek, he said, but found it fell just short of matching Claude’s Sonnet. Similarly, he said, Alibaba’s Qwen model has gotten very good.

Enterprise implications: Making the shift to coding agents

For enterprise decision-makers, this rapidly evolving landscape presents both opportunities and challenges.

Security remains a top concern, but a recent independent report found Claude 3.7 Sonnet to be the most secure model yet — the only one tested that proved “jailbreak-proof.” This security stance, combined with Anthropic’s backing from both Google and Amazon (and integration into AWS Bedrock), positions it well for enterprise adoption.

The rise of coding agents isn’t just changing how applications are built — it’s democratizing the process. According to GitHub, 92% of U.S.-based developers at enterprise companies were already using AI-powered coding tools at work 18 months ago. That number has likely grown substantially since then.

“The challenge that people are having [because of] not being a coder is really that they don’t know a lot of the terminology. They don’t know best practices,” explained Witteveen. AI coding agents increasingly bridge this gap, allowing technical and non-technical team members to collaborate more effectively.

For enterprise adoption, Witteveen recommends a balanced approach: “It’s the balance between security and experimentation at the moment. Clearly, on the developer side, people are starting to build real-world apps with this stuff.”

For a deeper exploration of these issues, check out my recent YouTube video conversation with Witteveen, where we take a deep dive into the state of coding agents and what they mean for enterprise AI strategy.

Looking ahead: the future of enterprise coding

The rise of AI coding agents signals a fundamental shift in enterprise software development. When used effectively, these tools don’t replace developers but transform their roles, allowing them to focus on architecture and innovation rather than implementation details.

Anthropic’s disciplined approach in focusing specifically on coding capabilities while competitors chase multiple priorities appears to be paying dividends for the company. By the end of 2025, we may look back on this period as the moment when AI coding agents became essential enterprise tools — with Claude leading the way.

For technical decision-makers, the message is clear: Start experimenting with these tools now or risk falling behind competitors who are already using them to accelerate development cycles dramatically. This moment echoes the early days of the iPhone revolution, when companies initially tried to block “unsanctioned” devices from their corporate networks, only to eventually embrace BYOD policies as employee demand became overwhelming. Some companies VentureBeat has talked with, like Honeywell, have similarly tried in recent months to shut down “rogue” use of AI coding tools not approved by IT.

Speaking Monday at the HumanX conference, James Reggio, the CTO of Brex, a company that provides credit cards and other financial services to small and mid-sized enterprises, said his company initially also tried to enforce a top-down approach to AI model selection, in an effort to reach perfection. But the company faced revolt among its developer employees, and soon realized this was futile. It decided to allow users to experiment freely. Smart companies are already setting up secure sandbox environments to allow controlled experimentation. Organizations that create clear guardrails while encouraging innovation will benefit from both employee enthusiasm and insights about how these tools can best serve their unique needs — positioning themselves ahead of competitors who resist change. And Anthropic’s Claude, at least for now, is a big beneficiary of this movement.
