Whether by automating tasks, serving as copilots or generating text, images, video and software from plain English, AI is rapidly altering how we work. Yet, for all the talk about AI revolutionizing jobs, widespread workforce displacement has yet to happen.

This could well be the lull before the storm. According to a recent World Economic Forum (WEF) survey, 40% of employers anticipate reducing their workforce between 2025 and 2030 in areas where AI can automate tasks. This statistic dovetails with earlier predictions. For example, Goldman Sachs said in a research report two years ago that “generative AI could expose the equivalent of 300 million full-time jobs to automation,” leading to “significant disruption” in the labor market.

According to the International Monetary Fund (IMF), “almost 40% of global employment is exposed to AI.” Brookings said last fall in another report that “more than 30% of all workers could see at least 50% of their occupation’s tasks disrupted by gen AI.” Several years ago, Kai-Fu Lee, one of the world’s foremost AI experts, said in a 60 Minutes interview that AI could displace 40% of global jobs within 15 years.

If AI is such a disruptive force, why aren’t we seeing large layoffs?

Some have questioned those predictions, especially as job displacement from AI so far appears negligible. For example, an October 2024 Challenger Report that tracks job cuts said that in the 17 months between May 2023 and September 2024, fewer than 17,000 jobs in the U.S. had been lost due to AI.

On the surface, this contradicts the dire warnings. But does it? Or does it suggest that we are still in a gradual phase before a possible sudden shift? History shows that technology-driven change does not always happen in a steady, linear fashion. Rather, it builds up over time until a sudden shift reshapes the landscape.

In a recent Hidden Brain podcast on inflection points, researcher Rita McGrath of Columbia University referenced Ernest Hemingway’s 1926 novel The Sun Also Rises. When one character was asked how they went bankrupt, they answered: “Two ways. Gradually, then suddenly.” This could be an allegory for the impact of AI on jobs.

This pattern of change — slow and nearly imperceptible at first, then suddenly undeniable — has been experienced across business, technology and society. Malcolm Gladwell calls this a “tipping point,” or the moment when a trend reaches critical mass, then dramatically accelerates.

In cybernetics, the study of complex natural and social systems, a tipping point can occur when a new technology becomes so widespread that it fundamentally changes the way people live and work. In such scenarios, the change becomes self-reinforcing. This often happens when innovation and economic incentives align, making change inevitable.

Gradually, then suddenly

While employment impacts from AI are (so far) nascent, that is not true of AI adoption. In a new survey by McKinsey, 78% of respondents said their organizations use AI in at least one business function, up more than 40% from 2023. Other research found that 74% of enterprise C-suite executives are now more confident in AI for business advice than colleagues or friends. The research also revealed that 38% trust AI to make business decisions for them, while 44% defer to AI reasoning over their own insights.

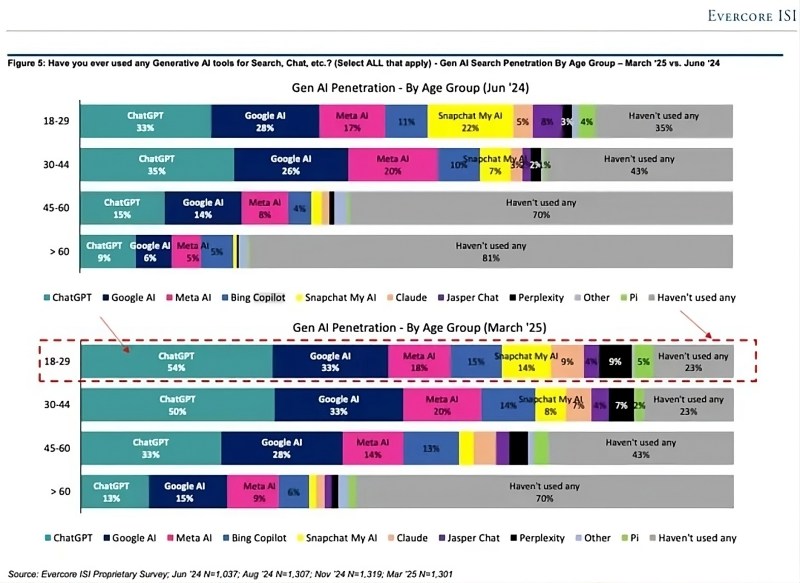

It is not only business executives who are increasing their use of AI tools. A new chart from the investment firm Evercore depicts increased use among all age groups over the last 9 months, regardless of application.

This data reveals both broad and growing adoption of AI tools. However, true enterprise AI integration remains in its infancy — just 1% of executives describe their gen AI rollouts as mature, according to another McKinsey survey. This suggests that while AI adoption is surging, companies have yet to fully integrate it into core operations in a way that might displace jobs at scale. But that could change quickly. If economic pressures intensify, businesses may not have the luxury of gradual AI adoption and may feel the need to automate fast.

Canary in the coal mine

One of the first job categories likely to be hit by AI is software development. Numerous AI tools based on large language models (LLMs) exist to augment programming, and soon the function could be entirely automated. Anthropic CEO Dario Amodei said recently on Reddit that “we’re 3 to 6 months from a world where AI is writing 90% of the code. And then in 12 months, we may be in a world where AI is writing essentially all of the code.”

This trend is becoming clear, as evidenced by startups in the winter 2025 cohort of incubator Y Combinator. Managing partner Jared Friedman said that 25% of this startup batch have 95% of their codebases generated by AI. He added: “A year ago, [the companies] would have built their product from scratch — but now 95% of it is built by an AI.”

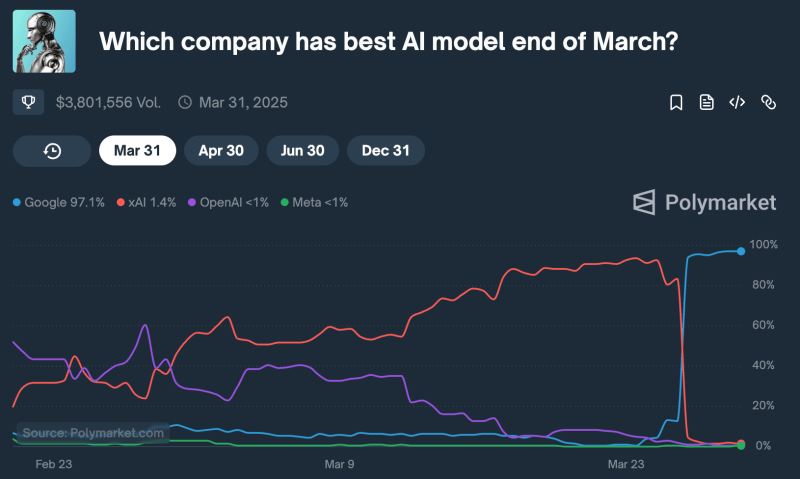

The LLMs underlying code generation, such as Claude, Gemini, Grok, Llama and ChatGPT, are all advancing rapidly and increasingly perform well on an array of quantitative benchmark tests. For example, reasoning model o3 from OpenAI missed only one question on the 2024 American Invitational Mathematics Examination (AIME), scoring 96.7%, and achieved 87.7% on GPQA Diamond, which consists of graduate-level biology, physics and chemistry questions.

Even more striking is a qualitative impression of the new GPT-4.5, as described in a Confluence post. GPT-4.5 correctly answered a broad and vague prompt that other models could not. This might not seem remarkable, but the authors noted: “This insignificant exchange was the first conversation with an LLM where we walked away thinking, ‘Now that feels like general intelligence.’” Did OpenAI just cross a threshold with GPT-4.5?

Tipping points

While software engineering may be among the first knowledge-worker professions to face widespread AI automation, it will not be the last. Many other white-collar jobs covering research, customer service and financial analysis are similarly exposed to AI-driven disruption.

What might prompt a sudden shift in workplace adoption of AI? History shows that economic recessions often accelerate technological adoption, and the next downturn may be the tipping point when AI’s impact on jobs shifts from gradual to sudden.

During economic downturns, businesses face pressure to cut costs and improve efficiency, making automation more attractive. Labor becomes more expensive compared to technology investments, especially when companies need to do more with fewer human resources. This phenomenon is sometimes called “forced productivity.” As an example, the Great Recession of 2007 to 2009 saw significant advances in automation, cloud computing and digital platforms.

If a recession materializes in 2025 or 2026, companies facing pressure to reduce headcount may well turn to AI technologies, particularly tools and processes based on LLMs, as a strategy to support efficiency and productivity with fewer people. This could be even more pronounced — and more sudden — given business worries about falling behind in AI adoption.

Will there be a recession in 2025?

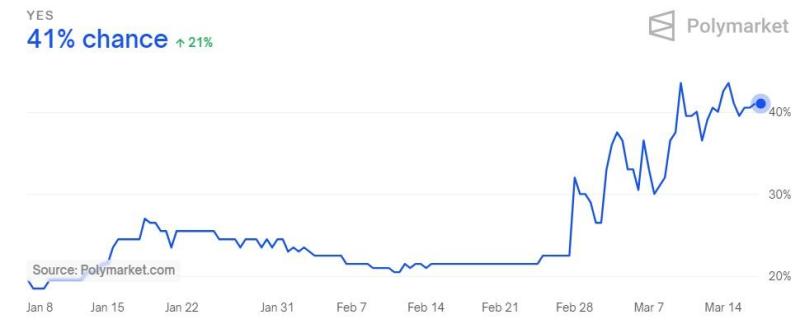

It is always difficult to tell when a recession will occur. J.P. Morgan’s chief economist recently estimated a 40% chance. Former Treasury Secretary Larry Summers said it could be around 50%. The betting markets are aligned with these views, predicting a greater than 40% probability that a recession will occur in 2025.

If a recession does occur later in 2025, it could indeed be characterized as an “AI recession.” However, AI itself will not be the cause. Instead, economic necessity could force companies to accelerate automation decisions. This would not be a technological inevitability, but a strategic response to financial pressure.

The extent of AI’s impact will depend on several factors, including the pace of technological sophistication and integration, the effectiveness of workforce retraining programs and the adaptability of businesses and employees to an evolving landscape.

Whenever it occurs, the next recession may not just lead to temporary job losses. Companies that have been experimenting with AI or adopting it in limited deployments may suddenly find automation not optional, but essential for survival. If such a scenario happens, it may signal a permanent shift toward a more AI-driven workforce.

As Salesforce CEO Marc Benioff put it in a recent earnings call: “We’re the last generation of CEOs to only manage humans. Every CEO going forward is going to manage humans and agents together. I know that’s what I’m doing. … You can see it also in the global economy. I think productivity is going to rise without additions to more human labor, which is good because human labor is not increasing in the global workforce.”

Many of history’s biggest technological shifts have coincided with economic downturns. AI may be next. The only question left is: Will 2025 be the year AI not only augments jobs but begins to replace them?

Gradually, then suddenly.

Gary Grossman is EVP of technology practice at Edelman and global lead of the Edelman AI Center of Excellence.
