This story originally appeared in The Algorithm, our weekly newsletter on AI. To get stories like this in your inbox first, sign up here.

This week’s edition of The Algorithm is brought to you not by your usual host, James O’Donnell, but by Eileen Guo, an investigative reporter at MIT Technology Review.

A few weeks ago, when I was at the digital rights conference RightsCon in Taiwan, I watched in real time as civil society organizations from around the world, including the US, grappled with the loss of one of the biggest funders of global digital rights work: the United States government.

As I wrote in my dispatch, the Trump administration’s shocking, rapid gutting of the US government (and its push into what some prominent political scientists call “competitive authoritarianism”) also affects the operations and policies of American tech companies—many of which, of course, have users far beyond US borders. People at RightsCon said they were already seeing changes in these companies’ willingness to engage with and invest in communities that have smaller user bases—especially non-English-speaking ones.

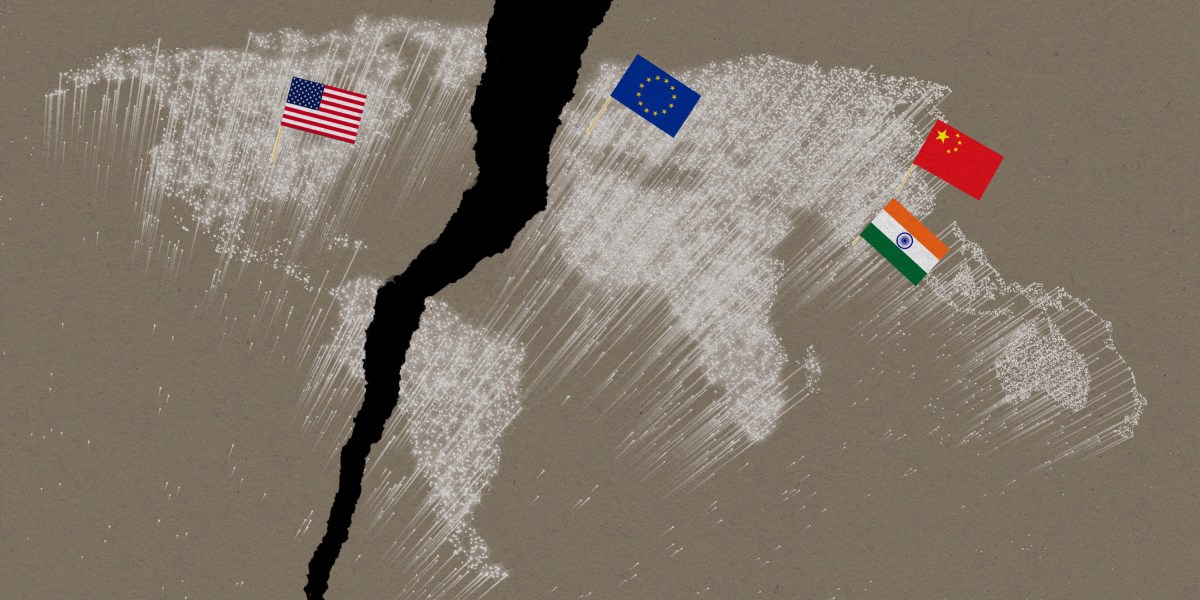

As a result, some policymakers and business leaders—in Europe, in particular—are reconsidering their reliance on US-based tech and asking whether they can quickly spin up better, homegrown alternatives. This is particularly true for AI.

One of the clearest examples of this is in social media. Yasmin Curzi, a Brazilian law professor who researches domestic tech policy, put it to me this way: “Since Trump’s second administration, we cannot count on [American social media platforms] to do even the bare minimum anymore.”

Social media content moderation systems—which already use automation and are also experimenting with deploying large language models to flag problematic posts—are failing to detect gender-based violence in places as varied as India, South Africa, and Brazil. If platforms begin to rely even more on LLMs for content moderation, this problem will likely get worse, says Marlena Wisniak, a human rights lawyer who focuses on AI governance at the European Center for Not-for-Profit Law. “The LLMs are moderated poorly, and the poorly moderated LLMs are then also used to moderate other content,” she tells me. “It’s so circular, and the errors just keep repeating and amplifying.”

Part of the problem is that the systems are trained primarily on data from the English-speaking world (and American English at that), and as a result, they perform less well with local languages and context.

Even multilingual language models, which are meant to process multiple languages at once, still perform poorly with non-Western languages. For instance, one evaluation of ChatGPT’s response to health-care queries found that results were far worse in Chinese and Hindi, which are less well represented in North American data sets, than in English and Spanish.

For many at RightsCon, this validates their calls for more community-driven approaches to AI—both in and out of the social media context. These could include small language models, chatbots, and data sets designed for particular uses and specific to particular languages and cultural contexts. These systems could be trained to recognize slang usages and slurs, interpret words or phrases written in a mix of languages and even alphabets, and identify “reclaimed language” (onetime slurs that the targeted group has decided to embrace). All of these tend to be missed or miscategorized by language models and automated systems trained primarily on Anglo-American English. The founder of the startup Shhor AI, for example, hosted a panel at RightsCon and talked about its new content moderation API focused on Indian vernacular languages.

Two developments are working in favor of these approaches. First, recent research and development on language models has reached the point where data set size is no longer a predictor of performance, meaning that more people can create them. In fact, “smaller language models might be worthy competitors of multilingual language models in specific, low-resource languages,” says Aliya Bhatia, a visiting fellow at the Center for Democracy & Technology who researches automated content moderation.

Then there’s the global landscape. AI competition was a major theme of the recent Paris AI Summit, which took place the week before RightsCon. Since then, there’s been a steady stream of announcements about “sovereign AI” initiatives that aim to give a country (or organization) full control over all aspects of AI development.

AI sovereignty is just one part of the desire for broader “tech sovereignty” that’s also been gaining steam, growing out of more sweeping concerns about the privacy and security of data transferred to the United States. The European Union appointed its first commissioner for tech sovereignty, security, and democracy last November and has been working on plans for a “Euro Stack,” or “digital public infrastructure.” The definition of this is still somewhat fluid, but it could include the energy, water, chips, cloud services, software, data, and AI needed to support modern society and future innovation. All these are largely provided by US tech companies today. Europe’s efforts are partly modeled after “India Stack,” that country’s digital infrastructure that includes the biometric identity system Aadhaar. Just last week, Dutch lawmakers passed several motions to untangle the country from US tech providers.

This all fits in with what Andy Yen, CEO of the Switzerland-based digital privacy company Proton, told me at RightsCon. Trump, he said, is “causing Europe to move faster … to come to the realization that Europe needs to regain its tech sovereignty.” This is partly because of the leverage that the president has over tech CEOs, Yen said, and also simply “because tech is where the future economic growth of any country is.”

But just because governments get involved doesn’t mean that issues around inclusion in language models will go away. “I think there needs to be guardrails about what the role of the government here is. Where it gets tricky is if the government decides ‘These are the languages we want to advance’ or ‘These are the types of views we want represented in a data set,’” Bhatia says. “Fundamentally, the training data a model trains on is akin to the worldview it develops.”

It’s still too early to know what this will all look like, and how much of it will prove to be hype. But no matter what happens, this is a space we’ll be watching.

Deeper Learning

OpenAI has released its first research into how using ChatGPT affects people’s emotional well-being

OpenAI released two pieces of research last week that explore how ChatGPT affects people who engage with it on emotional issues, yielding some interesting results. Female study participants were slightly less likely to socialize with people than their male counterparts who used the chatbot for the same period of time, our reporter Rhiannon Williams writes. And people who used voice mode in a gender that was not their own reported higher levels of loneliness at the end of the experiment.

Why it matters: AI companies have raced to build chatbots that act not just as productivity tools but also as companions, romantic partners, friends, therapists, and more. Legally, it’s largely still a Wild West landscape. Some chatbots have instructed users to harm themselves, and others have offered sexually charged conversations as underage characters represented by deepfakes. More research into how people, especially children, are using these AI models is essential. OpenAI’s work is only a start. Read more from Rhiannon Williams.

Bits and Bytes

Opinion – Why handing over total control to AI agents would be a huge mistake

Companies like OpenAI and Butterfly Effect (the startup in China that made Manus) are racing to build AI agents that can do tasks for you by taking over your computer. In this op-ed, some top AI researchers detail the potential missteps that could occur if we cede more control of our digital lives to decision-making AIs.

A provocative experiment pitted AI against federal judges

Research has long shown that judges are influenced by many factors, like how sympathetic they are to defendants, or when their last meal was. Despite AI models’ inherent problems with biases and hallucinations, researchers at the University of Chicago Law School wondered whether the models could present more objective opinions. They can, but that doesn’t make them better judges, the researchers say. (The Washington Post)

Elon Musk’s “truth-seeking” chatbot often disagrees with him

Musk promised his company xAI’s model Grok would be an antidote to the “woke” and politically influenced chatbots that he says dominate today. But in tests done by The Washington Post, the model contradicted many of Musk’s claims about specific issues. (The Washington Post)

A Disney employee downloaded an AI tool that contained malware, and it ruined his life

MIT Technology Review has long predicted that the proliferation of AI will enable scammers to up their productivity as never before. One victim of this trend is Matthew Van Andel, a Disney employee who downloaded malware disguised as an AI tool. It led to his firing. (The Wall Street Journal)

The facial recognition company Clearview attempted to buy Social Security numbers and mugshots for its database

Three years ago, Clearview was fined for scraping images of individuals’ faces from the internet. Now, court records reveal that the company was attempting to buy 690 million arrest records and 390 million arrest photos in the US—records that also contained Social Security numbers, emails, and physical addresses. The deal fell through, but Clearview nonetheless holds one of the largest databases of facial images, and its tools are used by police and federal agencies. (404 Media)