
There’s a new king on the throne of AI coding models: Today, Google’s DeepMind AI research unit unveiled Gemini 2.5 Pro “I/O” edition, a new version of its hit Gemini 2.5 Pro multimodal large language model (LLM) released back in March that DeepMind CEO Demis Hassabis said on X is “the best coding model we’ve ever built!”

Indeed, the initial benchmarks released by the company indicate Google has taken the lead — for the first time since the generative AI race began in earnest with the late 2022 launch of ChatGPT — above all other models on at least one important coding benchmark.

The new version, labeled “gemini-2.5-pro-preview-05-06,” replaces the previous 03-25 release and is now available for indie developers in Google AI Studio and for enterprises in the Vertex AI cloud platform, as well as to individual users in the Gemini app. Google’s blog post said it also powers the Gemini mobile app’s Canvas and other features.

The new version powers feature development in apps like Gemini 95, where the model helps match visual styles across components automatically. It also enables workflows like converting YouTube videos into full-featured learning applications and crafting highly styled components—such as responsive video players or animated dictation UIs—with little to no manual CSS editing.

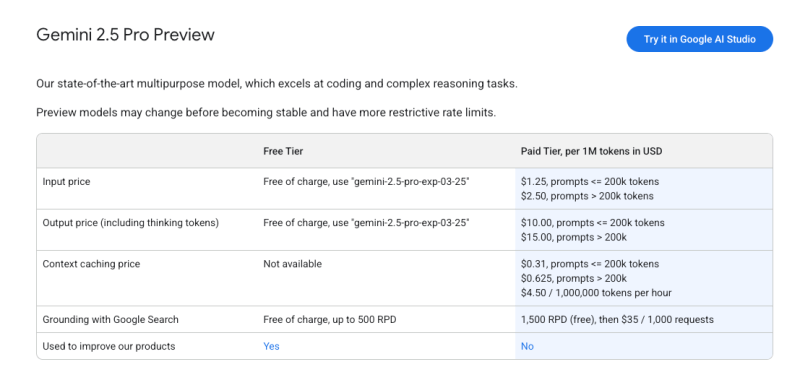

It’s a proprietary model, meaning enterprises will have to pay Google to use it and can access it only through Google’s web services. However, the update doesn’t alter pricing or rate limits: current users of Gemini 2.5 Pro will be automatically routed to the updated model, which costs $1.25/$10 per million tokens in/out (for context lengths of up to 200,000 tokens), compared to Claude 3.7 Sonnet’s $3/$15.
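To put those list prices in concrete terms, here is a rough Python sketch that estimates the cost of a single request under each rate card. The per-million-token prices are the ones quoted above; the dictionary keys are just labels, and the workload size (50,000 tokens in, 5,000 tokens out) is a hypothetical example, not a figure from Google or Anthropic.

```python
# Per-million-token list prices (USD) as quoted in the article.
# The Gemini rate is the one cited for contexts of 200,000 tokens.
# Keys are illustrative labels, not necessarily official API model IDs.
PRICES = {
    "gemini-2.5-pro-preview-05-06": {"input": 1.25, "output": 10.00},
    "claude-3.7-sonnet": {"input": 3.00, "output": 15.00},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one request at list prices."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: a 50,000-token prompt and a 5,000-token reply.
    for name in PRICES:
        print(f"{name}: ${request_cost(name, 50_000, 5_000):.4f}")
```

Under that assumed workload, the Gemini request comes out at about half the cost of the Claude one. The ratio shifts with the mix of input and output tokens, since the input price gap is wider than the output price gap.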

The company frames this move — ahead of Google’s annual I/O (input/output) developer conference later this month in Mountain View and online, May 20-21 — as a response to strong community feedback around Gemini’s practical utility in real-world code generation and interface design.

Logan Kilpatrick, Senior Product Manager for Gemini API and Google AI Studio, confirmed in a developer blog post that the update also addresses key developer feedback around function calling, with improvements in error reduction and trigger reliability.

Top scores from human raters at generating web apps

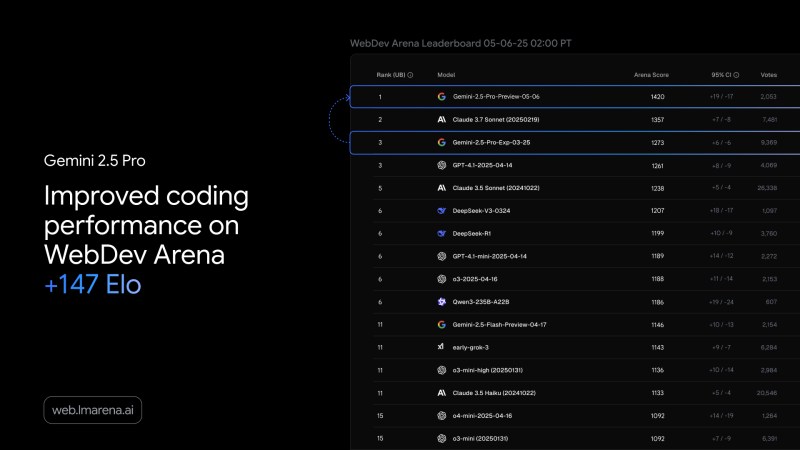

On WebDev Arena Leaderboard, a third-party metric that ranks models by human preference based on their ability to generate visually appealing and functional web apps, Gemini 2.5 Pro Preview (05-06) has now overtaken Anthropic’s Claude 3.7 Sonnet at the number one spot.

The new version scored 1499.95 on the leaderboard, placing it well ahead of Claude 3.7 Sonnet’s 1377.10. The previous Gemini 2.5 Pro (03-25) model held third place with a score of 1278.96, meaning the I/O edition represents a jump of roughly 221 points.
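For a sense of what those gaps could mean head to head, the sketch below converts a score difference into an expected preference rate. It assumes the leaderboard scores behave like conventional Elo ratings (base 10, scale 400); that is an assumption made for illustration, not something the article or Google’s announcement confirms.

```python
# Leaderboard scores as reported in the article.
GEMINI_05_06 = 1499.95
CLAUDE_37_SONNET = 1377.10
GEMINI_03_25 = 1278.96


def expected_preference(score_a: float, score_b: float, scale: float = 400.0) -> float:
    """Expected share of head-to-head votes for A over B.

    Uses the standard Elo logistic formula. Treating the arena
    scores as Elo ratings on a 400-point scale is an assumption.
    """
    return 1.0 / (1.0 + 10 ** ((score_b - score_a) / scale))


if __name__ == "__main__":
    gap = GEMINI_05_06 - GEMINI_03_25
    print(f"Gain over the 03-25 release: {gap:.2f} points")
    print(f"vs Claude 3.7 Sonnet: {expected_preference(GEMINI_05_06, CLAUDE_37_SONNET):.1%}")
    print(f"vs Gemini 2.5 Pro 03-25: {expected_preference(GEMINI_05_06, GEMINI_03_25):.1%}")
```

On that reading, a lead of about 123 points corresponds to human raters preferring the new model’s output in roughly two out of three direct comparisons against Claude 3.7 Sonnet.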

As noted by the AI power user “Lisan al Gaib” on X, not even OpenAI’s o3 was able to displace Claude 3.7 Sonnet, highlighting the significance of Gemini’s advancement.

Gemini’s performance boost reflects improved reliability, aesthetics, and usability in its outputs.

Already winning rave reviews

Several developers and platform leaders have highlighted the model’s improved reliability and application in production scenarios.

Cognition’s Silas Alberti noted that Gemini 2.5 Pro was the first model to successfully complete a complex refactoring of a backend routing system, demonstrating the kind of decision-making one would expect from a senior developer.

Michael Truell, CEO of the AI coding tool Cursor, said internal testing shows a marked decrease in tool call failures, a previously noted issue. He expects users to find the latest version significantly more effective in hands-on environments. Cursor has already integrated Gemini 2.5 Pro into its own code agent, reflecting how developers are using the model as a key component in more intelligent developer workflows.

Michele Catasta, President of Replit, described Gemini 2.5 Pro as the best frontier model for balancing capability with latency. His comments suggest that Replit is considering integration of the model into its own tools, especially for tasks where high responsiveness and reliability are crucial.

Similarly, AI educator and BlueShell private AI chatbot founder Paul Couvert noted on X that “Its code and UI generation capabilities are impressive.”

And as Pietro Schirano, CEO of the AI art tool EverArt, noted on X, the new Gemini 2.5 Pro I/O edition was able to generate an interactive simulation of the “1 gorilla vs. 100 men” meme that’s been circulating on social media lately from a single prompt.

Showing off another interactive Tetris-style puzzle game with working sound effects reportedly created in less than a minute, X user “RameshR” (@rezmeram) wrote that “the casual game industry is dead!!”

These endorsements add weight to DeepMind’s claims of practical improvements and may encourage broader adoption across developer platforms.

Full apps and programs from one text prompt

One of the standout features of the update is its ability to build full, interactive web apps or simulations from a single prompt.

This aligns with DeepMind’s vision of simplifying the prototyping and development process.

Demonstrations within the Gemini app showcase how users can transform visual patterns or thematic prompts into usable code, lowering the barrier to entry for design-oriented developers and teams experimenting with new ideas.

Although the architecture and under-the-hood changes of Gemini 2.5 Pro have not been detailed publicly, the emphasis remains on enabling faster, more intuitive development experiences.

By leaning into its strengths in code generation and multimodal inputs, Gemini 2.5 Pro is positioned less as a research novelty and more as a practical tool for real-world coding challenges. The early release reflects a clear intention from Google DeepMind to meet developer demand and maintain momentum ahead of its major conference announcements.
