Thailand’s PTT Exploration and Production Public Co. Ltd. (PTTEP) said it has acquired a 50 percent participating interest in Block A-18 of the Malaysia–Thailand Joint Development Area (MTJDA) for $450 million.

The sellers, Hess (Bahamas) Limited and Hess Asia Holdings Inc., are subsidiaries of Chevron following the Chevron-Hess merger.

The acquisition enhances PTTEP’s gas production volume and petroleum reserves and increases its investment in the MTJDA, where it already holds a 50 percent participating interest in Block B-17-01, the company said in a news release.

Block A-18 currently produces 600 million standard cubic feet of natural gas per day (MMscfd), which is distributed equally to Thailand and Malaysia, the company said, adding that the 300 MMscfd supplied to Thailand accounts for six percent of the country’s domestic gas demand.

PTTEP said it plans to develop additional production wells and wellhead platforms, as well as gas pipelines, to support a consistent and reliable gas supply.

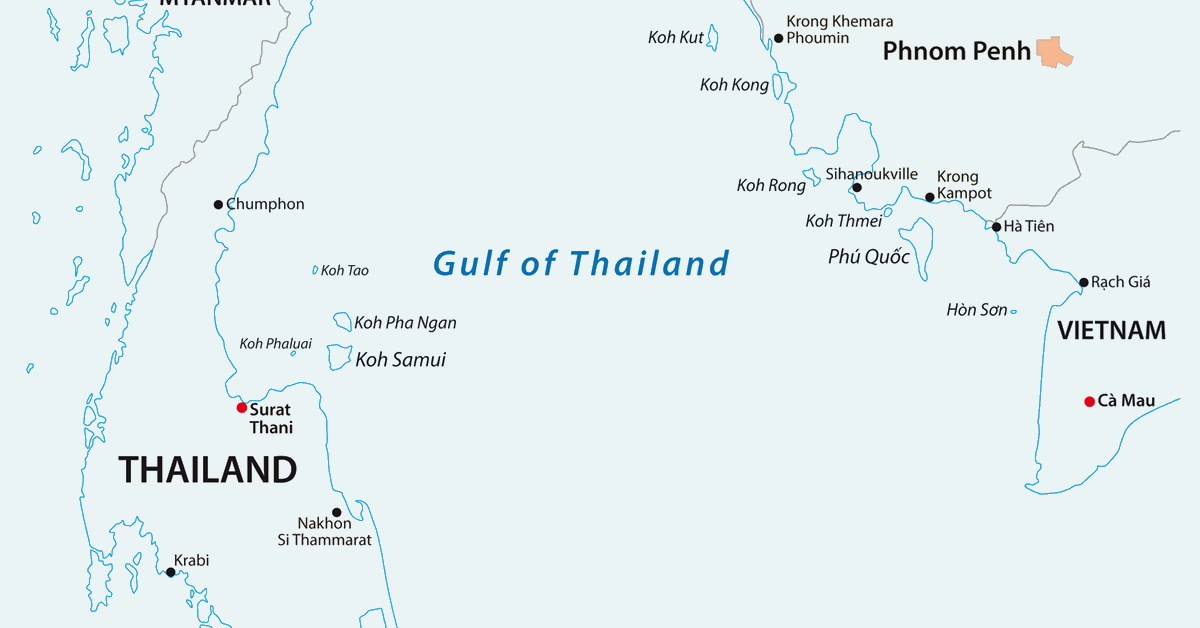

The MTJDA is located in the southern part of the Gulf of Thailand. Covering an area of approximately 2,800 square miles (7,250 square kilometers), it is a key source of natural gas and condensates for Thailand and Malaysia, according to the release.

Block A-18, which includes the Cakerawala, Bumi, Suriya, Bulan, and Bulan South fields, started production in 2005, while Block B-17-01 began production in 2010. Block B-17-01 includes the Muda, Tapi, Tanjung, Amarit, Jengka, Melati, and Andalas fields and currently produces approximately 300 MMscfd of natural gas for Thailand and Malaysia, the release said.

“PTTEP is pleased to further expand our operations in the MTJDA, which is recognized for its petroleum potential and strategic significance to Thailand’s energy security. The acquisition also contributes to the company’s growth. Apart from the existing producing fields, Block A-18 includes several discovered gas fields awaiting development to unlock their full potential. Participation in Block A-18 also fosters operational synergy with Block B-17-01, enhancing efficiency to ensure continuous and accelerated energy supply for both countries,” PTTEP CEO Montri Rawanchaikul said.

First Half Updates

In 2025, PTTEP, in partnership with Eni Algeria Exploration B.V. as the operator, was awarded the Reggane II block in Algeria. The company, which holds a 34 percent interest in the project, signed a production sharing contract (PSC) for the asset, with the contract effective upon the official announcement by the Algerian government.

Reggane II, located near the Algeria Touat Project, “presents a strategic opportunity to enhance development and resource management synergies, coupled with discovered gas and exploration potential in the area,” PTTEP said in a separate statement.

In the Middle East, PTTEP has also signed an agreement to extend the Exploration and Production Sharing Agreement for Block 53 through 2050. The company has also been awarded the production concession agreement for the Abu Dhabi Offshore 2 project by a state agency of the Emirate of Abu Dhabi, UAE, following a successful gas discovery. This marks a step toward a final investment decision (FID), the company said.

For the first half of the year, PTTEP reported total revenue of THB 148.53 billion ($4.43 billion). Growth came mainly from the increase in G1/61 production since March 2024, higher crude sales from the Sabah Block K project, and the increased participating interest in the Sinphuhorm project, the company said.
