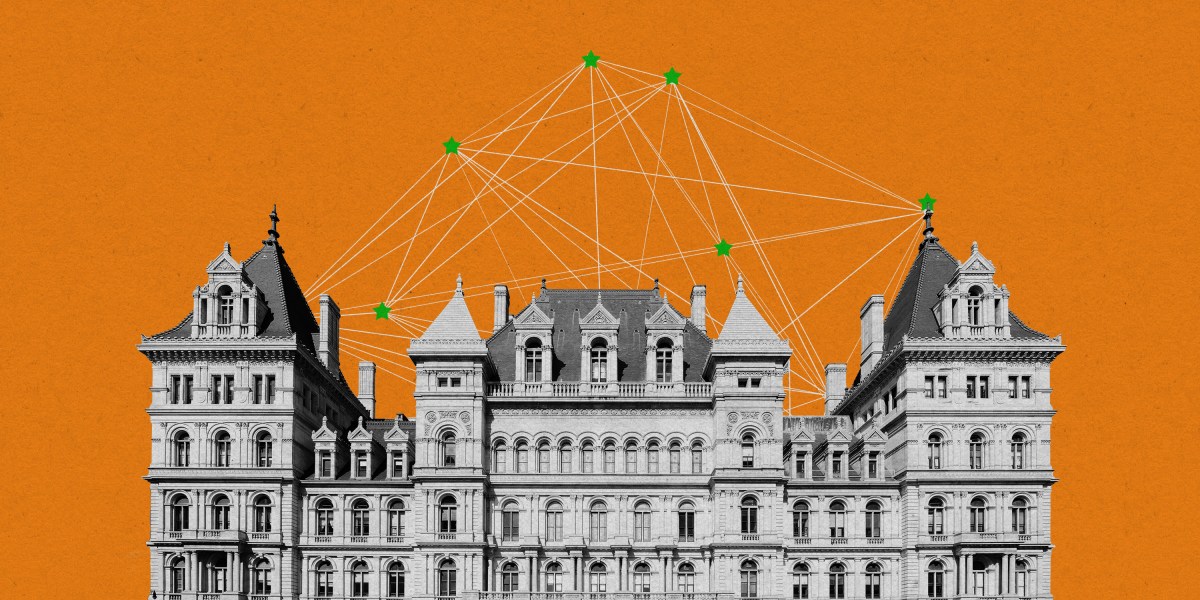

The first Democrat in New York history with a computer science background wants to revive some of the ideas behind the failed California AI safety bill, SB 1047, with a new version in his state that would regulate the most advanced AI models. It’s called the RAISE Act, an acronym for “Responsible AI Safety and Education.”

Assembly member Alex Bores hopes his bill, currently an unpublished draft—subject to change—that MIT Technology Review has seen, will address many of the concerns that blocked SB 1047 from passing into law.

SB 1047 was, at first, thought to be a fairly modest bill that would pass without much fanfare. In fact, it flew through the California statehouse with huge margins and received significant public support.

However, before it even landed on Governor Gavin Newsom’s desk for signature in September, it sparked an intense national fight. Google, Meta, and OpenAI came out against the bill, alongside top congressional Democrats like Nancy Pelosi and Zoe Lofgren. Even Hollywood celebrities got involved, with Jane Fonda and Mark Hamill expressing support for the bill.

Ultimately, Newsom vetoed SB 1047, effectively killing regulation of so-called frontier AI models not just in California but, with the lack of laws on the national level, anywhere in the US, where the most powerful systems are developed.

Now Bores hopes to revive the battle. The main provisions in the RAISE Act include requiring AI companies to develop safety plans for the development and deployment of their models.

The bill also provides protections for whistleblowers at AI companies. It forbids retaliation against an employee who shares information about an AI model in the belief that it may cause “critical harm”; such whistleblowers can report the information to the New York attorney general. One way the bill defines critical harm is the use of an AI model to create a chemical, biological, radiological, or nuclear weapon that results in the death or serious injury of 100 or more people.

Alternatively, a critical harm could be a use of the AI model that results in 100 or more deaths or at least $1 billion in damages, in an act with limited human oversight that, if committed by a human, would constitute a crime requiring intent, recklessness, or gross negligence.

The safety plans would ensure that a company has cybersecurity protections in place to prevent unauthorized access to a model. The plan would also require testing of models to assess risks before and after training, as well as detailed descriptions of procedures to assess the risks associated with post-training modifications. For example, some current AI systems have safeguards that can be easily and cheaply removed by a malicious actor. A safety plan would have to address how the company plans to mitigate these actions.

The safety plans would then be audited by a third party, like a nonprofit with technical expertise that currently tests AI models. And if violations are found, the bill empowers the attorney general of New York to issue fines and, if necessary, go to the courts to determine whether to halt unsafe development.

A different flavor of bill

The safety plans and external audits were elements of SB 1047, but Bores aims to differentiate his bill from the California one. “We focused a lot on what the feedback was for 1047,” he says. “Parts of the criticism were in good faith and could make improvements. And so we’ve made a lot of changes.”

The RAISE Act diverges from SB 1047 in a few ways. For one, SB 1047 would have created the Board of Frontier Models, tasked with approving updates to the definitions and regulations around these AI models, but the proposed act would not create a new government body. The New York bill also doesn’t create a public cloud computing cluster, which SB 1047 would have done. The cluster was intended to support projects to develop AI for the public good.

The RAISE Act doesn’t have SB 1047’s requirement that companies be able to halt all operations of their model, a capability sometimes referred to as a “kill switch.” Some critics alleged that the shutdown provision of SB 1047 would harm open-source models, since developers can’t shut down a model someone else may now possess (even though SB 1047 had an exemption for open-source models).

The RAISE Act avoids the fight entirely. SB 1047 referred to an “advanced persistent threat” associated with bad actors trying to steal information during model training. The RAISE Act does away with that definition, sticking to addressing critical harms from covered models.

Focusing on the wrong issues?

Bores’ bill is very specific with its definitions in an effort to clearly delineate what this bill is and isn’t about. The RAISE Act doesn’t address some of the current risks from AI models, like bias, discrimination, and job displacement. Like SB 1047, it is very focused on catastrophic risks from frontier AI models.

Some in the AI community believe this focus is misguided. “We’re broadly supportive of any efforts to hold large models accountable,” says Kate Brennan, associate director of the AI Now Institute, which conducts AI policy research.

“But defining critical harms only in terms of the most catastrophic harms from the most advanced models overlooks the material risks that AI poses, whether it’s workers subject to surveillance mechanisms, prone to workplace injuries because of algorithmically managed speed rates, climate impacts of large-scale AI systems, data centers exerting massive pressure on local power grids, or data center construction sidestepping key environmental protections,” she says.

Bores has worked on other bills addressing current harms posed by AI systems, like discrimination and lack of transparency. That said, Bores is clear that this new bill is aimed at mitigating catastrophic risks from more advanced models. “We’re not talking about any model that exists right now,” he says. “We are talking about truly frontier models, those on the edge of what we can build and what we understand, and there is risk in that.”

The bill would cover only models that pass a certain threshold for how many computations their training required, typically measured in FLOPs (floating-point operations). In the bill, a covered model is one that requires more than 10^26 FLOPs in its training and costs over $100 million. For reference, GPT-4 is estimated to have required 10^25 FLOPs.
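To make the threshold concrete, here is a minimal Python sketch of the two-part test as the draft describes it: more than 10^26 training FLOPs and more than $100 million in cost. The names `TrainingRun` and `is_covered_model` are hypothetical, the dollar figures in the examples are invented for illustration, and the draft itself may define compute and cost differently than this simple comparison assumes.

```python
from dataclasses import dataclass

# Thresholds as reported for the unpublished draft; both are subject to change.
FLOP_THRESHOLD = 1e26               # training compute, in floating-point operations
COST_THRESHOLD_USD = 100_000_000    # training cost, in US dollars


@dataclass
class TrainingRun:
    """Hypothetical record of a model's training run (illustrative only)."""
    name: str
    training_flops: float
    training_cost_usd: float


def is_covered_model(run: TrainingRun) -> bool:
    """Return True only if the run exceeds BOTH the compute and cost thresholds."""
    return (
        run.training_flops > FLOP_THRESHOLD
        and run.training_cost_usd > COST_THRESHOLD_USD
    )


# Compute figure is the GPT-4 estimate cited in the article; the cost is a made-up placeholder.
gpt4_like = TrainingRun("gpt4-like-estimate", 1e25, 150_000_000)
# Entirely hypothetical future run that exceeds both thresholds.
future_run = TrainingRun("hypothetical-frontier-run", 2e26, 500_000_000)

print(is_covered_model(gpt4_like))   # False: compute is below the 1e26 threshold
print(is_covered_model(future_run))  # True: exceeds both thresholds
```

Under this reading, a model trained at GPT-4's estimated scale falls about a factor of 10 short of the compute threshold, which is consistent with Bores' statement that the bill does not target any model that exists right now.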

This approach may draw scrutiny from industry forces. “While we can’t comment specifically on legislation that isn’t public yet, we believe effective regulation should focus on specific applications rather than broad model categories,” says a spokesperson at Hugging Face, a company that opposed SB 1047.

Early days

The bill is in its nascent stages, so it’s subject to many edits in the future, and no opposition has yet formed. There may already be lessons to be learned from the battle over SB 1047, however. “There’s significant disagreement in the space, but I think debate around future legislation would benefit from more clarity around the severity, the likelihood, and the imminence of harms,” says Scott Kohler, a scholar at the Carnegie Endowment for International Peace, who tracked the development of SB 1047.

When asked about the idea of mandated safety plans for AI companies, assembly member Edward Ra, a Republican who hasn’t yet seen a draft of the new bill, said: “I don’t have any general problem with the idea of doing that. We expect businesses to be good corporate citizens, but sometimes you do have to put some of that into writing.”

Ra and Bores co-chair the New York Future Caucus, which aims to bring together lawmakers 45 and under to tackle pressing issues that affect future generations.

Scott Wiener, a California state senator who sponsored SB 1047, is happy to see that his initial bill, even though it failed, is inspiring further legislation and discourse. “The bill triggered a conversation about whether we should just trust the AI labs to make good decisions, which some will, but we know from past experience, some won’t make good decisions, and that’s why a level of basic regulation for incredibly powerful technology is important,” he says.

He has his own plans to reignite the fight: “We’re not done in California. There will be continued work in California, including for next year. I’m optimistic that California is gonna be able to get some good things done.”

And some believe the RAISE Act will highlight a notable contradiction: Many of the industry’s players insist that they want regulation, but when any regulation is proposed, they fight against it. “SB 1047 became a referendum on whether AI should be regulated at all,” says Brennan. “There are a lot of things we saw with 1047 that we can expect to see replay in New York if this bill is introduced. We should be prepared to see a massive lobbying reaction that industry is going to bring to even the lightest-touch regulation.”

Wiener and Bores both wish to see regulation at a national level, but in the absence of such legislation, they’ve taken the battle upon themselves. At first it may seem odd for states to take up such important reforms, but California houses the headquarters of the top AI companies, and New York, which has the third-largest state economy in the US, is home to offices for OpenAI and other AI companies. The two states may be well positioned to lead the conversation around regulation.

“There is uncertainty at the direction of federal policy with the transition upcoming and around the role of Congress,” says Kohler. “It is likely that states will continue to step up in this area.”

Wiener’s advice for New York legislators entering the arena of AI regulation? “Buckle up and get ready.”