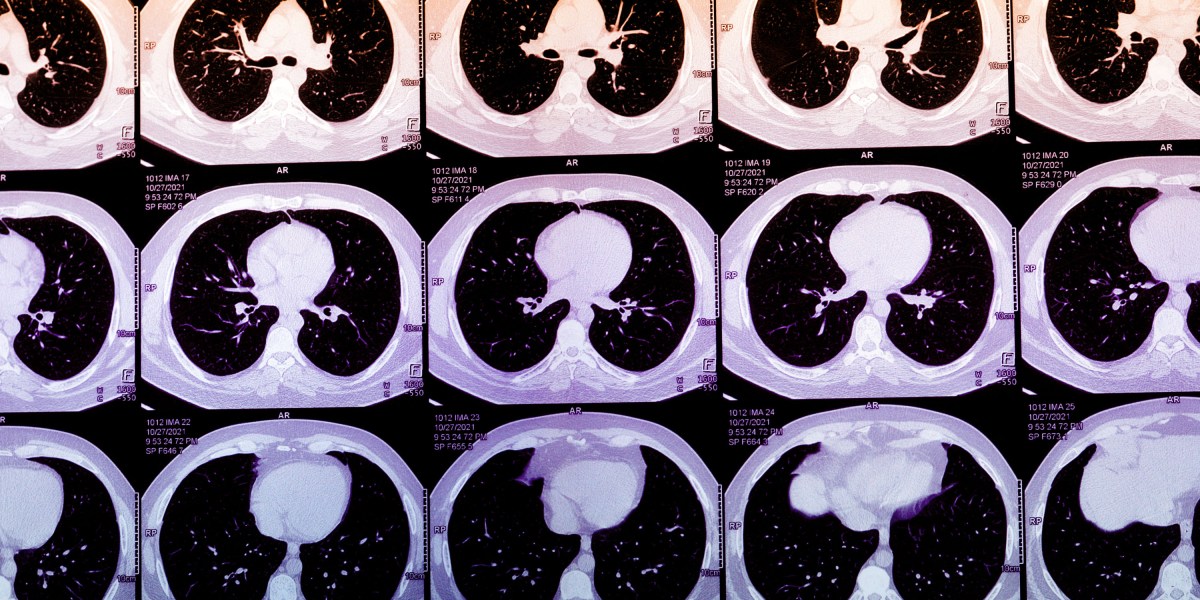

For all the modern marvels of cardiology, we struggle to predict who will have a heart attack. Many people never get screened at all. Now, startups like Bunkerhill Health, Nanox.AI, and HeartLung Technologies are applying AI algorithms to screen millions of CT scans for early signs of heart disease. This technology could be a breakthrough for public health, applying an old tool to uncover patients whose high risk for a heart attack is hiding in plain sight. But it remains unproven at scale while raising thorny questions about implementation and even how we define disease.

Last year, an estimated 20 million Americans had chest CT scans done, whether after an event like a car accident or to screen for lung cancer. Frequently, these scans show evidence of coronary artery calcium (CAC), a marker for heart attack risk, that is buried or not mentioned in a radiology report focusing on ruling out bony injuries, life-threatening internal trauma, or cancer.

Dedicated testing for CAC remains an underutilized method of predicting heart attack risk. Over decades, plaque in heart arteries moves through its own life cycle, hardening from lipid-rich residue into calcium. Heart attacks themselves typically occur when younger, lipid-rich plaque unpredictably ruptures, kicking off a clotting cascade of inflammation that ultimately blocks the heart’s blood supply. Calcified plaque is generally stable, but finding CAC suggests that younger, more rupture-prone plaque is likely present too.

Coronary artery calcium can often be spotted on chest CTs, and its concentration can be subjectively described. Normally, quantifying a person’s CAC score involves obtaining a heart-specific CT scan. Algorithms that calculate CAC scores from routine chest CTs, however, could massively expand access to this metric. In practice, these algorithms could then be deployed to alert patients and their doctors about abnormally high scores, encouraging them to seek further care. Today, the footprint of the startups offering AI-derived CAC scores is not large, but it is growing quickly. As their use grows, these algorithms may identify high-risk patients who are traditionally missed or who are on the margins of care.

Historically, CAC scans were believed to have marginal benefit and were marketed to the worried well. Even today, most insurers won’t cover them. Attitudes, though, may be shifting. More expert groups are endorsing CAC scores as a way to refine cardiovascular risk estimates and persuade skeptical patients to start taking statins.

The promise of AI-derived CAC scores is part of a broader trend toward mining troves of medical data to spot otherwise undetected disease. But while it seems promising, the practice raises plenty of questions. For one, CAC scores haven’t proved useful as a blunt instrument for universal screening. A 2022 Danish study evaluating a population-based program, for example, showed no benefit in mortality rates for patients who had undergone CAC screening tests. If AI delivered this information automatically, would the calculus really shift?

And with widespread adoption, abnormal CAC scores will become common. Who follows up on these findings? “Many health systems aren’t yet set up to act on incidental calcium findings at scale,” says Nishith Khandwala, the cofounder of Bunkerhill Health. Without a standard procedure for doing so, he says, “you risk creating more work than value.”

There’s also the question of whether these AI-generated scores would actually improve patient care. For a symptomatic patient, a CAC score of zero may offer false reassurance. For the asymptomatic patient with a high CAC score, the next steps remain uncertain. Beyond statins, it isn’t clear if these patients would benefit from starting costly cholesterol-lowering drugs such as Repatha or other PCSK9 inhibitors. A high score may also encourage some patients to pursue unnecessary but costly downstream procedures that could even end up doing harm. Currently, AI-derived CAC scoring is not reimbursed as a separate service by Medicare or most insurers. The business case for this technology today, effectively, lies in these potentially perverse incentives.

At a fundamental level, this approach could actually change how we define disease. Adam Rodman, a hospitalist and AI expert at Beth Israel Deaconess Medical Center in Boston, has observed that AI-derived CAC scores share similarities with the “incidentaloma,” a term coined in the 1980s to describe unexpected findings on CT scans. In both cases, the normal pattern of diagnosis, in which doctors and patients deliberately embark on testing to figure out what’s causing a specific problem, is fundamentally disrupted. But, as Rodman notes, incidentalomas were still found by humans reviewing the scans.

Now, he says, we are entering an era of “machine-based nosology,” where algorithms define diseases on their own terms. As machines make more diagnoses, they may catch things we miss. But Rodman and I began to wonder if a two-tiered diagnostic future may emerge, where “haves” pay for brand-name algorithms while “have-nots” settle for lesser alternatives.

For patients who have no risk factors or are detached from regular medical care, an AI-derived CAC score could potentially catch problems earlier and rewrite the script. But how these scores reach people, what is done about them, and whether they can ultimately improve patient outcomes at scale remain open questions. For now—holding the pen as they toggle between patients and algorithmic outputs—clinicians still matter.

Vishal Khetpal is a fellow in cardiovascular disease. The views expressed in this article do not represent those of his employers.