In recent years, Parquet has become a standard format for data storage in Big Data ecosystems. Its column-oriented format offers several advantages:

- Faster query execution when only a subset of columns is being processed

- Quick calculation of statistics across all data

- Reduced storage volume thanks to efficient compression

When combined with storage frameworks like Delta Lake or Apache Iceberg, it seamlessly integrates with query engines (e.g., Trino) and data warehouse compute clusters (e.g., Snowflake, BigQuery). In this article, we dissect the content of a Parquet file, mainly with standard Python tools, to better understand its structure and how it contributes to this performance.

Writing Parquet file(s)

To produce Parquet files, we use PyArrow, a Python binding for Apache Arrow that stores dataframes in memory in columnar format. PyArrow allows fine-grained parameter tuning when writing the file. This makes PyArrow ideal for Parquet manipulation (one can also simply use Pandas).

# generator.py

import pyarrow as pa

import pyarrow.parquet as pq

from faker import Faker

fake = Faker()

Faker.seed(12345)

num_records = 100

# Generate fake data

names = [fake.name() for _ in range(num_records)]

addresses = [fake.address().replace("\n", ", ") for _ in range(num_records)]

birth_dates = [

fake.date_of_birth(minimum_age=67, maximum_age=75) for _ in range(num_records)

]

cities = [addr.split(", ")[1] for addr in addresses]

birth_years = [date.year for date in birth_dates]

# Cast the data to the Arrow format

name_array = pa.array(names, type=pa.string())

address_array = pa.array(addresses, type=pa.string())

birth_date_array = pa.array(birth_dates, type=pa.date32())

city_array = pa.array(cities, type=pa.string())

birth_year_array = pa.array(birth_years, type=pa.int32())

# Create schema with non-nullable fields

schema = pa.schema(

[

pa.field("name", pa.string(), nullable=False),

pa.field("address", pa.string(), nullable=False),

pa.field("date_of_birth", pa.date32(), nullable=False),

pa.field("city", pa.string(), nullable=False),

pa.field("birth_year", pa.int32(), nullable=False),

]

)

table = pa.Table.from_arrays(

[name_array, address_array, birth_date_array, city_array, birth_year_array],

schema=schema,

)

print(table)

pyarrow.Table

name: string not null

address: string not null

date_of_birth: date32[day] not null

city: string not null

birth_year: int32 not null

----

name: [["Adam Bryan","Jacob Lee","Candice Martinez","Justin Thompson","Heather Rubio"]]

address: [["822 Jennifer Field Suite 507, Anthonyhaven, UT 98088","292 Garcia Mall, Lake Belindafurt, IN 69129","31738 Jonathan Mews Apt. 024, East Tammiestad, ND 45323","00716 Kristina Trail Suite 381, Howelltown, SC 64961","351 Christopher Expressway Suite 332, West Edward, CO 68607"]]

date_of_birth: [[1955-06-03,1950-06-24,1955-01-29,1957-02-18,1956-09-04]]

city: [["Anthonyhaven","Lake Belindafurt","East Tammiestad","Howelltown","West Edward"]]

birth_year: [[1955,1950,1955,1957,1956]]

The output clearly reflects column-oriented storage, unlike Pandas, which usually displays a traditional “row-wise” table.
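To make the contrast concrete, the following minimal sketch pivots a few row-wise records into a column-wise layout using plain Python. Arrow is not involved here, and the variable names are only illustrative; the values are taken from the output above.

```python
# Row-wise layout: one tuple per record (name, birth_year).
rows = [
    ("Adam Bryan", 1955),
    ("Jacob Lee", 1950),
    ("Candice Martinez", 1955),
]

# Column-wise layout: one list per field, as in the Arrow table above.
names, birth_years = (list(column) for column in zip(*rows))
columns = {"name": names, "birth_year": birth_years}

# Reading or aggregating a single column no longer touches the other fields.
print(columns["birth_year"])       # [1955, 1950, 1955]
print(min(columns["birth_year"]))  # 1950
```

With the column layout, a query that only needs birth_year can ignore the name list entirely, which is the first advantage listed in the introduction.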

How is a Parquet file stored?

Parquet files are generally stored in inexpensive object stores such as Amazon S3 or Google Cloud Storage (GCS), where data processing pipelines can easily access them. These files are usually organized according to a partitioning strategy that leverages the directory structure:

# generator.py

num_records = 100

# ...

# Writing the parquet files to disk

pq.write_to_dataset(

table,

root_path='dataset',

partition_cols=['birth_year', 'city']

)

If the birth_year and city columns are defined as partitioning keys, PyArrow creates the following tree structure in the dataset directory:

dataset/

├─ birth_year=1949/

├─ birth_year=1950/

│ ├─ city=Aaronbury/

│ │ ├─ 828d313a915a43559f3111ee8d8e6c1a-0.parquet

│ │ ├─ 828d313a915a43559f3111ee8d8e6c1a-0.parquet

│ │ ├─ …

│ ├─ city=Alicialand/

│ ├─ …

├─ birth_year=1951/

├─ ...

This strategy enables partition pruning: when a query filters on these columns, the engine can use the folder names to read only the necessary files. This is why the partitioning strategy is crucial for limiting latency, I/O, and compute resources when handling large volumes of data (as has been the case for decades with traditional relational databases).
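The idea behind pruning can be sketched in a few lines of plain Python. This is only an illustration of the principle, not how any particular engine implements it: the helper names (parse_partitions, prune) and the file listing are hypothetical.

```python
from urllib.parse import unquote


def parse_partitions(path):
    """Extract Hive-style key=value pairs from the directories of a path."""
    parts = {}
    for segment in path.split("/")[:-1]:  # skip the file name
        if "=" in segment:
            key, value = segment.split("=", 1)
            parts[key] = unquote(value)  # e.g. "Port%20Chase" -> "Port Chase"
    return parts


def prune(paths, **filters):
    """Keep only the paths whose partition values match every filter."""
    return [
        p for p in paths
        if all(parse_partitions(p).get(k) == str(v) for k, v in filters.items())
    ]


# Hypothetical file listing, mimicking the layout shown above.
paths = [
    "dataset/birth_year=1949/city=Port%20Chase/a-0.parquet",
    "dataset/birth_year=1949/city=Clarkemouth/b-0.parquet",
    "dataset/birth_year=1950/city=Aaronbury/c-0.parquet",
]

# Only the files under birth_year=1949 remain; no file had to be opened.
print(prune(paths, birth_year=1949))
```

The decision is made from the path alone, which is why a poorly chosen partitioning key (one that queries rarely filter on) brings no benefit.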

The pruning effect can be easily verified by counting the files opened by a Python script that filters the birth year:

# query.py

import duckdb

duckdb.sql(

"""

SELECT *

FROM read_parquet('dataset/*/*/*.parquet', hive_partitioning = true)

where birth_year = 1949

"""

).show()

> strace -e trace=open,openat,read -f python query.py 2>&1 | grep "dataset/.*.parquet"

[pid 37] openat(AT_FDCWD, "dataset/birth_year=1949/city=Box%201306/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 3

[pid 37] openat(AT_FDCWD, "dataset/birth_year=1949/city=Box%201306/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 3

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=Box%201306/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 4

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=Box%203487/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 5

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=Box%203487/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 3

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=Clarkemouth/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 4

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=Clarkemouth/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 5

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=DPO%20AP%2020198/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 3

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=DPO%20AP%2020198/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 4

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=East%20Morgan/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 5

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=East%20Morgan/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 3

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=FPO%20AA%2006122/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 4

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=FPO%20AA%2006122/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 5

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=New%20Michelleport/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 3

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=New%20Michelleport/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 4

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=North%20Danielchester/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 5

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=North%20Danielchester/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 3

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=Port%20Chase/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 4

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=Port%20Chase/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 5

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=Richardmouth/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 3

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=Richardmouth/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 4

[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=Robbinsshire/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 5

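[pid 39] openat(AT_FDCWD, "dataset/birth_year=1949/city=Robbinsshire/e1ad1666a2144fbc94892d4ac1234c64-0.parquet", O_RDONLY) = 3

The trace contains only 23 openat calls, covering 11 distinct files that all sit under birth_year=1949, out of the 100 files in the dataset.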

Reading a raw Parquet file

Let’s decode a raw Parquet file without specialized libraries. For simplicity, the dataset is dumped into a single file without compression or encoding.

# generator.py

# ...

pq.write_table(

table,

"dataset.parquet",

use_dictionary=False,

compression="NONE",

write_statistics=True,

column_encoding=None,

)

The first thing to know is that the binary file both starts and ends with 4 bytes whose ASCII representation is “PAR1”. If this is not the case, the file is corrupted.

# reader.py

with open("dataset.parquet", "rb") as file:

parquet_data = file.read()

assert parquet_data[:4] == b"PAR1", "Not a valid parquet file"

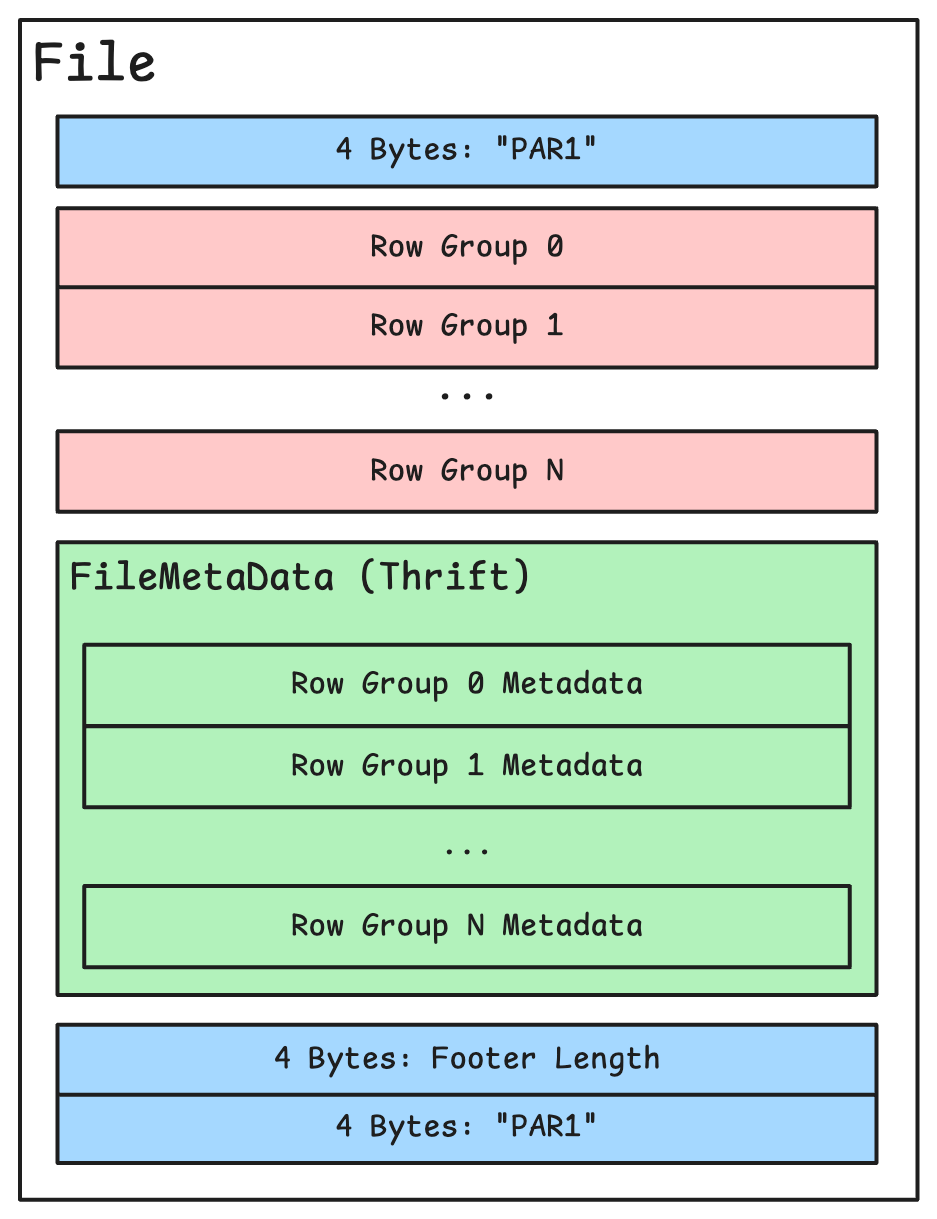

assert parquet_data[-4:] == b"PAR1", "File footer is corrupted"As indicated in the documentation, the file is divided into two parts: the “row groups” containing actual data, and the footer containing metadata (schema below).

The footer

The size of the footer is indicated in the 4 bytes preceding the end marker, as an unsigned integer written in “little endian” format (noted “<I” in the unpack function).
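The layout just described can be checked on a small synthetic buffer before touching a real file. The sketch below assumes nothing beyond the framing explained above; the split_footer helper and the placeholder payloads are made up for illustration and contain no real Parquet content.

```python
import struct

MAGIC = b"PAR1"


def split_footer(data):
    """Return (footer_bytes, footer_length) for a Parquet-like byte string."""
    if data[:4] != MAGIC or data[-4:] != MAGIC:
        raise ValueError("missing PAR1 magic number")
    # "<I" = little-endian unsigned 32-bit integer, stored just before the end marker
    (footer_length,) = struct.unpack("<I", data[-8:-4])
    footer_end = len(data) - 8
    return data[footer_end - footer_length : footer_end], footer_length


# Synthetic stand-in: the payloads are placeholders, not real Parquet content.
row_groups = b"\x00" * 20
footer = b"fake-thrift-metadata"
blob = MAGIC + row_groups + footer + struct.pack("<I", len(footer)) + MAGIC

footer_bytes, footer_length = split_footer(blob)
print(footer_length, footer_bytes)
```

Because the length sits at a fixed position relative to the end of the file, a reader can locate the metadata by fetching only the last bytes of the file, without scanning the row groups.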

# reader.py

import struct

# ...

footer_length = struct.unpack("<I", parquet_data[-8:-4])[0]

footer_data = parquet_data[-(footer_length + 8) : -8]

print(f"Footer size in bytes: {footer_length}")

Footer size in bytes: 1088

The footer information is encoded in a cross-language serialization format called Apache Thrift. Using a human-readable but verbose format like JSON and then translating it into binary would be less efficient in terms of memory usage. With Thrift, one can declare data structures as follows:

struct Customer {

1: required string name,

2: optional i16 birthYear,

3: optional list<string> interests

}

Based on this declaration, Thrift can generate Python code to decode byte strings that follow this data structure (it also generates the code for the encoding part). The Thrift file containing all the data structures used in a Parquet file (parquet.thrift) is available in the Apache parquet-format repository. After installing the thrift binary, let’s run:

thrift -r --gen py parquet.thrift

The generated Python code is placed in the “gen-py” folder. The footer’s data structure is represented by the FileMetaData class – a Python class automatically generated from the Thrift schema. Using Thrift’s Python utilities, binary data is parsed and populated into an instance of this FileMetaData class.
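As a side note on why the binary form is compact: Thrift’s compact protocol (the one used in the reader code through TCompactProtocol) writes integers with zigzag encoding followed by a variable-length encoding, so that small values occupy a single byte. The following is a simplified sketch of that idea only, with made-up function names; it is not a substitute for the generated classes.

```python
def zigzag_encode(n):
    """Map signed integers to unsigned ones: 0, -1, 1, -2 -> 0, 1, 2, 3."""
    return (n << 1) ^ (n >> 63)


def zigzag_decode(z):
    """Inverse of zigzag_encode."""
    return (z >> 1) ^ -(z & 1)


def write_varint(value):
    """Encode an unsigned integer, 7 bits per byte, least significant group first."""
    out = bytearray()
    while True:
        byte = value & 0x7F
        value >>= 7
        if value:
            out.append(byte | 0x80)  # high bit set: more bytes follow
        else:
            out.append(byte)
            return bytes(out)


def read_varint(data, pos=0):
    """Decode a varint starting at pos; return (value, next_pos)."""
    result = shift = 0
    while True:
        byte = data[pos]
        pos += 1
        result |= (byte & 0x7F) << shift
        if not byte & 0x80:
            return result, pos
        shift += 7


encoded = write_varint(zigzag_encode(100))  # e.g. a row count of 100
value, _ = read_varint(encoded)
print(len(encoded), zigzag_decode(value))
```

The generated classes take care of all of this, so the reader code never has to manipulate these bytes directly.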

# reader.py

import sys

# ...

# Add the generated classes to the python path

sys.path.append("gen-py")

from parquet.ttypes import FileMetaData, PageHeader

from thrift.transport import TTransport

from thrift.protocol import TCompactProtocol

def read_thrift(data, thrift_instance):

    """

    Read a Thrift object from a binary buffer.

    Returns the Thrift object and the number of bytes read.

    """

    transport = TTransport.TMemoryBuffer(data)

    protocol = TCompactProtocol.TCompactProtocol(transport)

    thrift_instance.read(protocol)

    return thrift_instance, transport._buffer.tell()

# The number of bytes read is not used for now

file_metadata_thrift, _ = read_thrift(footer_data, FileMetaData())

print(f"Number of rows in the whole file: {file_metadata_thrift.num_rows}")

print(f"Number of row groups: {len(file_metadata_thrift.row_groups)}")

Number of rows in the whole file: 100

Number of row groups: 1

The footer contains extensive information about the file’s structure and content. For instance, it accurately tracks the number of rows in the generated dataframe. These rows are all contained within a single “row group.” But what is a “row group?”

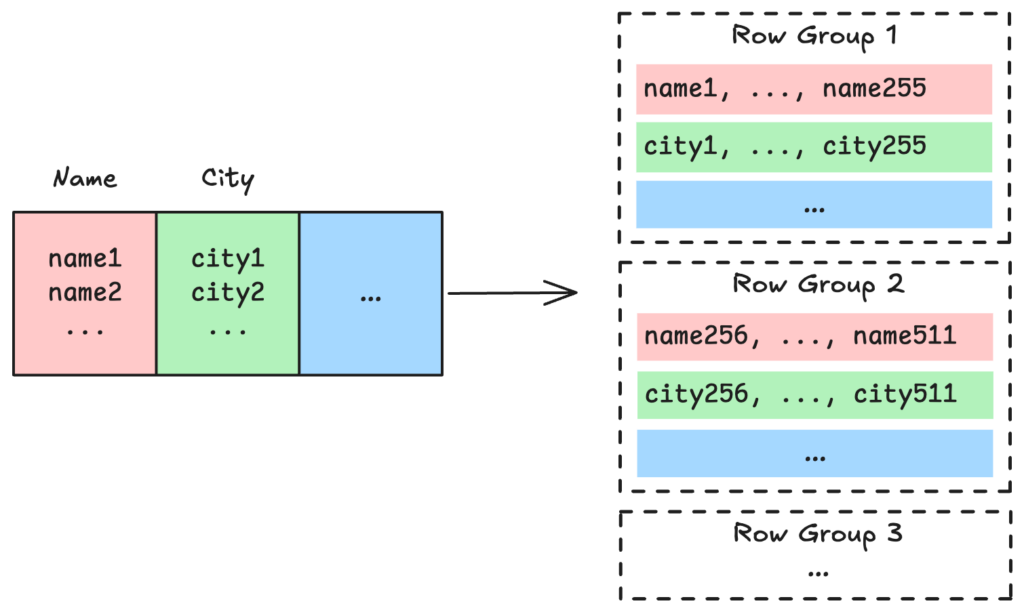

Row groups

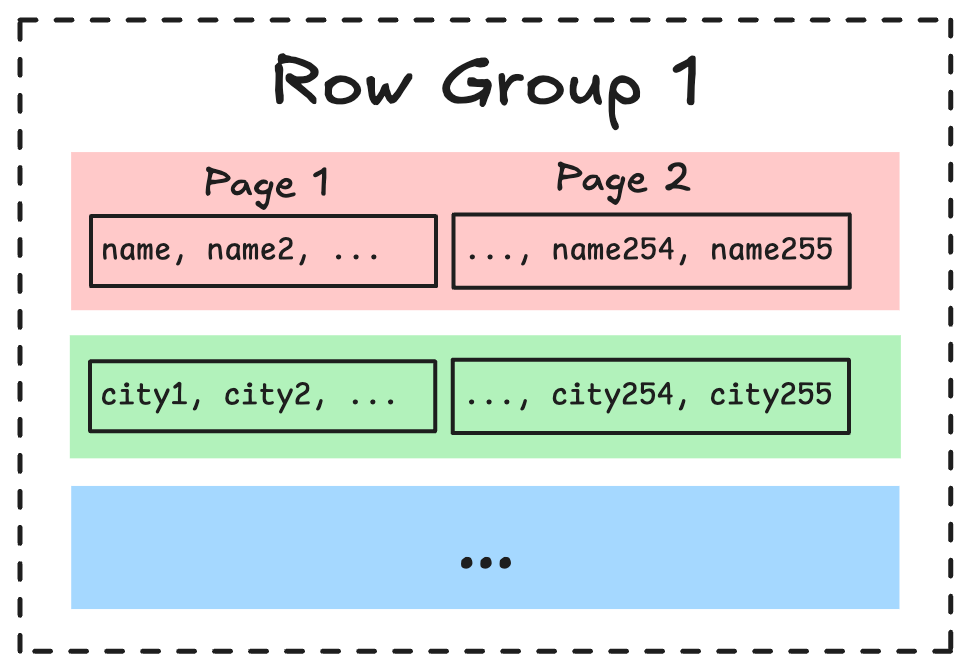

Unlike purely column-oriented formats, Parquet employs a hybrid approach. Before writing column blocks, the dataframe is first partitioned horizontally into row groups, each holding a subset of the rows (the Parquet file we generated is too small to be split into multiple row groups).

This hybrid structure offers several advantages:

- Parquet calculates statistics (such as min/max values) for each column within each row group. These statistics are crucial for query optimization, allowing query engines to skip entire row groups that don’t match filtering criteria. For example, if a query filters for birth_year > 1955 and a row group’s maximum birth year is 1954, the engine can efficiently skip that entire data section. This optimization is called “predicate pushdown”. Parquet also stores other useful statistics like distinct value counts and null counts.

# reader.py

# ...

first_row_group = file_metadata_thrift.row_groups[0]

birth_year_column = first_row_group.columns[4]

min_stat_bytes = birth_year_column.meta_data.statistics.min

max_stat_bytes = birth_year_column.meta_data.statistics.max

min_year = struct.unpack("<i", min_stat_bytes)[0]

max_year = struct.unpack("<i", max_stat_bytes)[0]

print(f"The birth year range is between {min_year} and {max_year}")

The birth year range is between 1949 and 1958

- Row groups enable parallel processing of data (particularly valuable for frameworks like Apache Spark). The size of these row groups can be configured based on the computing resources available (using the row_group_size parameter of the write_table function when using PyArrow).

# generator.py

# ...

pq.write_table(

table,

"dataset.parquet",

row_group_size=100,

)

# /!\ Keep the default value of "row_group_size" for the next parts

- Even if this is not the primary objective of a column format, Parquet’s hybrid structure maintains reasonable performance when reconstructing complete rows. Without row groups, rebuilding an entire row might require scanning the entirety of each column, which would be extremely inefficient for large files.
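Coming back to the first advantage, the skipping logic based on min/max statistics can be sketched as follows. The statistics are hypothetical (the file generated in this article has a single row group), and row_groups_to_read is an illustrative helper rather than an API of any library; the values are stored as 4-byte little-endian integers, as in the footer.

```python
import struct

# Hypothetical footer statistics: one (min, max) pair per row group for
# birth_year, stored as 4-byte little-endian integers like Parquet INT32 values.
row_group_stats = [
    (struct.pack("<i", 1949), struct.pack("<i", 1952)),
    (struct.pack("<i", 1953), struct.pack("<i", 1954)),
    (struct.pack("<i", 1955), struct.pack("<i", 1958)),
]


def row_groups_to_read(stats, lower_bound):
    """Indices of row groups that may contain values > lower_bound."""
    selected = []
    for index, (_, max_bytes) in enumerate(stats):
        (max_value,) = struct.unpack("<i", max_bytes)
        if max_value > lower_bound:  # otherwise no row can match: skip it
            selected.append(index)
    return selected


# Filter: birth_year > 1955
print(row_groups_to_read(row_group_stats, 1955))  # [2]
```

Only the footer is consulted to make this decision; the data pages of the first two row groups are never read.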

Data Pages

The smallest substructure of a Parquet file is the page. It contains a sequence of values from the same column and, therefore, of the same type. The choice of page size is the result of a trade-off:

- Larger pages mean less metadata to store and read, which is optimal for queries with minimal filtering.

- Smaller pages reduce the amount of unnecessary data read, which is better when queries target small, scattered data ranges.

Now let’s decode the contents of the first page of the address column. Its location can be found in the footer (given by the data_page_offset attribute of the corresponding ColumnMetaData). The offset actually points to the Thrift binary representation of a PageHeader, an object that precedes the page itself and contains its metadata. The PageHeader class can also be found in the gen-py directory.

💡 Between the PageHeader and the actual values contained within the page, there may be a few bytes holding the repetition and definition levels of the Dremel format, which make it possible to encode nested data structures. Since our data has a regular tabular format and the values are not nullable, these bytes are skipped when writing the file (https://parquet.apache.org/docs/file-format/data-pages/).

# reader.py

# ...

address_column = first_row_group.columns[1]

column_start = address_column.meta_data.data_page_offset

column_end = column_start + address_column.meta_data.total_compressed_size

column_content = parquet_data[column_start:column_end]

page_thrift, page_header_size = read_thrift(column_content, PageHeader())

page_content = column_content[

page_header_size : (page_header_size + page_thrift.compressed_page_size)

]

print(page_content[:100])

b'6\x00\x00\x00481 Mata Squares Suite 260, Lake Rachelville, KY 874642\x00\x00\x00671 Barker Crossing Suite 390, Mooreto'

The generated values finally appear, in plain text and not encoded (as specified when writing the Parquet file). However, to get the most out of the columnar format, it is recommended to use one of the following encoding algorithms: dictionary encoding, run-length encoding (RLE), or delta encoding (the latter being reserved for int32 and int64 types), followed by compression using gzip or snappy (the available codecs are listed in the Parquet specification). Since pages contain similar values (all addresses, all decimal numbers, etc.), compression ratios can be particularly advantageous.
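To give an intuition of why these encodings pay off on columnar data, here is a toy sketch of dictionary encoding followed by run-length encoding. It is deliberately simplified: Parquet’s actual encodings (for instance its hybrid of RLE and bit-packing) are more elaborate, and the function names and sample values below are illustrative only.

```python
def dictionary_encode(values):
    """Replace each value by its index in a dictionary of distinct values."""
    dictionary = []
    positions = {}
    indices = []
    for value in values:
        if value not in positions:
            positions[value] = len(dictionary)
            dictionary.append(value)
        indices.append(positions[value])
    return dictionary, indices


def run_length_encode(values):
    """Collapse consecutive repeats into [value, count] pairs."""
    runs = []
    for value in values:
        if runs and runs[-1][0] == value:
            runs[-1][1] += 1
        else:
            runs.append([value, 1])
    return runs


birth_years = [1955, 1955, 1955, 1950, 1950, 1957]
dictionary, indices = dictionary_encode(birth_years)
print(dictionary)                  # [1955, 1950, 1957]
print(indices)                     # [0, 0, 0, 1, 1, 2]
print(run_length_encode(indices))  # [[0, 3], [1, 2], [2, 1]]
```

The fewer distinct values a column has, and the longer its runs of repeated values, the more these two steps shrink the page before the compression codec is even applied.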

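As documented in the specification, when character strings (BYTE_ARRAY) are not encoded, each value is preceded by its size, represented as a 4-byte little-endian integer. This can be observed in the previous output: the first four bytes, 6\x00\x00\x00, encode the number 54 (the ASCII code of the character “6”), which is exactly the length of the first address.

To read the values (for example, the first 10), a simple loop suffices: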

idx = 0

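for _ in range(10):

    str_size = struct.unpack("<I", page_content[idx : idx + 4])[0]

    print(page_content[idx + 4 : idx + 4 + str_size].decode())

    idx += 4 + str_size

481 Mata Squares Suite 260, Lake Rachelville, KY 87464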

671 Barker Crossing Suite 390, Mooretown, MI 21488

62459 Jordan Knoll Apt. 970, Emilyfort, DC 80068

948 Victor Square Apt. 753, Braybury, RI 67113

365 Edward Place Apt. 162, Calebborough, AL 13037

894 Reed Lock, New Davidmouth, NV 84612

24082 Allison Squares Suite 345, North Sharonberg, WY 97642

00266 Johnson Drives, South Lori, MI 98513

15255 Kelly Plains, Richardmouth, GA 33438

260 Thomas Glens, Port Gabriela, OH 96758

And there we have it! We have successfully recreated, in a very simple way, how a specialized library would read a Parquet file. By understanding its building blocks, including headers, footers, row groups, and data pages, we can better appreciate how features like predicate pushdown and partition pruning deliver such impressive performance benefits in data-intensive environments. I am convinced that knowing how Parquet works under the hood helps in making better decisions about storage strategies, compression choices, and performance optimization.

All the code used in this article is available on my GitHub repository at https://github.com/kili-mandjaro/anatomy-parquet, where you can explore more examples and experiment with different Parquet file configurations.

Whether you are building data pipelines, optimizing query performance, or simply curious about data storage formats, I hope this deep dive into Parquet’s inner structures has provided valuable insights for your Data Engineering journey.

All images are by the author.