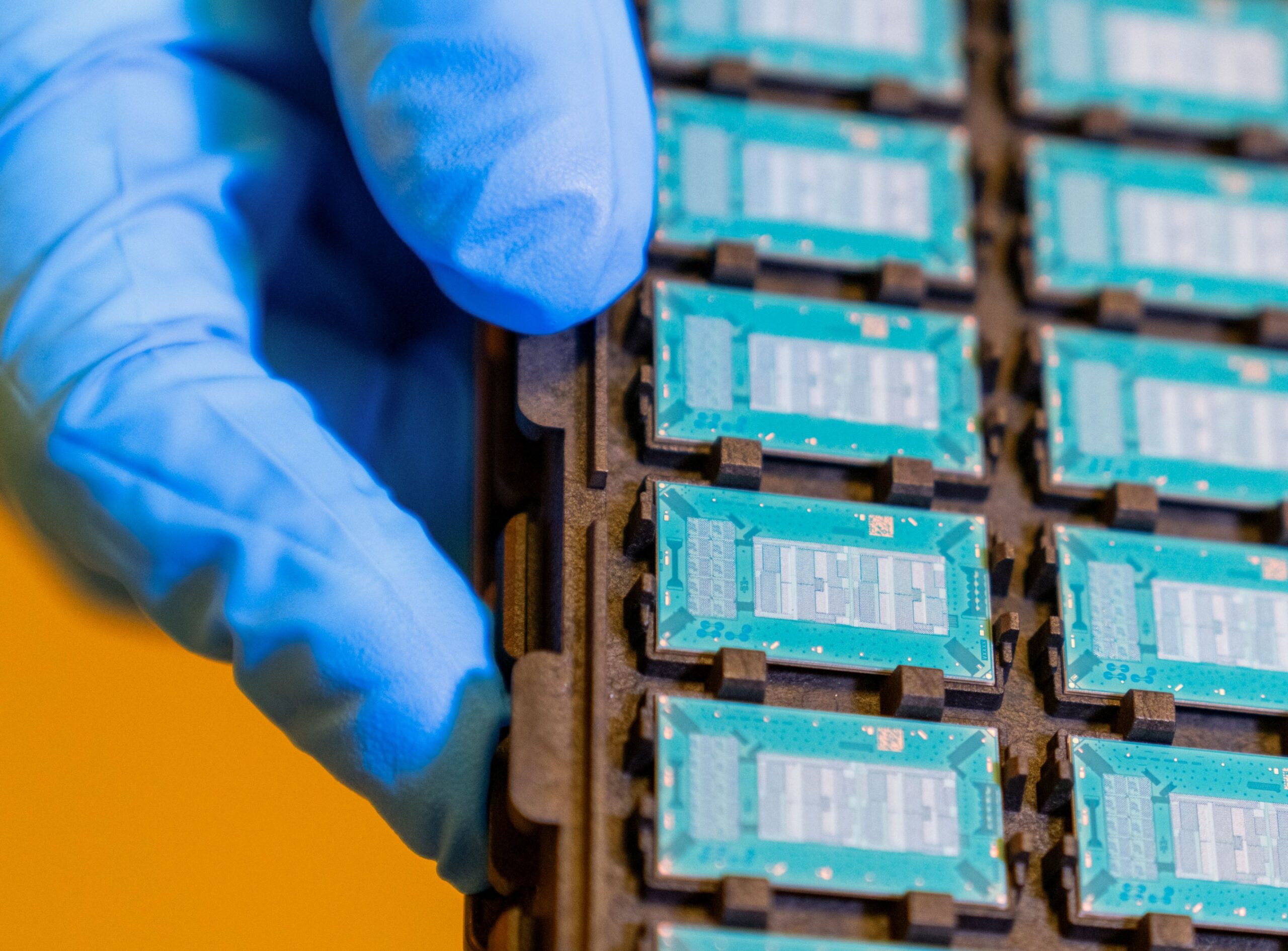

To prove their point, the authors imagined a 400 MW AI datacenter with 1,024 GPU racks of 128 GPUs each, for a total of roughly 131,000 (128K) GPUs. “Assume 12.8T scale-up and 1.6T scale-out bandwidth per GPU. With OSFP switch racks that have a density of 1.6 Pbps per rack, this would require more than 1,400 switch racks for scale-up and scale-out fabrics. With XPO, this would require 75% fewer racks, saving over 1,050 racks or 44% of the floor space,” Bechtolsheim and Vusirikala stated in the blog.
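The arithmetic behind those figures can be checked with a short sketch. It uses only the numbers quoted above and makes two assumptions that are not in the blog: "more than 1,400" is taken as exactly 1,400, and every rack, GPU or switch, is assumed to occupy the same floor area. Under those assumptions the floor-space saving lands slightly below the quoted 44%, which fits with the real switch-rack count being somewhat above 1,400.

```python
# Figures quoted in the article
gpu_racks = 1024
gpus_per_rack = 128
osfp_switch_racks = 1400   # "more than 1,400", taken here as a lower bound (assumption)
rack_reduction = 0.75      # XPO claim: 75% fewer switch racks

total_gpus = gpu_racks * gpus_per_rack
switch_racks_saved = osfp_switch_racks * rack_reduction
xpo_switch_racks = osfp_switch_racks - switch_racks_saved

# Assumption: GPU racks and switch racks take up equal floor area,
# so floor space is proportional to the total rack count.
total_racks_with_osfp = gpu_racks + osfp_switch_racks
floor_space_saved = switch_racks_saved / total_racks_with_osfp

print(f"Total GPUs:            {total_gpus:,}")
print(f"Switch racks saved:    {switch_racks_saved:,.0f}")
print(f"Switch racks with XPO: {xpo_switch_racks:,.0f}")
print(f"Floor space saved:     {floor_space_saved:.1%}")
```

The rack count divides evenly, so the saving of 1,050 racks is exact for a 1,400-rack baseline. The floor-space percentage depends on the equal-area assumption and would shift if switch racks and GPU racks had different footprints.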

“Eliminating 75% of switch racks translates to massive reductions in construction and infrastructure costs, including power distribution, plumbing and installation costs, while accelerating deployment timelines,” Bechtolsheim and Vusirikala stated.

Arista said the water-cooling capability of XPO is also an important feature.

“All large AI data centers will be liquid cooled and the switches that go into these data centers also need to be liquid cooled,” Bechtolsheim and Vusirikala stated. “While one can add liquid cooled cold plates on flat-top OSFP modules, this does not substantially improve thermal performance.”

XPO solves this problem by integrating a liquid cold plate inside the module. Two 32-channel paddle cards share the common cold plate, which can cool both low-power and high-power optics, such as 8x1600G-ZR/ZR+ drawing up to 400W, Bechtolsheim and Vusirikala stated.

XPO modules are also much simpler than OSFP modules, which improves reliability. “Each 32-channel paddle card has only one microcontroller and one set of voltage converters, a 75% reduction in common components versus 4 OSFPs,” Bechtolsheim and Vusirikala wrote.