Over the past couple of weeks, I’ve been following news of the deaths of actor Gene Hackman and his wife, pianist Betsy Arakawa. It was heartbreaking to hear how Arakawa appeared to have died from a rare infection days before her husband, who had advanced Alzheimer’s disease and may have struggled to understand what had happened.

But as I watched the medical examiner reveal details of the couple’s health, I couldn’t help feeling a little uncomfortable. Media reports claim that the couple liked their privacy and had been out of the spotlight for decades. But here I was, on the other side of the Atlantic Ocean, being told what pills Arakawa had in her medicine cabinet, and that Hackman had undergone multiple surgeries.

It made me wonder: Should autopsy reports be kept private? A person’s cause of death is public information. But what about other intimate health details that might be revealed in a postmortem examination?

The processes and regulations surrounding autopsies vary by country, so we’ll focus on the US, where Hackman and Arakawa died. Here, a “medico-legal” autopsy may be organized by law enforcement agencies and handled through courts, while a “clinical” autopsy may be carried out at the request of family members.

And there are different levels of autopsy—some might involve examining specific organs or tissues, while more thorough examinations would involve looking at every organ and studying tissues in the lab.

The goal of an autopsy is to discover the cause of a person’s death. Autopsy reports, especially those resulting from detailed investigations, often reveal health conditions that might have been kept private while the person was alive. There are multiple federal and state laws designed to protect individuals’ health information. For example, the Health Insurance Portability and Accountability Act (HIPAA) protects “individually identifiable health information” for 50 years after a person’s death. But some things change when a person dies.

For a start, the cause of death will end up on the death certificate. That is public information. The public nature of causes of death is taken for granted these days, says Lauren Solberg, a bioethicist at the University of Florida College of Medicine. It has become a public health statistic. She and her student Brooke Ortiz, who have been researching this topic, are more concerned about other aspects of autopsy results.

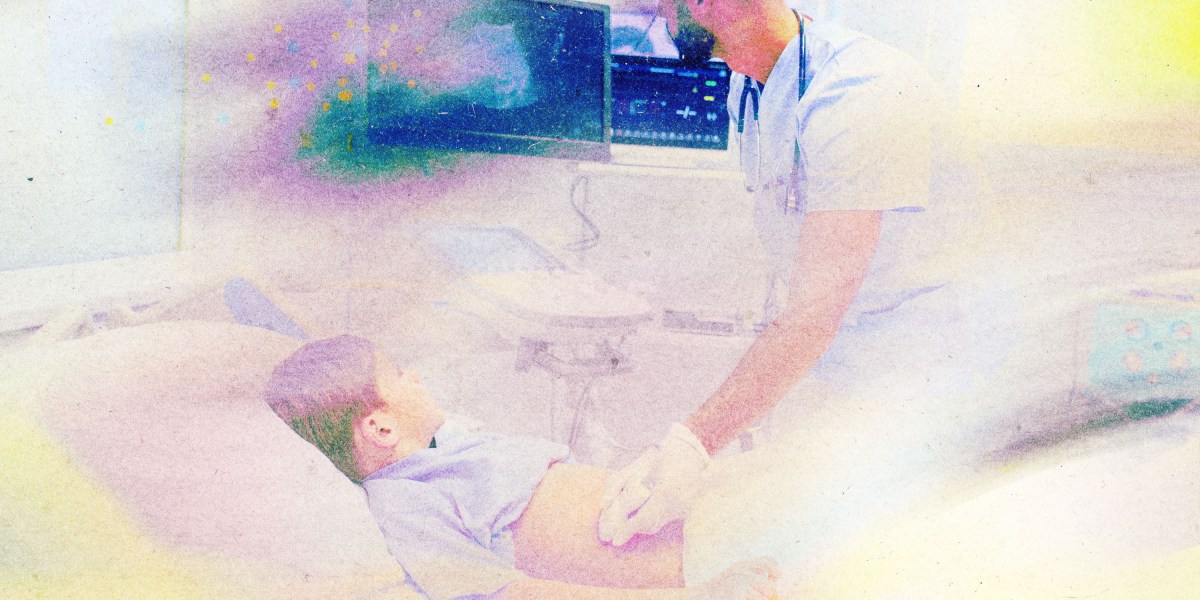

The thing is, autopsies can sometimes reveal more than what a person died from. They can also pick up what are known as incidental findings. An examiner might find that a person who died following a covid-19 infection also had another condition. Perhaps that condition was undiagnosed. Maybe it was asymptomatic. That finding wouldn’t appear on a death certificate. So who should have access to it?

The laws governing who can access a person’s autopsy report vary by state, and even between counties within a state. Clinical autopsy results will always be made available to family members, but local laws dictate which family members have access, says Ortiz.

Genetic testing further complicates things. Sometimes the people performing autopsies will run genetic tests to help confirm the cause of death. These tests might reveal what the person died from. But they might also flag genetic factors unrelated to the cause of death that might increase the risk of other diseases.

In those cases, the person’s family members might stand to benefit from accessing that information. “My health information is my health information—until it comes to my genetic health information,” says Solberg. Genes are shared by relatives. Should they have the opportunity to learn about potential risks to their own health?

This is where things get really complicated. Ethically speaking, we should consider the wishes of the deceased. Would that person have wanted to share this information with relatives?

It’s also worth bearing in mind that a genetic risk factor is often just that; there’s often no way to know whether a person will develop a disease, or how severe the symptoms would be. And if the genetic risk is for a disease that has no treatment or cure, will telling the person’s relatives just cause them a lot of stress?

One 27-year-old experienced this when a 23andMe genetic test told her she had “a 28% chance of developing late-onset Alzheimer’s disease by age 75 and a 60% chance by age 85.”

“I’m suddenly overwhelmed by this information,” she posted on a dementia forum. “I can’t help feeling this overwhelming sense of dread and sadness that I’ll never be able to un-know this information.”

In their research, Solberg and Ortiz came across cases in which individuals who had died in motor vehicle accidents underwent autopsies that revealed other, asymptomatic conditions. One man in his 40s who died in such an accident was found to have a genetic kidney disease. A 23-year-old was found to have had kidney cancer.

Ideally, both medical teams and family members should know ahead of time what a person would have wanted—whether that’s an autopsy, genetic testing, or health privacy. Advance directives allow people to clarify their wishes for end-of-life care. But only around a third of people in the US have completed one. And they tend to focus on care before death, not after.

Solberg and Ortiz think they should be expanded. An advance directive could specify how people want to share their health information after they’ve died. “Talking about death is difficult,” says Solberg. “For physicians, for patients, for families—it can be uncomfortable.” But it is important.

On March 17, a New Mexico judge granted a request from a representative of Hackman’s estate to seal police photos and bodycam footage as well as the medical records of Hackman and Arakawa. The medical investigator is “temporarily restrained from disclosing … the Autopsy Reports and/or Death Investigation Reports for Mr. and Mrs. Hackman,” according to Deadline.

This article first appeared in The Checkup, MIT Technology Review’s weekly biotech newsletter. To receive it in your inbox every Thursday, and read articles like this first, sign up here.