Blaxel, a startup building cloud infrastructure specifically designed for artificial intelligence agents, has raised $7.3 million in seed funding led by First Round Capital, the company announced Tuesday. The financing comes just three months after the six-founder team graduated from Y Combinator’s Spring 2025 batch, underscoring investor appetite for infrastructure plays in the rapidly expanding AI agent market.

The San Francisco-based company is betting that the current generation of cloud providers (Amazon Web Services, Google Cloud, and Microsoft Azure) is fundamentally mismatched with the new wave of autonomous AI systems that can take actions without human intervention. These AI agents, which handle everything from managing calendars to generating code, require dramatically different infrastructure than traditional web applications built for human users.

“The current cloud providers have been designed for the Web 2.0, Software as a Service era,” said Paul Sinaï, Blaxel’s co-founder and CEO, in an exclusive interview with VentureBeat. “But with this new wave of agentic AI, we believe that there is a need for a new type of infrastructure which is dedicated to AI agents.”

The timing reflects a broader shift in enterprise computing as companies increasingly deploy AI agents for customer service, data processing, and workflow automation. Unlike traditional applications where databases sit alongside web servers in predictable patterns, AI agents create unique networking challenges by connecting to language models in one region, APIs in another cloud, and knowledge bases elsewhere—all while users expect instant responses.

Blaxel has already demonstrated significant traction: by the end of its Y Combinator batch, it was processing millions of agent requests daily across 16 global regions. One customer is running over 1 billion seconds of agent runtime to process millions of videos, a scale that illustrates the infrastructure demands of AI-first companies.

“One of our customers is processing session replays to enable product managers to understand better how the user behavior of their product,” Sinaï explained. “They need to process millions of session replays every month. So it represents millions of minutes of sessions. They are using our agentic infrastructure to process those session replays and provide insights for product managers.”

The company’s approach centers on providing infrastructure that AI agents can operate themselves, rather than requiring human administrators. This includes sandboxed virtual machines that boot in under 25 milliseconds, automatic scaling based on agent activity patterns, and APIs designed to be consumed directly by AI systems rather than human developers.

How six co-founders with a successful exit plan to take on Big Tech

Blaxel’s unusual six-founder structure stems from the team’s shared experience building and selling a previous company to OVHcloud, Europe’s largest cloud provider. That company became OVH’s entire analytics product suite, giving the team firsthand experience with both cloud infrastructure challenges and successful exits.

“I know it sounds unusual, pretty big team. We didn’t fit exactly on the stage for demo day,” Sinaï said, referencing Y Combinator’s signature event. “But we already did that. My previous company, which I sold to OVHcloud, we were also six co-founders.”

The team includes Charles Drappier, whom Sinaï has known for over 30 years, along with co-founders Christophe Ploujoux, Nicolas Lecomte, Thomas Crochet, and Mathis Joffre. Their collective experience spans infrastructure, developer tools, and platform engineering — critical expertise for competing against tech giants with virtually unlimited resources.

“I think it’s important to be six right now, because we have a lot of ambition,” Sinaï said. “What we are doing is building this next generation of cloud computing for this new agentic era.”

What sets Blaxel apart in the competitive cloud infrastructure market

The cloud infrastructure market is notoriously competitive, with AWS commanding roughly one-third market share and newer players like Modal, Replicate, and RunPod targeting AI workloads. Blaxel differentiates itself by focusing specifically on AI agents rather than model inference or training.

“Most of the competitors you mentioned are solving a very difficult problem, which is around the inference — how you can host your model, how you can make those models as fast as you can in terms of number of tokens,” Sinaï said. “But there is not that many people working on infrastructure for the agents, and it’s exactly what we are doing.”

The company’s platform includes three main components: agent hosting for deploying AI systems as serverless APIs, MCP (Model Context Protocol) servers for connecting agents to external tools, and a unified gateway for accessing multiple AI models. The infrastructure is designed to handle the variable resource demands of AI agents, which might require minimal computing power while waiting for responses but need significant resources during active processing.

Enterprise security and compliance features target regulated industries

Despite targeting younger AI-first companies, Blaxel has implemented enterprise-grade security measures including SOC2 and HIPAA compliance. The platform offers data residency controls that allow customers to restrict workloads to specific geographic regions—critical for companies in regulated industries.

“We provide a policy framework where you can attach, for example, to workloads to say, this agent cannot run outside of those subsets of regions,” Sinaï explained. “You can attach a policy to say this agent cannot run outside of the United States, so you are sure that this agent will process the data only in the regions you have chosen.”
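The kind of region policy Sinaï describes can be illustrated with a minimal sketch. It assumes a policy is simply a set of allowed regions checked before a workload is placed. The names `RegionPolicy` and `pick_region` and the region labels are hypothetical and are not taken from Blaxel's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegionPolicy:
    """Hypothetical policy: the set of regions a workload may run in."""
    allowed_regions: frozenset

    def permits(self, region: str) -> bool:
        # A region is acceptable only if it is explicitly allowed.
        return region in self.allowed_regions


def pick_region(policy: RegionPolicy, candidates: list):
    """Return the first candidate region the policy permits, or None."""
    for region in candidates:
        if policy.permits(region):
            return region
    return None


# Illustrative region names, not real Blaxel region identifiers.
us_only = RegionPolicy(frozenset({"us-east", "us-west"}))
chosen = pick_region(us_only, ["eu-west", "us-west", "us-east"])
print(chosen)
```

In this sketch a workload with no permitted candidate region is not placed at all, which matches the guarantee in the quote that data is processed only in the chosen regions.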

This approach reflects the company’s belief that even early-stage AI companies need robust infrastructure practices because they’re building the enterprises of tomorrow. “We believe that it’s very important to have, even for young companies, the best infrastructure with the best practices, because they are going to become enterprises,” Sinaï said.

Pay-as-you-go pricing delivers 50% cost savings over traditional serverless

Blaxel has adopted a pay-as-you-go pricing model similar to established cloud providers, moving away from an initial subscription approach after validating market demand during its Y Combinator batch. The model charges customers only when their agents are actively processing tasks, shutting down infrastructure during idle periods to optimize costs.

“We provide infrastructure that spin up in just few milliseconds and shut down in just one second,” Sinaï said. “So you just pay for the time your agent is actually processing something. When your agent is waiting for something else, you don’t have to pay for it because we shut it down.”

The approach has already delivered cost savings for customers, with one client achieving 50% cost reduction compared to typical serverless solutions while processing terabytes of data monthly.
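The arithmetic behind billing only for active time can be shown with a rough sketch. The per-second rate and the active and idle durations below are invented for illustration. They are not Blaxel's prices and do not reproduce the customer figure cited above.

```python
def billed_seconds(active: int, idle: int, bill_idle: bool) -> int:
    """Seconds charged for one agent run, with or without idle time billed."""
    return (active + idle) if bill_idle else active


# Hypothetical inputs: an agent computes for 40 s and waits 60 s on
# external calls (for example, a language model response).
ACTIVE_S = 40
IDLE_S = 60
RATE_PER_SECOND = 0.0001  # assumed price in dollars, not a real rate

always_on_cost = billed_seconds(ACTIVE_S, IDLE_S, bill_idle=True) * RATE_PER_SECOND
active_only_cost = billed_seconds(ACTIVE_S, IDLE_S, bill_idle=False) * RATE_PER_SECOND

# Fraction of the always-on bill avoided by not paying for idle time.
savings = 1 - active_only_cost / always_on_cost
print(f"savings: {savings:.0%}")
```

Under these assumptions the saving equals the share of the run spent idle, so the benefit depends on how much of an agent's time goes to waiting rather than processing.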

Gartner predicts 75% of apps will use AI agents by 2028

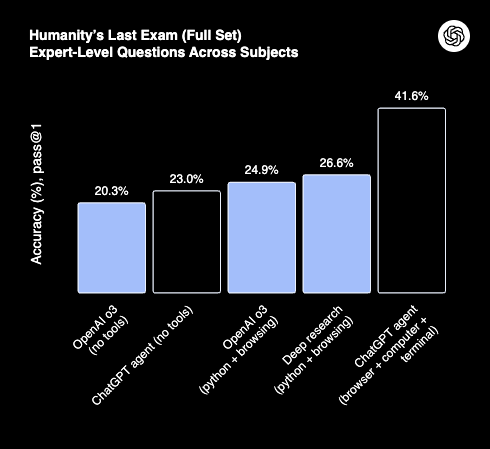

The investment comes as industry analysts predict explosive growth in AI agent adoption. Gartner forecasts that 75% of application development will involve AI agents by 2028, though Sinaï believes current enterprise adoption remains largely experimental.

“Right now, most of companies working actively in production are mostly smaller companies, not yet enterprise companies,” he said. “So we are focusing really on serving them exactly like the big cloud providers did in the past.”

The strategy mirrors how Amazon Web Services initially focused on startups and developer-friendly companies before expanding to enterprise customers. Blaxel plans to follow a similar path, using the $7.3 million to expand its software platform before potentially moving into custom hardware and data center optimization.

“Seven millions is not enough to build data centers, obviously, but I think it’s important to go step by step,” Sinaï said. “Being sure that right now we have the best interfaces we can provide to our customers, the best services for their agents, and then going into the deeper infrastructure optimization.”

The company’s roadmap includes features like snapshot forking for agent experimentation, automatic failover capabilities, and deeper optimization for the massive scale they anticipate. With projections of hundreds of billions of AI agents in the coming decades, Blaxel sees an opportunity to build infrastructure designed for this new computing paradigm from the ground up.

“We believe that there is a huge economy which is starting around the agents,” Sinaï said. “There are going to be hundreds of billions of AI agents, and the infrastructure we have today has not been designed for this new wave.”

The funding round included participation from Y Combinator, Liquid2, Transpose, and angel investors who share the company’s vision of purpose-built agent infrastructure. As AI agents transition from experimental tools to production systems handling critical business processes, Blaxel’s specialized approach could position it to capture significant market share in what may become the next major category of cloud computing.
