Liberal Party Leader Mark Carney argued that he represents change from Justin Trudeau’s nine years in power as he fended off attacks from his rivals during the final televised debate of Canada’s election.

“Look, I’m a very different person from Justin Trudeau,” Carney said in response to comments from Conservative Leader Pierre Poilievre, his chief opponent in the election campaign that concludes April 28.

Carney’s Liberals lead by several percentage points in most polls, marking a stunning reversal from the start of this year, when Trudeau was still the party’s leader and Poilievre’s Conservatives were ahead by more than 20 percentage points in some surveys.

Trudeau’s resignation and US President Donald Trump’s economic and sovereignty threats against Canada have upended the race. Poilievre sought to remind Canadians of their complaints about the Liberal government, while Carney tried to distance himself from Trudeau’s record.

Poilievre argued that Carney was an adviser to Trudeau’s Liberals during a time when energy projects were stymied and the cost of living soared — especially housing prices.

Carney, 60, responded that he has been prime minister for just a month, and pointed to moves he made to reverse some of Trudeau’s policies, such as scrapping the carbon tax on consumer fuels. As for inflation, Carney noted that it was well under control when he was governor of the Bank of Canada.

“I know it may be difficult, Mr. Poilievre,” Carney told him. “You spent years running against Justin Trudeau and the carbon tax and they’re both gone.”

“Well, you’re doing a good impersonation of him, with the same policies,” Poilievre shot back.

Trudeau announced in January that he was stepping down as prime minister, and Carney was sworn in as his replacement on March 14. Carney triggered an election nine days later.

“The question you have to ask is: after a decade of Liberal promises, can you afford food?” Poilievre said during one segment. “Is your housing more affordable than it used to be? What is your cost of living like compared to what it was a decade ago?”

When it comes to issues such as housing, “We need a change, and you, sir, are not a change,” Poilievre told Carney.

Trade Retaliation

Polls suggest Trump’s aggression toward Canada is a major issue for voters. The debate opened with a segment on the trade war, and the candidates broadly agreed that Canada should mount a tough response.

Carney made clear that in negotiating with Trump, his government has already moved off the principle of “dollar-for-dollar” counter-tariffs as retaliation. Instead, Carney said, he’s focusing on measures that will have maximum impact in the US but minimum impact on Canada.

“We have to recognize, and I think we all do, the United States economy is more than 10 times the size of the Canadian economy,” Carney said.

The nationally televised event was a critical opportunity for Carney’s opponents to make up ground. That put him under attack from all sides, including from the leader of the left-wing New Democratic Party, Jagmeet Singh, and the head of the sovereigntist Bloc Quebecois, Yves-Francois Blanchet.

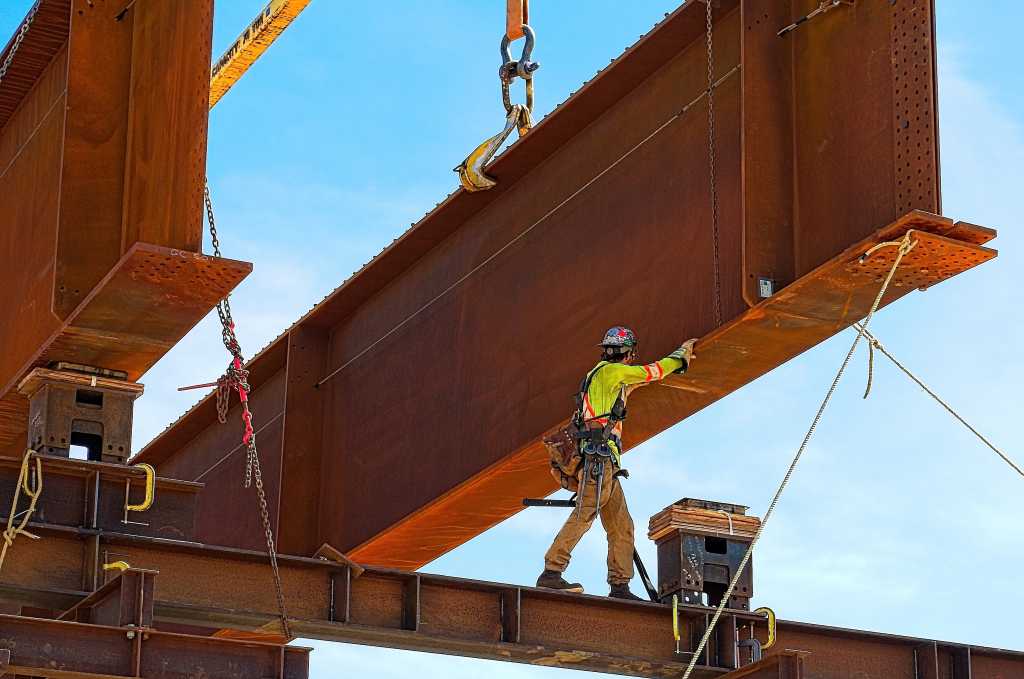

During a lengthy segment on oil infrastructure, Poilievre charged that the Liberals had not done enough to get energy exports to markets other than the US. He said the government’s regulatory regime makes building pipelines too difficult, which “effectively empowers Donald Trump to have a total monopoly on our single biggest export.”

Singh, looking incredulous, pointed out that Trudeau’s government had nationalized the Trans Mountain pipeline, which exports oil from Canada’s west coast, and spent tens of billions of dollars to expand it. “The Liberals bought a pipeline, they built a pipeline,” Singh said. “I don’t know what Pierre is complaining about.”

“I’m interested in getting energy infrastructure built,” Carney insisted. “That means pipelines, that means carbon capture storage, that means electricity grids.”

The first debate, which took place Wednesday, was conducted entirely in French — making it a test for Carney, who is weaker than his opponents in the language. The French-speaking province of Quebec is Canada’s second largest by population and an important battleground region in the election. The Liberal leader made it through that debate largely unscathed.

Still, there were a few notable moments. In one exchange, Carney pledged that his government would produce more oil in order to reduce Canada’s reliance on the US, but that it would need to be “low-carbon” oil.

Carney also said his government would maintain “a cap on all types of immigration for a period of time in order to increase our capacity,” particularly around housing and other social supports for immigrants.

Last fall, Trudeau’s government slashed its permanent immigration target by 21%, aiming for a total of 395,000 for 2025. It also put a limit on international student visas and added restrictions to the use of foreign labor.