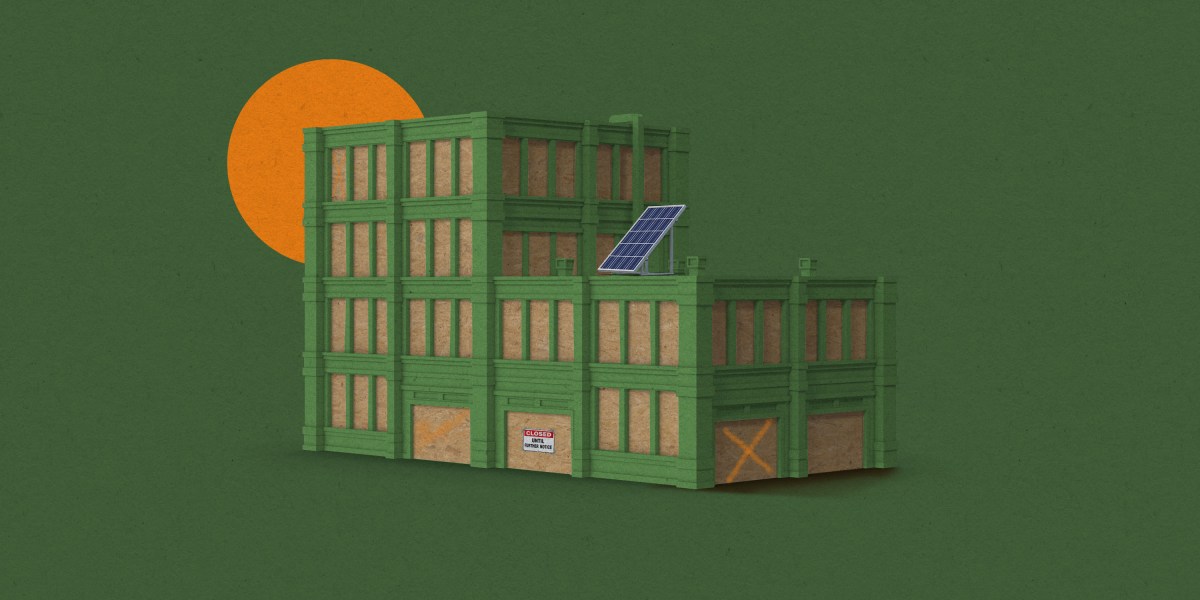

One costly and time-consuming step in constructing a concrete building is creating the “formwork,” the wooden mold into which the concrete is poured. Now MIT researchers have developed a way to replace the wood with lightly treated mud.

“What we’ve demonstrated is that we can essentially take the ground we’re standing on, or waste soil from a construction site, and transform it into accurate, highly complex, and flexible formwork for customized concrete structures,” says Sandy Curth, a PhD student in MIT’s Department of Architecture, who has helped spearhead the project.

The EarthWorks method, as it’s known, introduces some additives, such as straw, and a waxlike coating to the soil material. The treated soil is then 3D-printed into a custom-designed shape. “We found a way to make formwork that is infinitely recyclable,” Curth says. “It’s just dirt.”

A particular advantage of the technique is that the material’s flexibility makes it easier to create unique shapes optimized so that the resulting buildings use no more concrete than structurally necessary. This can significantly reduce the carbon emissions associated with concrete construction.

“What’s cool here is we’re able to make shape-optimized building elements for the same amount of time and energy it would take to make rectilinear building elements,” says Curth, who recently coauthored a paper on the work with MIT professors Lawrence Sass, SM ’94, PhD ’00; Caitlin Mueller ’07, SM ’14, PhD ’14; and others. Curth has also founded a firm, Forma Systems, through which he hopes to take EarthWorks into the construction industry.