When Sean Farney of Jones Lang LaSalle (JLL) walked back on stage after lunch at the Data Center Frontier Trends Summit 2025, he didn’t bother easing into the topic.

“This is the best one of the day,” he joked, “and it’s got the most buzzwords in the title.”

The session, “Scaling AI: The Role of Adaptive Reuse and Power-Rich Sites in GPU Deployment,” lived up to that billing. Over the course of the hour, Farney and his panel of experts dug into the hard constraints now shaping AI infrastructure—and the unconventional sites and power strategies needed to overcome them.

Joining Farney on stage were:

- Lovisa Tedestedt, Strategic Account Executive – Cloud & Service Providers, Schneider Electric

- Phill Lawson-Shanks, Chief Innovation Officer, Aligned Data Centers

- Scott Johns, Chief Commercial Officer, Sapphire Gas Solutions

Together, they painted a picture of an industry running flat-out, where adaptive reuse, modular buildouts, and behind-the-meter power are becoming the fastest path to AI revenue.

The Perfect Storm: 2.3% Vacancy, Power-Constrained Revenue

Farney opened with fresh JLL research that set the stakes in stark terms.

- U.S. colo vacancy is down to 2.3% – roughly 98% utilization.

- Just five years ago, vacancy was about 10%.

- The industry is tracking to over 5.4 GW of colocation absorption this year, with 63% of first-half absorption concentrated in just two markets: Northern Virginia and Dallas.

- There’s roughly 8 GW of build pipeline, but about 73% of that is already pre-leased, largely by hyperscalers and “Mag 7” cloud and AI giants.
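To put those figures side by side, here is a minimal arithmetic sketch in Python. It uses only the numbers quoted above; the function names are illustrative and are not part of JLL’s research.

```python
# Figures quoted from the JLL research cited in the session.
VACANCY_PCT = 2.3        # U.S. colo vacancy, percent
PIPELINE_GW = 8.0        # approximate build pipeline, gigawatts
PRELEASED_SHARE = 0.73   # share of that pipeline already pre-leased


def utilization_pct(vacancy_pct: float) -> float:
    """Utilization is the complement of vacancy."""
    return 100.0 - vacancy_pct


def unleased_pipeline_gw(pipeline_gw: float, preleased_share: float) -> float:
    """Pipeline capacity that is not yet committed to a tenant."""
    return pipeline_gw * (1.0 - preleased_share)


if __name__ == "__main__":
    print(f"Utilization: {utilization_pct(VACANCY_PCT):.1f}%")
    print(
        "Uncommitted pipeline: "
        f"{unleased_pipeline_gw(PIPELINE_GW, PRELEASED_SHARE):.2f} GW"
    )
```

On those inputs, only a little over 2 GW of the roughly 8 GW pipeline remains open to customers who have not already signed.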

“We are the envy of every industry on the planet,” Farney said. “That’s fantastic if you’re in the data center business. It’s a really bad thing if you’re a customer.”

The message to CIOs and CTOs was blunt: if you don’t have a capacity strategy dialed in, your growth may be constrained by infrastructure scarcity.

Farney then layered in the new reality: power has become the gating factor for revenue, citing NVIDIA’s GTC messaging that enterprise revenues are now effectively constrained by power availability.

“It’s a perfect storm of constraint,” he said. “You can’t just tap out. Data keeps doubling every few years. So what do we do in the face of these constraints?”

That question set up the rest of the discussion: how can adaptive reuse, modularity, and power-rich sites change the equation for GPU deployments?

Schneider: Grid-to-Chip, Liquid Cooling, and Energy-as-a-Service

From Schneider Electric’s vantage point, everyone is chasing power and time-to-revenue at once, said Lovisa Tedestedt.

“Customers are trying to find land with energized power, or repurpose land and buildings and re-energize them,” she explained. “Schneider is involved from grid to chip, chip to chiller—the full flow of infrastructure.”

For AI-scale builds, Tedestedt emphasized three interlocking themes:

Liquid cooling as the primary lever for usable IT power

With power headroom tight, the fastest way to “create” more effective capacity is better efficiency.

Moving from purely air-cooled systems to liquid-cooled architectures substantially increases the share of input power that reaches GPUs.

She pointed to warmer CDU (coolant distribution unit) setpoints, on the order of 45°C water temperatures, as a way to maximize efficiency and unlock more IT load from the same utility feed.
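The efficiency argument can be sketched with power usage effectiveness (PUE), the ratio of total facility power to IT power. The feed size and both PUE values below are illustrative assumptions, not figures given by the panel; only the relationship between them matters.

```python
def usable_it_mw(utility_feed_mw: float, pue: float) -> float:
    """IT load a fixed utility feed can support at a given PUE.

    PUE = total facility power / IT power, so IT power = feed / PUE.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return utility_feed_mw / pue


# Hypothetical inputs chosen only to illustrate the effect.
FEED_MW = 100.0      # assumed utility feed
AIR_PUE = 1.5        # assumed PUE for an air-cooled hall
LIQUID_PUE = 1.2     # assumed PUE for a liquid-cooled hall

if __name__ == "__main__":
    air = usable_it_mw(FEED_MW, AIR_PUE)
    liquid = usable_it_mw(FEED_MW, LIQUID_PUE)
    print(f"Air-cooled IT load:    {air:.1f} MW")
    print(f"Liquid-cooled IT load: {liquid:.1f} MW")
    print(f"Extra IT load from the same feed: {liquid - air:.1f} MW")
```

Under these assumed values, the same 100 MW feed supports roughly 67 MW of IT load with air cooling and roughly 83 MW with liquid cooling. Real gains depend on climate, hardware, and setpoints.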

Behind-the-meter and microgrid strategies

In many markets, customers are now told they may wait up to eight years for full grid interconnection.

That reality is pushing operators toward onsite generation and microgrids, as well as a mix of supplemental power options.

Schneider is seeing strong traction in “energy-as-a-service” models, where a joint venture or specialist provider owns, maintains, and operates the power plant or microgrid—charging the data center via a per-kWh structure rather than forcing them to become utilities themselves.

Modular, prefabricated deployment for speed

Tedestedt argued that prefabricated, modular electrical rooms and IT pods are now the fastest and often most sustainable path to AI capacity.

“Engineering, stamped drawings, testing—it’s all done in the factory,” she said. “You roll skids in and you’re looking at on the order of a 60% faster deployment versus traditional stick-built infrastructure.”

The throughline in her remarks: finding power, adding power, and then squeezing as much usable IT capacity as possible out of every megawatt through liquid cooling and modular, factory-built systems.

Aligned: Adaptive Reuse, Edge AI, and Designing for an Uncertain Future

Phill Lawson-Shanks of Aligned Data Centers brought a long lens to the conversation, drawing on nearly four decades of digital infrastructure experience.

“We’ve been optimized for cloud for the last 10 years,” he said. “Now things are getting exciting again.”

Liquid + Air, and Why Immersion Isn’t a Default

Lawson-Shanks agreed that liquid cooling is now essential—not a niche technology—but stressed that air isn’t going away anytime soon.

- Current and next-gen hardware stacks still contain numerous components that require air cooling.

- Even in high-density liquid deployments, operators may only be pulling 60–70% of the heat into the liquid loop, with the remainder handled by air.
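A quick sketch shows why that residual matters. The 60–70% capture range comes from Lawson-Shanks’ remarks; the 100 kW rack is a hypothetical figure chosen for round numbers.

```python
def split_heat_kw(rack_kw: float, liquid_fraction: float) -> tuple[float, float]:
    """Split a rack's heat load into (liquid loop, air) in kilowatts."""
    if not 0.0 <= liquid_fraction <= 1.0:
        raise ValueError("liquid_fraction must be between 0 and 1")
    liquid_kw = rack_kw * liquid_fraction
    return liquid_kw, rack_kw - liquid_kw


RACK_KW = 100.0  # hypothetical high-density rack, not a figure from the panel

if __name__ == "__main__":
    for fraction in (0.60, 0.70):  # capture range cited on stage
        liquid_kw, air_kw = split_heat_kw(RACK_KW, fraction)
        print(
            f"{fraction:.0%} to liquid: "
            f"{liquid_kw:.0f} kW liquid, {air_kw:.0f} kW air"
        )
```

At that assumed density, the air side still has to remove 30 to 40 kW per rack, which is more than many legacy air-cooled halls were designed to handle.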

Full immersion, he argued, isn’t yet the mainstream answer due to chemical and regulatory concerns, especially around PFAS-based fluids and dual-phase systems. The industry is waiting for safer, more widely acceptable chemistries, even as R&D races ahead.

Building for Cloud, AI, and Whatever Comes Next

One of Lawson-Shanks’ central points was that design lifecycles and depreciation schedules haven’t shrunk just because AI is moving fast.

“We still need to monetize a building over 25 years,” he said. “But we don’t actually know how workloads will change over that life.”

That uncertainty is driving Aligned toward:

- Highly adaptable electrical topologies (low-voltage today, but pre-engineered to pivot to higher-voltage AC/DC or blended systems).

- Skidded electrical rooms that can be swapped, expanded, or reconfigured as AI workloads evolve.

- A recognition that AI inference at the edge may dramatically shrink the number of racks while radically increasing density and gray space needed for power and cooling.

“We used to joke edge was always just around the corner,” he said. “Now with agentic AI and inference, we really may be looking at an ‘AI inference edge’—sites that are only a few racks, but carry massive power density and require very different logistics and design.”

Adaptive Reuse as Community Catalyst

Lawson-Shanks then brought the conversation back to adaptive reuse in a very literal sense.

Aligned has focused on large legacy industrial sites where substantial power once existed. He highlighted a project in Sandusky, Ohio, repurposing a former General Motors ball-bearing plant where the power supply once ran electric arc furnaces.

- The site had been dormant for years as manufacturing moved offshore.

- By acquiring and rehabilitating the property, Aligned unlocked the potential for over a gigawatt of capacity.

- Just as important, they used the project to re-introduce the community to digital infrastructure, visiting local high schools and trade programs to talk about careers in mechanical and electrical engineering and data center operations.

“People say data centers don’t create jobs,” Lawson-Shanks said. “But once you account for client teams, ongoing electrical and mechanical work, security, and services, these facilities absolutely help reinvigorate local economies.”

Adaptive reuse, in his telling, isn’t just about shaving months off a schedule. It’s also about re-energizing communities with new forms of digital-age industry.

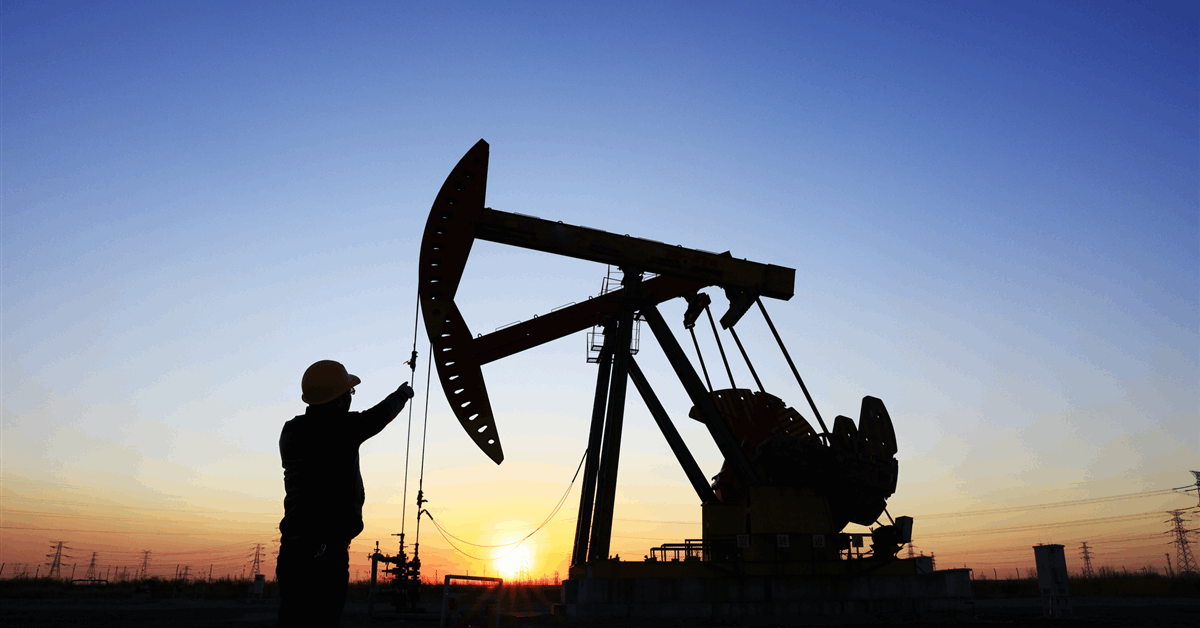

Sapphire Gas: Virtual Pipelines and Behind-the-Fence Power

If power availability is the bottleneck, Sapphire Gas Solutions’ Scott Johns is in the business of widening the neck.

“We operate virtual pipelines,” he said. “Mobile pipeline operations. We keep power plants and entire towns running when utilities have to take pipelines down.”

Sapphire is active in 38 states, serving both:

- Utilities that need temporary or emergency gas supply.

- Large energy users like data centers that need fuel 24/7 but may lack immediate physical pipeline access.

Bridging the Gap to the Grid

Johns argued that while the industry is rightly alarmed by long interconnection queues, the U.S. has been here before.

“We’ve doubled the grid once; we’re getting ready to double it again,” he said. “When customers come to us, they’re in a panic. But this isn’t new in an absolute sense.”

His core message: stop thinking of backup generation and temporary gas services as add-ons, and start planning holistically:

- Many customers start around 10–20 MW of demand and quickly find themselves needing 50, 100, or 150 MW.

- Leasing large banks of generators is not a sustainable strategy at that scale.

- Instead, operators should be purchasing or contracting for behind-the-fence generation—turbines, distributed generation, microgrids, and related infrastructure engineered for long-term scalability.

Utilities themselves are now bringing companies like Sapphire to the table, Johns said, especially when interconnection options look like “40–80 MW in six years, for $120 million-plus in infrastructure”—and when pipelines may require hundreds of millions more.

In those scenarios, virtual pipelines and onsite fuel logistics become critical to:

- Bridge multi-year delays in both electric and gas infrastructure.

- Provide flexible redundancy and curtailment support.

- Supply on-site storage, including large-scale LNG tanks, to buffer price spikes (e.g., winter “polar vortex” events where gas prices can briefly trade at many multiples of summer levels).
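The interconnection figures Johns quoted imply a steep cost per megawatt. The sketch below works that out from his numbers alone; because he said “$120 million-plus,” the results are lower bounds, and the function name is illustrative.

```python
def cost_per_mw_musd(total_cost_musd: float, capacity_mw: float) -> float:
    """Infrastructure cost per megawatt, in millions of U.S. dollars."""
    if capacity_mw <= 0:
        raise ValueError("capacity_mw must be positive")
    return total_cost_musd / capacity_mw


# Figures from the interconnection scenario Johns described.
INFRA_COST_MUSD = 120.0          # "$120 million-plus", so a floor
CAPACITY_RANGE_MW = (40.0, 80.0)  # "40-80 MW in six years"

if __name__ == "__main__":
    for capacity_mw in CAPACITY_RANGE_MW:
        per_mw = cost_per_mw_musd(INFRA_COST_MUSD, capacity_mw)
        print(f"{capacity_mw:.0f} MW: at least ${per_mw:.1f}M per MW")
```

That is at least $1.5 million to $3 million per megawatt before any pipeline costs, for capacity that arrives six years out. Bridging options are being weighed against that baseline.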

From Diesel Gensets to Gas, RNG, and Hydrogen

Johns also challenged the industry to rethink construction and backup practices.

“Stop using diesel generation for construction phases,” he said. “Use natural gas.”

He pointed to:

- Compressed and liquefied natural gas for primary and backup construction power.

- Renewable natural gas (RNG) sourced from swine and dairy operations, landfills, and wastewater plants, blended into the fuel mix at 10% or more, with negative carbon intensity profiles.

- A path where persistent, large, behind-the-fence loads—like data centers—could ultimately justify faster commercialization of hydrogen, especially if combined with renewable methane and low-carbon production pathways.

Hydrogen drew spirited debate on stage and from the audience. Johns framed it as a 2050-ish fuel that could be brought forward into the 2030s with data center demand and the right policy support. An audience member countered that hydrogen-powered data centers are already operating today at pilot scale, illustrating just how quickly this space is evolving.

Adaptive Reuse, Sustainability, and the Materials Question

For Lovisa Tedestedt, adaptive reuse is not just a clever strategy—it’s an inevitability.

“We have no other choice than to start looking at reuse,” she said. “There’s enough available real estate—warehouses, industrial buildings—that we should be considering for data centers. There’s no end in sight for demand.”

From a sustainability standpoint, she pointed out that:

- Reusing existing buildings avoids a portion of the embodied carbon tied up in concrete and steel.

- Combining reuse with factory-built, pre-tested modular systems can yield both higher uptime and faster deployment.

- For hyperscale-class projects (100 MW-plus), behind-the-meter power plus adaptive reuse may be the only realistic path in many markets.

At the same time, Tedestedt raised a pointed cultural question that landed with the room:

“How did we go from ‘you can’t cook on natural gas at home’ to building tens of gigawatts of natural-gas generation for data centers in a year?” she asked. “What happened to the sustainability story?”

The panel’s collective answer was nuanced rather than neat. Johns defended the central role of gas and RNG in a high-demand transition period. Lawson-Shanks pointed toward nuclear and hydrogen as future complements. Tedestedt emphasized efficiency, reuse, and smarter infrastructure as near-term mitigation.

The exchange underscored an uncomfortable truth: scaling AI will require confronting tradeoffs between speed, reliability, and sustainability in very public ways.

AI Helping Build AI: Design and Operations Get Smarter

In the audience Q&A, one attendee asked the obvious meta-question:

“We’re doing all this to make AI run. Can we also use AI to make some of these problems better?”

Lawson-Shanks said Aligned is already doing so:

- Using AI-driven tools for logistics, such as systems that optimize equipment movements and scheduling.

- Employing platforms like Foresight to analyze construction schedules (P6 files) and surface schedule and resource contention in minutes, rather than waiting weeks for manual analysis.

- Trialing AI agents for cooling optimization, a descendant of work first done at Google DeepMind, to anticipate heat and load fluctuations and modulate valves and flows in real time.

He also pointed to the future of generative design for building structures and systems—akin to how Formula 1 teams use generative tools to optimize chassis designs.

“If you’re putting a billion dollars into a building, you’re not going to do that on day one,” he cautioned. “But as tools mature, we’ll see more organic, optimized structures and material choices that reduce both cost and embodied carbon.”

Will Enterprise Data Centers Be Reborn for AI Inference?

In a rapid-fire “speed round,” Farney posed one last strategic question to the panel:

After 15 years of sending workloads to the cloud and stranding power on-prem, will enterprises see a rebirth of the enterprise data center—specifically for GPU-driven AI inference?

The panel didn’t hesitate.

“Yeah.”

“Yeah.”

“Yeah.”

Their reasoning, woven through the earlier discussion, was straightforward:

- Many enterprises will never put certain systems fully in the cloud, whether for regulatory, security, or data-sovereignty reasons.

- They increasingly want to own their AI destiny, developing models and inference stacks that are deeply tied to internal data and processes.

- The emerging “AI inference edge”—small, dense deployments close to users and data sources—is a natural fit for a next generation of enterprise-class facilities, often built on adaptive reuse and powered by a blend of grid and behind-the-meter sources.

The Road Ahead: From Constraint to Creative Deployment

If Farney opened the session with a “perfect storm of constraint,” the panel closed it with something closer to a roadmap.

- Adaptive reuse offers a way to shortcut interconnection timelines, avoid some embodied carbon, and reinvigorate communities.

- Power-rich and power-adjacent sites—whether near generation, pipelines, or future reactors—are emerging as strategic anchors for AI campuses.

- Behind-the-fence generation, microgrids, and virtual pipelines are no longer exotic; they’re fast becoming table stakes for anyone playing at AI scale.

- Liquid cooling and modular infrastructure are the primary levers for unlocking more usable IT power and compressing time-to-revenue.

- And increasingly, AI itself will help design, build, and operate the infrastructure underpinning the AI era.

As vacancy tightens, power queues lengthen, and GPU clusters grow heavier, denser, and more expensive, the industry’s ability to reuse, re-route, and re-invent sites and power strategies will be the difference between sitting on the sidelines and actually scaling AI.