Ten years ago, everybody was fascinated by the cloud. It was the new thing, and companies that adopted it rapidly saw tremendous growth. Salesforce, for example, positioned itself as a pioneer of this technology and saw great wins.

The tide is turning, though. Cloud providers still proclaim that they’re the most cost-effective and efficient solution for businesses of all sizes, but this claim increasingly clashes with day-to-day experience.

Cloud computing was touted as the solution for scalability, flexibility, and reduced operational burdens. Increasingly, though, companies are finding that, at scale, the costs and control limitations outweigh the benefits.

Attracted by free AWS credits, my CTO and I started out by setting up our entire company IT infrastructure on the cloud. However, we were shocked when we saw the costs ballooning after just a few software tests. We decided to invest in a high-quality server and moved our whole infrastructure onto it. And we’re not looking back: this decision is already saving us hundreds of euros per month.

We’re not the only ones: Dropbox already made this move in 2016 and saved close to $75 million over the ensuing two years. The company behind Basecamp, 37signals, completed this transition in 2022, and expects to save $7 million over five years.

We’ll dive deeper into the how and why of this trend and the cost savings that are associated with it. You can expect some practical insights that will help you make or influence such a decision at your company, too.

Cloud costs have been exploding

According to a recent study by Harness, 21% of enterprise cloud infrastructure spend—which will be equivalent to $44.5 billion in 2025—is wasted on underutilized resources. According to the study author, cloud spend is one of the biggest cost drivers for many software enterprises, second only to salaries.

The premise of this study is that developers must develop a keener eye for costs. However, I disagree. Cost control can only get you so far, and many smart developers are already spending inordinate amounts of their time on cost control instead of building actual products.

Cloud costs have a tendency to balloon over time: Storage costs per GB of data might seem low, but when you’re dealing with terabytes of data—which even we as a three-person startup are already doing—costs add up very quickly. Add to this retrieval and egress fees, and you’re faced with a bill you cannot unsee.
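To make this arithmetic concrete, here is a minimal sketch of how storage, egress, and retrieval fees combine into a monthly bill. The per-GB prices in `CloudRates` and the example volumes are hypothetical placeholders, not quotes from any provider; substitute the numbers from your own price list and usage reports.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CloudRates:
    """Hypothetical per-GB prices in USD; replace with your provider's price list."""
    storage_per_gb_month: float = 0.023
    egress_per_gb: float = 0.09
    retrieval_per_gb: float = 0.01


def monthly_data_bill(stored_gb, egress_gb, retrieved_gb, rates=CloudRates()):
    """Break a monthly data bill down into storage, egress, and retrieval."""
    storage = stored_gb * rates.storage_per_gb_month
    egress = egress_gb * rates.egress_per_gb
    retrieval = retrieved_gb * rates.retrieval_per_gb
    return {
        "storage": storage,
        "egress": egress,
        "retrieval": retrieval,
        "total": storage + egress + retrieval,
    }


# Assumed example: 20 TB stored, 5 TB downloaded and retrieved per month.
bill = monthly_data_bill(stored_gb=20_000, egress_gb=5_000, retrieved_gb=5_000)
for item, cost in bill.items():
    print(f"{item:>10}: ${cost:,.2f}")
```

With these placeholder rates, moving a quarter of the stored data out of the cloud costs about as much as storing all of it for a month, which is the effect described above.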

Steep retrieval and egress fees serve only one purpose: cloud providers want to incentivize you to keep as much data as possible on the platform, so they can make money off every operation. If you download data from the cloud, it will cost you inordinate amounts of money.

Variable costs based on CPU and GPU usage often spike during high-performance workloads. According to a CNCF report, almost half of Kubernetes adopters exceeded their budget as a result. Kubernetes is an open-source container orchestration system that is often used for cloud deployments.

The pay-per-use model of the cloud has its advantages, but billing becomes unpredictable as a result. Costs can then explode during usage spikes. Cloud add-ons for security, monitoring, and data analytics also come at a premium, which often increases costs further.

As a result, many IT leaders have started migrating back to on-premises servers. A 2023 survey by Uptime found that 33% of respondents had repatriated at least some production applications in the past year.

Cloud providers have not restructured their billing in response to this trend. One could argue that doing so would seriously impact their profitability, especially in a largely consolidated market where competitive pressure from upstarts and outsiders is limited. As long as this is the case, the trend towards on-premises is expected to continue.

Cost efficiency and control

There is a reason that cloud providers tend to advertise so much to small firms and startups. The initial setup costs of a cloud infrastructure are low because of pay-as-you-go models and free credits.

The easy setup can be a trap, though, especially once you start scaling. (At my firm, we noticed our costs going out of control even before we scaled to a decent extent, simply because we handle large amounts of data.) Monthly costs for on-premises servers are fixed and predictable; costs for cloud services can quickly balloon beyond expectations.

As mentioned before, cloud providers also charge steep data egress fees, which can quickly add up when you’re considering a hybrid infrastructure.

Security costs can initially be higher on-premises. On the other hand, you have full control over everything you implement. Cloud providers cover infrastructure security, but you remain responsible for data security and configuration. This often requires paid add-ons.

A round-up can be found in the table above. On the whole, an on-premises infrastructure comes with higher setup costs and needs considerable know-how. This initial investment pays off quickly, though, because you tend to have very predictable monthly costs and full control over additions like security measures.

There are plenty of prominent examples of companies that have saved millions by moving back on-premises. Whether this is a good choice for you depends on several factors, though, which need to be assessed carefully.

Should you move back on-premises?

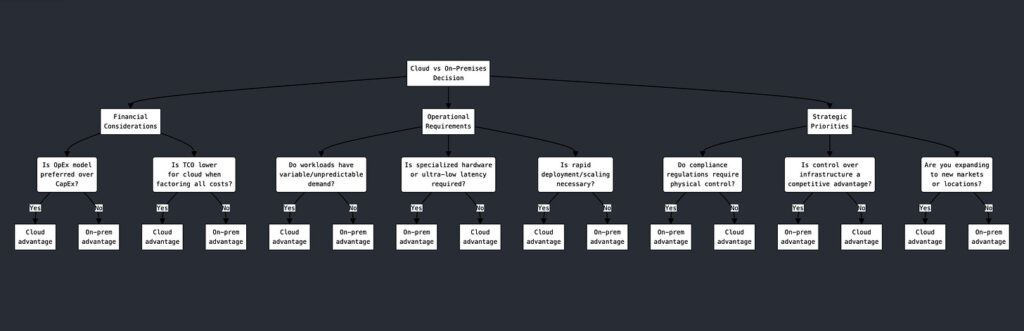

Whether you should make the shift back to server racks depends on several factors. The most important considerations in most cases are financial, operational, and strategic.

From a financial point of view, your company’s cash structure plays a big role. If you prefer lean capital expenditures and have no problem with high operational costs every month, then you should remain on the cloud. If, however, you can afford a higher capital expenditure up front and want to stop bleeding cash afterwards, you should move on-premises.

At the end of the day, though, the total cost of ownership (TCO) is key. If your total costs on the cloud are consistently lower than those of running servers yourself, then you should absolutely stay on the cloud.
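One simple way to frame this comparison is a break-even calculation: how many months does it take until the up-front hardware investment is recovered by lower monthly costs? The sketch below assumes constant monthly costs and ignores hardware depreciation, staff time, and financing; the function names and all figures are illustrative assumptions.

```python
import math


def break_even_month(upfront_capex, on_prem_monthly, cloud_monthly):
    """First month in which cumulative on-prem cost is no higher than cloud cost.

    Returns None if on-premises never catches up, i.e. the cloud is cheaper
    (or equally expensive) every month.
    """
    monthly_saving = cloud_monthly - on_prem_monthly
    if monthly_saving <= 0:
        return None
    return math.ceil(upfront_capex / monthly_saving)


def cumulative_costs(months, upfront_capex, on_prem_monthly, cloud_monthly):
    """Cumulative (on_prem, cloud) spend after the given number of months."""
    on_prem = upfront_capex + months * on_prem_monthly
    cloud = months * cloud_monthly
    return on_prem, cloud


# Assumed figures: a 6,000 server, 150/month to run it, 650/month on the cloud.
month = break_even_month(upfront_capex=6000, on_prem_monthly=150, cloud_monthly=650)
print("Break-even month:", month)
print("Cumulative costs at break-even:", cumulative_costs(month, 6000, 150, 650))
```

If `break_even_month` returns `None`, the numbers favor staying on the cloud. If it returns a value well within the expected lifetime of the hardware, repatriation deserves a closer look.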

From an operational point of view, staying on the cloud can make sense if you often face spikes in usage. On-premises servers can only carry so much traffic; cloud servers scale pretty seamlessly in proportion to demand. If expensive and specialized hardware is easier for you to access on the cloud, this is also a point in favor of staying there. On the other hand, if you are worried about complying with specific regulations (like GDPR, HIPAA, or CSRD), then the shared-responsibility model of cloud services is likely not for you.

Strategically speaking, having full control of your infrastructure can be an advantage. It keeps you from getting locked in with a vendor and having to accept whatever they bill you and whichever services they are able to offer. If you plan a geographic expansion or need to deploy new services rapidly, then the cloud can be advantageous, though. In the long run, however, going on-premises might make sense even when you’re expanding geographically or in your scope of services, due to increased control and lower operational costs.

On the whole, if you value predictability, control, and compliance, you should consider running on-premises. If, on the other hand, you value flexibility, then staying on the cloud might be your better choice.

How to repatriate easily

If you are considering repatriating your services, here is a brief checklist to follow:

- Assess Current Cloud Usage: Inventory applications and data volume.

- Cost Analysis: Calculate current cloud costs vs. projected on-prem costs.

- Select On-Prem Infrastructure: Servers, storage, and networking requirements.

- Minimize Data Egress Costs: Use compression and schedule transfers during off-peak hours.

- Security Planning: Firewalls, encryption, and access controls for on-prem.

- Test and Migrate: Pilot migration for non-critical workloads first.

- Monitor and Optimize: Set up monitoring for resources and adjust.
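As an illustration of the egress step in the checklist above, the following sketch compresses a payload with Python’s standard `gzip` module and estimates the transfer fee before and after. The price of 0.09 per GB and the sample CSV data are assumptions for demonstration only; real compression ratios depend heavily on the kind of data you hold.

```python
import gzip


def egress_cost(num_bytes, price_per_gb):
    """Transfer fee for the given number of bytes (1 GB taken as 1e9 bytes)."""
    return num_bytes / 1e9 * price_per_gb


def compare_egress(payload: bytes, price_per_gb: float = 0.09):
    """Compare size and estimated egress fee of raw vs. gzip-compressed data."""
    if not payload:
        raise ValueError("payload must not be empty")
    compressed = gzip.compress(payload)
    return {
        "raw_bytes": len(payload),
        "compressed_bytes": len(compressed),
        "ratio": len(compressed) / len(payload),
        "raw_cost": egress_cost(len(payload), price_per_gb),
        "compressed_cost": egress_cost(len(compressed), price_per_gb),
    }


# Highly repetitive sample data; real-world data usually compresses less well.
sample = b"timestamp,sensor_id,value\n" + b"2024-01-01T00:00:00,42,3.14\n" * 10_000
result = compare_egress(sample)
print(f"Compressed to {result['ratio']:.1%} of the original size")
```

Running this kind of estimate on a representative sample of your own data before the migration gives you a rough idea of how much of the egress bill compression can actually remove.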

Repatriation is not just for enterprise companies that make the headlines. As the example of my firm shows, even small startups need to consider it. The earlier you make the migration, the less cash you’ll bleed.

The bottom line: Cloud is not dead, but the hype around it is dying

Cloud services aren’t going anywhere. They offer flexibility and scalability, which are unmatched for certain use cases. Startups and companies with unpredictable or rapidly growing workloads still benefit greatly from cloud solutions.

That being said, even early-stage companies can benefit from on-premises infrastructure, for example if the large data loads they’re handling would make the cloud bill balloon out of control. This was the case at my firm.

The cloud has often been marketed as a one-size-fits-all solution for everything from data storage to AI workloads. We can see that this is not the case; the reality is more nuanced. As companies scale, the costs, compliance challenges, and performance limitations of cloud computing become impossible to ignore.

The hype around cloud services is dying because experience is showing us that there are real limits and plenty of hidden costs. In addition, cloud providers often cannot adequately provide security solutions, compliance options, and user control unless you pay a hefty premium for all of this.

Most companies will likely adopt a hybrid approach in the long run: On-premises offers control and predictability; cloud servers can jump into the fray when demand from users spikes.
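The hybrid idea can be sketched in a few lines: serve demand from on-premises hardware up to its capacity and send only the overflow to the cloud. The function name, the capacity of 1,000 requests per hour, and the demand figures below are hypothetical; a real setup would need a load balancer and autoscaling rather than a pure calculation.

```python
def split_load(demand, on_prem_capacity):
    """Split demand into (on_prem, cloud): fill on-prem first, burst the rest."""
    if demand < 0 or on_prem_capacity < 0:
        raise ValueError("demand and capacity must be non-negative")
    on_prem = min(demand, on_prem_capacity)
    cloud = demand - on_prem
    return on_prem, cloud


# Assumed hourly request counts, with on-prem capacity of 1,000 requests/hour.
hourly_demand = [300, 450, 1200, 800, 2000]
plan = [split_load(d, on_prem_capacity=1000) for d in hourly_demand]

for demand, (on_prem, cloud) in zip(hourly_demand, plan):
    print(f"demand={demand:>5}  on_prem={on_prem:>5}  cloud={cloud:>5}")
```

In this sketch the cloud is only billed for the two hours in which demand exceeds local capacity, while the predictable baseline stays on hardware you own.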

There’s no real one-size-fits-all solution. However, there are specific criteria that should help guide your decision. Like every hype, this one has its ebbs and flows. The fact that cloud services are no longer hyped does not mean that you need to go all-in on server racks now. It does, however, invite deeper reflection about the advantages that this trend offers for your company.