
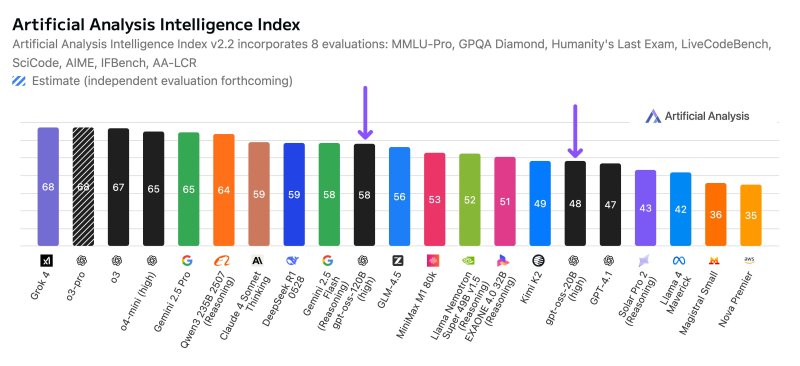

Google’s Gemini 2.5 Pro, which the company calls its most intelligent model ever, quietly took the developer world by storm.

After seeing strong developer interest, Google announced it would increase rate limits for Gemini 2.5 Pro and offer the model at a lower price than many of its competitors. The company did not release pricing at launch.

“We’ve seen incredible developer enthusiasm and early adoption of Gemini 2.5 Pro, and we’ve been listening to your feedback,” Google said in a blog post today. “To make this powerful model available to more developers, we’re moving Gemini 2.5 Pro into public preview in the Gemini API in Google AI Studio today, with Vertex AI rolling out shortly.”

Gemini 2.5 Pro is the first experimental Google model to feature higher rate limits and billing.

Google said developers using Gemini 2.5 Pro in public preview, priced at $1.25 per million input tokens, will see increased rate limits. The experimental version of the model will remain free but have lower rate limits.

Heading off competitors

Gemini 2.5 Pro’s pricing is competitive and significantly lower than that of competitors like Anthropic and OpenAI.

As previously mentioned, Gemini 2.5 Pro is $1.25 per million input tokens and $10 per million output tokens. Social media users expressed surprise that Google could offer such a powerful model at so low a price, noting that it’s “about to get wild.”

Anthropic offers Claude 3.7 Sonnet, a model comparable to Gemini 2.5 Pro, at $3 per million input tokens and $15 per million output tokens. On its site, Anthropic says that Claude 3.7 Sonnet users can save up to 90% of the cost if they use prompt caching.

OpenAI’s o1 reasoning model costs $15 per million input tokens and $60 per million output tokens. However, cached inputs cost $7.50 per million tokens. Its other reasoning model, o3-mini, is cheaper at $1.10 per million input tokens and $4.40 per million output tokens, but o3-mini is a smaller reasoning model. For non-reasoning models, OpenAI priced GPT-4o at $2.50 per million input tokens and $10 per million output tokens.
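To make the comparison concrete, here is a minimal sketch that turns the per-million-token prices quoted above into a dollar cost for a given workload. The function name `request_cost` and the sample workload are illustrative assumptions, not anything published by the vendors, and the calculation ignores prompt caching discounts, free tiers and any other pricing conditions not described in this article.

```python
# Per-million-token list prices in USD, as quoted in this article:
# (input price, output price). Caching discounts are not modeled.
PRICES = {
    "Gemini 2.5 Pro": (1.25, 10.00),
    "Claude 3.7 Sonnet": (3.00, 15.00),
    "OpenAI o1": (15.00, 60.00),
    "OpenAI o3-mini": (1.10, 4.40),
    "OpenAI GPT-4o": (2.50, 10.00),
}


def request_cost(model, input_tokens, output_tokens):
    """Return the estimated USD cost for the given token counts.

    Hypothetical helper for illustration only.
    """
    input_price, output_price = PRICES[model]
    total = input_tokens * input_price + output_tokens * output_price
    return total / 1_000_000


if __name__ == "__main__":
    # Assumed sample workload: 2M input tokens and 500K output tokens.
    for model in PRICES:
        cost = request_cost(model, 2_000_000, 500_000)
        print(f"{model}: ${cost:.2f}")
```

For that assumed workload, the quoted prices work out to $7.50 on Gemini 2.5 Pro, $13.50 on Claude 3.7 Sonnet and $60.00 on o1. The gap depends heavily on the input-to-output ratio, since output tokens are several times more expensive than input tokens at every vendor listed.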

Gemini 2.5 Pro demand

Google released Gemini 2.5 Pro somewhat quietly, adding the experimental version of the model to Gemini Advanced. Since its launch a few weeks ago, several developers and users have found it compelling.

VentureBeat’s Ben Dickson played with Gemini 2.5 Pro and declared it may be the “most useful reasoning model yet.”

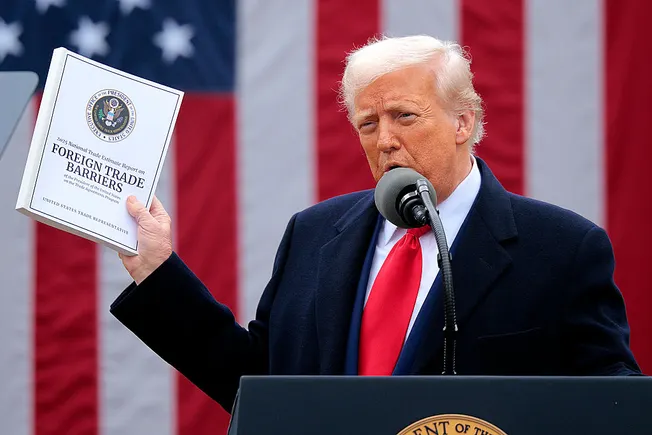

Effectively pricing reasoning models is the next big battleground for AI model developers. DeepSeek’s low cost for DeepSeek R1 caused a ruckus among enterprises. DeepSeek continues to put out models at a lower price than most of the more prominent model developers, putting even more pressure on Google, OpenAI and Anthropic to offer robust and extremely capable models at affordable prices.
