The UK government has confirmed that the windfall tax is scheduled to end in 2030, and has launched a consultation on what its successor will look like.

The government had already announced the 2030 end date for the controversial tax in the Autumn Budget, when chancellor Rachel Reeves pushed it back.

The government has said that authorities will work with industry, communities, trade unions and wider organisations to determine what the new regime could look like, and to ensure it can respond to any future shocks in commodity prices.

The government said that the new regime will provide long-term certainty to the oil and gas industry, helping support investments.

The regime aims to protect jobs in existing and future industries and deliver a fair return for the nation during times of unusually high prices.

The highly controversial energy profits levy (EPL), or windfall tax, brought the headline rate of tax on operators to 78% late last year after Keir Starmer’s government hiked the tax imposed on UK operators by three percentage points.

The oil and gas industry has long rallied against the tax regime in the UK.

Energy secretary Ed Miliband said: “The North Sea will be at the heart of Britain’s energy future. For decades, its workers, businesses and communities have helped power our country and our world.

“Oil and gas production will continue to play an important role and, as the world embraces the drive to clean energy, the North Sea can power our Plan for Change and clean energy future in the decades ahead.

“This consultation is about a dialogue with North Sea communities – businesses, trade unions, workers, environmental groups and communities – to develop a plan that enables us to take advantage of the tremendous opportunities of the years ahead.”

Commitment to existing North Sea fields

In addition, the consultation commits to maintaining existing oil and gas fields for their lifetime.

However, it will also implement the Labour government’s plan to not issue new licences to explore new fields.

Both are policies that Starmer and his party have talked about implementing since the 2024 election campaign.

The consultation forms part of the government’s plan to ensure a phased transition for the North Sea, balancing energy security against growing clean industries and creating an estimated tens of thousands more jobs in offshore renewables by 2030.

Additional proposals being considered could see changes to the role of the North Sea Transition Authority (NSTA) as the regulator of the UK's oil and gas, offshore hydrogen and carbon storage industries.

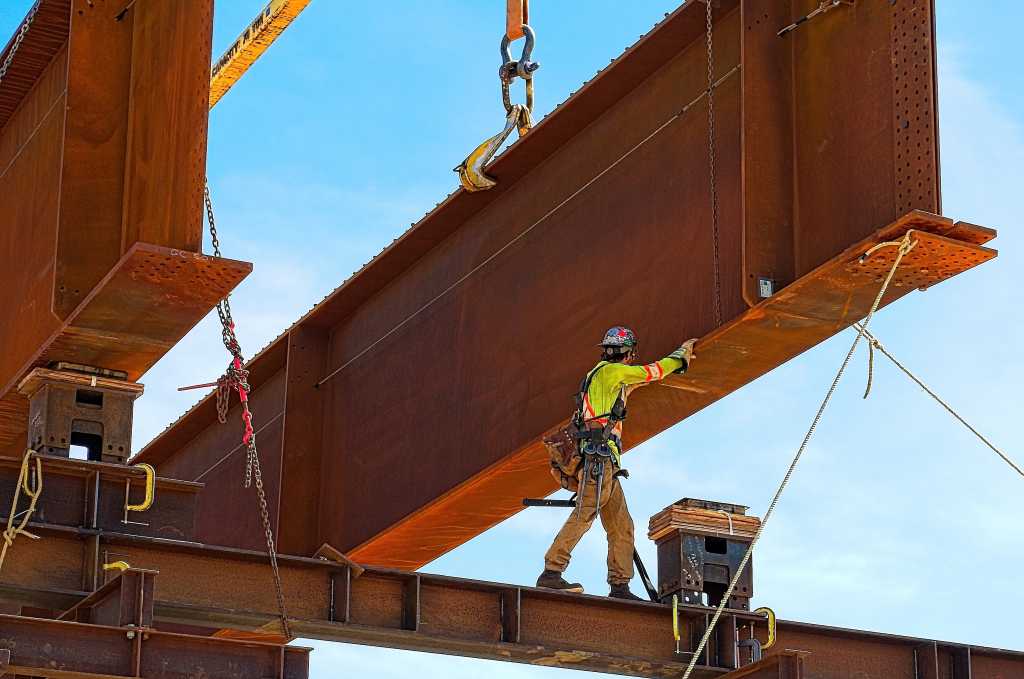

© Supplied by OEUK

This includes ensuring the authority has the regulatory framework it needs to support the government’s vision for the long-term future of the North Sea and enable an orderly and prosperous transition to clean energy.

Offshore Energies UK (OEUK) chief executive David Whitehouse said: “The UK offshore energy industry, including its oil and gas sector, is responsible for thousands of jobs across Scotland and the UK, and today the government has committed to meaningful consultation on the long-term future of our North Sea.

“That is important and welcomed. Energy policy underpins our national security – how we build a clean energy future and leverage our proud heritage matters.

“Today’s consultations, on both the critical role of the North Sea in the energy transition and how the taxation regime will respond to unusually high oil and gas prices, will help to begin to give certainty to investors and create a stable investment environment for years to come.

“We will continue to work with government and wider stakeholders to ensure a future North Sea which delivers economic growth and supports the communities that rely on this sector and workers right across the UK.”
