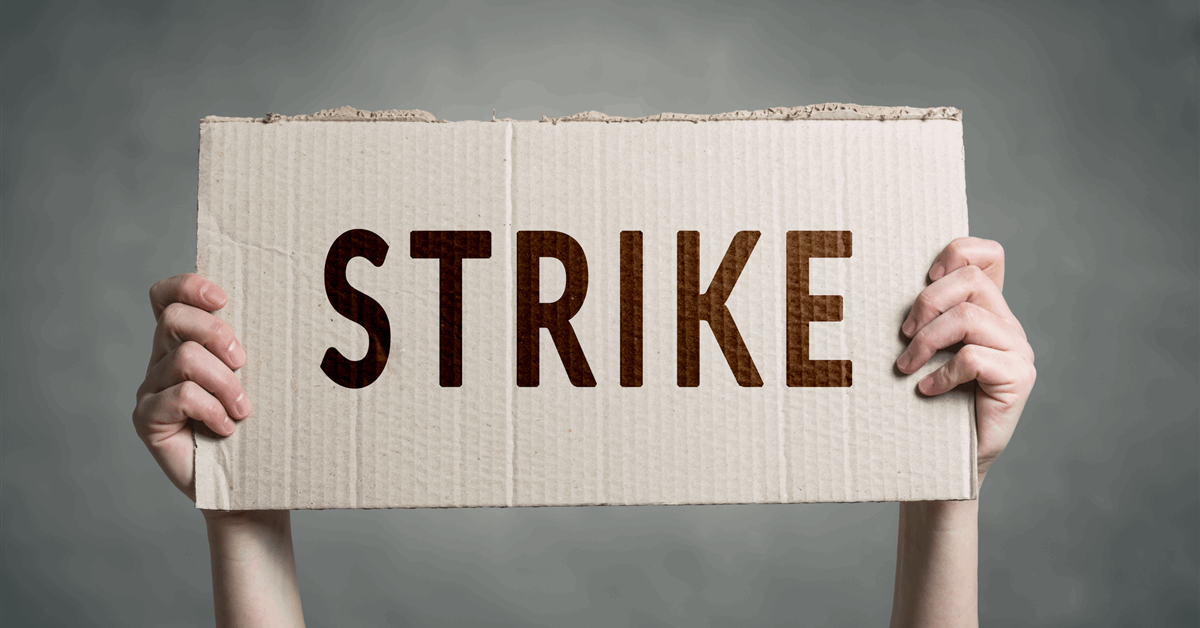

UK union Unite announced that around 350 offshore workers are “traveling towards strike action in disputes with offshore operators and companies”, in a statement sent to Rigzone by the Unite team recently. Workers employed by Repsol, CNOOC, and MCL Medics are all involved in strike ballots or forthcoming industrial action on offshore platforms, Unite highlighted in the statement.

“Over 200 Repsol workers have rejected several unacceptable pay offers with the latest amounting to a three per cent increase in basic pay,” the union said in the statement. “Unite can confirm its membership has emphatically backed strike by 92.1 per cent after rejecting the latest pay offer,” it added.

The union noted in the statement that industrial action is now set to hit Repsol’s Arbroath, Auk, Bleo Holm, Claymore, Clyde, Fulmar, Montrose, and Piper Bravo assets “in a series of stoppages”. Unite said one-day strikes will commence at 06:00 on August 6, 13, and 28, and added that a further stoppage will take place on September 4. A continuous overtime ban will also be in operation, according to the statement.

The union claimed in the statement that “the impact of industrial action will lead to the shutting down of platforms as the workers involved include control room operators, supervisors, electricians, technicians, mechanics and HSE advisors”.

A further 130 CNOOC workers are being balloted on industrial action in a dispute over jobs, pay, and conditions on the Buzzard, Scott, and Golden Eagle platforms, Unite said in the statement. It noted that the workers have rejected several offers concerning pay and allowances, with the latest amounting to a 4.25 percent increase in basic pay. The union highlighted in the statement that a ballot on industrial action opened on July 25 and will close on August 28. “Unite believes that the impact of