For risk managers at electric utilities, the specter of wildfire and extreme weather events looms large. We’ve all heard the term “Black Swan”: the unpredictable, high-impact event that reshapes our understanding of risk. Yet is the electric utility industry truly equipped to anticipate and mitigate these increasingly frequent catastrophes?

My passion for this topic stems from a career spent on all sides of the wildfire problem. From issuing red flag warnings at the National Weather Service to analyzing risk at leading electric utilities, I’ve seen firsthand the critical importance of context. It’s not enough to simply react to current weather conditions. Electric utilities must understand the climatological backdrop against which these events unfold.

The “Black Swan” concept was popularized in finance, where it describes events that are highly improbable yet massively consequential. For electric utilities, the increasing frequency of wildfire and extreme weather events demands a paradigm shift. Utilities can no longer rely solely on traditional weather forecasts. They must delve into climatology to understand the risk well enough to plan operations effectively.

The October 2007 firestorm around San Diego, CA, was a stark reminder of this reality. I recall working those red flag warnings at the National Weather Service and conveying the message of high winds and fire danger. But a retired utility leader once told me that if they had truly understood how severe that event was going to be, things might have been different.

This single comment illuminated a crucial gap for me: the disconnect between a general warning and the visceral understanding of an unprecedented event. The severe conditions fueling the 2007 firestorm (maximum wind gusts akin to a Category 2 hurricane, combined with extremely dry conditions) were of a magnitude beyond anything seen in San Diego County in modern history. It was a Black Swan, and its impact was devastating.

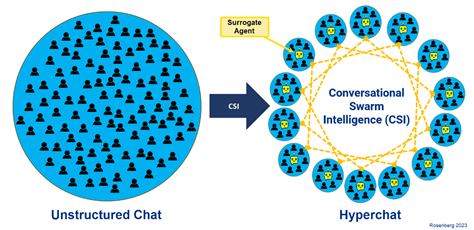

The tragedy spurred electric utilities to develop a higher-resolution approach, one that weighs current weather, fire risk, and historical data together to grasp the severity of impending events. Imagine an emergency manager seeing, a week in advance, a chart of daily wildfire risk with a spike far exceeding anything in the past 30 years. This is the power of contextual climatology.

Understanding local fire weather climatology is paramount because the overwhelming majority of catastrophic asset-caused ignitions occur during conditions far exceeding the norm for their location. It’s not just about forecasting the weather; it’s about placing that forecast within the context of the past 30 years. Is this just a normal windy day, or are we facing an event in the top 0.5% of historical extremes? If our historical data doesn’t include past Black Swan events, how can we possibly anticipate future ones? By building a robust climatological record, electric utilities can identify anomalies and prepare for events that might otherwise catch them off guard.
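To make the idea of "placing a forecast in context" concrete, here is a minimal sketch in Python. It ranks a forecast value against a historical record and flags it when it falls in the top 0.5% of that record. The function names, the 99.5 cutoff parameter, and the synthetic gust data are all assumptions made for illustration; this is not any utility's actual method, and real climatologies would come from observed or modeled data for each location.

```python
from bisect import bisect_left


def percentile_rank(history, value):
    """Percent of historical values strictly below `value` (0 to 100)."""
    ordered = sorted(history)
    return 100.0 * bisect_left(ordered, value) / len(ordered)


def classify(history, forecast, extreme_pct=99.5):
    """Return (rank, is_extreme) for a forecast against the record.

    `extreme_pct=99.5` mirrors the "top 0.5%" framing; it is an
    illustrative default, not an operational threshold.
    """
    rank = percentile_rank(history, forecast)
    return rank, rank >= extreme_pct


# Synthetic stand-in for roughly 30 years of daily peak gusts (mph).
# Values cycle through 10..49; real data would replace this list.
history = [10 + (day % 40) for day in range(30 * 365)]

normal_rank, normal_extreme = classify(history, 25)
severe_rank, severe_extreme = classify(history, 60)

print(f"25 mph forecast: {normal_rank:.1f}th percentile, extreme={normal_extreme}")
print(f"60 mph forecast: {severe_rank:.1f}th percentile, extreme={severe_extreme}")
```

A forecast that exceeds every value in the record ranks at 100, which is exactly the case the record cannot describe well. That is one reason the length and quality of the historical record matter as much as the forecast itself.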

Prior to the Labor Day 2020 fires in Oregon, many areas west of the Cascades had virtually no significant wildfire history. Yet multiple large and catastrophic wildfires erupted in extreme fire weather conditions that were clearly outside the known climatology. The anomaly was visible in the data as a stark departure from the norm. Electric utilities can apply this approach to wildfire modeling as well, simulating millions of wildfires, both in real time and retrospectively, to understand the range of potential outcomes based on historical data. By comparing current forecasts to the worst conditions of the past 30 years, they can better assess the true risk and vastly improve their decision-making.

Local climatology also informs our understanding of wind-related impacts on electric utility assets from vegetation contact. For instance, a eucalyptus tree in a wind-sheltered area will react differently to a 40 mph gust than one routinely exposed to strong winds. This difference underscores the importance of localized data in setting appropriate thresholds for preventative measures. Catastrophic wind-driven asset-caused ignitions almost invariably occur when wind conditions exceed the normal range for a given environment. When we operate above the 99th percentile of historical wind conditions, we enter a danger zone where the environment is primed for disaster.
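The point about localized thresholds can be sketched in a few lines of Python. The example below computes a 99th-percentile gust for each site from its own history, then checks the same 40 mph gust against each. The site names, the synthetic gust histories, and the nearest-rank percentile method are assumptions chosen for illustration; an operational system would use its own data and statistical approach.

```python
import math


def nearest_rank_percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of data at or below it."""
    ordered = sorted(values)
    # Multiply before dividing to avoid floating-point drift in the rank.
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def site_thresholds(gusts_by_site, pct=99.0):
    """Map each site to its own historical gust threshold at the given percentile."""
    return {
        site: nearest_rank_percentile(gusts, pct)
        for site, gusts in gusts_by_site.items()
    }


def exceeds_local_norm(gusts_by_site, site, forecast_gust, pct=99.0):
    """True when the forecast gust is above the site's own percentile threshold."""
    return forecast_gust > site_thresholds(gusts_by_site, pct)[site]


# Hypothetical gust histories (mph); real records would replace these.
gusts = {
    "sheltered_canyon": [5 + (i % 20) for i in range(1000)],   # 5..24 mph
    "exposed_ridge": [20 + (i % 40) for i in range(1000)],     # 20..59 mph
}

for site in gusts:
    flagged = exceeds_local_norm(gusts, site, 40)
    print(f"{site}: 40 mph gust above local 99th percentile? {flagged}")
```

With these made-up histories, the 40 mph gust is far outside the sheltered site's experience but unremarkable on the exposed ridge, which is why a single system-wide wind threshold can both over-warn and under-warn.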

For electric utilities, this means moving beyond simple red flag warnings and standard weather forecasts. It means investing in high-resolution climatological data, developing sophisticated modeling tools, and fostering a deep understanding of local fire weather and fuel patterns. By embracing a data-driven, context-rich approach, they can move from reactive mitigation to proactive prevention and gain the tools to see the Black Swan coming. Learn more about the modeling science behind this work and how that science is put into practice.