Sure, the clips you see in demo reels are cherry-picked to showcase a company’s models at the top of their game. But with the technology in the hands of more users than ever before—Sora and Veo 3 are available in the ChatGPT and Gemini apps for paying subscribers—even the most casual filmmaker can now knock out something remarkable.

The downside is that creators are competing with AI slop, and social media feeds are filling up with faked news footage. Video generation also uses up a huge amount of energy, many times more than text or image generation.

With AI-generated videos everywhere, let’s take a moment to talk about the tech that makes them work.

How do you generate a video?

Let’s assume you’re a casual user. There are now a range of high-end tools that allow pro video makers to insert video generation models into their workflows. But most people will use this technology in an app or via a website. You know the drill: “Hey, Gemini, make me a video of a unicorn eating spaghetti. Now make its horn take off like a rocket.” What you get back will be hit or miss, and you’ll typically need to ask the model to take another pass or 10 before you get more or less what you wanted.

So what’s going on under the hood? Why is it hit or miss—and why does it take so much energy? The latest wave of video generation models are what’s known as latent diffusion transformers. Yes, that’s quite a mouthful. Let’s unpack each part in turn, starting with diffusion.

What’s a diffusion model?

Imagine taking an image and adding a random spattering of pixels to it. Take that pixel-spattered image and spatter it again and then again. Do that enough times and you will have turned the initial image into a random mess of pixels, like static on an old TV set.

A diffusion model is a neural network trained to reverse that process, turning random static into images. During training, it gets shown millions of images in various stages of pixelation. It learns how those images change each time new pixels are thrown at them and, thus, how to undo those changes.

The upshot is that when you ask a diffusion model to generate an image, it will start off with a random mess of pixels and step by step turn that mess into an image that is more or less similar to images in its training set.

But you don’t want any image—you want the image you specified, typically with a text prompt. And so the diffusion model is paired with a second model—such as a large language model (LLM) trained to match images with text descriptions—that guides each step of the cleanup process, pushing the diffusion model toward images that the large language model considers a good match to the prompt.

An aside: This LLM isn’t pulling the links between text and images out of thin air. Most text-to-image and text-to-video models today are trained on large data sets that contain billions of pairings of text and images or text and video scraped from the internet (a practice many creators are very unhappy about). This means that what you get from such models is a distillation of the world as it’s represented online, distorted by prejudice (and pornography).

It’s easiest to imagine diffusion models working with images. But the technique can be used with many kinds of data, including audio and video. To generate movie clips, a diffusion model must clean up sequences of images—the consecutive frames of a video—instead of just one image.

What’s a latent diffusion model?

All this takes a huge amount of compute (read: energy). That’s why most diffusion models used for video generation use a technique called latent diffusion. Instead of processing raw data—the millions of pixels in each video frame—the model works in what’s known as a latent space, in which the video frames (and text prompt) are compressed into a mathematical code that captures just the essential features of the data and throws out the rest.

A similar thing happens whenever you stream a video over the internet: A video is sent from a server to your screen in a compressed format to make it get to you faster, and when it arrives, your computer or TV will convert it back into a watchable video.

And so the final step is to decompress what the latent diffusion process has come up with. Once the compressed frames of random static have been turned into the compressed frames of a video that the LLM guide considers a good match for the user’s prompt, the compressed video gets converted into something you can watch.

With latent diffusion, the diffusion process works more or less the way it would for an image. The difference is that the pixelated video frames are now mathematical encodings of those frames rather than the frames themselves. This makes latent diffusion far more efficient than a typical diffusion model. (Even so, video generation still uses more energy than image or text generation. There’s just an eye-popping amount of computation involved.)

What’s a latent diffusion transformer?

Still with me? There’s one more piece to the puzzle—and that’s how to make sure the diffusion process produces a sequence of frames that are consistent, maintaining objects and lighting and so on from one frame to the next. OpenAI did this with Sora by combining its diffusion model with another kind of model called a transformer. This has now become standard in generative video.

Transformers are great at processing long sequences of data, like words. That has made them the special sauce inside large language models such as OpenAI’s GPT-5 and Google DeepMind’s Gemini, which can generate long sequences of words that make sense, maintaining consistency across many dozens of sentences.

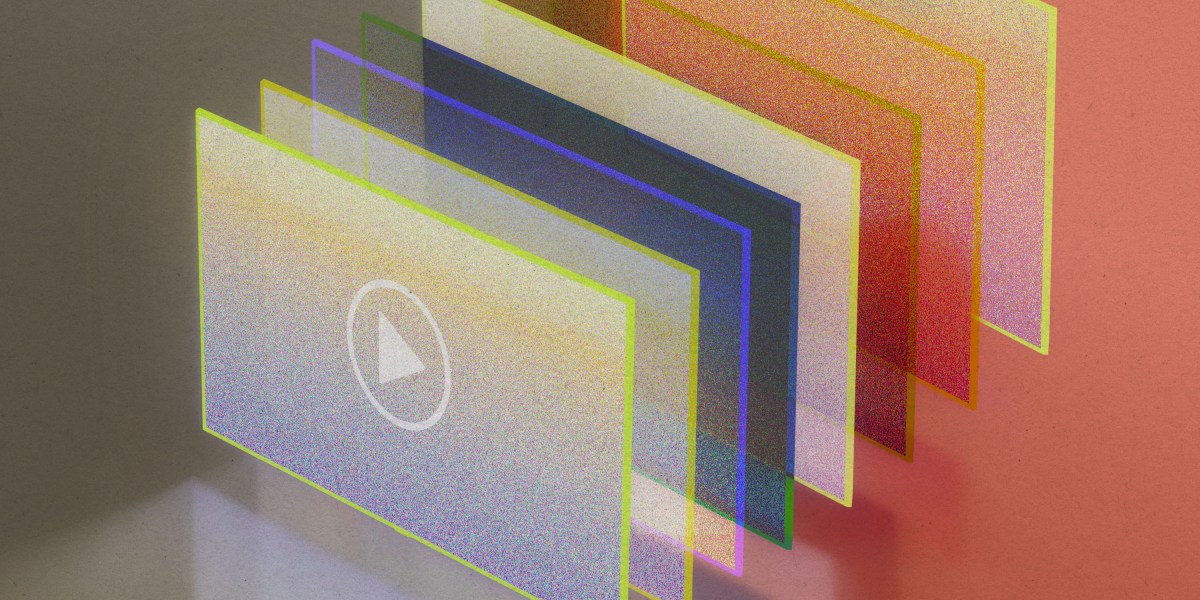

But videos are not made of words. Instead, videos get cut into chunks that can be treated as if they were. The approach that OpenAI came up with was to dice videos up across both space and time. “It’s like if you were to have a stack of all the video frames and you cut little cubes from it,” says Tim Brooks, a lead researcher on Sora.

Using transformers alongside diffusion models brings several advantages. Because they are designed to process sequences of data, transformers also help the diffusion model maintain consistency across frames as it generates them. This makes it possible to produce videos in which objects don’t pop in and out of existence, for example.

And because the videos are diced up, their size and orientation do not matter. This means that the latest wave of video generation models can be trained on a wide range of example videos, from short vertical clips shot with a phone to wide-screen cinematic films. The greater variety of training data has made video generation far better than it was just two years ago. It also means that video generation models can now be asked to produce videos in a variety of formats.

What about the audio?

A big advance with Veo 3 is that it generates video with audio, from lip-synched dialogue to sound effects to background noise. That’s a first for video generation models. As Google DeepMind CEO Demis Hassabis put it at this year’s Google I/O: “We’re emerging from the silent era of video generation.”

The challenge was to find a way to line up video and audio data so that the diffusion process would work on both at the same time. Google DeepMind’s breakthrough was a new way to compress audio and video into a single piece of data inside the diffusion model. When Veo 3 generates a video, its diffusion model produces audio and video together in a lockstep process, ensuring that the sound and images are synched.

You said that diffusion models can generate different kinds of data. Is this how LLMs work too?

No—or at least not yet. Diffusion models are most often used to generate images, video, and audio. Large language models—which generate text (including computer code)—are built using transformers. But the lines are blurring. We’ve seen how transformers are now being combined with diffusion models to generate videos. And this summer Google DeepMind revealed that it was building an experimental large language model that used a diffusion model instead of a transformer to generate text.

Here’s where things start to get confusing: Though video generation (which uses diffusion models) consumes a lot of energy, diffusion models themselves are in fact more efficient than transformers. Thus, by using a diffusion model instead of a transformer to generate text, Google DeepMind’s new LLM could be a lot more efficient than existing LLMs. Expect to see more from diffusion models in the near future!