For a long time, one of the common ways to start a new Node.js project has been to use a boilerplate template. These templates help developers reuse familiar code structures and implement standard features, such as access to cloud file storage. With the latest developments in LLMs, project boilerplates appear to be more useful than ever.

Building on this progress, I’ve extended my existing Node.js API boilerplate with a new tool, LLM Codegen. This standalone feature enables the boilerplate to automatically generate module code for any purpose based on text descriptions. The generated module comes complete with E2E tests, database migrations, seed data, and the necessary business logic.

History

I initially created a GitHub repository for a Node.js API boilerplate to consolidate the best practices I’ve developed over the years. Much of the implementation is based on code from a real Node.js API running in production on AWS.

I am passionate about vertical slicing architecture and Clean Code principles as a way to keep the codebase maintainable and clean. With recent advancements in LLMs, particularly their support for large contexts and their ability to generate high-quality code, I decided to experiment with generating clean TypeScript code based on my boilerplate. This boilerplate follows specific structures and patterns that I believe are of high quality. The key question was whether the generated code would follow the same patterns and structure. Based on my findings, it does.

To recap, here’s a quick highlight of the Node.js API boilerplate’s key features:

- Vertical slicing architecture based on DDD & MVC principles

- Services input validation using ZOD

- Decoupling application components with dependency injection (InversifyJS)

- Integration and E2E testing with Supertest

- Multi-service setup using Docker compose

Over the past month, I’ve spent my weekends formalizing the solution and implementing the necessary code-generation logic. Below, I’ll share the details.

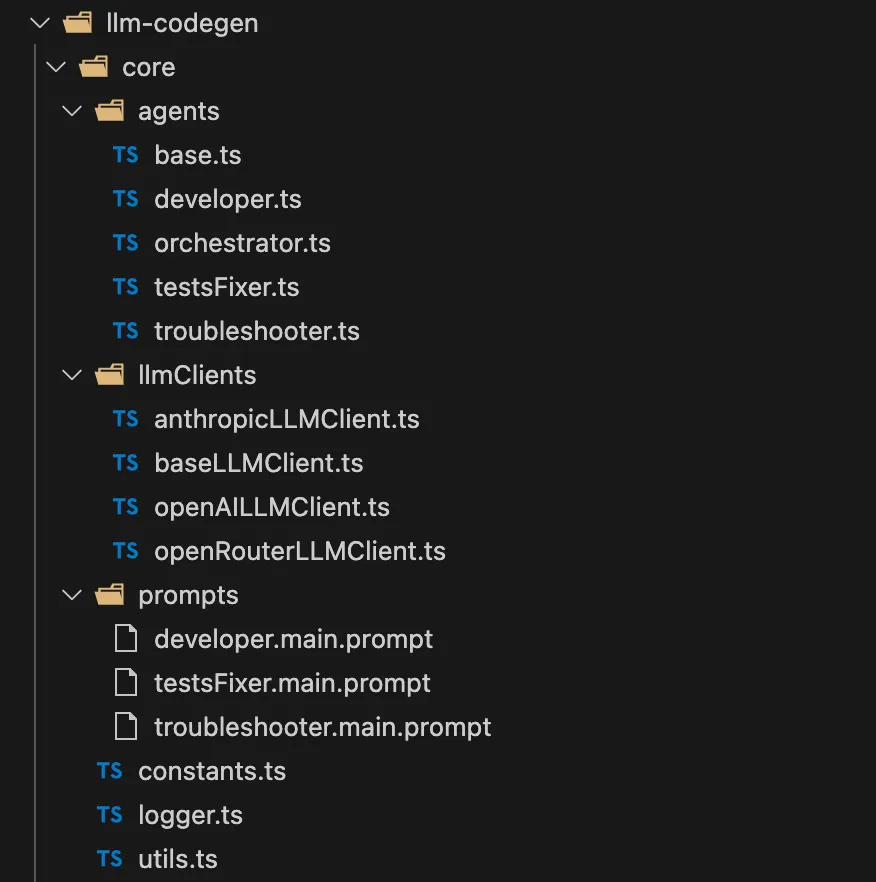

Implementation Overview

Let’s explore the specifics of the implementation. All code-generation logic lives at the project root level, inside the llm-codegen folder, so it is easy to find. The Node.js boilerplate code has no dependency on llm-codegen, so it can be used as a regular template without modification.

It covers the following use cases:

- Generating clean, well-structured code for a new module based on the input description. The generated module becomes part of the Node.js REST API application.

- Creating database migrations and extending seed scripts with basic data for the new module.

- Generating and fixing E2E tests for the new code and ensuring all tests pass.

The code generated in the first stage is clean and adheres to vertical slicing architecture principles. It includes only the business logic needed for CRUD operations. Compared to other code generation approaches, it produces clean, maintainable, and compilable code with valid E2E tests.

The second use case involves generating a DB migration with the appropriate schema and updating the seed script with the necessary data. This task is particularly well-suited to LLMs, which handle it exceptionally well.

The final use case is generating E2E tests, which help confirm that the generated code works correctly. While the E2E tests run, an SQLite3 database is used for migrations and seeds.

The main supported LLM clients are OpenAI and Claude.

How to Use It

To get started, navigate to the root folder llm-codegen and install all dependencies by running:

npm i

llm-codegen does not rely on Docker or any other heavy third-party dependencies, which keeps setup and execution straightforward. Before running the tool, make sure you set at least one *_API_KEY environment variable in the .env file with the API key for your chosen LLM provider. All supported environment variables are listed in the .env.sample file (OPENAI_API_KEY, CLAUDE_API_KEY, etc.). You can use OpenAI, Anthropic Claude, or OpenRouter LLaMA. As of mid-December 2024, OpenRouter LLaMA is, surprisingly, free to use. It’s possible to register here and obtain a token for free usage. However, the output quality of this free LLaMA model leaves room for improvement, as most of the generated code fails to pass the compilation stage.
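As a rough illustration of the "at least one *_API_KEY" requirement, the check could look something like the sketch below. This is not the tool's actual startup code: the helper names are hypothetical, and the environment is passed in as a plain object so the example stays self-contained. Only OPENAI_API_KEY and CLAUDE_API_KEY are variable names taken from the text above.

```typescript
// Hypothetical helpers, not part of llm-codegen itself.
type Env = Record<string, string | undefined>;

// Returns the names of all non-empty variables that end in _API_KEY.
function configuredApiKeys(env: Env): string[] {
  return Object.keys(env)
    .filter((key) => /_API_KEY$/.test(key))
    .filter((key) => (env[key] ?? "").trim().length > 0)
    .sort();
}

// Fails fast when no provider key is configured.
function assertAtLeastOneKey(env: Env): string[] {
  const keys = configuredApiKeys(env);
  if (keys.length === 0) {
    throw new Error("Set at least one *_API_KEY variable in .env (see .env.sample)");
  }
  return keys;
}

// Example with an in-memory environment instead of the real process environment.
const exampleKeys = assertAtLeastOneKey({
  OPENAI_API_KEY: "sk-example",
  CLAUDE_API_KEY: "", // empty values do not count as configured
  NODE_ENV: "development",
});
console.log(exampleKeys); // only OPENAI_API_KEY is reported
```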

To start llm-codegen, run the following command:

npm run start

Next, you’ll be asked to enter the module description and name. In the module description, you can specify all necessary requirements, such as entity attributes and required operations. The rest of the core work is performed by micro-agents: Developer, Troubleshooter, and TestsFixer.

Here is an example of a successful code generation:

Below is another example demonstrating how a compilation error was fixed:

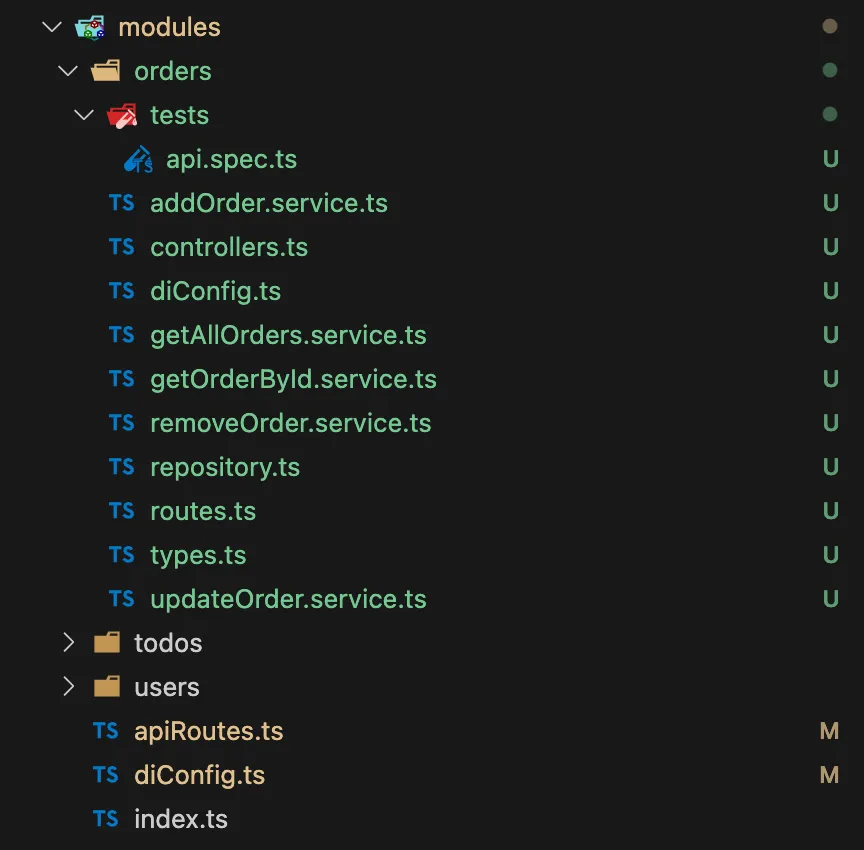

The following is an example of a generated orders module code:

A key detail is that you can generate code step by step, starting with one module and adding others until all required APIs are complete. This approach allows you to generate code for all required modules in just a few command runs.

How It Works

As mentioned earlier, all work is performed by three micro-agents, Developer, Troubleshooter, and TestsFixer, which are controlled by the Orchestrator. They run in the listed order, with the Developer generating most of the codebase. After each code generation step, a check is performed for missing files based on their roles (e.g., routes, controllers, services). If any files are missing, a new code generation attempt is made, with the prompt listing the missing files and including examples for each role. Once the Developer completes its work, TypeScript compilation begins. If any errors are found, the Troubleshooter takes over: it passes the errors to the prompt and waits for the corrected code. Finally, when compilation succeeds, the E2E tests are run. Whenever a test fails, the TestsFixer steps in with specific prompt instructions, aiming to get all tests passing while keeping the code clean.

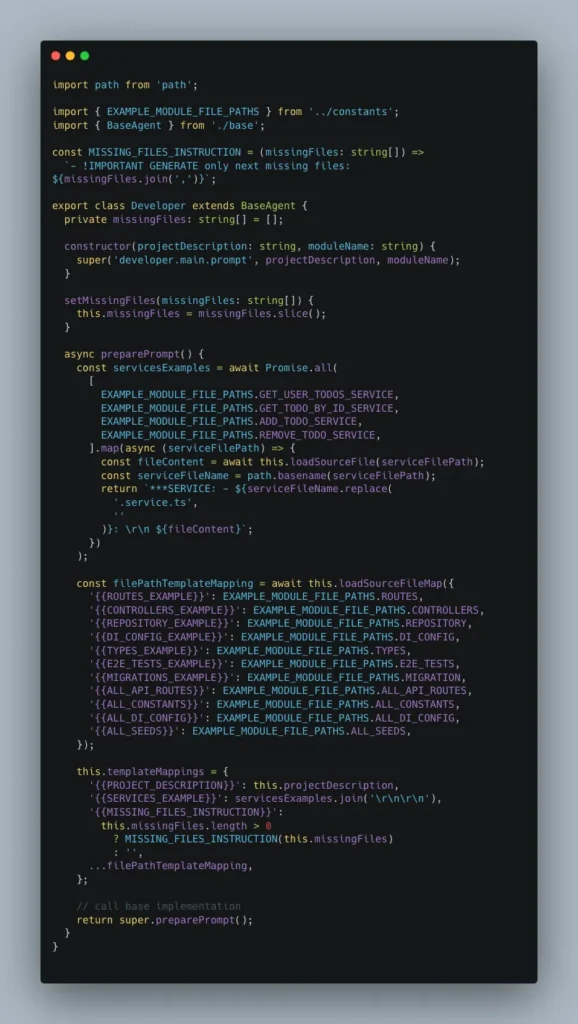
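The flow described above can be sketched roughly as follows. This is a simplified illustration under several assumptions, not the repository's Orchestrator: the `Pipeline` interface, the function names, the substring-based role check, and the retry limit are all hypothetical, and the steps are synchronous for brevity (real LLM calls would be asynchronous).

```typescript
// Hypothetical sketch of the Developer -> Troubleshooter -> TestsFixer flow.
type Files = Record<string, string>; // file path -> file content

interface Pipeline {
  develop(missingRoles: string[]): Files; // Developer
  compile(files: Files): string[]; // returns compiler errors
  troubleshoot(files: Files, errors: string[]): Files; // Troubleshooter
  runTests(files: Files): string[]; // returns failing tests
  fixTests(files: Files, failures: string[]): Files; // TestsFixer
}

// Roles follow the article's examples; matching by substring is an assumption.
const REQUIRED_ROLES = ["routes", "controller", "service"];

function findMissingRoles(files: Files): string[] {
  const paths = Object.keys(files);
  return REQUIRED_ROLES.filter(
    (role) => !paths.some((path) => path.indexOf(role) !== -1)
  );
}

// Runs check/fix rounds, applying at most maxAttempts fixes.
function repeatUntilClean(
  files: Files,
  check: (files: Files) => string[],
  fix: (files: Files, problems: string[]) => Files,
  maxAttempts: number
): Files {
  let current = files;
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    const problems = check(current);
    if (problems.length === 0) return current;
    current = fix(current, problems);
  }
  if (check(current).length > 0) {
    throw new Error("Problems remain after " + maxAttempts + " attempts");
  }
  return current;
}

function orchestrate(p: Pipeline, maxAttempts = 3): Files {
  // 1. Developer: generate, then regenerate while role files are missing.
  let files = repeatUntilClean(
    p.develop([]),
    findMissingRoles,
    (current, missing) => ({ ...current, ...p.develop(missing) }),
    maxAttempts
  );
  // 2. Troubleshooter: feed compiler errors back until compilation succeeds.
  files = repeatUntilClean(
    files,
    (f) => p.compile(f),
    (f, errors) => p.troubleshoot(f, errors),
    maxAttempts
  );
  // 3. TestsFixer: feed failing tests back until the E2E suite passes.
  return repeatUntilClean(
    files,
    (f) => p.runTests(f),
    (f, failures) => p.fixTests(f, failures),
    maxAttempts
  );
}
```

Treating all three stages as the same check-then-fix loop keeps the sketch short. A real implementation would likely also recompile after each test fix, since a change made to satisfy a test can reintroduce compilation errors.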

All micro-agents are derived from the BaseAgent class and actively reuse its base method implementations. Here is the Developer implementation for reference:

Each agent utilizes its specific prompt. Check out this GitHub link for the prompt used by the Developer.
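To illustrate the inheritance relationship in general terms, here is a minimal hypothetical sketch. Only the BaseAgent name and the agent roles come from the text above. The `LlmClient` type, the method names, and the prompt strings are assumptions made for the example and do not reproduce the repository's code or prompts.

```typescript
// Hypothetical sketch; the LLM client is modeled as a plain synchronous function.
type LlmClient = (prompt: string) => string;

abstract class BaseAgent {
  constructor(protected readonly llm: LlmClient) {}

  // Each agent supplies its own prompt.
  protected abstract buildPrompt(input: string): string;

  // Shared behavior reused by every agent: build the prompt, call the model,
  // and trim surrounding whitespace from the reply.
  run(input: string): string {
    return this.llm(this.buildPrompt(input)).trim();
  }
}

class Developer extends BaseAgent {
  protected buildPrompt(description: string): string {
    return "Generate a module for this description:\n" + description;
  }
}

class Troubleshooter extends BaseAgent {
  protected buildPrompt(errors: string): string {
    return "Fix these TypeScript compilation errors:\n" + errors;
  }
}
```

With this shape, adding another agent means writing one subclass with its own prompt, while the call-and-clean-up logic stays in a single place.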

After dedicating significant effort to research and testing, I refined the prompts for all micro-agents, resulting in clean, well-structured code with very few issues.

During development and testing, I ran the tool with various module descriptions, ranging from simple to highly detailed. Here are a few examples:

- The module responsible for library book management must handle endpoints for CRUD operations on books.

- The module responsible for the orders management. It must provide CRUD operations for handling customer orders. Users can create new orders, read order details, update order statuses or information, and delete orders that are canceled or completed. Order must have next attributes: name, status, placed source, description, image url

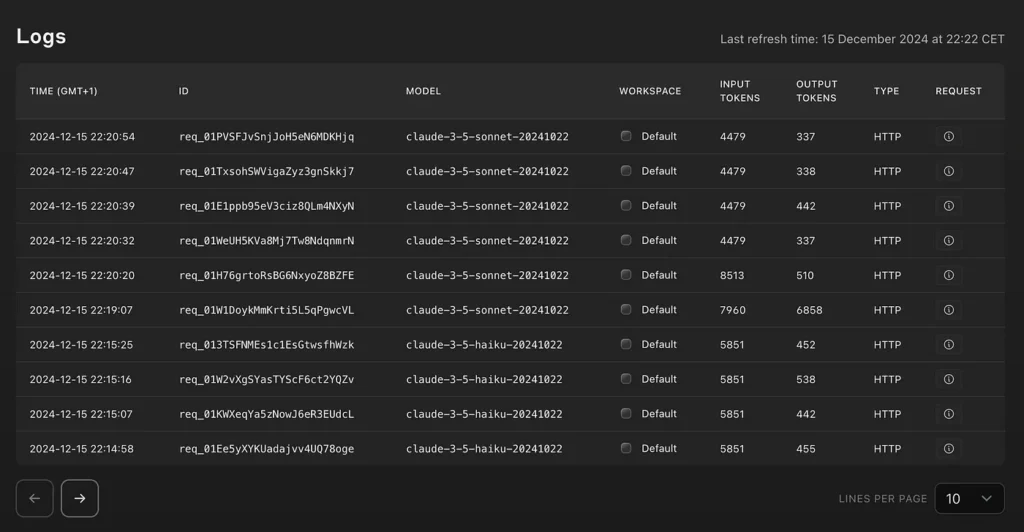

- Asset Management System with an "Assets" module offering CRUD operations for company assets. Users can add new assets to the inventory, read asset details, update information such as maintenance schedules or asset locations, and delete records of disposed or sold assets.

Testing with gpt-4o-mini and claude-3-5-sonnet-20241022 showed comparable output code quality, although Sonnet is more expensive. Claude Haiku (claude-3-5-haiku-20241022), while cheaper and similar in price to gpt-4o-mini, often produces non-compilable code. Overall, with gpt-4o-mini, a single code generation session consumes an average of around 11k input tokens and 15k output tokens. At those averages, this amounts to a cost of a little over 1 cent per session, based on token pricing of 15 cents per 1M input tokens and 60 cents per 1M output tokens (as of December 2024).
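The per-session estimate can be checked with a short calculation. The sketch below uses only the token counts and prices quoted above; the function and constant names are made up for the example. At these averages the arithmetic comes to about 1.1 cents, and sessions with more retries or longer descriptions would cost more.

```typescript
// Prices and token counts are the figures quoted in the article (December 2024).
const INPUT_PRICE_PER_MILLION_USD = 0.15;
const OUTPUT_PRICE_PER_MILLION_USD = 0.6;

// Cost of one session in US dollars.
function sessionCostUsd(inputTokens: number, outputTokens: number): number {
  return (
    (inputTokens / 1_000_000) * INPUT_PRICE_PER_MILLION_USD +
    (outputTokens / 1_000_000) * OUTPUT_PRICE_PER_MILLION_USD
  );
}

// 11k input tokens cost $0.00165 and 15k output tokens cost $0.009.
const cost = sessionCostUsd(11_000, 15_000);
console.log((cost * 100).toFixed(1) + " cents"); // 1.1 cents
```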

Below are Anthropic usage logs showing token consumption:

Based on my experimentation over the past few weeks, I conclude that while the generated tests may still have some trouble passing, the generated code compiles and runs about 95% of the time.

I hope you found some inspiration here and that it serves as a starting point for your next Node.js API or an upgrade to your current project. If you have suggestions for improvements, feel free to contribute by submitting a PR with code or prompt updates.

If you enjoyed this article, feel free to clap or share your thoughts in the comments, whether ideas or questions. Thank you for reading, and happy experimenting!

UPDATE [February 9, 2025]: The LLM-Codegen GitHub repository was updated with DeepSeek API support. It’s cheaper than gpt-4o-mini and offers nearly the same output quality, but it has a longer response time and sometimes struggles with API request errors.

Unless otherwise noted, all images are by the author