
Initial reaction to the chipmakers’ announcement was mostly favorable. Intel shares surged on the news but then retreated.

Sag said the Nvidia-Intel deal “is simply so unprecedented that it’s hard to process, to be honest. But I think it’s a clear indication that Nvidia wants Intel to be around and doesn’t see it as a near-term or long-term threat in AI. Strategically, having Intel around keeps AMD on its toes too.”

The deal offers pros and cons for IT buyers across the board.

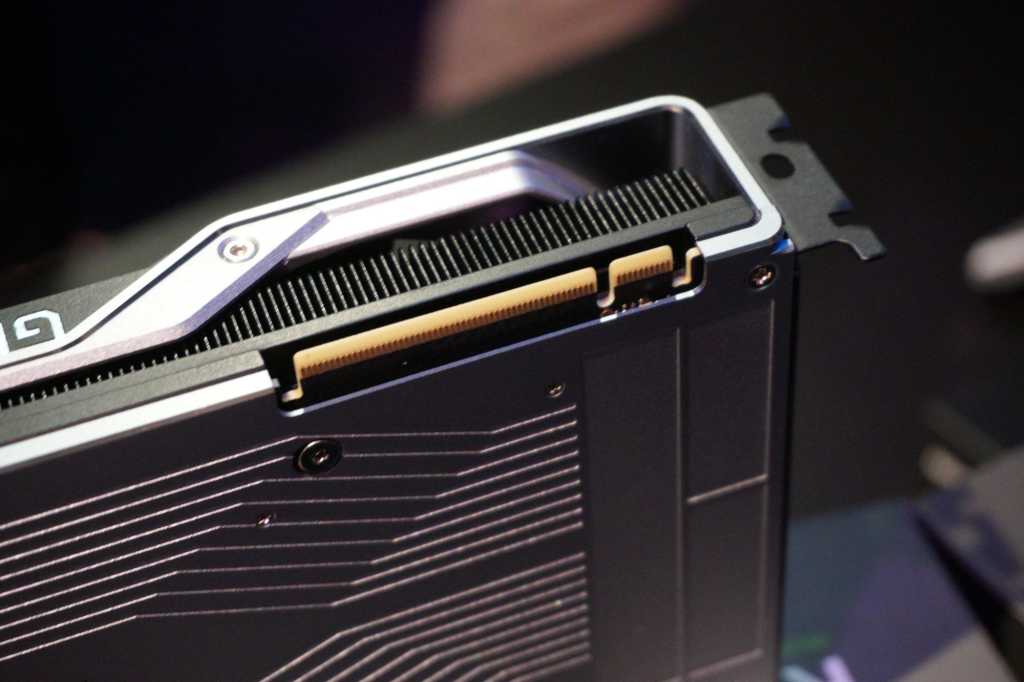

“On the client side, I really don’t know what this means for Intel’s GPU division, but I imagine that maybe it makes Intel + Nvidia solutions better, of which there are already very many. Arc’s future seems even cloudier than before. Even though I don’t think Intel will abandon GPU IP, I do wonder whether that investment continues for discrete [graphics chips] at this point,” Sag said.

Manufacturing consent

While the companies said that Intel will manufacture the co-developed chips, they said nothing about Nvidia shifting some of its other chip lines from TSMC to Intel fabs.

“I’m also surprised that there’s no foundry component here at all, which I would imagine could help Nvidia drive down costs long term and help prop up Intel.”