SAN JOSE, Calif. — If there was a single message that emerged from Jensen Huang’s keynote at Nvidia’s GTC conference this week, it was this: the artificial intelligence revolution is entering its infrastructure phase.

For the past several years, the technology industry has been preoccupied with training ever larger models. But in Huang’s telling, that era is already giving way to something far bigger: the industrial-scale deployment of AI systems that run continuously, generating intelligence on demand.

“The inference inflection point has arrived,” Huang told the audience gathered at the SAP Center.

That shift carries enormous implications for the data center industry. Instead of episodic bursts of compute used to train models, the next generation of AI systems will require persistent, high-throughput infrastructure designed to serve billions, and eventually trillions, of inference requests every day.

And the scale of the buildout Huang envisions is staggering.

Throughout the keynote, the Nvidia CEO repeatedly referenced what he believes will become a trillion-dollar global market for AI infrastructure in the coming years, spanning accelerated computing systems, networking fabrics, storage architectures, power systems, and the facilities required to house them.

At that scale, Huang argued, data centers are no longer simply IT facilities. They are becoming AI factories: industrial systems designed to convert electricity into tokens.

“Tokens are the new commodity,” Huang said. “AI factories are the infrastructure that produces them.”

Across more than two hours on stage, Huang sketched the architecture of that new computing platform, introducing new computing systems, networking technologies, software frameworks, and infrastructure blueprints designed to support what Nvidia believes will be the largest computing buildout in history.

Four main themes defined the presentation:

• The arrival of the inference inflection point.

• The emergence of OpenClaw as a foundational operating layer for AI agents.

• New hybrid inference architectures involving companies such as Groq.

• The growing role of optical networking and digital-twin simulation in designing gigawatt-scale AI infrastructure.

Together, the announcements offered a glimpse of the emerging architecture behind the next generation of AI infrastructure.

CUDA’s Twenty-Year Flywheel

Huang opened by marking the 20th anniversary of CUDA, Nvidia’s GPU programming platform that has become the backbone of modern AI development.

When CUDA launched in 2006, it represented a radical idea: using GPUs not just for graphics but as programmable parallel processors capable of accelerating scientific and analytical workloads.

Two decades later, that bet has grown into one of the most influential software ecosystems in computing.

CUDA now supports:

- Hundreds of millions of deployed GPUs

- Thousands of development libraries and tools

- Hundreds of thousands of open-source CUDA projects

That ecosystem created what Huang described as Nvidia’s defining advantage: a developer flywheel.

How does that work? Developers build algorithms. Breakthrough algorithms create new applications. New applications expand the installed base. A larger installed base, in turn, attracts more developers.

“The flywheel is now accelerating,” Huang said.

The result is a platform dynamic that continues to improve the performance of existing hardware as software evolves, allowing accelerated computing to keep driving performance gains even as traditional transistor scaling slows.

“Moore’s Law has run out of steam,” Huang said. “Accelerated computing is how we take the next giant leap.”

AI Is Rewriting the Data Stack

Another major theme of the keynote was the growing role of AI in data analysis itself.

Huang described enterprise data as increasingly divided between two categories.

Structured data, stored in relational databases and analytics systems.

And unstructured data, including documents, images, video, and audio, which now accounts for roughly 90 percent of newly generated information.

Historically, unstructured data has been difficult to analyze at scale because it lacks the indexing and schema of traditional databases.

AI models are changing that.

Using multimodal reasoning capabilities, modern systems can read documents, interpret images, extract meaning, and organize massive data repositories.

To support this shift, Nvidia has introduced new data frameworks designed to accelerate both structured and unstructured workloads.

The company highlighted new GPU-accelerated libraries for data frames and vector databases, along with integrations with enterprise platforms including IBM WatsonX, Dell AI infrastructure systems, and Google Cloud analytics services.

In one example cited during the keynote, food giant Nestlé reportedly accelerated a supply-chain analytics workload fivefold while reducing compute costs by 83 percent using GPU-accelerated processing.
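The two Nestlé figures can be read together with a little arithmetic. The sketch below assumes that compute cost is simply an hourly rate multiplied by runtime, which the keynote did not state; the function name is hypothetical and used only for illustration.

```python
def implied_rate_ratio(speedup: float, cost_reduction: float) -> float:
    """Ratio of new to old hourly compute price implied by a speedup and a cost cut.

    Assumption (not from the keynote): cost = hourly_rate * runtime.
    """
    runtime_ratio = 1.0 / speedup       # new runtime as a fraction of the old
    cost_ratio = 1.0 - cost_reduction   # new cost as a fraction of the old
    return cost_ratio / runtime_ratio


# Figures cited in the keynote: fivefold speedup, 83 percent lower cost.
ratio = implied_rate_ratio(speedup=5.0, cost_reduction=0.83)
print(f"implied hourly-rate ratio: {ratio:.2f}")
```

Under that assumption, most of the saving would come from the shorter runtime rather than from a cheaper hourly rate.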

The AI Platform Shift

Huang framed the current moment as the latest in a series of computing platform transitions.

The personal computer era produced companies like Microsoft and Intel.

The internet era produced Google and Amazon.

The mobile and cloud era reshaped enterprise software.

Now, Huang argues, artificial intelligence is driving the next platform shift.

Investment reflects that shift.

Venture funding for AI startups has already surpassed $150 billion, making it one of the largest waves of technology investment in history.

Three breakthroughs accelerated that transition.

First came generative AI, capable of creating new content rather than simply retrieving information.

Second came reasoning models, capable of planning, verifying results, and solving complex tasks.

Third came agentic AI systems, capable of executing multi-step workflows autonomously.

When AI systems begin performing productive work – reading documents, writing software, performing research – the computing requirements change dramatically.

Every step requires inference. Thinking requires inference; reading requires inference; generating output requires inference. That dynamic, Huang said, is driving an explosion in computing demand.

Nvidia estimates that AI compute demand has increased roughly one million-fold in the past two years when accounting for both model complexity and usage.

The Inference Inflection Point

For much of the past decade, the AI industry has been defined by training workloads.

But Huang argued that the center of gravity has shifted: “The inference inflection has arrived.”

AI systems are increasingly running continuously: generating responses, executing tasks, and interacting with users in real time.

Instead of occasional bursts of compute used for model training, AI infrastructure must now support persistent, high-throughput inference workloads.

That change dramatically increases the importance of infrastructure efficiency.

Which brought Huang to the concept at the center of the keynote.

Data Centers Become Token Factories

In Huang’s framing, modern data centers are evolving into AI factories.

Rather than storing data or hosting applications, these facilities are designed to generate tokens, the fundamental units of output produced by AI models.

“Tokens are the new commodity,” Huang said.

In this framework, the economics of AI infrastructure revolve around a single metric: tokens per watt.

Power availability has already emerged as one of the most significant constraints on AI infrastructure expansion.

As a result, the productivity of AI factories increasingly depends on how efficiently they convert electricity into inference output.

Every improvement in compute architecture, networking bandwidth, cooling efficiency, or software optimization ultimately serves the same goal: increasing token production within a fixed power envelope.

Grace Blackwell and the Inference Breakthrough

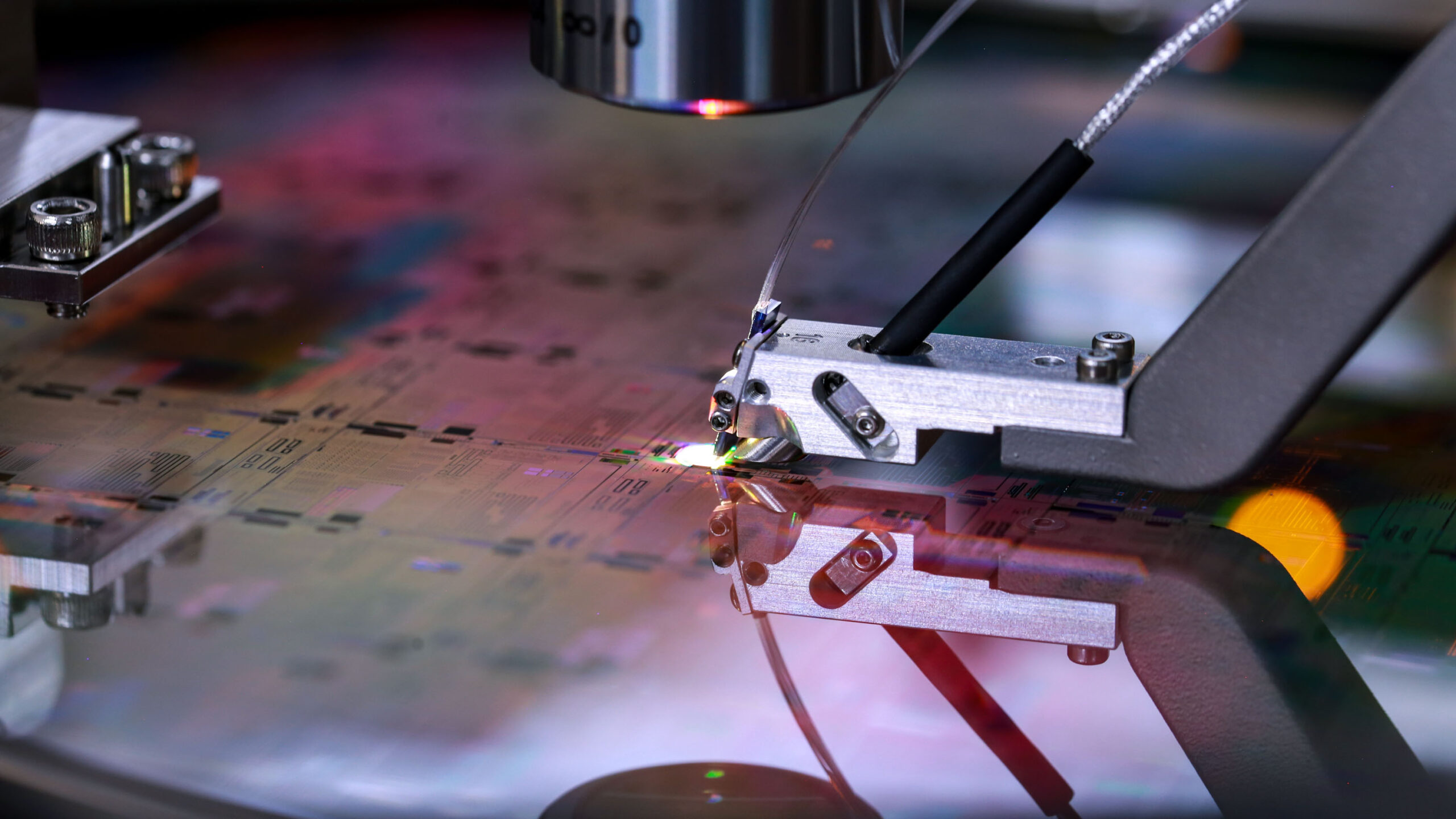

Nvidia’s current architecture, Grace Blackwell NVLink72, represents a major step toward that goal.

The system connects 72 GPUs into a single high-bandwidth computing domain using Nvidia’s NVLink switching fabric.

According to benchmarks discussed during the keynote, the platform delivers dramatic improvements in inference performance.

Industry analysts estimate that the architecture provides 35 to 50 times higher performance than previous-generation systems for certain workloads.

Those gains come from multiple innovations:

- NVFP4 precision designed for inference workloads

- Optimized tensor processing algorithms

- Extensive kernel tuning via DGX Cloud

- Tight hardware-software integration

The result is dramatically lower token cost.

“If you have the wrong architecture,” Huang said, “even if it’s free, it’s not cheap enough.”

Rubin and the Architecture of AI Factories

Nvidia’s next-generation platform, Vera Rubin, extends that design philosophy.

Rubin is engineered specifically for agentic AI systems, which require massive memory bandwidth and extremely fast interconnects.

The architecture integrates:

- NVLink72 GPU clusters

- A new Vera CPU for orchestration workloads

- AI-optimized storage systems

- Co-packaged optical networking

- Fully liquid-cooled rack infrastructure

Rubin systems will deliver roughly 3.6 exaflops of compute per rack-scale system.

The architecture is also designed to operate with 45-degree Celsius hot-water cooling, reducing the thermal burden on data center facilities.

According to Huang, the Rubin infrastructure could increase token output from 2 million tokens per second to roughly 700 million tokens per second within a gigawatt-scale AI facility.
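Those two throughput figures can be restated in the tokens-per-watt terms used earlier. The sketch below assumes the facility draws exactly one gigawatt in both cases, since the keynote said only "gigawatt-scale"; the function and variable names are illustrative.

```python
import math


def tokens_per_joule(tokens_per_second: float, facility_watts: float) -> float:
    """Tokens per second per watt, which is the same as tokens per joule."""
    return tokens_per_second / facility_watts


FACILITY_WATTS = 1e9  # assumption: exactly 1 GW of facility power

before = tokens_per_joule(2e6, FACILITY_WATTS)    # 2 million tokens/s
after = tokens_per_joule(700e6, FACILITY_WATTS)   # 700 million tokens/s
improvement = after / before

print(f"before: {before:.3f} tokens per joule")
print(f"after:  {after:.3f} tokens per joule")
print(f"improvement: {improvement:.0f}x at the same power draw")
assert math.isclose(improvement, 350.0)
```

At a fixed power envelope, the cited jump works out to a 350-fold increase in tokens per watt.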

Disaggregated Inference and the Groq Integration

Another notable development in the keynote was Nvidia’s collaboration with Groq.

Groq processors use a deterministic dataflow architecture optimized for ultra-low-latency inference workloads.

Unlike GPUs, which dynamically schedule tasks, Groq processors execute statically compiled computation pipelines.

That design enables extremely fast token generation.

Historically, Groq chips lacked the memory capacity required to host large models independently.

Nvidia’s solution is disaggregated inference.

Under this approach, the two systems operate together through Nvidia’s Dynamo inference orchestration software.

The hybrid architecture could deliver up to a 35-fold performance improvement for certain inference workloads, Huang said.
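In LLM serving, disaggregation usually means splitting the prompt-processing phase (prefill) from the token-by-token generation phase (decode) so each can run on hardware suited to it. The toy sketch below illustrates only that general pattern. It is not Dynamo's API, every name in it is hypothetical, and it makes no claim about which phase runs on which vendor's hardware.

```python
from dataclasses import dataclass, field


@dataclass
class Request:
    prompt_tokens: list[int]
    max_new_tokens: int


@dataclass
class KVCache:
    """Stand-in for the attention state that prefill hands to decode."""
    tokens_seen: list[int] = field(default_factory=list)


def prefill(request: Request) -> KVCache:
    """Throughput-oriented phase: consume the whole prompt in one pass."""
    return KVCache(tokens_seen=list(request.prompt_tokens))


def decode(cache: KVCache, max_new_tokens: int) -> list[int]:
    """Latency-oriented phase: emit one token at a time from the cache."""
    output = []
    for _ in range(max_new_tokens):
        # Toy "model": the next token is the running sum modulo 1000.
        next_token = sum(cache.tokens_seen) % 1000
        cache.tokens_seen.append(next_token)
        output.append(next_token)
    return output


def serve(request: Request) -> list[int]:
    """Orchestrator: route prefill and decode to separate worker pools."""
    cache = prefill(request)                       # would run on the prefill pool
    return decode(cache, request.max_new_tokens)   # cache moves to the decode pool


print(serve(Request(prompt_tokens=[1, 2, 3], max_new_tokens=2)))
```

In a real deployment the cache transfer between pools is the expensive step, which is why the orchestration layer matters.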

Co-Packaged Optics Enters the AI Data Center

Networking also emerged as a central theme.

Huang announced that Nvidia’s Spectrum-X networking platform now includes the industry’s first production co-packaged optical switch, developed in collaboration with TSMC.

Co-packaged optics integrates optical transceivers directly into networking silicon, allowing electrical signals to convert to optical signals within the switch package itself.

The result is dramatically higher bandwidth and lower power consumption compared with conventional pluggable optical modules.

As AI clusters scale into tens of thousands of GPUs, networking bandwidth is becoming as important as compute performance.

Future Nvidia architectures will combine both copper and optical connectivity.

“Are we going to scale with copper?” Huang asked.

“Yes.”

“Are we going to scale with optics?”

“Yes.”

DSX: Designing the AI Factory

Perhaps the most consequential infrastructure announcement was Nvidia’s introduction of the Vera Rubin DSX AI Factory reference architecture and the Omniverse DSX digital-twin blueprint.

Together, the two systems represent Nvidia’s attempt to standardize how gigawatt-scale AI infrastructure is designed and deployed.

The DSX reference architecture provides a guide for building fully integrated AI factories spanning compute, networking, storage, power, and cooling systems.

Meanwhile, the Omniverse DSX blueprint allows operators to create physically accurate digital twins of AI factories before construction begins.

Run in Nvidia’s Omniverse simulation environment, these simulations allow operators to evaluate design decisions and operational policies before deploying physical infrastructure.

In an environment where AI campuses may cost tens of billions of dollars, Nvidia believes such digital twins will become essential tools.

A broad ecosystem of companies is already integrating with the platform, including Cadence, Dassault Systèmes, Schneider Electric, Siemens, Vertiv, Trane Technologies, and Switch.

Energy companies including GE Vernova, Siemens Energy, Hitachi Energy, and Emerald AI are also working with Nvidia to integrate grid-level modeling into the system.

The platform includes software components designed to optimize operations.

- DSX Max-Q maximizes computing output within fixed power budgets.

- DSX Flex allows AI factories to dynamically adjust power consumption in response to grid conditions.

- DSX Exchange connects IT systems with facility and energy management platforms.

- DSX Sim enables high-fidelity digital-twin simulations of full AI factory deployments.

Taken together, the DSX architecture reflects Nvidia’s view that AI infrastructure must be co-designed with energy systems, rather than treated as a traditional IT workload.

OpenClaw, NeMo, and Nemotron: The Software Stack for Agentic AI

On the software side, Huang highlighted the rapid emergence of OpenClaw, an open framework for orchestrating AI agents.

“OpenClaw opened the next frontier of AI to everyone and became the fastest-growing open source project in history,” said Jensen Huang.

The system connects language models, enterprise tools, APIs, and data systems into coordinated workflows.

Huang described OpenClaw as an operating system for agentic computing: a layer that manages how AI systems plan, execute, and coordinate work.

Agents built on the platform can:

- Access databases and APIs

- Plan multi-step workflows

- Execute tasks

- Coordinate with other agents

Huang compared the technology’s potential significance to foundational internet platforms.

“OpenClaw is as important as Linux,” he said. “It is as important as HTML. It is as important as Kubernetes.”

But OpenClaw is only one layer of what Huang is assembling.

If OpenClaw is the operating system, Nvidia’s NeMo platform and Nemotron models form the intelligence layer that runs on top of it.

Nvidia introduced NemoClaw, an enterprise implementation designed to bring security, governance, and policy control to agent systems. It addresses a key concern Huang raised: agents that can access sensitive data, execute code, and communicate externally cannot operate without guardrails.

At the same time, Nvidia is expanding its Nemotron family of open models, positioning them as a foundational layer for agentic systems across industries.

To accelerate that effort, the company announced the NVIDIA Nemotron Coalition, a collaboration with leading AI-native companies including Mistral AI, Perplexity, LangChain, Cursor, and others.

The coalition will co-develop open, frontier-scale foundation models, trained on Nvidia’s DGX Cloud infrastructure, that can be specialized for different industries, regions, and use cases.

Those models will underpin the next generation of Nemotron systems, which Huang positioned as customizable, domain-specific intelligence layers for enterprise AI.

“Open models are the lifeblood of innovation,” Huang said.

The strategy reflects a broader shift.

Rather than building a single dominant model, Nvidia is attempting to seed an ecosystem of open, extensible foundation models that enterprises can adapt to their own data, workflows, and regulatory environments.

In Huang’s architecture, the pieces fit together:

- Nemotron provides the base intelligence.

- OpenClaw orchestrates agent behavior.

- NemoClaw secures and governs deployment.

Together, they form what Huang is effectively positioning as a full software stack for agentic AI.

And just as CUDA defined the programming model for GPU computing, Nvidia is now attempting to define the software architecture for the next phase of AI.

From Agents to Physical AI: Robotics, Autonomy, and Space Data Centers

If the first half of Huang’s keynote was about the economics of inference, the latter portion extended that logic into the physical world.

Agentic AI systems, he argued, are not confined to software.

They are the foundation of what Nvidia calls physical AI: systems that perceive, reason, and act in real-world environments.

“We have digital agents,” Huang said. “Now we have physically embodied agents. We call them robots.”

Nvidia’s approach to robotics reflects the same full-stack philosophy that defines its data center strategy. The company describes three core systems required to enable physical AI:

- A training system for developing models.

- A simulation system for generating synthetic data.

- A runtime system embedded within robots themselves.

At the center of that approach is simulation.

Because real-world data is inherently limited and difficult to collect at scale, Huang emphasized the importance of generating synthetic training data using physics-based simulation and AI-generated environments.

“Compute is data,” Huang said, in a line that underscores Nvidia’s belief that simulation will become a primary driver of model development in robotics.

The company’s Isaac platform, along with newer tools such as Cosmos world models and GR00T robotics models, is designed to create and train robots in simulated environments before deployment in the real world.

Autonomous Vehicles Reach Their “ChatGPT Moment”

Huang also suggested that autonomous driving has reached a turning point.

“The ChatGPT moment of self-driving cars has arrived,” he said.

Nvidia announced a new wave of automotive partnerships, including deployments with global manufacturers such as BYD, Hyundai, Nissan, and Geely, joining existing partners like Mercedes-Benz and Toyota.

In total, the company says its platform will support autonomous systems across tens of millions of vehicles annually.

A key shift is the integration of reasoning models into vehicle systems.

Instead of simply reacting to sensor inputs, next-generation autonomous systems are capable of explaining their decisions, planning actions, and adapting to complex environments.

In demonstrations during the keynote, vehicles narrated their own behavior, describing lane changes, obstacle avoidance, and route decisions in real time.

That capability reflects the broader convergence Huang described between language models and physical systems.

Autonomous vehicles are no longer just perception systems. They are reasoning systems operating in the physical world.

Infrastructure Extends to the Edge — and Beyond

Huang also pointed to a broader expansion of AI infrastructure beyond traditional data centers.

Telecommunications networks, for example, are evolving into distributed AI platforms.

Base stations — once designed purely for signal transmission — will increasingly run AI workloads, performing tasks such as traffic optimization, beamforming, and energy management.

“The base station is going to become an AI infrastructure platform,” Huang said.

That shift extends the AI factory model to the network edge.

But Huang pushed the idea even further.

Nvidia is now exploring data center infrastructure in space.

The company announced early work on a system called Vera Rubin Space, designed to operate in orbital environments where cooling must rely on radiation rather than convection.

While still experimental, the effort reflects Huang’s broader point: as AI becomes a continuous, global workload, infrastructure will expand wherever compute can be deployed efficiently.

Bottom line: From hyperscale campuses to edge networks and potentially into orbit, the boundaries of the data center are expanding.

The Next Phase of AI Is Physical

Taken together, the robotics, autonomous vehicle, and edge infrastructure announcements extend Huang’s core thesis.

AI is no longer just software. It is becoming a distributed, physical system embedded in machines, networks, and environments.

And just as in the data center, the governing constraints remain the same: compute efficiency, energy, throughput.

Whether in a factory, a vehicle, or a satellite, the same principle applies. AI systems must convert power into intelligence – as efficiently as possible.

The Infrastructure Era of AI

By the end of the keynote, Jensen Huang had effectively shifted the center of gravity for the industry.

For years, artificial intelligence has been defined by advances in models. But in Huang’s telling, the decisive challenge of the next decade will be building the systems required to run those models at global scale.

Those systems must deliver continuous inference, operate within fixed power envelopes, and integrate compute, networking, and energy infrastructure at unprecedented scale.

Nvidia’s estimate of a $1 trillion AI infrastructure market reflects the magnitude of that shift.

What emerges is not simply a larger data center industry, but a different kind of industrial system altogether: a global platform for generating intelligence as a continuous output.

If that forecast proves accurate, the industry moving to the center of the AI economy will not just be software or semiconductors, but the full stack of power, networking, and computing required to sustain it.

In that world, the defining metric is no longer capacity or uptime. It is productivity.

How much intelligence can be produced from a given amount of energy. How many tokens a data center can generate from every watt of electricity it consumes.

Because in the AI factory era, electricity is no longer just an input to computing. It is the raw material of intelligence.