LLOG Exploration Company, L.L.C. has advanced its Salamanca project in the Gulf of Mexico (GOM), which involves the conversion of a former GOM production facility into a floating production unit (FPU).

The privately owned exploration and production company anticipates the final outfitting of the FPU to be completed in early 2025, in time for the project’s production target of mid-2025.

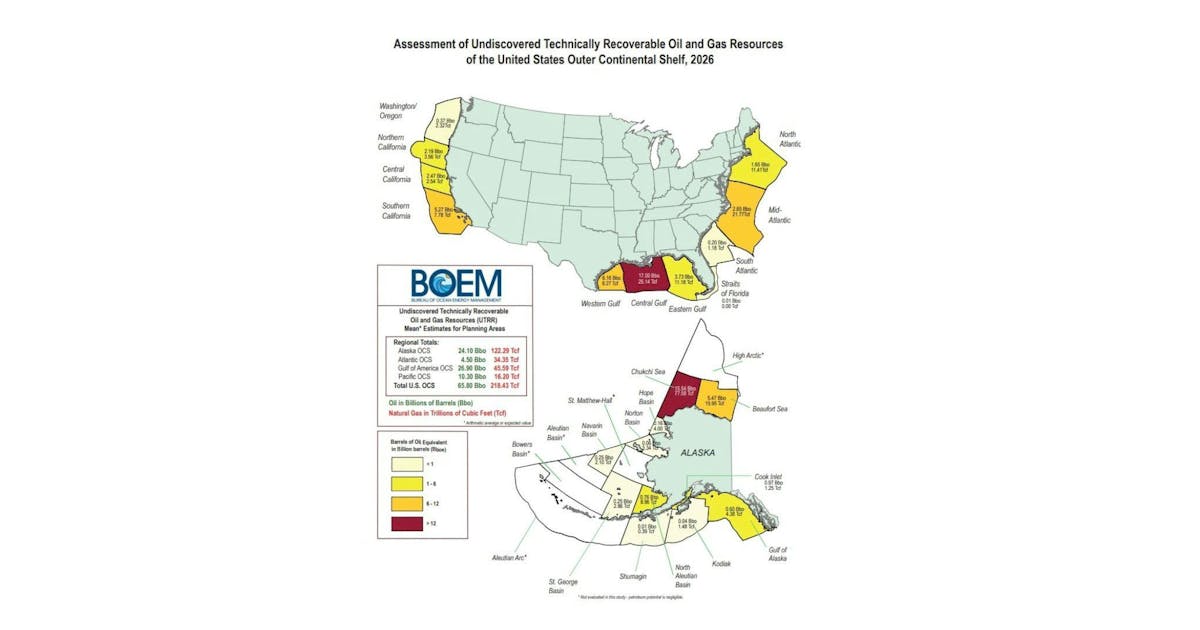

The Salamanca FPU will have a capacity of 60,000 barrels per day (bpd) of oil and 40 million cubic feet per day (MMcfpd) of natural gas. The conversion approach “significantly minimizes environmental impact by reusing existing infrastructure and reduces time, ultimately enhancing economic returns,” LLOG said in a news release.

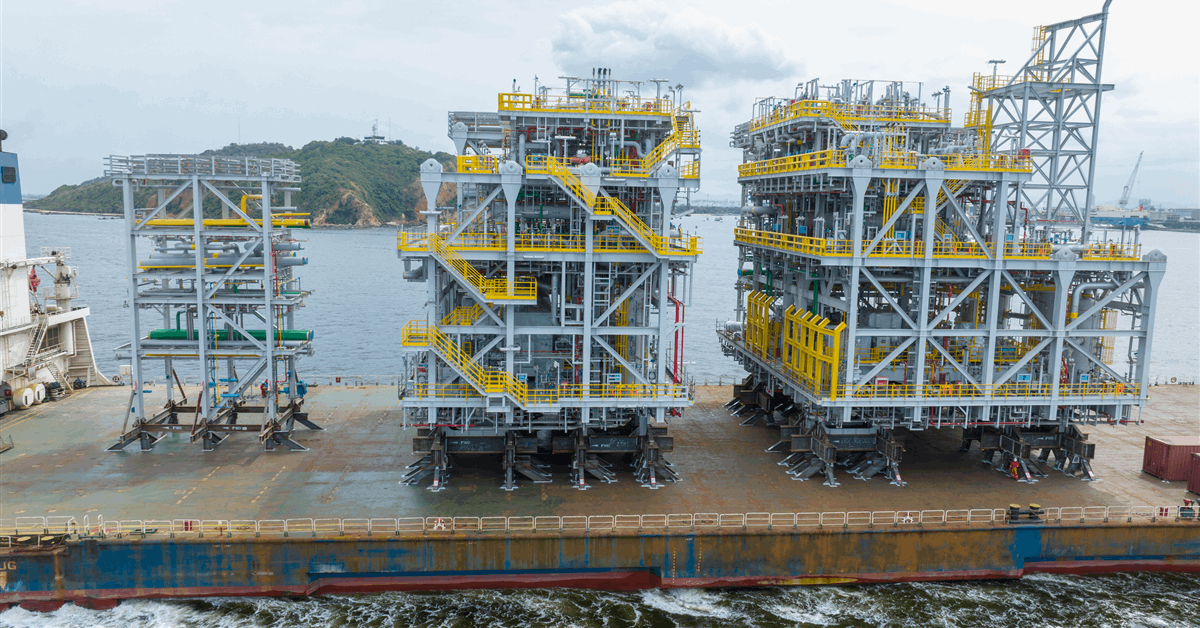

The hull was refurbished at Seatrium in Brownsville, Texas, and delivered to Kiewit’s yard in Ingleside in October 2024. In early November 2024, the new topside equipment and deck were successfully rejoined to the hull, LLOG stated.

Further, LLOG said that all of the initial wells to support the Salamanca FPU have been successfully drilled and cased, including discovery wells drilled at Castile and Leon, with additional successful development wells drilled in 2023 and 2024.

“The final well finished drilling in September 2024 at the Leon Development (Keathley Canyon 686 #4), with better-than-expected results, encountering greater than 1,000 feet of high quality oil-bearing sands,” LLOG said. The facility will be located in Keathley Canyon 689 in approximately 6,400 feet of water.

LLOG COO Eric Zimmermann said, “LLOG has a long history of developing prolific projects in the GOM safely, efficiently and economically. We are pleased to be progressing another world class project and to have reached several important milestones while also optimizing financial flexibility through securing financing for the Salamanca project. The unique aspect of the Salamanca facility is that the FPU is the first refurbishment of a GOM facility that was in production and being brought into commerce as a producing asset again. By modifying a previously built production unit compared with constructing a new facility, we are able to significantly reduce the time to bring these discoveries online.”

“Also, the project has a significantly positive environmental impact as it reuses an existing unit compared with abandonment of the unit, while also accomplishing approximately a 70% reduction in emissions impact compared to the construction of a new unit. As a Louisiana-based company, the other aspect of the project that brings us pride is the major construction for this project has been undertaken in shipyards and construction yards in Texas and Louisiana versus occurring internationally. Our ongoing success and achievements in delivering complex deepwater projects reflect the dedication and expertise of our outstanding team,” he added.

LLOG entered the Leon field as its operator in 2019 through an agreement with Repsol. LLOG is the operator of the Salamanca FPU, as well as the Leon and Castile discoveries, with Repsol and O.G. Oil & Gas as non-operating working interest owners.

In November 2024, Karoon Energy Ltd and LLOG confirmed a hydrocarbon discovery in the Who Dat South exploration well in the GOM. Drilling in Who Dat South started in September 2024.

Karoon said in an earlier news release that the exploration well intersected several hydrocarbon-bearing sandstone intervals through the targeted Miocene zones, over a gross interval between 5,000 meters measured depth (MD) and a final total depth (TD) of 7,014 meters MD.
