Meta’s new flagship AI language model Llama 4 arrived suddenly over the weekend, with the parent company of Facebook, Instagram, WhatsApp and Quest VR (among other services and products) revealing not one, not two, but three versions, all upgraded to be more powerful and performant using the popular “Mixture-of-Experts” architecture and a new training method involving fixed hyperparameters, known as MetaP.

Also, all three are equipped with massive context windows — the amount of information that an AI language model can handle in one input/output exchange with a user or tool.

But following the surprise announcement and public release of two of those models for download and usage — the lower-parameter Llama 4 Scout and mid-tier Llama 4 Maverick — on Saturday, the response from the AI community on social media has been less than adoring.

Llama 4 sparks confusion and criticism among AI users

An unverified post on the North American Chinese-language community forum 1point3acres made its way over to the r/LocalLlama subreddit on Reddit. It purported to be from a researcher at Meta’s GenAI organization, who claimed that the model performed poorly on third-party benchmarks internally and that company leadership “suggested blending test sets from various benchmarks during the post-training process, aiming to meet the targets across various metrics and produce a ‘presentable’ result.”

The community met the post with skepticism about its authenticity, and a VentureBeat email to a Meta spokesperson has not yet received a reply.

But other users found reasons to doubt the benchmarks regardless.

“At this point, I highly suspect Meta bungled up something in the released weights … if not, they should lay off everyone who worked on this and then use money to acquire Nous,” commented @cto_junior on X, in reference to an independent user test showing Llama 4 Maverick’s poor performance (16%) on a benchmark known as aider polyglot, which runs a model through 225 coding tasks. That’s well below the performance of comparably sized, older models such as DeepSeek V3 and Claude 3.7 Sonnet.

Referencing the 10 million-token context window Meta boasted for Llama 4 Scout, AI PhD and author Andriy Burkov wrote on X in part that: “The declared 10M context is virtual because no model was trained on prompts longer than 256k tokens. This means that if you send more than 256k tokens to it, you will get low-quality output most of the time.”
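To make Burkov’s distinction concrete, here is a minimal sketch in Python that sorts a prompt by token count into the ranges he describes. It is an illustration only: the function name and labels are hypothetical, and the exact limits are assumptions taken from the figures quoted above (“10M” read as 10,000,000 tokens, “256k” read as 256,000, though it may mean 262,144).

```python
# Figures quoted in the article; the exact token counts are assumptions.
DECLARED_CONTEXT = 10_000_000  # the "10 million-token" window Meta advertises for Scout
TRAINED_CONTEXT = 256_000      # longest prompts seen in training, per Burkov ("256k")


def classify_prompt_length(num_tokens: int) -> str:
    """Hypothetical helper: report which range a prompt of num_tokens falls into."""
    if num_tokens < 0:
        raise ValueError("num_tokens must be non-negative")
    if num_tokens <= TRAINED_CONTEXT:
        return "within trained length"
    if num_tokens <= DECLARED_CONTEXT:
        # Accepted by the model, but in the range where Burkov expects
        # low-quality output most of the time.
        return "beyond trained length"
    return "exceeds declared window"
```

Under these assumptions, a 1 million-token prompt would fit inside the advertised window yet fall well outside the range Burkov says the model was trained on.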

Also on the r/LocalLlama subreddit, user Dr_Karminski wrote that “I’m incredibly disappointed with Llama-4,” and demonstrated its poor performance compared to DeepSeek’s non-reasoning V3 model on coding tasks such as simulating balls bouncing around a heptagon.

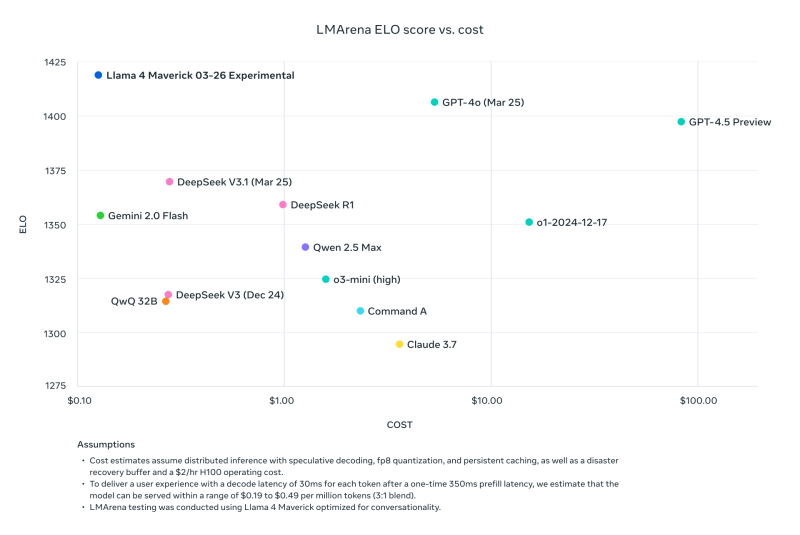

Former Meta researcher and current AI2 (Allen Institute for Artificial Intelligence) Senior Research Scientist Nathan Lambert took to his Interconnects Substack blog on Monday to point out a problem with a benchmark comparison Meta posted to its own Llama download site. The chart compared Llama 4 Maverick to other models on cost-to-performance, using Elo scores from the third-party head-to-head comparison tool LMArena (also known as Chatbot Arena), but it relied on a different version of Llama 4 Maverick than the one the company had made publicly available: one “optimized for conversationality.”

As Lambert wrote: “Sneaky. The results below are fake, and it is a major slight to Meta’s community to not release the model they used to create their major marketing push. We’ve seen many open models that come around to maximize on ChatBotArena while destroying the model’s performance on important skills like math or code.”

Lambert went on to note that while this particular model on the arena was “tanking the technical reputation of the release because its character is juvenile,” including lots of emojis and frivolous emotive dialog, “The actual model on other hosting providers is quite smart and has a reasonable tone!”

In response to the torrent of criticism and accusations of benchmark cooking, Meta’s VP and Head of GenAI Ahmad Al-Dahle took to X to state:

“We’re glad to start getting Llama 4 in all your hands. We’re already hearing lots of great results people are getting with these models.

That said, we’re also hearing some reports of mixed quality across different services. Since we dropped the models as soon as they were ready, we expect it’ll take several days for all the public implementations to get dialed in. We’ll keep working through our bug fixes and onboarding partners.

We’ve also heard claims that we trained on test sets — that’s simply not true and we would never do that. Our best understanding is that the variable quality people are seeing is due to needing to stabilize implementations.

We believe the Llama 4 models are a significant advancement and we’re looking forward to working with the community to unlock their value.”

Yet even that response was met with many complaints of poor performance and calls for further information, such as more technical documentation outlining the Llama 4 models and their training processes, as well as additional questions about why this release, compared to all prior Llama releases, was particularly riddled with issues.

It also comes on the heels of Meta’s VP of Research Joelle Pineau, who worked in the adjacent Meta Foundational Artificial Intelligence Research (FAIR) organization, announcing her departure from the company on LinkedIn last week with “nothing but admiration and deep gratitude for each of my managers.” Pineau, it should be noted, also promoted the release of the Llama 4 model family this weekend.

Llama 4 continues to spread to other inference providers with mixed results, but it’s safe to say the initial release of the model family has not been a slam dunk with the AI community.

And the upcoming Meta LlamaCon on April 29, the first celebration and gathering for third-party developers of the model family, will likely have much fodder for discussion. We’ll be tracking it all. Stay tuned.
