Meta’s Project Waterworth: Building the Global Backbone for AI-Powered Digital Infrastructure

Meta recently unveiled its most ambitious subsea cable initiative yet: Project Waterworth. Aimed at expanding global digital connectivity, the project will span more than 50,000 kilometers, longer than the Earth’s circumference, and reach five major continents. When completed, it will be the world’s longest subsea cable system, using the highest-capacity technology available today.

A Strategic Expansion to Key Global Markets

As announced on Feb. 14, Project Waterworth is designed to enhance connectivity across critical regions, including the United States, India, Brazil, and South Africa. These regions are increasingly pivotal to global digital growth, and the new subsea infrastructure will fuel economic cooperation, promote digital inclusion, and unlock opportunities for technological advancement.

In India, for instance, where rapid digital infrastructure growth is already underway, the project will accelerate progress and support the country’s ambitions for an expanded digital economy. This enhanced connectivity will foster regional integration and bolster the foundation for next-generation applications, including AI-driven services.

Strengthening Global Digital Highways

Subsea cables are the unsung heroes of global digital infrastructure, facilitating over 95% of intercontinental data traffic. With a multi-billion-dollar investment, Meta aims to open three new oceanic corridors that will deliver the high-speed, high-capacity bandwidth needed to fuel innovations like artificial intelligence.

Meta’s experience in subsea infrastructure is extensive. Over the past decade, the company has worked with various partners to develop more than 20 subsea cables, including systems with 24 fiber pairs, well above the 8 to 16 fiber pairs typical of most new deployments. More fiber pairs per cable means more total capacity per system, which helps the network scale with the world’s growing data demands.

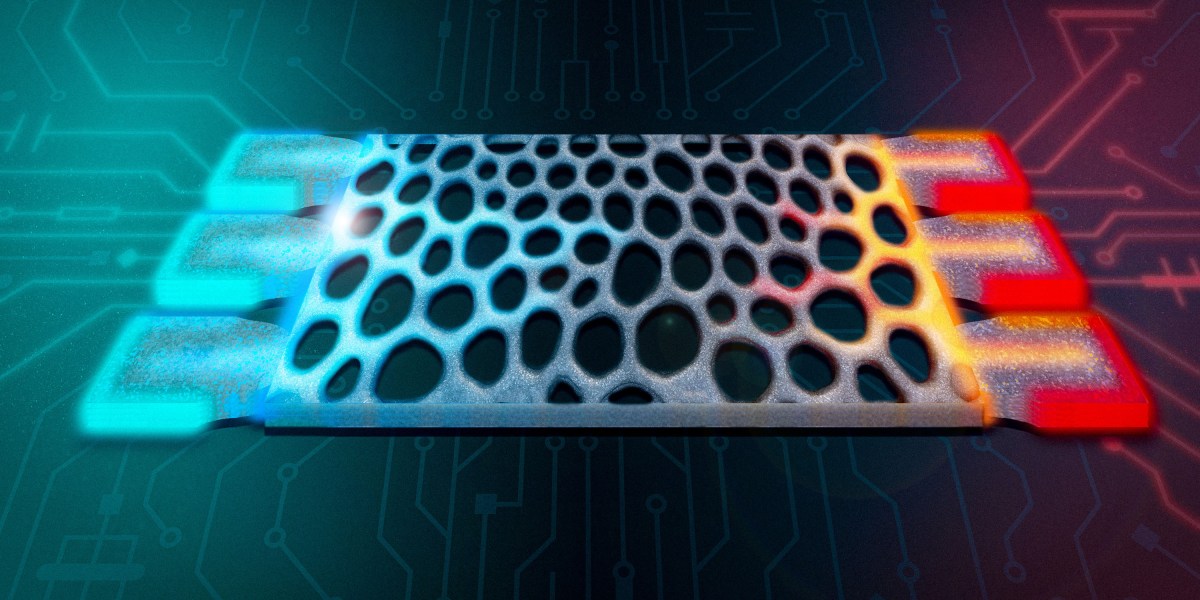

Engineering Innovations for Resilience and Capacity

Project Waterworth is not just about scale; it is also about resilience and engineering. The system will be the longest 24-fiber-pair subsea cable ever built, and its routing and installation are designed for durability as well as capacity.

Advanced engineering features include:

- Deep-sea routing at depths reaching 7,000 meters to minimize physical disruptions.
- Enhanced burial techniques in shallow, high-risk fault zones to protect against damage from ship anchors and other hazards.

These innovations aim to create a robust and secure global data network that can support AI’s increasing infrastructure demands.

Powering the Future of AI-Driven Connectivity

AI is reshaping industries, economies, and everyday life. For AI applications to thrive, the supporting digital infrastructure must be both scalable and resilient. Project Waterworth positions Meta at the forefront of this transformation by extending high-capacity connectivity to regions that have historically been underserved.

By broadening global access to AI technologies and fostering digital equity, Meta’s latest subsea initiative reinforces its role in building the future of global infrastructure, with the aim of ensuring that no region is left behind in the next wave of technological advancement.