Nvidia reported that its revenue for the fourth fiscal quarter ended January 26 was $39.3 billion, up 12% from the previous quarter and up 78% from a year ago.

For the quarter, GAAP earnings per diluted share was 89 cents, up 14% from the previous quarter and up 82% from a year ago. Non-GAAP earnings per diluted share was 89 cents, up 10% from the previous quarter and up 71% from a year ago. (Updated with corrected numbers).

The company’s stock is trading at $132.87, up 1.2%, in after-hours trading. The results came in above analysts’ estimates of $38.2 billion in revenue and 85 cents per share in earnings. Full-year revenue was $130.5 billion, up 114%. GAAP earnings per diluted share was $2.94, up 147% from a year ago. Non-GAAP earnings per diluted share was $2.99, up 130% from a year ago.

There’s always a lot at stake with Nvidia’s earnings these days. Thanks to AI growth, Nvidia’s market value is $3.16 trillion, second only to fellow tech firm Apple, valued at $3.66 trillion.

That valuation reflects how investors have come to view Nvidia as invincible. But the company got a shock to its system in the past month when China’s DeepSeek AI announced it was able to deploy an AI model that performed well even though it was trained at a fraction of the cost of other heavy-duty AI models. That suggested to investors that AI companies might not need so many of Nvidia’s AI processors, and it led to a selloff in the company’s stock.

More recently, Microsoft appeared to back off from its decision to invest heavily in AI data centers. This earnings call is the first chance for Nvidia to talk extensively about why it still has big opportunities ahead of it.

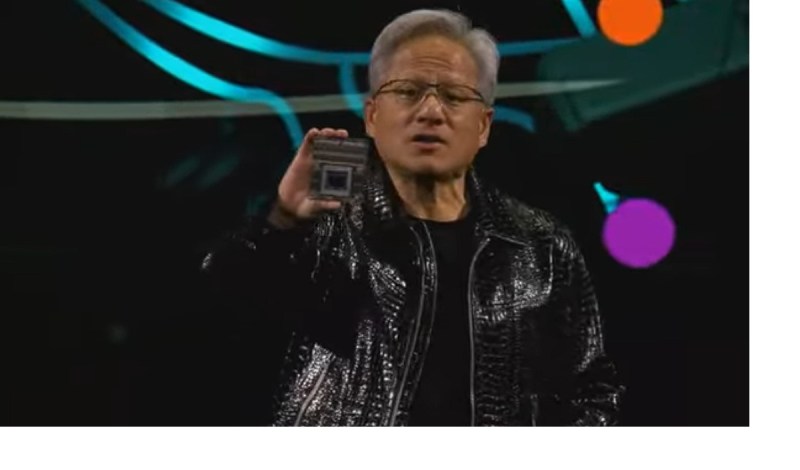

“Demand for Blackwell is amazing as reasoning AI adds another scaling law — increasing compute for training makes models smarter and increasing compute for long thinking makes the answer smarter,” said Jensen Huang, founder and CEO of Nvidia, in a statement.

“We’ve successfully ramped up the massive-scale production of Blackwell AI supercomputers, achieving billions of dollars in sales in its first quarter. AI is advancing at light speed as agentic AI and physical AI set the stage for the next wave of AI to revolutionize the largest industries.”

During CES 2025, the big tech trade show in Las Vegas in January, Huang gave a keynote speech in which he predicted a boom in physical AI, such as industrial robots. He said it would happen because of heavy use of synthetic data, where computer simulations can fully test scenarios for things like robots and self-driving cars, reducing the time it takes to test such products in the real world.

Nvidia’s Outlook

For the first fiscal quarter ending in April, the company said it expects revenue to be $43.0 billion, plus or minus 2%.

GAAP and non-GAAP gross margins are expected to be 70.6% and 71.0%, respectively, plus or minus 50 basis points.

GAAP and non-GAAP operating expenses are expected to be approximately $5.2 billion and $3.6 billion, respectively.

GAAP and non-GAAP other income and expense are expected to be an income of approximately $400 million, excluding gains and losses from non-marketable and publicly-held equity securities.

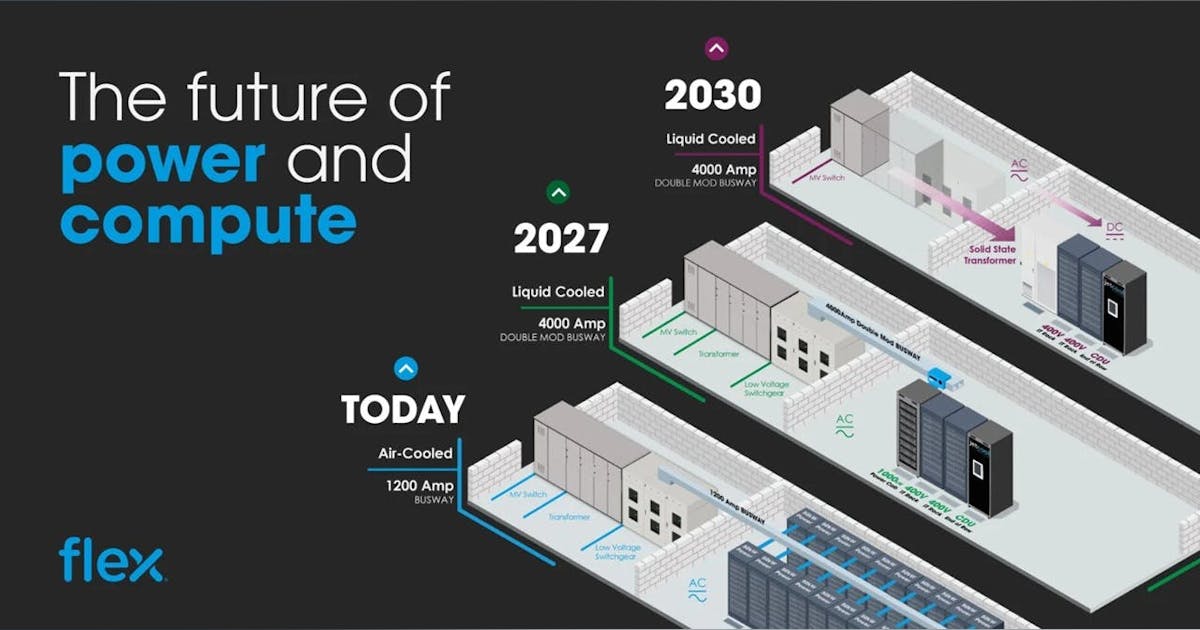

Data Center

Fourth-quarter Data Center revenue was a record $35.6 billion, up 16% from the previous quarter and up 93% from a year ago. Full-year revenue rose 142% to a record $115.2 billion.

During the quarter, Nvidia announced that it will serve as a key technology partner for the $500 billion Stargate Project.

It revealed that cloud service providers AWS, CoreWeave, Google Cloud Platform (GCP), Microsoft Azure and Oracle Cloud Infrastructure (OCI) are bringing Nvidia GB200 systems to cloud regions around the world to meet surging customer demand for AI.

The company partnered with AWS to make the Nvidia DGX Cloud AI computing platform and Nvidia NIM microservices available through AWS Marketplace.

And it revealed that Cisco will integrate Nvidia Spectrum-X into its networking portfolio to help enterprises build AI infrastructure.

Nvidia revealed that more than 75% of the systems on the TOP500 list of the world’s most powerful supercomputers are powered by Nvidia technologies.

It announced a collaboration with Verizon to integrate Nvidia AI Enterprise, NIM and accelerated computing with Verizon’s private 5G network to power a range of edge enterprise AI applications and services.

It also unveiled partnerships with industry leaders including IQVIA, Illumina, Mayo Clinic and Arc Institute to advance genomics, drug discovery and healthcare.

Nvidia launched Nvidia AI Blueprints and Llama Nemotron model families for building AI agents and released Nvidia NIM microservices to safeguard applications for agentic AI. And it announced the opening of Nvidia’s first R&D center in Vietnam.

The company also revealed that Siemens Healthineers has adopted MONAI Deploy for medical imaging AI.

Gaming and AI PC

Fourth-quarter Gaming revenue was $2.5 billion, down 22% from the previous quarter and down 11% from a year ago. Full-year revenue rose 9% to $11.4 billion.

In the quarter, Nvidia announced new GeForce RTX 50 Series graphics cards and laptops powered by the Nvidia Blackwell architecture, delivering breakthroughs in AI-driven rendering to gamers, creators and developers.

It launched GeForce RTX 5090 and 5080 graphics cards, delivering up to a 2x performance improvement over the prior generation.

Nvidia introduced Nvidia DLSS 4 with Multi Frame Generation and image quality enhancements, with 75 games and apps supporting it at launch, and unveiled Nvidia Reflex 2 technology, which can reduce PC latency by up to 75%.

And it unveiled Nvidia NIM microservices, AI Blueprints and the Llama Nemotron family of open models for RTX AI PCs to help developers and enthusiasts build AI agents and creative workflows.

Professional Visualization

Fourth-quarter revenue was $511 million, up 5% from the previous quarter and up 10% from a year ago. Full-year revenue rose 21% to $1.9 billion.

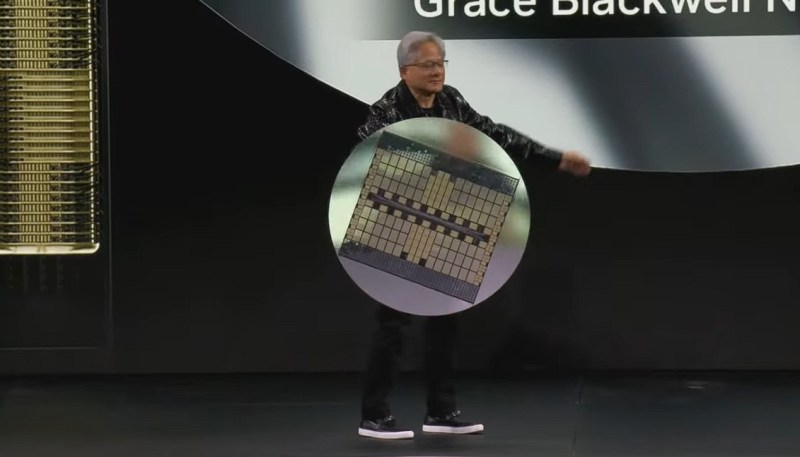

The company said it unveiled Nvidia Project DIGITS, a personal AI supercomputer that provides AI researchers, data scientists and students worldwide with access to the power of the Nvidia Grace Blackwell platform.

It announced generative AI models and blueprints that expand Nvidia Omniverse integration further into physical AI applications, including robotics, autonomous vehicles and vision AI.

And it introduced Nvidia Media2, an AI-powered initiative transforming content creation, streaming and live media experiences, built on NIM and AI Blueprints.

Automotive and Robotics

Fourth-quarter Automotive revenue was $570 million, up 27% from the previous quarter and up 103% from a year ago. Full-year revenue rose 55% to $1.7 billion.

The company announced that Toyota, the world’s largest automaker, will build its next-generation vehicles on Nvidia DRIVE AGX Orin running the safety-certified Nvidia DriveOS operating system.

It partnered with Hyundai Motor Group to create safer, smarter vehicles, supercharge manufacturing and deploy cutting-edge robotics with Nvidia AI and Nvidia Omniverse.

And it announced that the Nvidia DriveOS safe autonomous driving operating system received ASIL-D functional safety certification and launched the Nvidia Drive AI Systems Inspection Lab.

Nvidia launched Nvidia Cosmos, a platform comprising state-of-the-art generative world foundation models, to accelerate physical AI development, with adoption by leading robotics and automotive companies 1X, Agile Robots, Waabi, Uber and others.

And it unveiled the Nvidia Jetson Orin Nano Super, which delivers up to a 1.7x gain in generative AI performance.
