In association with Deloitte

Generative AI has the potential to transform the finance function. By taking on some of the more mundane, time-consuming tasks, generative AI tools can help free up capacity for higher-value strategic work. For chief financial officers, this could mean spending more time and energy on proactively advising the business on financial strategy as organizations around the world continue to weather geopolitical and financial uncertainty.

CFOs can use large language models (LLMs) and generative AI tools to support everyday tasks like generating quarterly reports, communicating with investors, and formulating strategic summaries, says Andrew W. Lo, Charles E. and Susan T. Harris professor and director of the Laboratory for Financial Engineering at the MIT Sloan School of Management. “LLMs can’t replace the CFO by any means, but they can take a lot of the drudgery out of the role by providing first drafts of documents that summarize key issues and outline strategic priorities.”

Generative AI is also showing promise in functions like treasury, with use cases including cash, revenue, and liquidity forecasting and management, as well as automating contracts and investment analysis. However, the mathematical limitations of LLMs still make it challenging for generative AI to contribute to forecasting. Regardless, Deloitte’s analysis of its 2024 State of Generative AI in the Enterprise survey found that nearly one-fifth (19%) of finance organizations have already adopted generative AI in the finance function.

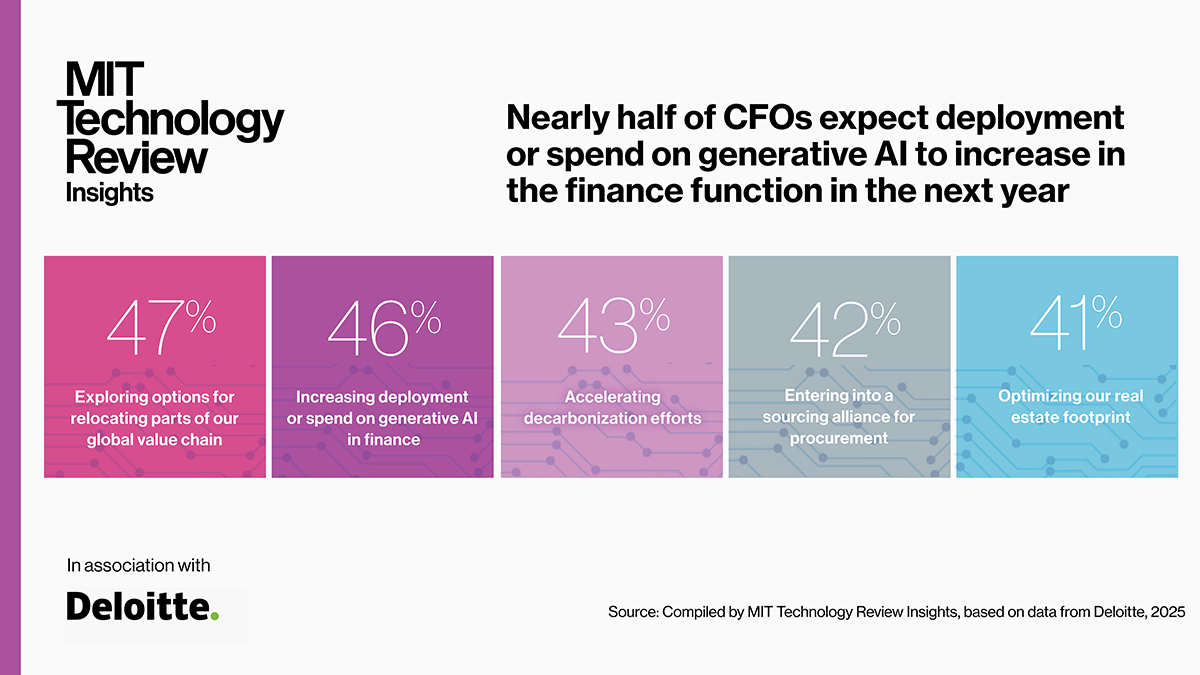

Although surveyed organizations report that return on generative AI investments in finance functions is so far 8 points below expectations (see Figure 1), some finance departments appear to be moving ahead with investments. Deloitte’s fourth-quarter 2024 North American CFO Signals survey found that 46% of CFOs who responded expect deployment or spend on generative AI in finance to increase in the next 12 months (see Figure 2). Among the top benefits, respondents cite the technology’s potential to help control costs through self-service and automation and to free up workers for higher-level, higher-productivity tasks.

“Companies have used AI on the customer-facing side of the house for a long time, but in finance, employees are still creating documents and presentations and emailing them around,” says Robyn Peters, principal in finance transformation at Deloitte Consulting LLP. “Largely, the human-centric experience that customers expect from brands in retail, transportation, and hospitality haven’t been pulled through to the finance organization. And there’s no reason we cannot do that—and, in fact, AI makes it a lot easier to do.”

If CFOs think they can just sit by for the next five years and watch how AI evolves, they may lose out to more nimble competitors that are actively experimenting in the space. Future finance professionals are growing up using generative AI tools too. CFOs should consider reimagining what it looks like to be a successful finance professional, in collaboration with AI.

This content was produced by Insights, the custom content arm of MIT Technology Review. It was not written by MIT Technology Review’s editorial staff. It was researched, designed, and written by human writers, editors, analysts, and illustrators. AI tools that may have been used were limited to secondary production processes that passed thorough human review.