Indonesia’s PT Pertamina Hulu Energi (PHE), PT Pertamina’s upstream unit, said it has recorded its largest exploration reserve discovery in the past fifteen years.

For 2024, Pertamina Upstream Subholding Group’s 2C contingent recoverable resources reached 652 million barrels of oil equivalent (MMboe), or 1.75 billion barrels of oil equivalent (Bboe) of 2C oil in place, including reassessments of existing structures, the company said in a news release.

The 2C contingent resource discovery is a significant increase over previous years, up 34 percent from the 2023 figure of 488 MMboe, Pertamina said.

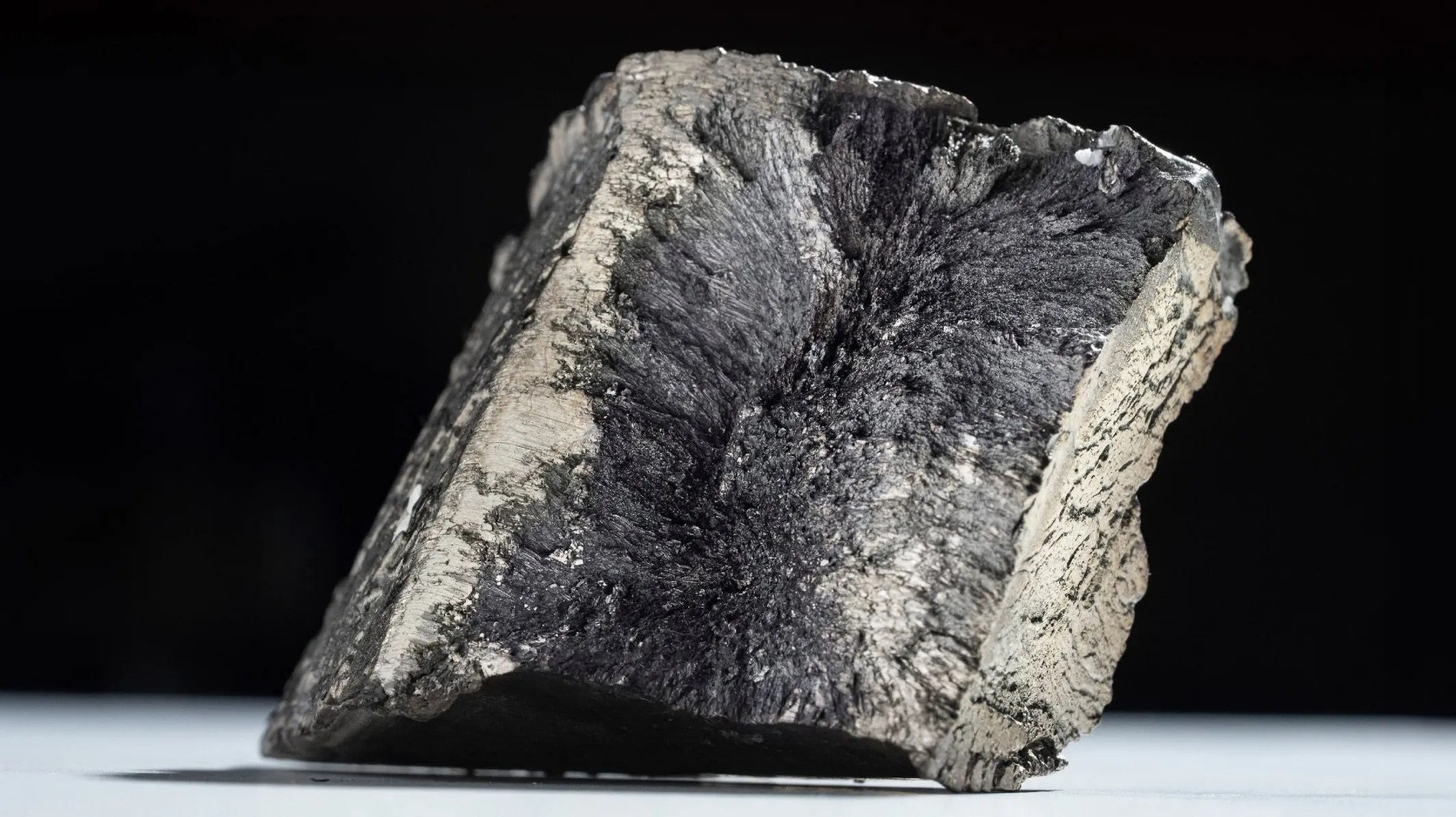

The contingent resource discovery was driven primarily by the company’s high-impact discovery at the Tedong (TDG)-001 well, which holds 2C recoverable resources of 548 billion cubic feet of gas and 13.51 million barrels of condensate. The well lies within the Pertamina EP Working Area, operated by PHE’s affiliate, PT Pertamina EP Cepu, in Region IV Zone 13, according to the release.

The drilling of the Tedong (TDG)-001 well is part of a frontier area exploration initiative across five key locations: East Wolai (EWO)-001, West Wolai (WWO)-001, Julang Emas (JLE)-001, Yaki Emas (YKE)-001, and Tedong (TDG)-001. The initiative aims to confirm the hydrocarbon potential of the Minahaki and Tomori Formation Limestone, Pertamina said.

Another significant discovery, at the Padang Pancuran (PPC)-1 well, located administratively in South Sumatra within the Jambi Merang Working Area, also contributed to the Pertamina Upstream Subholding Group’s 2C contingent resources last year. The PPC-1 well, drilled to a depth of 3,750 feet (1,143 meters), recorded 140.6 MMboe of 2C recoverable resources, the company stated.

PHE completed drilling 22 exploration wells in 2024. Additionally, PHE conducted a 2D seismic survey covering 769 kilometers and a 3D seismic survey spanning 4,990 square kilometers.

“This achievement is concrete evidence of our exploration team’s dedication and hard work, as well as our close collaboration with SKK Migas and the Ministry of Energy and Mineral Resources (ESDM). These efforts contribute to national oil and gas production, supporting the vision of energy self-sufficiency and national energy security,” Director of Exploration at PHE, Muharram Jaya Panguriseng, said.

The discovery “marks a significant milestone in PHE’s mission to increase national oil and gas reserves and support the government’s energy self-sufficiency program under the Asta Cita initiative,” according to the release.

Meanwhile, Pertamina New & Renewable Energy (Pertamina NRE) and PT Kilang Pertamina Internasional (KPI) have officially partnered to develop a project aiming to convert flare gas to power at the Balongan refinery in West Java.

“This initiative aligns with our vision to optimize existing energy resources while significantly reducing carbon emissions,” Pertamina NRE CEO John Anis said.

The Flare Gas to Power project aims to capture waste gas that would otherwise be flared into the atmosphere. The captured gas is then processed through a purification system and directed to a gas turbine or power generator. The resulting energy is used for refinery operations or fed into the power grid, according to a separate release.

KPI President Director Taufik Aditiyawarman stated that, through the project, KPI has the potential to reduce emissions by 80,000 tons of CO2 equivalent per year, decrease boiler gas consumption by more than 2.5 million standard cubic feet per day, and achieve fuel cost savings of over $9 million annually.
