The coming shift to higher-voltage DC

That internal power challenge led Simonelli to one of the most consequential architectural topics in the interview: the likely transition toward higher-voltage DC distribution at very high rack densities.

He framed it pragmatically. At current density levels, the industry knows how to get power into racks at 200 or 300 kilowatts. But as densities rise toward 400 kilowatts and beyond, conventional AC approaches start to run into physical limits: too much cable, too much copper, too much conversion equipment, and too much space consumed by power infrastructure rather than GPUs.

At that point, he said, higher-voltage DC becomes attractive not for philosophical reasons, but because it reduces current, shrinks conductor size, saves space, and leaves more room for revenue-generating compute.
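The arithmetic behind that argument is easy to sketch. For a fixed power, current scales as I = P / V, and resistive loss in a conductor scales as I²R. The snippet below is an illustration only: the 400 kW rack figure comes from the discussion above, but the three voltage levels and the 0.1 milliohm path resistance are assumed values chosen for comparison, not numbers cited by Simonelli. It also treats every case as simple DC, ignoring three-phase AC and power-factor effects.

```python
# Illustrative sketch: the voltages and resistance below are assumptions,
# not figures from the interview.
RACK_POWER_W = 400_000           # 400 kW rack, the density discussed above
ASSUMED_RESISTANCE_OHM = 0.0001  # hypothetical 0.1 milliohm distribution path


def current_amps(power_w: float, voltage_v: float) -> float:
    """Current needed to deliver power_w at voltage_v (I = P / V)."""
    return power_w / voltage_v


def resistive_loss_w(current_a: float, resistance_ohm: float) -> float:
    """Heat dissipated in the conductor (P_loss = I^2 * R)."""
    return current_a ** 2 * resistance_ohm


# Compare a low-voltage busbar with two higher distribution voltages
# (all three levels are hypothetical comparison points).
for voltage in (54, 400, 800):
    amps = current_amps(RACK_POWER_W, voltage)
    loss = resistive_loss_w(amps, ASSUMED_RESISTANCE_OHM)
    print(f"{voltage:>4} V: {amps:8.0f} A, {loss:8.1f} W lost in conductor")
```

Under these assumptions, doubling the voltage from 400 V to 800 V halves the current from 1,000 A to 500 A and cuts the resistive loss to a quarter, which is why conductor size and copper volume shrink as distribution voltage rises.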

“It is again a paradigm shift,” Simonelli said of DC power at these densities. “But it won’t be everywhere.”

That is probably right. The transition will not be universal, and the exact thresholds will evolve. But his underlying point is powerful. As rack densities climb, electrical architecture starts to matter not only for efficiency and reliability, but for physical space allocation inside the rack. Put differently, power distribution becomes a compute-enablement issue.

Distance between accelerators matters, too. The closer GPUs and TPUs can be kept together, the better they perform. If power infrastructure can be compacted, more of the rack can be devoted to dense compute, improving the economics and performance of the system.

That is a strong example of how AI is collapsing traditional boundaries between facility engineering and compute architecture. The two are no longer cleanly separable.

Gas now, renewables over time

On onsite power, Simonelli was refreshingly direct. If the goal is dispatchable onsite generation at the scale now being contemplated for AI facilities, he said, “there really isn’t an alternative other than gas” today.

That is not a dismissal of renewables. Rather, it is a statement about near-term practicality. Gas turbines are the technology that can currently provide the kind of reliable, scalable onsite generation large AI campuses need in the timeframe the market is demanding.

At the same time, Simonelli offered a more nuanced long-term view. He sees data centers with storage-rich electrical architectures as potentially enabling more renewable penetration on the grid. If large data centers can absorb overproduction from solar or otherwise use storage to interact more flexibly with the grid, they may eventually become more compatible with renewable-heavy systems rather than simply competing with them for capacity.

That perspective is worth noting because it moves beyond the simplistic binary of gas versus renewables. In Schneider’s view, gas is the practical bridge for large-scale onsite generation now, while storage-enabled flexibility could help data centers support a more renewable grid over time.

BESS as strategic AI infrastructure

Battery energy storage also occupies a much more central place in Simonelli’s thinking than the industry sometimes grants it.

In his account, BESS is not just an ancillary asset or backup adjunct. It is becoming strategic infrastructure for AI data centers, serving multiple roles at once: smoothing load swings, stabilizing onsite generation, controlling ramp rates, riding through grid faults, and potentially enabling more flexible use of renewable power.
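Two of those roles, smoothing load swings and controlling ramp rates, can be illustrated with a toy model. The sketch below is a hypothetical control loop, not Schneider's logic: the function name, the load profile, and the ramp limit are all invented for illustration, and it ignores battery capacity, efficiency, and state-of-charge limits.

```python
def ramp_limited_dispatch(load_kw, max_ramp_kw_per_step):
    """Split a load profile into grid draw and battery power.

    Grid draw may change by at most max_ramp_kw_per_step between steps.
    The battery supplies (positive) or absorbs (negative) the difference,
    so grid + battery always equals the load at every step.
    """
    grid = []
    battery = []
    previous_grid = load_kw[0]  # assume the grid starts matched to the load
    for load in load_kw:
        delta = load - previous_grid
        # Clamp the change in grid draw to the allowed ramp rate.
        delta = max(-max_ramp_kw_per_step, min(max_ramp_kw_per_step, delta))
        previous_grid += delta
        grid.append(previous_grid)
        battery.append(load - previous_grid)
    return grid, battery


# Hypothetical profile: a training job steps from 100 kW to 900 kW, then drops.
load = [100, 100, 900, 900, 900, 200, 200]
grid, battery = ramp_limited_dispatch(load, max_ramp_kw_per_step=200)
print("grid   :", grid)
print("battery:", battery)
```

In this toy run the grid sees a gradual ramp while the battery covers the step change and then absorbs the surplus when the load drops. That matches the behavior the article describes: the storage hardware stays the same, and the control logic determines what it does.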

The same basic storage technologies, he noted, can be applied across different contexts. What changes more than the chemistry is the control logic and the way those systems are used.

That, too, reflects the larger systems theme running through both interviews. The future AI data center is not being built around one hero technology. It is being built around coordination among power electronics, storage, controls, software, cooling, and compute.

Schneider’s energy-native AI ambition

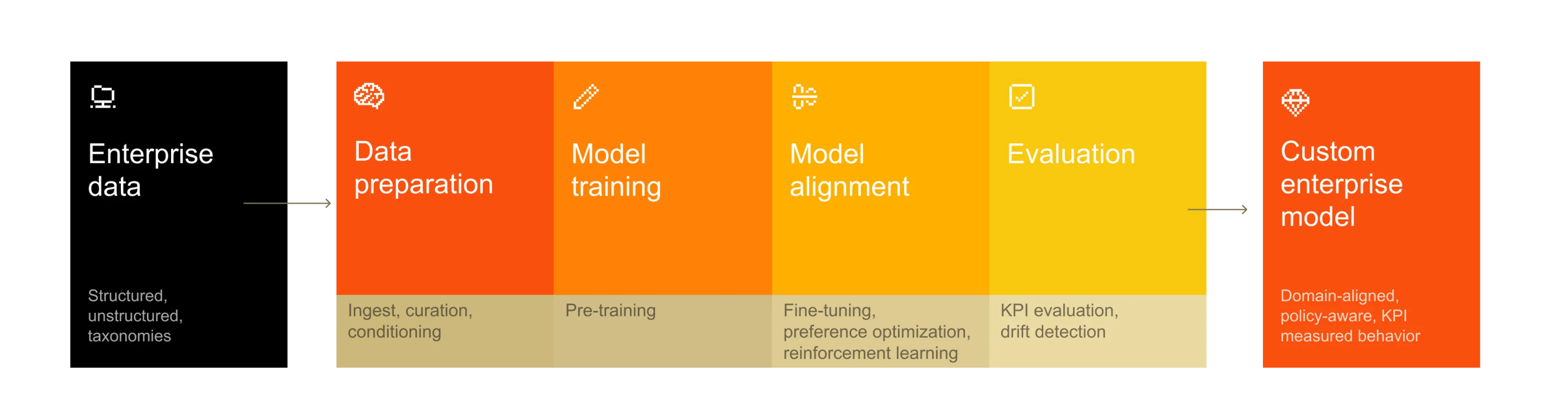

One of the more forward-looking aspects of Simonelli’s interview was his description of Schneider’s thinking around infrastructure-native AI and “energy intelligence.”

He spoke of a developing data layer capable of ingesting power, cooling, and service data into a single environment, one designed to be native to AI and fluent in the language of buildings and energy systems. He described the idea as something akin to a foundational model, but for energy and infrastructure rather than text or documents.

That distinction matters.

Generic models can summarize alarms or describe events. But they do not necessarily understand what a pressure variation on a thermal control system loop means, or how it relates to cooling behavior, power draw, and service conditions. Schneider’s ambition appears to be to build a model that does understand those things because it has been trained on the relevant infrastructure domain.

That creates a useful distinction between agentic frameworks and domain intelligence. Simonelli made clear that Schneider does not necessarily need to own the general-purpose agent shell. It is comfortable using open or partner-driven agentic systems, including NVIDIA’s own tooling, where appropriate. What Schneider wants to own is the infrastructure-native intelligence beneath those frameworks.

That may prove to be an important strategic position. As AI operations tooling proliferates, the companies that succeed may not be the ones with the most generalized AI wrapper, but the ones with the deepest domain-specific understanding of the systems under management.

Omniverse as the common layer

Simonelli also offered a helpful perspective on a question many visitors to GTC were likely asking: if Schneider, Siemens, Cadence, and others all appear to be building around digital twins and Omniverse, does that turn the space into a circular firing squad?

His answer was more nuanced.

Yes, the companies continue to compete in authoring tools, engineering environments, and simulation capabilities. But Omniverse, in his view, is emerging as a shared cross-domain layer where designs can be visualized and understood together. Customers do not want isolated viewports for electrical, mechanical, robotics, and facility domains. They want one environment where all those elements can be seen in relationship.

That does not eliminate competition. It changes where some of it happens.

In other words, Schneider may compete with Siemens or Cadence in the underlying engineering stack while still participating in a common interoperability and visualization environment on top. For customers grappling with multi-domain AI infrastructure design, that may be not only acceptable but necessary.

Reference designs and the industrialization of deployment

Garner, for his part, also emphasized Schneider’s ongoing work with NVIDIA on reference designs.

That is more than partnership theater. In a market rushing toward repeatable deployment at extraordinary scale, reference architectures become a way to compress engineering cycles, reduce uncertainty, and standardize around known-good infrastructure patterns.

Schneider’s role here is revealing. The company is not only supplying gear. It is helping encode infrastructure assumptions into reusable templates aligned with NVIDIA’s compute roadmaps.

That is another sign that AI infrastructure is becoming more industrialized. Standardization is no longer the enemy of innovation. It is increasingly one of the prerequisites for speed.

A feature of the next era: hybrid AI factories

Another subtle but important point from Simonelli concerns workload mix.

He suggested that even at the largest scale, these AI factories are unlikely to remain purely monolithic training environments forever. Instead, they may evolve toward hybrid configurations, with different kinds of compute serving different kinds of workloads inside the same broad campus environment.

That aligns with broader signals from GTC, where training still dominated much of the spectacle but inference, specialized compute, and token economics increasingly shaped the underlying conversation.

The implication is that the future AI campus may not be a single-purpose machine. It may be a layered compute estate, with infrastructure choices increasingly influenced by workload diversity as well as density.

The deeper lesson from Schneider at GTC

What Schneider Electric brought to GTC 2026 was not simply a list of products or a set of partnership announcements. It was a coherent view of where the AI data center is headed. That view has several core components.

First, AI infrastructure has entered a simulation-first era. The data center must increasingly be modeled before it is built, because the interdependence among power, cooling, storage, and compute has grown too consequential to manage reactively.

Second, the electrical behavior of AI workloads is changing the relationship between the data center and the grid. UPS and storage are evolving from backup assets into dynamic control systems for load smoothing, fault ride-through, and ramp management.

Third, cooling, while still transformative, is no longer the only or even the hardest engineering problem. Power availability, power delivery, and electrical topology now loom larger, both at the site level and inside the rack.

Fourth, the industry is moving toward a more integrated energy-and-compute architecture, one in which onsite generation, storage, grid interaction, and high-density distribution become part of the same design conversation.

And fifth, the operating layer of the future data center will likely be shaped not just by generalized AI, but by infrastructure-native intelligence trained to understand the physics, signals, alarms, and interactions of real facilities.

For Data Center Frontier readers, the significance of that message is hard to miss.

The AI data center has not merely outgrown the traditional enterprise facility. It is beginning to outgrow the conceptual boundaries of the data center itself. It is becoming an engineered system of systems, one part compute platform, one part power system, one part thermal machine, and one part digital model.

That may be the most important lesson from Schneider Electric’s presence at GTC this year. The next phase of AI infrastructure will not be won simply by whoever can procure the most GPUs. It will also be shaped by who can best design, simulate, power, cool, and operate the vast physical systems required to make those GPUs useful at industrial scale.

And in San Jose last month, Schneider made clear that it sees that systems challenge as the real frontier now opening.