
Researchers from Soochow University in China have introduced Chain-of-Tools (CoTools), a novel framework designed to enhance how large language models (LLMs) use external tools. CoTools aims to be more efficient and flexible than existing methods, allowing LLMs to leverage vast toolsets directly within their reasoning process, including tools they haven't explicitly been trained on.

For enterprises looking to build sophisticated AI agents, this capability could unlock more powerful and adaptable applications without the typical drawbacks of current tool integration techniques.

While modern LLMs excel at text generation, understanding and even complex reasoning, they need to interact with external resources and tools such as databases or applications for many tasks. Equipping LLMs with external tools—essentially APIs or functions they can call—is crucial for extending their capabilities into practical, real-world applications.

However, current methods for enabling tool use face significant trade-offs. One common approach involves fine-tuning the LLM on examples of tool usage. While this can make the model proficient at calling the specific tools seen during training, it often restricts the model to only those tools. Furthermore, the fine-tuning process itself can sometimes negatively impact the LLM’s general reasoning abilities, such as Chain-of-Thought (CoT), potentially diminishing the core strengths of the foundation model.

The alternative approach relies on in-context learning (ICL), where the LLM is provided with descriptions of available tools and examples of how to use them directly within the prompt. This method offers flexibility, allowing the model to potentially use tools it hasn’t seen before. However, constructing these complex prompts can be cumbersome, and the model’s efficiency decreases significantly as the number of available tools grows, making it less practical for scenarios with large, dynamic toolsets.

As the researchers note in the paper introducing Chain-of-Tools, an LLM agent “should be capable of efficiently managing a large amount of tools and fully utilizing unseen ones during the CoT reasoning, as many new tools may emerge daily in real-world application scenarios.”

CoTools offers a compelling alternative to existing methods by cleverly combining aspects of fine-tuning and semantic understanding while crucially keeping the core LLM “frozen”—meaning its original weights and powerful reasoning capabilities remain untouched. Instead of fine-tuning the entire model, CoTools trains lightweight, specialized modules that work alongside the LLM during its generation process.

“The core idea of CoTools is to leverage the semantic representation capabilities of frozen foundation models for determining where to call tools and which tools to call,” the researchers write.

In essence, CoTools taps into the rich understanding embedded within the LLM’s internal representations, often called “hidden states,” which are computed as the model processes text and generates response tokens.

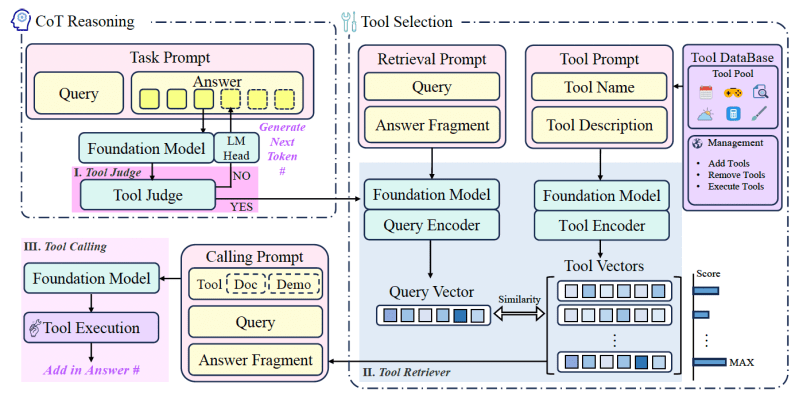

The CoTools framework comprises three main components that operate sequentially during the LLM’s reasoning process:

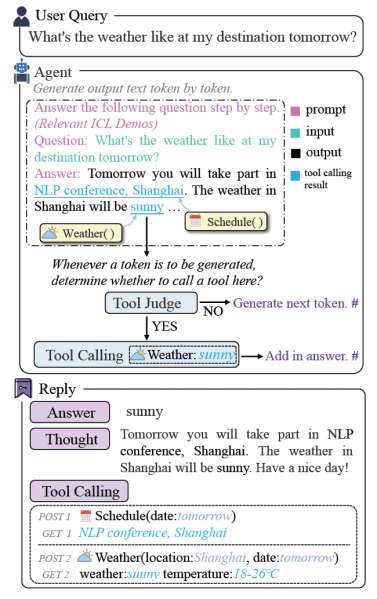

Tool Judge: As the LLM generates its response token by token, the Tool Judge analyzes the hidden state associated with the potential next token and decides whether calling a tool is appropriate at that specific point in the reasoning chain.
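To make the Judge's role concrete, here is a minimal Python sketch that treats it as a binary classifier over a single hidden-state vector. The logistic (linear) probe, the `ToolJudge` class, its toy weights and the 0.5 threshold are all hypothetical; the paper's actual module and training procedure may differ. What the sketch does show is that the decision only reads the frozen model's hidden state and never modifies the model itself.

```python
import math
from typing import Sequence


class ToolJudge:
    """Hypothetical Tool Judge: a logistic probe over one hidden-state vector.

    The weights are illustrative placeholders; a real judge would learn them
    while the base LLM's own weights stay frozen.
    """

    def __init__(self, weights: Sequence[float], bias: float, threshold: float = 0.5):
        self.weights = list(weights)
        self.bias = bias
        self.threshold = threshold

    def score(self, hidden_state: Sequence[float]) -> float:
        """Return the estimated probability that a tool should be called here."""
        if len(hidden_state) != len(self.weights):
            raise ValueError("hidden_state size does not match judge weights")
        logit = sum(w * h for w, h in zip(self.weights, hidden_state)) + self.bias
        return 1.0 / (1.0 + math.exp(-logit))  # sigmoid

    def should_call_tool(self, hidden_state: Sequence[float]) -> bool:
        """Binary decision: call a tool at this position or keep generating text."""
        return self.score(hidden_state) >= self.threshold


# Toy 3-dimensional "hidden states"; real ones have thousands of dimensions.
judge = ToolJudge(weights=[2.0, -1.0, 0.5], bias=-0.5)
print(judge.should_call_tool([1.0, 0.0, 1.0]))  # logit 2.0 -> True
print(judge.should_call_tool([0.0, 1.0, 0.0]))  # logit -1.5 -> False
```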

Tool Retriever: If the Judge determines a tool is needed, the Retriever chooses the most suitable tool for the task. The Tool Retriever has been trained to create an embedding of the query and compare it with embeddings of the available tools. This allows it to efficiently select the most semantically relevant tool from the pool, including "unseen" tools (i.e., tools that were not part of the training data for the CoTools modules).
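The retrieval step can be sketched as nearest-neighbor search by cosine similarity. In this illustration the query vector and the tool embeddings are hand-written placeholders; in CoTools they would come from the trained Retriever and the tools' descriptions. The names `retrieve_tools` and `tool_index`, and the tools themselves, are hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve_tools(
    query_vec: Sequence[float],
    tool_index: Dict[str, Sequence[float]],
    top_k: int = 1,
) -> List[Tuple[str, float]]:
    """Rank every tool by similarity to the query and return the top_k matches."""
    scored = [(name, cosine(query_vec, vec)) for name, vec in tool_index.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


# Hypothetical tool embeddings (e.g. derived from each tool's description).
tool_index: Dict[str, Sequence[float]] = {
    "add": [1.0, 0.0, 0.0],
    "multiply": [0.0, 1.0, 0.0],
    "lookup_birthplace": [0.0, 0.0, 1.0],
}
# A new, "unseen" tool only needs an embedding; nothing is retrained.
tool_index["lookup_capital"] = [0.0, 0.2, 0.9]

for name, sim in retrieve_tools([0.1, 0.0, 1.0], tool_index, top_k=2):
    print(f"{name}: {sim:.3f}")
```

Because selection reduces to comparing vectors, supporting a new tool in this sketch only requires adding its embedding to the index, which is the kind of property that lets a retriever handle tools it never saw during training.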

Tool Calling: Once the best tool is selected, CoTools uses an ICL prompt that demonstrates filling in the tool’s parameters based on the context. This targeted use of ICL avoids the inefficiency of adding thousands of demonstrations in the prompt for the initial tool selection. Once the selected tool is executed, its result is inserted back into the LLM’s response generation.
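The parameter-filling step might look something like the following sketch. It assumes a simple `tool(arg1, arg2)` call format, numeric arguments, and a hand-written demonstration set for one hypothetical `multiply` tool; `fake_llm` stands in for the frozen LLM's completion. The prompt contains demonstrations for the selected tool only, and the tool's result is appended to the running text so generation can continue from it.

```python
import re
from typing import Callable, Dict, List, Tuple

# Hypothetical demonstrations: only the *selected* tool's examples go in the prompt.
DEMOS: Dict[str, List[Tuple[str, str]]] = {
    "multiply": [
        ("A box holds 6 rows of 4 eggs. How many eggs?", "multiply(6, 4)"),
        ("There are 3 teams of 11 players.", "multiply(3, 11)"),
    ],
}

# Hypothetical tool implementations keyed by name.
TOOLS: Dict[str, Callable[..., float]] = {
    "multiply": lambda a, b: a * b,
}

CALL_PATTERN = re.compile(r"^\s*(\w+)\((.*)\)\s*$")


def build_call_prompt(tool: str, context: str) -> str:
    """Build a short in-context prompt for filling in one tool's arguments."""
    parts = [f"Fill in the arguments for the tool `{tool}`."]
    for question, call in DEMOS[tool]:
        parts.append(f"Context: {question}\nCall: {call}")
    parts.append(f"Context: {context}\nCall:")
    return "\n\n".join(parts)


def execute_call(call_text: str, expected_tool: str) -> float:
    """Parse a completion such as 'multiply(12, 7)' and run the tool."""
    match = CALL_PATTERN.match(call_text)
    if not match or match.group(1) != expected_tool:
        raise ValueError(f"could not parse a call to {expected_tool!r}: {call_text!r}")
    args = [float(arg) for arg in match.group(2).split(",")]
    return TOOLS[expected_tool](*args)


def fake_llm(prompt: str) -> str:
    """Stand-in for the frozen LLM completing the prompt (hypothetical)."""
    return "multiply(12, 7)"


context = "Each of the 12 shelves holds 7 books, so the total is"
prompt = build_call_prompt("multiply", context)
result = execute_call(fake_llm(prompt), "multiply")
context += f" {result:g}"  # splice the tool result back into the generation
print(context)
```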

By separating the decision-making (Judge) and selection (Retriever), which rely on semantic understanding, from the parameter filling (Calling via focused ICL), CoTools stays efficient even with massive toolsets while preserving the LLM's core abilities and allowing flexible use of new tools. However, since CoTools requires access to the model's hidden states, it can only be applied to open-weight models such as Llama and Mistral, not to proprietary models such as GPT-4o and Claude, whose hidden states are not exposed.
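Putting the three components together, a decoding loop under these assumptions could look like the sketch below. Each component is passed in as a plain function, and a scripted `step` function stands in for the frozen LLM, returning the next token and a toy one-dimensional hidden state. The function names and the `<eos>` marker are hypothetical. The loop only reads from the base model's step and never updates it, which is what keeping the LLM frozen amounts to here.

```python
from typing import Callable, Iterable, Sequence, Tuple

Step = Callable[[str], Tuple[str, Sequence[float]]]


def generate_with_tools(
    prompt: str,
    step: Step,                                   # frozen LLM: text -> (next token, hidden state)
    judge: Callable[[Sequence[float]], bool],     # Tool Judge
    retrieve: Callable[[Sequence[float]], str],   # Tool Retriever
    call_tool: Callable[[str, str], str],         # Tool Calling (fill args, execute)
    max_tokens: int = 50,
) -> str:
    """Generate text, letting the judge swap a tool result in for a token."""
    text = prompt
    for _ in range(max_tokens):
        token, hidden = step(text)
        if token == "<eos>":
            break
        if judge(hidden):
            tool = retrieve(hidden)
            text += call_tool(tool, text)  # tool output replaces the token
        else:
            text += token
    return text


def make_scripted_step(script: Iterable[Tuple[str, Sequence[float]]]) -> Step:
    """Toy stand-in for an LLM that emits a fixed sequence of tokens."""
    it = iter(script)

    def step(_text: str) -> Tuple[str, Sequence[float]]:
        return next(it, ("<eos>", [0.0]))

    return step


script = [(" 3 plus 4 is", [0.0]), ("<num>", [1.0]), (".", [0.0])]
out = generate_with_tools(
    "Q:",
    make_scripted_step(script),
    judge=lambda h: h[0] > 0.5,
    retrieve=lambda h: "add",
    call_tool=lambda tool, text: " 7" if tool == "add" else "",
)
print(out)  # Q: 3 plus 4 is 7.
```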

The researchers evaluated CoTools across two distinct application scenarios: numerical reasoning using arithmetic tools and knowledge-based question answering (KBQA), which requires retrieval from knowledge bases.

On arithmetic benchmarks like GSM8K-XL (using basic operations) and FuncQA (using more complex functions), CoTools applied to LLaMA2-7B achieved performance comparable to ChatGPT on GSM8K-XL and slightly outperformed or matched another tool-learning method, ToolkenGPT, on FuncQA variants. The results highlighted that CoTools effectively enhances the capabilities of the underlying foundation model.

For the KBQA tasks, tested on the KAMEL dataset and a newly constructed SimpleToolQuestions (STQuestions) dataset featuring a very large tool pool (1,836 tools, including 837 unseen in the test set), CoTools demonstrated superior tool selection accuracy. It particularly excelled in scenarios with massive numbers of tools and when dealing with unseen tools, leveraging their descriptive information for effective retrieval where methods relying solely on trained tool representations faltered. The experiments also indicated that CoTools maintained strong performance even with lower-quality training data.

Implications for the enterprise

Chain-of-Tools presents a promising direction for building more practical and powerful LLM-powered agents in the enterprise. This is especially useful as new standards such as the Model Context Protocol (MCP) enable developers to integrate external tools and resources easily into their applications. Enterprises can potentially deploy agents that adapt to new internal or external APIs and functions with minimal retraining overhead.

The framework’s reliance on semantic understanding via hidden states allows for nuanced and accurate tool selection, which could lead to more reliable AI assistants in tasks that require interaction with diverse information sources and systems.

“CoTools explores the way to equip LLMs with massive new tools in a simple way,” Mengsong Wu, lead author of the CoTools paper and machine learning researcher at Soochow University, told VentureBeat. “It could be used to build a personal AI agent with MCP and do complex reasoning with scientific tools.”

However, Wu also noted that they have only conducted preliminary exploratory work so far. “To apply it in a real-world environment, you still need to find a balance between the cost of fine-tuning and the efficiency of generalized tool invocation,” Wu said.

The researchers have released the code for training the Judge and Retriever modules on GitHub.

“We believe that our ideal Tool Learning agent framework based on frozen LLMs with its practical realization method CoTools can be useful in real-world applications and even drive further development of Tool Learning,” the researchers write.
