The United States and China are entangled in what many have dubbed an “AI arms race.”

In the early days of this standoff, US policymakers drove an agenda centered on “winning” the race, mostly from an economic perspective. In recent months, leading AI labs such as OpenAI and Anthropic got involved in pushing the narrative of “beating China” in what appeared to be an attempt to align themselves with the incoming Trump administration. The belief that the US can win in such a race was based mostly on the early advantage it had over China in advanced GPU compute resources and the effectiveness of AI’s scaling laws.

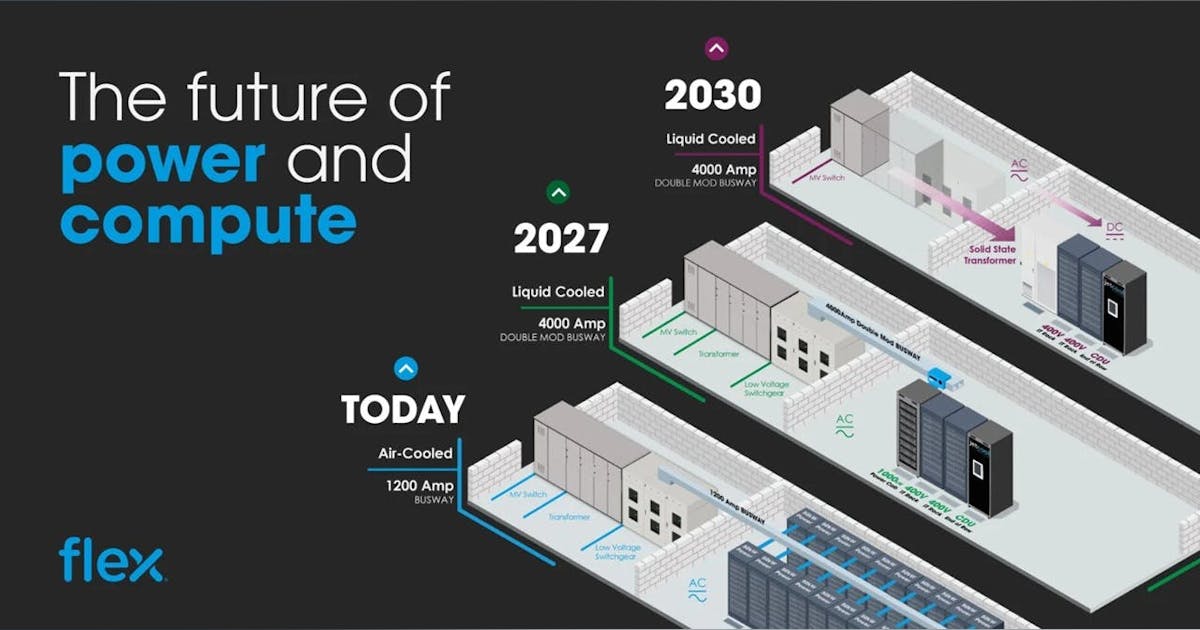

But now it appears that access to large quantities of advanced compute resources is no longer the defining or sustainable advantage many had thought it would be. In fact, the capability gap between leading US and Chinese models has essentially disappeared, and in one important way the Chinese models may now have an advantage: They are able to achieve near equivalent results while using only a small fraction of the compute resources available to the leading Western labs.

The AI competition is increasingly being framed within narrow national security terms, as a zero-sum game, and influenced by assumptions that a future war between the US and China, centered on Taiwan, is inevitable. The US has employed “chokepoint” tactics to limit China’s access to key technologies like advanced semiconductors, and China has responded by accelerating its efforts toward self-sufficiency and indigenous innovation, which is causing US efforts to backfire.

Recently even outgoing US Secretary of Commerce Gina Raimondo, a staunch advocate for strict export controls, finally admitted that using such controls to hold back China’s progress on AI and advanced semiconductors is a “fool’s errand.” Ironically, the unprecedented export control packages targeting China’s semiconductor and AI sectors have unfolded alongside tentative bilateral and multilateral engagements to establish AI safety standards and governance frameworks—highlighting a paradoxical desire of both sides to compete and cooperate.

When we consider this dynamic more deeply, it becomes clear that the real existential threat ahead is not from China, but from the weaponization of advanced AI by bad actors and rogue groups who seek to create broad harms, gain wealth, or destabilize society. As with nuclear arms, China, as a nation-state, must be careful about using AI-powered capabilities against US interests, but bad actors, including extremist organizations, would be much more likely to abuse AI capabilities with little hesitation. Given the asymmetric nature of AI technology, which is much like cyberweapons, it is very difficult to fully prevent and defend against a determined foe who has mastered its use and intends to deploy it for nefarious ends.

Given the ramifications, it is incumbent on the US and China as global leaders in developing AI technology to jointly identify and mitigate such threats, collaborate on solutions, and cooperate on developing a global framework for regulating the most advanced models, instead of erecting new fences, small or large, around AI technologies and pursuing policies that deflect focus from the real threat.

It is now clearer than ever that despite the high stakes and escalating rhetoric, there will not and cannot be any long-term winners if the intense competition continues on its current path. Instead, the consequences could be severe—undermining global stability, stalling scientific progress, and leading both nations toward a dangerous technological brinkmanship. This is particularly salient given the importance of Taiwan and the global foundry leader TSMC in the AI stack, and the increasing tensions around the high-tech island.

Heading blindly down this path will bring the risk of isolation and polarization, threatening not only international peace but also the vast potential benefits AI promises for humanity as a whole.

Historical narratives, geopolitical forces, and economic competition have all contributed to the current state of the US-China AI rivalry. A recent report from the US-China Economic and Security Review Commission, for example, frames the entire issue in binary terms, focused on dominance or subservience. This “winner takes all” logic overlooks the potential for global collaboration and could even provoke a self-fulfilling prophecy by escalating conflict. Under the new Trump administration this dynamic will likely become more accentuated, with increasing discussion of a Manhattan Project for AI and redirection of US military resources from Ukraine toward China.

Fortunately, a glimmer of hope for a responsible approach to AI collaboration is appearing now: Donald Trump posted on January 17 that he’d restarted direct dialogue with Chairman Xi Jinping regarding various areas of collaboration, and that, given past cooperation, the two countries should continue to be “partners and friends.” The outcome of the TikTok drama, which puts Trump at odds with sharp China critics in his own administration and Congress, will be a preview of how his efforts to put US-China relations on a less confrontational trajectory will fare.

The promise of AI for good

Western mass media usually focuses on attention-grabbing issues described in terms like the “existential risks of evil AI.” Unfortunately, the AI safety experts who get the most coverage often recite the same narratives, scaring the public. In reality, no credible research shows that more capable AI will become increasingly evil. We need to challenge the current false dichotomy of pure accelerationism versus doomerism to allow for a model more like collaborative acceleration.

It is important to note the significant difference between the way AI is perceived in Western developed countries and developing countries. In developed countries the public sentiment toward AI is 60% to 70% negative, while in the developing markets the positive ratings are 60% to 80%. People in the latter places have seen technology transform their lives for the better in the past decades and are hopeful AI will help solve the remaining issues they face by improving education, health care, and productivity, thereby elevating their quality of life and giving them greater world standing. What Western populations often fail to realize is that those same benefits could directly improve their lives as well, given the high levels of inequity even in developed markets. Consider what progress would be possible if we reallocated the trillions that go into defense budgets each year to infrastructure, education, and health-care projects.

Once we get to the next phase, AI will help us accelerate scientific discovery, develop new drugs, extend our health span, reduce our work obligations, and ensure access to high-quality education for all. This may sound idealistic, but given current trends, most of this can become a reality within a generation, and maybe sooner. To get there we’ll need more advanced AI systems, which will be a much more challenging goal if we divide up compute/data resources and research talent pools. Almost half of all top AI researchers globally (47%) were born or educated in China, according to industry studies. It’s hard to imagine how we could have gotten where we are without the efforts of Chinese researchers. Active collaboration with China on joint AI research could be pivotal to supercharging progress with a major infusion of quality training data and researchers.

The escalating AI competition between the US and China poses significant threats to both nations and to the entire world. The risks inherent in this rivalry are not hypothetical—they could lead to outcomes that threaten global peace, economic stability, and technological progress. Framing the development of artificial intelligence as a zero-sum race undermines opportunities for collective advancement and security. Rather than succumb to the rhetoric of confrontation, it is imperative that the US and China, along with their allies, shift toward collaboration and shared governance.

Our recommendations for policymakers:

- 1. Reduce national security dominance over AI policy. Both the US and China must recalibrate their approach to AI development, moving away from viewing AI primarily as a military asset. This means reducing the emphasis on national security concerns that currently dominate every aspect of AI policy. Instead, policymakers should focus on civilian applications of AI that can directly benefit their populations and address global challenges, such as health care, education, and climate change. The US also needs to investigate how to implement a possible universal basic income program as job displacement from AI adoption becomes a bigger issue domestically.

- 2. Promote bilateral and multilateral AI governance. Establishing a robust dialogue between the US, China, and other international stakeholders is crucial for the development of common AI governance standards. This includes agreeing on ethical norms, safety measures, and transparency guidelines for advanced AI technologies. A cooperative framework would help ensure that AI development is conducted responsibly and inclusively, minimizing risks while maximizing benefits for all.

- 3. Expand investment in detection and mitigation of AI misuse. The risk of AI misuse by bad actors, whether through misinformation campaigns, attacks on telecom, power, or financial systems, or cyberattacks with the potential to destabilize society, is the biggest existential threat to the world today. Dramatically increasing funding for and international cooperation in detecting and mitigating these risks is vital. The US and China must agree on shared standards for the responsible use of AI and collaborate on tools that can monitor and counteract misuse globally.

- 4. Create incentives for collaborative AI research. Governments should provide incentives for academic and industry collaborations across borders. By creating joint funding programs and research initiatives, the US and China can foster an environment where the best minds from both nations contribute to breakthroughs in AI that serve humanity as a whole. This collaboration would help pool talent, data, and compute resources, overcoming barriers that neither country could tackle alone. A global effort akin to a CERN for AI would bring much more value to the world, and a more peaceful outcome, than the Manhattan Project for AI that many in Washington are promoting today.

- 5. Establish trust-building measures. Both countries need to prevent misinterpretations of AI-related actions as aggressive or threatening. They could do this via data-sharing agreements, joint projects in nonmilitary AI, and exchanges between AI researchers. Reducing import restrictions for civilian AI use cases, for example, could help the nations rebuild some trust and make it possible for them to discuss deeper cooperation on joint research. These measures would help build transparency, reduce the risk of miscommunication, and pave the way for a less adversarial relationship.

- 6. Support the development of a global AI safety coalition. A coalition that includes major AI developers from multiple countries could serve as a neutral platform for addressing ethical and safety concerns. This coalition would bring together leading AI researchers, ethicists, and policymakers to ensure that AI progresses in a way that is safe, fair, and beneficial to all. This effort should not exclude China, as it remains an essential partner in developing and maintaining a safe AI ecosystem.

- 7. Shift the focus toward AI for global challenges. It is crucial that the world’s two AI superpowers use their capabilities to tackle global issues, such as climate change, disease, and poverty. By demonstrating the positive societal impacts of AI through tangible projects and presenting it not as a threat but as a powerful tool for good, the US and China can reshape public perception of AI.

Our choice is stark but simple: We can proceed down a path of confrontation that will almost certainly lead to mutual harm, or we can pivot toward collaboration, which offers the potential for a prosperous and stable future for all. Artificial intelligence holds the promise to solve some of the greatest challenges facing humanity, but realizing this potential depends on whether we choose to race against each other or work together.

The opportunity to harness AI for the common good is a chance the world cannot afford to miss.

Alvin Wang Graylin

Alvin Wang Graylin is a technology executive, author, investor, and pioneer with over 30 years of experience shaping innovation in AI, XR (extended reality), cybersecurity, and semiconductors. Currently serving as global vice president at HTC, Graylin was the company’s China president from 2016 to 2023. He is the author of Our Next Reality.

Paul Triolo

Paul Triolo is a partner for China and technology policy lead at DGA-Albright Stonebridge Group. He advises clients in technology, financial services, and other sectors as they navigate complex political and regulatory matters in the US, China, the European Union, India, and around the world.