Note for readers: This newsletter discusses gun violence, a raw and tragic issue in America. It was already in progress on Wednesday when a school shooting occurred at Evergreen High School in Colorado and Charlie Kirk was shot and killed at Utah Valley University.

Earlier this week, the Trump administration’s Make America Healthy Again movement released a strategy for improving the health and well-being of American children. The report was titled—you guessed it—Make Our Children Healthy Again.

Robert F. Kennedy Jr., who leads the Department of Health and Human Services, and his colleagues are focusing on four key aspects of child health: diet, exercise, chemical exposure, and overmedicalization.

Anyone who’s been listening to RFK Jr. posturing on health and wellness won’t be surprised by these priorities. And the first two are pretty obvious. On the whole, American children should be eating more healthily. And they should be getting more exercise.

But there’s a glaring omission. The leading cause of death for American children and teenagers isn’t ultraprocessed food or exposure to some chemical. It’s gun violence.

Yesterday’s news of yet more high-profile shootings at schools in the US throws this disconnect into even sharper relief. Many public health researchers argue that it is time to treat gun violence in the US as what it is: a public health crisis.

I live in London, UK, with my husband and two young children. We don’t live in a particularly fancy part of the city—in one recent ranking of London boroughs from most to least posh, ours came in at 30th out of 33. I do worry about crime. But I don’t worry about gun violence.

That changed when I temporarily moved my family to the US a couple of years ago. We rented the ground-floor apartment of a lovely home in Cambridge, Massachusetts—a beautiful area with good schools, pastel-colored houses, and fluffy rabbits hopping about. It wasn’t until after we’d moved in that my landlord told me he had guns in the basement.

My daughter joined the kindergarten of a local school that specialized in music, and we took her younger sister along to watch the kids sing songs about friendship. It was all so heartwarming—until we noticed the school security officer at the entrance carrying a gun.

Later in the year, I received an email alert from the superintendent of the Cambridge Public Schools. “At approximately 1:45 this afternoon, a Cambridge Police Department Youth Officer assigned to Cambridge Rindge and Latin School accidentally discharged their firearm while using a staff bathroom inside the school,” the message began. “The school day was not disrupted.”

These experiences, among others, truly brought home to me the cultural differences over firearms between the US and the UK (along with most other countries). For the first time, I worried about my children’s exposure to them. I banned my children from accessing parts of the house. I felt guilty that my four-year-old had to learn what to do if a gunman entered her school.

But it’s the statistics that are the most upsetting.

In 2023, 46,728 people died from gun violence in the US, according to a report published in June by the Johns Hopkins Bloomberg School of Public Health. That includes both homicides and suicides, and it breaks down to 128 deaths per day, on average. The majority of those who die from gun violence are adults. But the figures for children are sickening, too. In 2023, 2,566 young people died from gun violence. Of those, 234 were under the age of 10.

Gun death rates among children have more than doubled since 2013. Firearms are involved in more child deaths than cancer or car crashes.

Many other children survive gun violence with nonfatal—but often life-changing—injuries. And the impacts are felt beyond those who are physically injured. Witnessing gun violence or hearing gunshots can understandably cause fear, sadness, and distress.

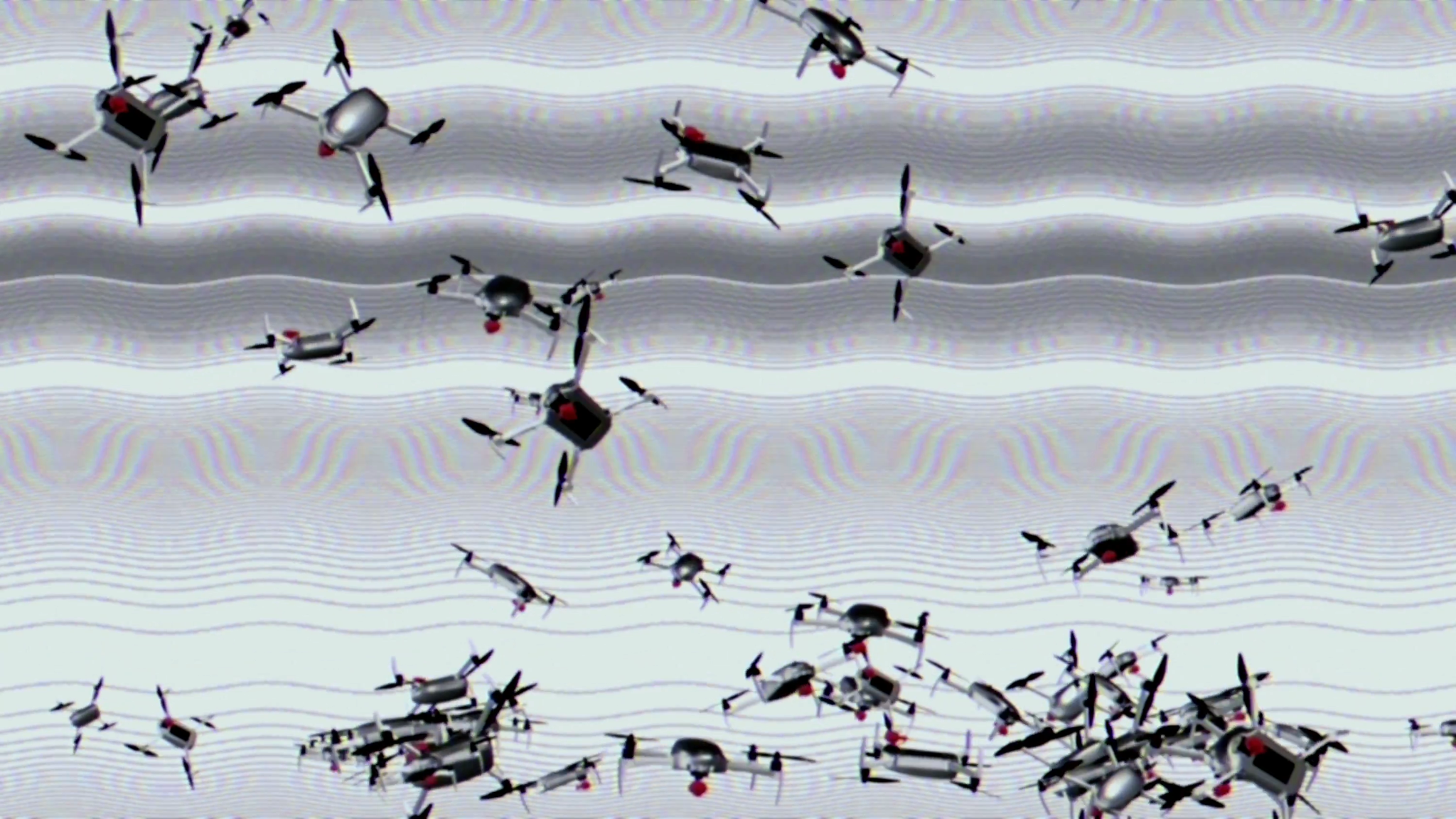

That’s worth bearing in mind when you consider that there have been 434 school shootings in the US since Columbine in 1999. The Washington Post estimates that 397,000 students have experienced gun violence at school in that period. Another school shooting took place at Evergreen High School in Colorado on Wednesday, adding to that total.

“Being indirectly exposed to gun violence takes its toll on our mental health and children’s ability to learn,” says Daniel Webster, Bloomberg Professor of American Health at the Johns Hopkins Center for Gun Violence Solutions in Baltimore.

The MAHA report states that “American youth face a mental health crisis,” going on to note that “suicide deaths among 10- to 24-year-olds increased by 62% from 2007 to 2021” and that “suicide is now the leading cause of death in teens aged 15-19.” What it doesn’t say is that around half of these suicides involve guns.

“When you add all these dimensions, [gun violence is] a very huge public health problem,” says Webster.

Researchers who study gun violence have been saying the same thing for years. And in 2024, then-US Surgeon General Vivek Murthy declared it a public health crisis. “We don’t have to subject our children to the ongoing horror of firearm violence in America,” Murthy said in a statement at the time. Instead, he argued, we should tackle the problem using a public health approach.

Part of that approach involves identifying who is at the greatest risk and offering support to lower that risk, says Webster. Young men who live in poor communities tend to have the highest risk of gun violence, he says, as do those who experience crisis or turmoil. Mediating conflicts or limiting access to firearms, even temporarily, can help lower the incidence of gun violence, he adds.

There’s an element of social contagion, too, adds Webster. Shooting begets more shooting. He likens it to the outbreak of an infectious disease. “When more people get vaccinated … infection rates go down,” he says. “Almost exactly the same thing happens with gun violence.”

But existing efforts are already under threat. The Trump administration has eliminated hundreds of millions of dollars in grants for organizations working to reduce gun violence.

Webster thinks the MAHA report has “missed the mark” when it comes to the health and well-being of children in the US. “This document is almost the polar opposite to how many people in public health think,” he says. “We have to acknowledge that injuries and deaths from firearms are a big threat to the health and safety of children and adolescents.”

This article first appeared in The Checkup, MIT Technology Review’s weekly biotech newsletter. To receive it in your inbox every Thursday, and read articles like this first, sign up here.