
The sleeping giant has awoken!

For a while, it seemed like Amazon was playing catch-up in the race to offer its users, particularly the millions of developers building atop Amazon Web Services (AWS) cloud infrastructure, compelling first-party AI models and tools.

But in late 2024, it debuted its own internal foundation model family, Amazon Nova, with text, image and even video generation capabilities, and last month it unveiled a new Amazon Alexa voice assistant powered in part by Anthropic’s Claude family of models.

Then, on Monday, the e-commerce and cloud giant’s artificial general intelligence division, Amazon AGI, announced the release of Amazon Nova Act, an experimental developer kit for building AI agents that can navigate the web and complete tasks autonomously, powered by a custom, proprietary version of Amazon’s Nova large language model (LLM). Oh, and the software development kit (SDK) is open source under a permissive Apache 2.0 license, though the SDK is designed to work only with Amazon’s in-house custom Nova model, not any third-party ones.

The goal is to enable third-party developers to build AI agents capable of reliably performing tasks within web browsers.

But how does Amazon’s Nova Act stack up to other agent-building platforms on the market, such as Microsoft’s AutoGen, Salesforce’s Agentforce, and of course, OpenAI’s recently released open source Agents SDK?

A different, more thoughtful approach to AI agents

Since the public rise of large language models (LLMs), most “agent” systems have been limited to responding in natural language or providing information by querying knowledge bases.

Nova Act is part of the larger industry shift toward action-based agents: systems that can complete actual tasks across digital environments on behalf of the user. One leading example is OpenAI’s new Responses API, which gives users access to its autonomous browser navigator and which developers can integrate into AI agents through the OpenAI Agents SDK.

Amazon AGI emphasizes that current agent systems, while promising, struggle with reliability and often require human supervision, especially when handling multi-step or complex workflows.

Nova Act is specifically designed to address these limitations by providing a set of atomic, prescriptive commands that can be chained together into reliable workflows.

Deniz Birlikci, a Member of Technical Staff at Amazon, described the broader vision in a video introducing Nova Act: soon, there will be more AI agents than people browsing the web, carrying out tasks on behalf of users.

David Luan, VP of Amazon’s Autonomy Team and Head of AGI SF Lab, framed the mission more directly in a recent video call interview with VentureBeat: “We’ve created this new experimental AI model that is trained to perform actions in a web browser. Fundamentally, we think that agents are the building block of computing,” he said.

Luan, formerly a co-founder and CEO of Adept AI, joined Amazon in 2024 as part of an acqui-hire. Luan said he has long been a proponent of AI agents. “With Adept, we were the first company to really start working on AI agents. At this point, everybody knows how important agents are. It was pretty cool to be a bit ahead of our time,” he added.

What Nova Act offers devs

The Nova Act SDK provides developers with a framework for constructing web-based automation agents using natural language prompts broken down into clear, manageable steps.

Unlike typical LLM-powered agents that attempt entire workflows from a single prompt—often resulting in unreliable behavior—Nova Act is designed to incrementally execute smaller, verifiable tasks.

Some of the key features of Nova Act include:

- Fine-Grained Task Decomposition: Developers can break down complex digital workflows into smaller act() calls, each guiding the agent to perform specific UI interactions.

- Direct Browser Manipulation via Playwright: Nova Act integrates with Playwright, an open-source browser automation framework developed by Microsoft. Playwright allows developers to control web browsers programmatically—clicking elements, filling forms, or navigating pages—without relying solely on AI predictions. This integration is particularly useful for handling sensitive tasks such as entering passwords or credit card details. For example, instead of sending sensitive information to the model, developers can instruct Nova Act to focus on a password field and then use Playwright APIs to securely enter the password without the model ever “seeing” it. This approach helps strengthen security and privacy when automating web interactions.

- Python Integration: The SDK allows developers to interleave Python code with Nova Act commands, including standard Python tools such as breakpoints, assertions, or thread pooling for parallel execution.

- Structured Information Extraction: The SDK supports structured data extraction through Pydantic schemas, allowing agents to convert screen content into structured formats.

- Parallelization and Scheduling: Developers can run multiple Nova Act instances concurrently and schedule automated workflows without the need for continuous human oversight.
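
To make the decomposition and sensitive-data points concrete, here is a minimal Python sketch of the pattern rather than the SDK itself. `StubAgent`, `type_directly()` and `log_in()` are hypothetical names invented for illustration: the stub only records prompts, where a real agent would drive a browser. The point is that each `act()` call covers one small step, and the password travels through a direct automation channel instead of a prompt.

```python
from dataclasses import dataclass, field


@dataclass
class StubAgent:
    """Hypothetical stand-in for a browser agent; it only records what it is asked."""
    prompts: list[str] = field(default_factory=list)
    typed: list[str] = field(default_factory=list)

    def act(self, prompt: str) -> None:
        # In a real agent, this text is what the model would receive.
        self.prompts.append(prompt)

    def type_directly(self, text: str) -> None:
        # Stands in for a direct browser-automation call (such as keyboard
        # input) that bypasses the model entirely.
        self.typed.append(text)


def log_in(agent: StubAgent, username: str, password: str) -> None:
    # Each act() call is one small, checkable step, not a whole workflow.
    agent.act("open the sign-in page")
    agent.act(f"enter the username {username}")
    agent.act("click on the password field")
    # The secret goes through the direct channel, never into a prompt.
    agent.type_directly(password)
    agent.act("click the sign-in button")
    # Ordinary Python assertion interleaved with the agent steps.
    assert all(password not in p for p in agent.prompts)


agent = StubAgent()
log_in(agent, "demo-user", "s3cret-value")
print(len(agent.prompts), "prompts sent;", len(agent.typed), "value typed directly")
```

In the real SDK, the direct-typing step would presumably be a Playwright call on the underlying page, but the exact method names should be taken from Amazon’s documentation rather than from this sketch.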

Luan emphasized that Nova Act is a tool for developers rather than a general-purpose chatbot. “Nova Act is built for developers. It’s not a chatbot you talk to for fun. It’s designed to let developers start building useful products,” he said.

For example, one of the sample workflows demonstrated in Amazon’s documentation shows how Nova Act can automate apartment searches by scraping rental listings and calculating biking distance to train stations, then sorting the results in a structured table.
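
The shape of that apartment workflow can be sketched in plain Python. This is not Amazon’s sample code: the listings and biking times below are made-up data, `Listing`, `parse_listing()` and `bike_minutes()` are hypothetical names, and a standard-library dataclass with manual checks stands in for the Pydantic schema the SDK reportedly uses. It shows the three stages described: validate the extracted records, look up distances in parallel, then sort into a table.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Listing:
    address: str
    monthly_rent: int


def parse_listing(raw: dict) -> Listing:
    # Reject records that do not match the expected shape (the job a
    # schema library such as Pydantic would normally do).
    if not isinstance(raw.get("address"), str):
        raise ValueError("address must be a string")
    if not isinstance(raw.get("monthly_rent"), int):
        raise ValueError("monthly_rent must be an integer")
    return Listing(raw["address"], raw["monthly_rent"])


# Made-up biking times in minutes; a real workflow would look each one up
# in its own browser session.
FAKE_BIKE_MINUTES = {"12 Elm St": 9, "48 Oak Ave": 4, "7 Pine Rd": 15}


def bike_minutes(listing: Listing) -> tuple[Listing, int]:
    return listing, FAKE_BIKE_MINUTES[listing.address]


raw_listings = [
    {"address": "12 Elm St", "monthly_rent": 2400},
    {"address": "48 Oak Ave", "monthly_rent": 2750},
    {"address": "7 Pine Rd", "monthly_rent": 2100},
]
listings = [parse_listing(r) for r in raw_listings]

# One worker per listing, mirroring one agent instance per lookup.
with ThreadPoolExecutor(max_workers=3) as pool:
    results = list(pool.map(bike_minutes, listings))

# Shortest ride first.
ranked = sorted(results, key=lambda pair: pair[1])
for listing, minutes in ranked:
    print(f"{listing.address:<12} ${listing.monthly_rent:>5}  {minutes:>2} min")
```

Validating each record before the parallel stage means a malformed extraction fails early, in one place, instead of surfacing as a confusing error inside a worker thread.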

Another showcased example uses Nova Act to order a specific salad from Sweetgreen every Tuesday, entirely hands-free and on a schedule, illustrating how developers can automate repeatable digital tasks in a way that feels reliable and customizable.

Benchmark performance and a focus on reliability

A central message in Amazon’s announcement is that reliability, not just intelligence, is the key barrier to widespread agent adoption.

Current state-of-the-art models are actually quite brittle at powering AI agents, with agents typically achieving 30% to 60% success rates on browser-based multi-step tasks, according to Amazon.

Nova Act, however, emphasizes a building-block approach, scoring over 90% on internal evaluations of tasks that challenge other models—such as interacting with dropdowns, date pickers, or pop-ups.

Luan underscored why that reliability focus matters. “What we’ve really focused on is how do you actually make agents reliable? If you ask it to update a record in Salesforce and it deletes your database one out of ten times, you’re probably never going to use it again,” he said.
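
A rough back-of-the-envelope calculation shows why per-step reliability matters so much for multi-step workflows. The sketch below is an illustration, not Amazon’s methodology: it assumes every step succeeds independently with the same probability, which real browser tasks will not satisfy exactly, and `workflow_success()` is a hypothetical helper.

```python
def workflow_success(per_step: float, steps: int) -> float:
    """Chance that every step succeeds, assuming independent, equally reliable steps."""
    return per_step ** steps


for per_step in (0.90, 0.99):
    for steps in (5, 10, 20):
        rate = workflow_success(per_step, steps)
        print(f"per-step {per_step:.2f}, {steps:>2} steps -> {rate:.1%}")
```

Under these assumptions, a ten-step workflow built from steps that each work 90% of the time would finish cleanly only about 35% of the time, which is why small gains in building-block reliability compound into large gains for whole tasks.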

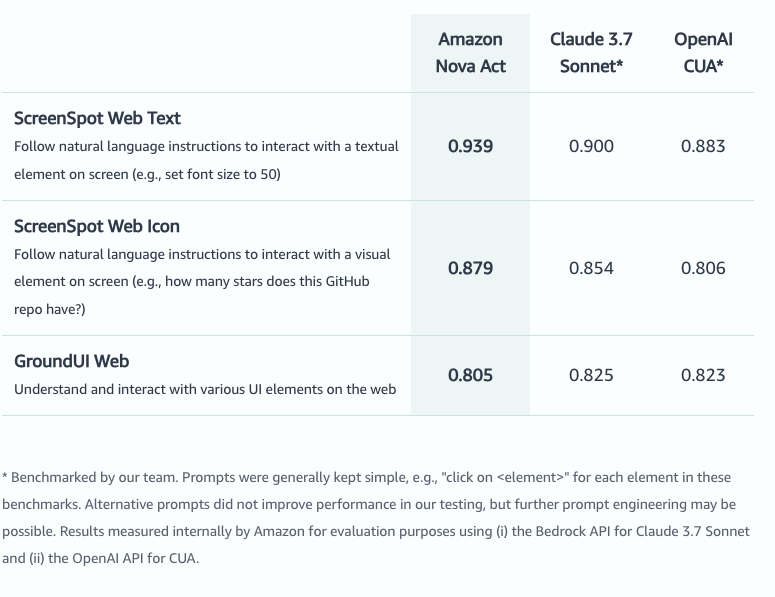

Amazon AGI benchmarked Nova Act against competing models including Anthropic’s Claude 3.7 Sonnet and OpenAI’s CUA model. On the ScreenSpot Web Text benchmark, which tests instruction-following on textual screen elements, Nova Act achieved a score of 0.939, outperforming Claude 3.7 Sonnet (0.900) and OpenAI CUA (0.883).

On the ScreenSpot Web Icon benchmark, which focuses on visual UI elements, Nova Act scored 0.879, again ahead of the other models.

However, on the GroundUI Web benchmark, which tests general UI interaction, Nova Act scored 0.805, slightly behind its competitors.

These scores were measured internally by Amazon using consistent prompts and evaluation criteria.

Amazon also highlighted early results in Nova Act’s ability to generalize beyond standard environments.

For instance, team member Rick Liu demonstrated how the agent, without explicit training, successfully interacted with a pigeon-themed web game—assigning stats, battling opponents, and progressing in the game.

According to Luan, that ability to generalize is central to the long-term vision. “Our goal with Nova Act is to be a universal browser-use solution. We want an agent that can do anything you want to do on a computer for you,” he said.

Flexible for use in different clouds, but locked to Amazon’s Nova model

While Nova Act is accessible to developers globally through nova.amazon.com, Luan clarified that the system is tightly coupled to Amazon’s in-house Nova foundation models.

Developers cannot plug in external LLMs such as OpenAI’s GPT-4o or Anthropic’s Claude 3.7 Sonnet, unlike with OpenAI’s Agents SDK, and to a lesser extent, Microsoft’s AutoGen and Salesforce’s Agentforce platforms (which allow switching to a few different provider companies and model families).

“Nova Act is a custom trained version of the Nova model,” he said. “It’s not just a scaffolding over a generic LLM. It’s natively trained to act on the internet on your behalf.”

However, Nova Act is not restricted to AWS environments. Developers can download the SDK and run it locally, in the cloud, or wherever they choose. “You don’t need to be on AWS to use it,” Luan stated.

Thus, for businesses looking for maximum underlying model flexibility for their agents, Nova Act is probably not the best choice. However, for those looking for a purpose-built model specifically designed to navigate the web and perform actions across a wide variety of websites with very different user interfaces (UIs), it’s probably worth a look — especially if you’re already in the Amazon or AWS developer ecosystem.

Security, licensing and pricing

The Nova Act SDK is released under the Apache License, Version 2.0 (January 2004), an open source license. However, this applies only to the SDK software.

The Nova Act model itself, along with its weights and training data, is proprietary and remains closed-source. The approach is intentional, according to Luan, who explained that the model is tightly integrated and co-trained with the SDK to achieve reliability.

At launch, Nova Act is offered as a free research preview. There is no announced pricing for production use yet.

Luan described this phase as an opportunity for developers to experiment and build with the technology. “Our belief is that the majority of the most useful agent products have not yet been built. We want to enable anybody to build a really useful agent, whether for themselves or as a product,” he said.

Longer term, Amazon plans to introduce production-grade terms, including usage-based billing and scaling guarantees, but those are not yet available.

What’s next for Nova Act?

The release of Nova Act reflects Amazon’s broader ambition to make action-oriented AI agents a foundational component of computing.

Luan summed up the opportunity ahead: “My personal dream is that agents become the building block of computing, and the coolest new startups and products get built on top of what our team is developing.”

The Nova Act SDK is available now for experimentation and prototyping on Amazon’s website and on GitHub.
