Since the publication of the UK government’s Clean Power 2030 Action Plan late last year, we’ve seen a renewed focus on the country’s energy transition.

The government’s ambition to streamline the grid connection process is commendable and much needed, but it’s becoming increasingly clear that 2025 must be the year this is paired with a broader strategy to overcome grid capacity challenges and keep the UK’s energy transition on track.

While large-scale renewable projects and grid connections are essential, the growing pressure on the grid means we must also devote resources to reducing the strain on grid infrastructure and accelerating the adoption of behind-the-meter generation.

This streamlined, decentralised approach offers significant advantages for businesses, the grid, and the UK’s overall decarbonisation goals, and should be a key focus for 2025.

The grid bottleneck: slower progress, higher costs

The UK’s renewable energy progress to date has been largely driven by grid-connected projects and a significant decrease in renewable energy costs, particularly in solar and wind.

However, as we move forward in 2025, the situation becomes more complex.

The Climate Change Committee’s 2024 Progress Report highlighted a key concern: new grid capacity is being filled up at a rapid pace, and the cost of grid connections is increasing as available capacity dwindles.

For new projects, the grid connection process is slow, costly, and complex, often making up a large portion of the levelised cost of energy (LCOE).

© Supplied by Balance Power

The lengthy wait times for grid connections are already hindering project development, with some initiatives taking as long as 10 years to come online, particularly offshore and large-scale projects.

Part of this issue stems from a distinct lack of cohesion between the various government and industry bodies involved.

The National Energy System Operator (NESO), the Department for Energy Security and Net Zero (DESNZ) and Distribution Network Operators (DNOs) together determine the types of renewable energy technologies needed in different regions, shaping how developers gain access to the grid and deciding which types of projects receive government support.

However, these bodies are often not aligned with the local planning authorities (LPAs) that grant planning permission for these projects, which can lead LPAs to reject proposals that support national grid objectives but conflict with local planning priorities.

These disconnects slow grid connections even further and worsen the bottleneck.

Whilst we welcome the government’s recent measures to address the grid connectivity issue, as outlined in the Clean Power 2030 Action Plan, the reality is that the pace of grid expansion cannot keep up with the demand for new clean energy sources.

This is a serious bottleneck for the UK’s energy transition, and unless we explore alternatives, our decarbonisation ambitions may be delayed.

Behind-the-meter generation: Lightening the grid load and fuelling the future

Behind-the-meter generation involves generating renewable energy directly at or near the point of consumption, bypassing the need for complex grid connections.

For businesses, this typically means setting up on-site renewable projects, such as rooftop solar panels, on-site solar farms, or wind turbines, that feed power directly to the business via private wires.

This model ensures that the energy supplied by these assets is 100% renewable and offers a clear, measurable route to decarbonisation.

One of the key advantages of behind-the-meter generation is that it can significantly reduce strain on the national grid.

The UK’s energy networks are increasingly under pressure, and the cost of upgrading grid infrastructure is rising sharply.

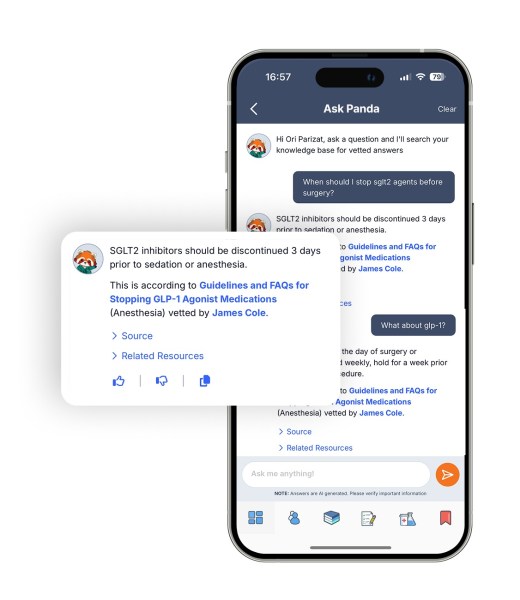

© Supplied by SSEN Transmission

Expanding grid capacity, particularly in areas already experiencing congestion, is a slow, expensive, and politically contentious process.

Behind-the-meter generation helps mitigate these issues by enabling businesses to meet a portion of their energy needs without relying on the grid at all.

This is critical for the UK to maintain its renewable energy ambitions.

As grid connections become scarcer and more expensive, decentralised energy must be part of the solution.

By enabling businesses to self-generate, we reduce the need for costly and time-consuming grid reinforcements and avoid inefficient curtailment of renewable energy projects.

The cost of maintaining grid stability with intermittent renewable sources such as solar and wind is rising. Grid-scale storage is an important part of the solution, but behind-the-meter generation can reduce the need for additional storage and grid capacity by serving the energy needs of large consumers directly.

Moreover, behind-the-meter generation directly contributes to decarbonising the consumer’s energy profile, rather than simply purchasing renewable energy through a Power Purchase Agreement (PPA) that may not always meet the same standards.

Many PPAs allow businesses to buy renewable energy from grid-connected sources that are often distant from the point of consumption, which doesn’t guarantee that the power used by the business is fully decarbonised.

In contrast, behind-the-meter solutions offer a genuine reduction in emissions.

Broader benefits for industry and economy

In addition to helping decarbonise energy use and reduce grid dependence, behind-the-meter generation has significant economic advantages.

For large industrial consumers, it lowers energy costs and increases energy security, making businesses more competitive.

Moreover, by investing in on-site or near-site renewable energy, businesses can strengthen their resilience to the changing energy landscape, something that will become increasingly important as the UK moves towards a more varied and decarbonised grid and as the global energy landscape grows more precarious.

The positive effects of behind-the-meter generation go beyond individual businesses.

For local communities, behind-the-meter projects contribute directly to job creation and economic growth.

The businesses hosting these projects are often significant local employers, and by lowering their energy costs, they can remain competitive and continue to expand, benefiting the local economy.

Furthermore, these projects can help reduce local pollution by displacing fossil fuel-intensive energy consumption, offering tangible environmental benefits.

On a macro level, behind-the-meter generation can stimulate investment in clean energy technologies and support the government’s broader industrial strategy.

By decentralising energy production, we can build a more resilient, sustainable industrial base, helping to attract foreign investment and improve the UK’s global competitiveness in the clean energy sector.

Levelling barriers to adoption

Despite its potential, the uptake of behind-the-meter generation in the UK has been slow.

One of the main reasons for this is the current regulatory framework, which still favours corporate PPAs and green tariffs over self-generation.

Increasing uptake will require more supportive government policy that encourages businesses to make the bold and vital choice to generate their own power.

Uptake has also been held back by slow-changing attitudes among business leaders themselves.

Many are unaware of the benefits of behind-the-meter generation, and some may be daunted by the prospect of an on-site renewable development.

But if behind-the-meter developments are to achieve the uptake we need, doubts must be addressed, priorities clarified, and misconceptions challenged.

The benefits of cheaper, more reliable, 100% renewable energy to businesses and industry need to be effectively advertised, alongside demonstrations of viable and accessible routes for on-site development.

There are plenty of examples of successful behind-the-meter (BTM) development globally. California, Australia, and Germany have all seen relatively rapid uptake of industrial self-generation over the last 10-15 years; these and other examples should be used to demonstrate the model’s viability.

Whilst uptake in the UK has been slower than necessary, the several successful case studies that do exist also need to be championed, demonstrating how the model applies to our unique energy, land development, and political environment.

2025 and beyond – the path to net zero

The UK’s net zero ambitions are in reach, but the path to 2030 is fraught with challenges.

The government’s emphasis on decarbonising energy supply through large-scale grid-connected projects is important, but it is not sufficient to meet the demand for clean energy.

The reality is that the grid is already at capacity in many areas, and the process of expanding it is slow, costly, and politically challenging.

Behind-the-meter generation offers a fast, cost-effective alternative that not only reduces reliance on the grid but also enables businesses to decarbonise quickly and affordably.

We must not wait for the grid to catch up with our renewable energy ambitions.

Instead, we must encourage businesses to take control of their energy supply through decentralised generation, which will reduce grid pressure, lower costs, and help meet the UK’s 2030 emissions reductions target.

The time to act is now: policy must adapt to these new realities, and the UK’s industrial sector must rise to the challenge.

Phil Thompson is the chief executive officer of UK renewable and flexible energy developer Balance Power.
