Woodside Energy Group Ltd. has reported 3.09 billion barrels of oil equivalent (boe) proven and probable (2P) reserves as of the end of 2024, up 46.2 million boe (MMboe) when excluding divestments and production.

The Australian company had 2P reserves of 3.76 billion boe at the end of 2023. “Excluding the impact of divestments and production, reserves additions [in 2024] were driven by strong performance at Sangomar, successful FIDs [final investment decisions] of projects in Australia and the US, and performance-based revisions across the portfolio, notably North West Shelf and Bass Strait”, Woodside said in an online statement.

Woodside started production at the Sangomar field offshore Senegal in the second quarter of 2024, delivering the West African country’s first offshore oil development.

“Early performance from the S500 reservoirs has demonstrated excellent productivity”, Woodside said of Sangomar. “This has resulted in proved and proved plus probable reserves additions of 16.2 MMboe and 15.4 MMboe respectively”.

Woodside’s proven reserves stood at 1.98 billion boe at year-end 2024, compared to 2.45 billion boe at year-end 2023.

The sale of a 25.1 percent stake in the Scarborough field offshore Western Australia to Japanese companies reduced proven reserves by 323 MMboe and 2P reserves by 504.7 MMboe, Woodside said.
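The 2P figures quoted above can be roughly reconciled. The sketch below is illustrative only: it uses the rounded totals from this article, and the variable and function names are assumptions made for the example, not Woodside's own reporting categories. The residual it computes covers production plus any other reductions not itemised here, and works out to somewhere in the region of 210 MMboe.

```python
# Figures quoted in the article, in MMboe. The billion-boe totals are rounded,
# so the result is only approximate.
reserves_2p_2023 = 3760.0   # 3.76 billion boe at year-end 2023
reserves_2p_2024 = 3090.0   # 3.09 billion boe at year-end 2024
additions = 46.2            # increase excluding divestments and production
scarborough_sale = 504.7    # 2P reduction from the 25.1 percent Scarborough sale


def implied_residual(opening, closing, added, divested):
    """Return the reduction not explained by the itemised figures (hypothetical helper)."""
    return opening + added - divested - closing


residual = implied_residual(
    reserves_2p_2023, reserves_2p_2024, additions, scarborough_sale
)
print(f"Implied production and other reductions: about {residual:.1f} MMboe")
```

The same check cannot be run on proven reserves from this article alone, because the total proven additions for the year are not stated.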

Best-estimate contingent resources remaining were 5.87 billion boe at year-end 2024, compared to 5.9 billion boe at year-end 2023.

“The reserves update underscores Woodside’s high-quality assets and disciplined execution”, chief executive Meg O’Neill commented. “The outstanding early performance at Sangomar again demonstrates Woodside’s proven record of delivering large-scale projects that provide sustainable returns over the long term.

“Sangomar is forecast to continue producing on plateau into the second quarter of 2025 and with continued strong asset performance across the portfolio we are well positioned for another year of delivering value for shareholders”.

Sangomar, which Woodside operates with an 82 percent stake, produced 13.3 MMboe of crude last year.

“The project ramped up in less than nine weeks, and achieved over 94 percent production reliability in Q4 2024”, Woodside said. “Both water and gas injection systems have been fully commissioned”.

“Future development decisions will be informed by 12-24 months of production data”, it said.

O’Neill added, “As Woodside embarks on the next phase of growth, continuing to execute Scarborough and Trion and preparing for a final investment decision on Louisiana LNG, we will maintain our disciplined approach and commitment to safety, reliability and performance”.

Trion is an under-construction field on the Mexican side of the Gulf of Mexico. Woodside plans start-up in the second half of 2025. Trion will have a floating production unit with an output capacity of 100,000 barrels a day, to be connected to a floating storage and offloading vessel with a capacity of 950,000 barrels.

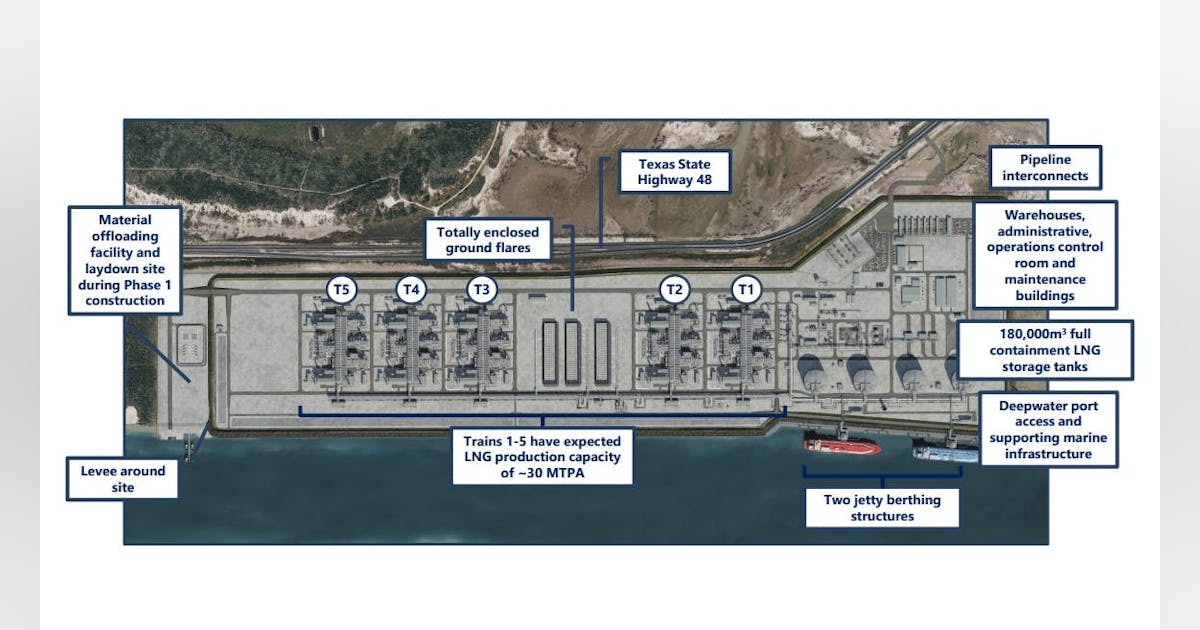

On Louisiana LNG, formerly Driftwood LNG, Woodside expects to make a final investment decision this quarter. Woodside took over Driftwood LNG, located near Lake Charles, Louisiana, when it acquired Tellurian Inc. for about $1.2 billion.

The under-construction project is planned to have a capacity of 27.6 million metric tons a year of LNG.
